What You Can Learn by Staring at a Blank Wall

Authors

Prafull Sharma, Miika Aittala, Yoav Y. Schechner, Antonio Torralba, Gregory W. Wornell, William T. Freeman, Fredo Durand

Abstract

We present a passive non-line-of-sight method that infers the number of people or activity of a person from the observation of a blank wall in an unknown room. Our technique analyzes complex imperceptible changes in indirect illumination in a video of the wall to reveal a signal that is correlated with motion in the hidden part of a scene. We use this signal to classify between zero, one, or two moving people, or the activity of a person in the hidden scene. We train two convolutional neural networks using data collected from 20 different scenes, and achieve an accuracy of ≈94% for both tasks in unseen test environments and real-time online settings. Unlike other passive non-line-of-sight methods, the technique does not rely on known occluders or controllable light sources, and generalizes to unknown rooms with no re-calibration. We analyze the generalization and robustness of our method with both real and synthetic data, and study the effect of the scene parameters on the signal quality.

Concepts

The Big Picture

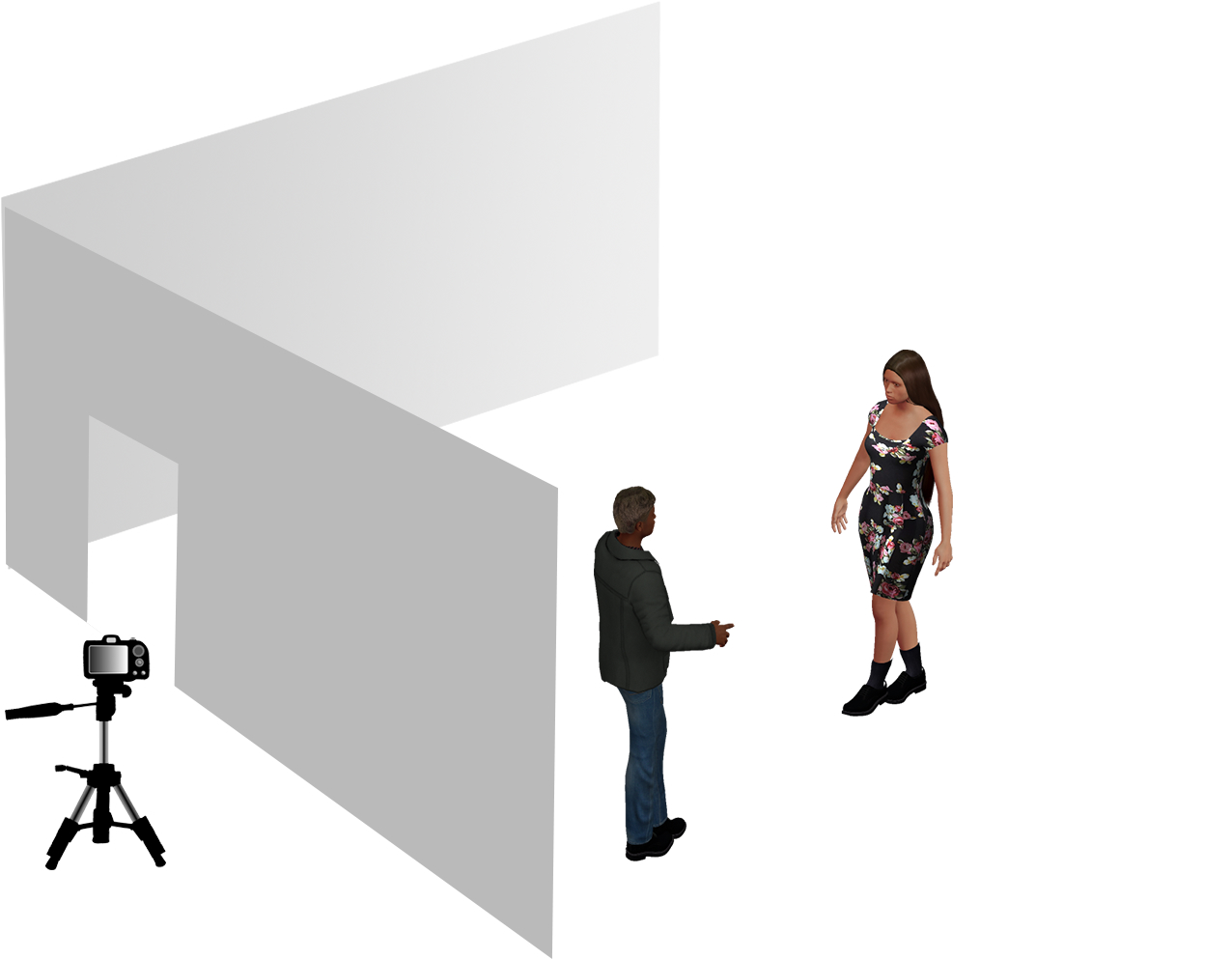

Imagine standing outside a room, looking in through an open doorway. You can't see anyone inside, can't hear anything, and you're not emitting any signal. All you have is a view of the blank wall opposite the doorway. Can you tell if someone is moving in that room? To the naked eye, absolutely not. But a camera and a neural network can, with roughly 94% accuracy.

It sounds like it belongs in a spy thriller, but the physics is straightforward. Every time a person moves in a room, they subtly alter how light bounces around that space: blocking some light paths, opening up others, and creating new reflections.

These changes touch every surface, including walls that appear to be doing nothing. The signal is buried under noise, imperceptible to any human observer. But it’s there.

The team at MIT built a system that extracts these ghost signals from ordinary video and uses them to classify hidden human activity and count hidden people, all without placing a sensor in the room or knowing anything about its geometry in advance.

Key Insight: Motion in a hidden scene leaves a detectable fingerprint on the indirect illumination of a blank wall, and a convolutional neural network can read that fingerprint with ~94% accuracy, even in rooms it has never seen before.

How It Works

The physics starts with indirect illumination: light that reaches a wall not directly from a lamp, but after bouncing off one or more surfaces in the room. When a person moves in a part of the room the camera cannot see, they change which light paths are open and which are blocked. This reshuffles the patchwork of faint reflected light landing on the visible wall.

The effect is extraordinarily subtle. The researchers measured the signal at 20 to 35 dB below the background imaging noise. In power terms, that is roughly 100 to 3,000 times weaker than the camera's own noise floor. No single pixel in a single frame reveals it, but the signal is present in the data and can be recovered by combining many pixels and frames.
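The decibel arithmetic behind those numbers is short enough to check directly: a level of L dB corresponds to a power ratio of 10^(L/10). The sketch also adds one idealized step, which is our assumption and not the paper's analysis. If the hidden signal were identical in N averaged samples and the camera noise were zero-mean and independent between them, averaging would leave the signal power unchanged and divide the noise power by N.

```python
import math

def db_to_power_ratio(db: float) -> float:
    """Convert a level in decibels to a power ratio: 10^(dB/10)."""
    return 10.0 ** (db / 10.0)

def samples_to_reach_snr(snr_db: float, target_db: float = 0.0) -> int:
    """Idealized number of independent samples to average so that the SNR
    rises from snr_db to target_db.

    Assumption (not from the paper): the signal is identical in every sample
    and the noise is zero-mean and independent. Averaging N samples then keeps
    the signal power fixed and divides the noise power by N, so the SNR gain is N.
    """
    gain_needed = db_to_power_ratio(target_db - snr_db)
    return math.ceil(gain_needed)

for level_db in (-20.0, -35.0):
    weaker_by = 1.0 / db_to_power_ratio(level_db)
    n = samples_to_reach_snr(level_db)
    print(f"{level_db:+.0f} dB -> signal power {weaker_by:,.0f}x below noise; "
          f"~{n:,} averaged samples to reach 0 dB")
```

Under these idealized assumptions, even the −35 dB case needs only a few thousand samples, and a single 640×480 frame already holds 307,200 pixels. Real wall signals vary across the image and over time, so practical averaging has to be more careful than this. The arithmetic still shows why a signal that is imperceptible to the eye can be recoverable.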

To extract this signal, the team built a multi-stage pipeline:

- Video capture: A regular camera records the blank wall. No lasers, no projectors, no specialized hardware.

- Signal extraction: The video is projected into a compact 2D representation that summarizes how the wall’s illumination changes over time and across space.

- Classification: Two separate convolutional neural networks (CNNs) take this representation as input. One classifies the number of people present (zero, one, or two); the other classifies the type of activity.
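The signal-extraction step above is described only at a high level, so what follows is a minimal sketch of one plausible way to build a 2D space-time representation from a wall video. It assumes a grayscale video stored as a NumPy array of shape (frames, height, width). The function name `space_time_image` and its two steps are our illustrative assumptions, not the authors' exact preprocessing: first subtract each pixel's temporal mean, then average over the vertical axis. The synthetic demo embeds a faint drifting bump in unit-variance noise and checks whether the reduced map recovers it.

```python
import numpy as np

def space_time_image(video: np.ndarray) -> np.ndarray:
    """Hypothetical reduction of a (T, H, W) wall video to a (T, W) map.

    1. Subtract each pixel's temporal mean, removing the static wall
       appearance so only changes in illumination remain.
    2. Average over the vertical axis (H), which suppresses independent
       per-pixel noise while keeping horizontal position and time.
    """
    video = video.astype(np.float64)
    residual = video - video.mean(axis=0, keepdims=True)  # (T, H, W)
    return residual.mean(axis=1)                          # (T, W)

def corr(a: np.ndarray, b: np.ndarray) -> float:
    """Pearson correlation between two arrays of the same shape."""
    return float(np.corrcoef(a.ravel(), b.ravel())[0, 1])

# Synthetic demo: a faint bump drifting horizontally across a noisy wall.
rng = np.random.default_rng(0)
T, H, W = 200, 120, 64
x = np.arange(W)
centers = np.linspace(10, 54, T)  # bump position in each frame
signal = 0.2 * np.exp(-((x[None, :] - centers[:, None]) ** 2) / (2 * 4.0**2))  # (T, W)
noise = rng.normal(0.0, 1.0, size=(T, H, W))  # per-pixel sensor noise, std 1
video = 100.0 + signal[:, None, :] + noise    # same bump on every row

st = space_time_image(video)
target = signal - signal.mean(axis=0, keepdims=True)  # the change we hope to recover
one_row = video[:, 0, :] - video[:, 0, :].mean(axis=0, keepdims=True)
print(st.shape)  # (200, 64)
print(f"single row: {corr(one_row, target):.2f}, row-averaged: {corr(st, target):.2f}")
```

With these made-up numbers, the row-averaged map correlates much more strongly with the hidden drift than any single row does. That gain is the reason for collapsing a spatial axis before handing the data to a classifier.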

Training required data from 20 different scenes with varying geometries, furniture arrangements, lighting setups, and wall materials. The key test: could the networks generalize to rooms they’d never seen, with no re-calibration?

They could. On held-out test scenes, the system hit approximately 94% accuracy on both people-counting and activity recognition, running in real time.
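Testing on rooms the networks have never seen means the train/test split must happen at the level of whole scenes, not individual clips. Otherwise, near-duplicate clips from the same room can land on both sides and inflate the measured accuracy. The sketch below shows such a grouped split in plain Python. The clip format, scene names, clip counts and the 16/4 split are all hypothetical; the article says only that data came from 20 scenes and that the test scenes were unseen.

```python
import random
from collections import defaultdict

def split_by_scene(clips, n_test_scenes, seed=0):
    """Hold out entire scenes so no room contributes to both train and test.

    clips: list of (scene_id, clip_id, label) tuples (hypothetical format).
    Returns (train, test) lists of clips.
    """
    by_scene = defaultdict(list)
    for clip in clips:
        by_scene[clip[0]].append(clip)
    scenes = sorted(by_scene)              # deterministic order before shuffling
    random.Random(seed).shuffle(scenes)
    test_scenes = set(scenes[:n_test_scenes])
    train = [c for s in scenes if s not in test_scenes for c in by_scene[s]]
    test = [c for s in scenes if s in test_scenes for c in by_scene[s]]
    return train, test

# Hypothetical dataset: 20 scenes x 30 clips, label = number of people (0, 1, 2).
clips = [(f"scene_{s:02d}", f"clip_{c:03d}", c % 3) for s in range(20) for c in range(30)]
train, test = split_by_scene(clips, n_test_scenes=4)

train_scenes = {c[0] for c in train}
test_scenes = {c[0] for c in test}
print(len(train), len(test), train_scenes.isdisjoint(test_scenes))  # 480 120 True
```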

Most passive non-line-of-sight (NLOS) techniques depend on known occluders: a visible corner, a table edge, or a post that acts as an accidental lens imposing structure on the incoming light. Remove those occluders and existing methods collapse. This system works on a featureless wall with no known geometry and no cooperation from the environment. That’s the real advance.

The team also built a synthetic simulation to figure out which scene parameters most affect signal quality. Distance between people and the wall, number and placement of light sources, and surface reflectance all shape how much signal bleeds through the noise floor. The simulation helped pin down both the limits and the surprising robustness of the approach.
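To build intuition for why the person's distance from the wall matters, here is a deliberately crude one-bounce model. It is not the paper's simulator. Treat the person as a small diffuse (Lambertian) patch of area A and reflectance ρ, lit by irradiance E and facing a wall point at distance r. Under the standard small-patch approximation, the irradiance the patch adds at that wall point is ρ E A cosθ_p cosθ_w / (π r²). The model ignores shadowing and all higher-order bounces, and every number below is made up for illustration.

```python
import math

def patch_irradiance(rho, e_in, area, r, cos_patch=1.0, cos_wall=1.0):
    """Irradiance (W/m^2) that a small Lambertian patch adds at a wall point.

    rho:       patch reflectance (albedo), 0..1
    e_in:      irradiance falling on the patch (W/m^2)
    area:      patch area (m^2), assumed small compared with r^2
    r:         patch-to-wall distance (m)
    cos_patch, cos_wall: cosines between the connecting line and each surface normal
    The patch radiance is rho * e_in / pi; the geometric term converts it
    to irradiance at the wall.
    """
    radiance = rho * e_in / math.pi
    return radiance * area * cos_patch * cos_wall / r**2

# Hypothetical numbers: a 0.1 m^2 patch, albedo 0.3, 200 W/m^2 incident light.
for r in (2.0, 4.0, 8.0):
    print(f"r = {r} m -> {patch_irradiance(0.3, 200.0, 0.1, r):.3f} W/m^2")
```

In this toy, doubling the distance quarters the contribution, while reflectance and incident light enter linearly. This matches, qualitatively, the article's point that distance, lighting and surface reflectance all control how much signal reaches the wall.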

Why It Matters

Search and rescue teams locating survivors in collapsed buildings. Autonomous vehicles detecting pedestrians around corners. Elderly care systems monitoring falls without putting cameras in private spaces. Because the system is entirely passive (no emitter, no active probing), it works where emitting signals would be impractical or impossible.

But set the applications aside for a moment. The deeper result is about what a blank wall actually contains. We’ve long assumed a featureless wall carries no useful information about what’s happening on the other side. That assumption turns out to be wrong.

Light transport is not a one-way street. It’s a tangled web of multi-bounce dependencies, and perturbing any part of it leaves traces everywhere else. The right signal representation, paired with a CNN trained across enough environments, can pull those traces out of the noise.
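The "tangled web" can be made concrete with the classical radiosity equation B = E + diag(ρ) F B, solved here for a four-patch toy room. In this invented geometry, the visible wall has no direct line of sight to the person patch (its form factor to the person is zero). Changing the person's reflectance still changes the wall's radiosity, because the influence travels through a shared ceiling patch. The patches, form factors and reflectances are made up for illustration and are not taken from the paper.

```python
import numpy as np

def solve_radiosity(emission, reflectance, form_factors):
    """Solve B = E + diag(rho) F B for the patch radiosities B."""
    n = len(emission)
    system = np.eye(n) - np.diag(reflectance) @ form_factors
    return np.linalg.solve(system, emission)

# Patches: 0 = lamp, 1 = person, 2 = ceiling, 3 = visible wall (toy geometry).
# Equal patch areas, so F is symmetric; F[3, 1] = 0 means the wall cannot see the person.
F = np.array([
    [0.0, 0.2, 0.3, 0.2],
    [0.2, 0.0, 0.3, 0.0],
    [0.3, 0.3, 0.0, 0.3],
    [0.2, 0.0, 0.3, 0.0],
])
E = np.array([10.0, 0.0, 0.0, 0.0])  # only the lamp emits

def wall_radiosity(person_rho):
    rho = np.array([0.0, person_rho, 0.7, 0.7])  # lamp treated as a pure emitter
    return solve_radiosity(E, rho, F)[3]

dark, bright = wall_radiosity(0.2), wall_radiosity(0.6)
print(f"wall radiosity: {dark:.4f} -> {bright:.4f} "
      f"(relative change {100 * (bright - dark) / dark:.2f}%)")
```

In this toy, tripling the person's reflectance moves the wall's radiosity by only a few percent, because the effect arrives only after two bounces. Real scenes have far more patches and weaker couplings, which fits with the real signal sitting well below the noise floor.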

The current system classifies coarse categories: it can tell you someone is walking, but not who they are or exactly where. Finer-grained localization, trajectory tracking, and more complex multi-person scenarios will need richer representations and more data. And if someone knows this technique exists, can they defeat it? How the signal holds up against deliberate countermeasures is an open question worth watching.

Bottom Line: A blank wall is not a blank canvas. It’s a noisy, low-fidelity record of everything happening in the room it faces. MIT researchers have shown that convolutional neural networks can decode that record well enough to classify human activity and count people with ~94% accuracy, in real time, in rooms they’ve never visited.

IAIFI Research Highlights

This work combines computational physics and machine learning, using light transport theory to design a signal representation that neural networks can exploit. Neither field alone would have gotten there.

The CNNs generalize across highly variable physical environments when trained on well-structured signal representations, achieving ~94% accuracy on unseen rooms without fine-tuning or re-calibration.

By modeling how motion perturbs indirect illumination through multi-bounce light transport, the work clarifies what information is actually encoded in scattered light and where passive sensing hits its physical limits.

Future work will push toward finer-grained localization and tracking in more complex scenes. The full paper is available at [arXiv:2108.13027](https://arxiv.org/abs/2108.13027).

Original Paper Details

arXiv: 2108.13027
