Variance reduction in lattice QCD observables via normalizing flows

Authors

Ryan Abbott, Denis Boyda, Yang Fu, Daniel C. Hackett, Gurtej Kanwar, Fernando Romero-López, Phiala E. Shanahan, Julian M. Urban

Abstract

Normalizing flows can be used to construct unbiased, reduced-variance estimators for lattice field theory observables that are defined by a derivative with respect to action parameters. This work implements the approach for observables involving gluonic operator insertions in the SU(3) Yang-Mills theory and two-flavor Quantum Chromodynamics (QCD) in four space-time dimensions. Variance reduction by factors of $10$-$60$ is achieved in glueball correlation functions and in gluonic matrix elements related to hadron structure, with demonstrated computational advantages. The observed variance reduction is found to be approximately independent of the lattice volume, so that volume transfer can be utilized to minimize training costs.

Concepts

The Big Picture

Imagine trying to measure the weight of a single grain of sand on a noisy beach. The signal you want is buried in overwhelming random fluctuation. That’s roughly the challenge physicists face when computing certain properties of protons and neutrons from first principles using lattice QCD, the computational framework for the theory of the strong nuclear force. Some of the most physically interesting quantities, like how gluons contribute to the internal structure of protons and neutrons, are extraordinarily difficult to extract. Statistical noise swamps the signal, demanding enormous computational resources just to see the effect at all.

Quantum Chromodynamics (QCD) governs quarks and gluons, the fundamental building blocks of atomic nuclei. Lattice QCD encodes this theory on a discrete spacetime grid, then uses Monte Carlo sampling (generating a large ensemble of random field configurations and averaging over them) to build up a statistical picture of measurable quantities.

For quantities involving gluonic operator insertions, which probe how gluons behave inside particles like protons, the signal drowns in statistical noise. The community has long needed a smarter statistical approach.

A team from MIT, Fermilab, Columbia, Edinburgh, and Bern has now shown that machine-learned normalizing flows, AI models that learn to transform one set of random field configurations into another, can slash that noise by factors of 10 to 60. These gains hold up in full QCD with dynamical fermions, a realistic simulation where quarks actively shape the gluon field.

By using normalizing flows to construct improved estimators for derivative observables, the researchers reduce statistical variance by 10–60× in physically important lattice QCD calculations, without introducing any bias. The improvement holds up even on large lattice volumes.

How It Works

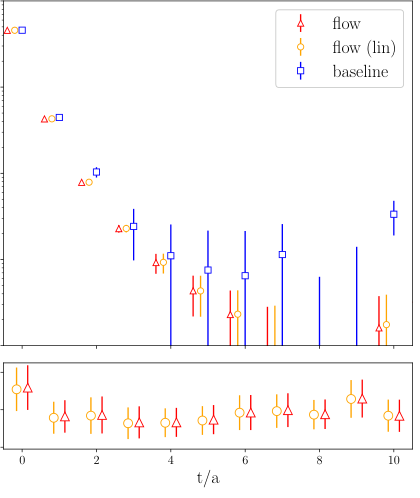

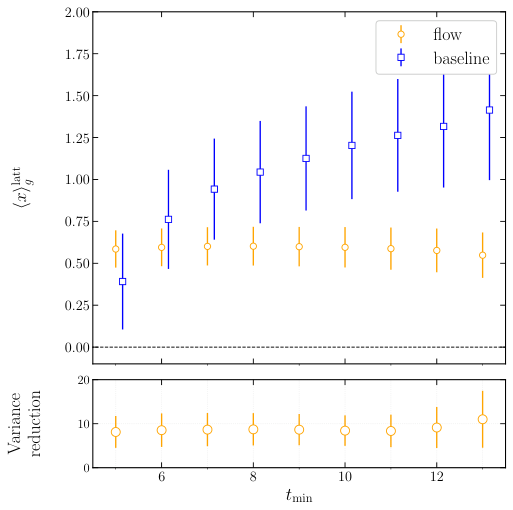

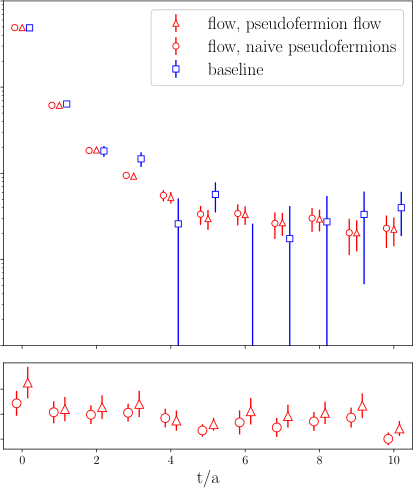

Many important QCD observables can be written as derivative observables: quantities computed by measuring how the simulation’s output changes when you nudge one of its underlying parameters. Two canonical examples are vacuum-subtracted correlation functions (via the “derivative trick”) and hadron matrix elements computed through the Feynman-Hellmann theorem. That theorem says you can extract the matrix element of an operator inside a hadron by measuring how the hadron’s energy shifts when that operator is added to the action with a tiny coefficient.
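For concreteness, the derivative trick rests on a standard identity. The version below is a sketch that assumes configurations are sampled with weight $e^{-S_\lambda}$ and that the observable $O$ does not itself depend on the parameter $\lambda$. The symbols $S_\lambda$, $\lambda$, and $O$ are notation introduced here for illustration, not taken from the paper:

$$
\frac{d}{d\lambda}\langle O\rangle_\lambda
= -\Big(\langle O\,\partial_\lambda S_\lambda\rangle_\lambda
- \langle O\rangle_\lambda\,\langle \partial_\lambda S_\lambda\rangle_\lambda\Big)
$$

The right-hand side is a vacuum-subtracted (connected) correlator between $O$ and the operator $\partial_\lambda S_\lambda$, which is why such correlators can be recast as derivatives with respect to an action parameter.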

The standard approach estimates these derivatives as finite differences. Compute the same observable at two slightly different action parameters, divide by the step size. Both measurements carry enormous fluctuations, their difference is tiny, and the noise-to-signal ratio explodes.
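A one-variable toy makes the problem visible. The sketch below is not the paper's setup: it assumes a hypothetical action $S_\lambda(x) = (1+\lambda)x^2/2$, for which $\langle x^2\rangle_\lambda = 1/(1+\lambda)$ and the exact derivative at $\lambda = 0$ is $-1$. The function names and the toy action are illustrative assumptions.

```python
import numpy as np

def finite_difference_independent(eps, n_samples, rng):
    """Estimate d<x^2>/d(lambda) at lambda = 0 from two independent ensembles.

    Toy assumption: S_lambda(x) = (1 + lambda) * x^2 / 2, so that
    x ~ Normal(0, 1/sqrt(1 + lambda)) and the exact derivative is -1.
    """
    x0 = rng.normal(0.0, 1.0, n_samples)                       # ensemble at lambda = 0
    x1 = rng.normal(0.0, 1.0 / np.sqrt(1.0 + eps), n_samples)  # ensemble at lambda = eps
    return (np.mean(x1**2) - np.mean(x0**2)) / eps

def spread(eps, n_samples=1000, n_repeats=200, seed=0):
    """Standard deviation of the estimate over repeated independent runs."""
    rng = np.random.default_rng(seed)
    runs = [finite_difference_independent(eps, n_samples, rng) for _ in range(n_repeats)]
    return np.std(runs)

for eps in (0.1, 0.01):
    print(f"eps={eps}: std of estimate = {spread(eps):.2f}")
```

Shrinking the step size by a factor of ten inflates the scatter of the estimate by roughly the same factor: the two ensemble averages fluctuate independently, and dividing their difference by a small `eps` amplifies those fluctuations.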

Normalizing flows offer a way out. The core idea works like this:

- Train a normalizing flow to map gauge-field configurations sampled under one action parameter to those under a nearby, slightly shifted action, learning the transformation between nearby probability distributions.

- Use the flow as a control variate, a supplementary measurement correlated with your target quantity whose expected value is already known. Subtracting it from the naive estimator cancels much of the statistical noise.

- Alternatively, linearize the flow to extract an exact instantaneous flow field, yielding an unbiased estimator for the derivative with no finite-difference approximation needed. The paper proves this is equivalent to the control variate approach at leading order.
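The benefit of transporting samples rather than drawing fresh ones can be sketched in a one-variable toy. This is not the paper's estimator. It assumes a hypothetical action $S_\lambda(x) = (1+\lambda)x^2/2$, for which the exact map between nearby distributions is known in closed form and stands in for a trained flow. All function names are illustrative.

```python
import numpy as np

def observable(x):
    return x**2

def exact_flow(x, eps):
    """Map samples of S_0 onto samples of S_eps for the toy action (1 + lambda) x^2 / 2."""
    return x / np.sqrt(1.0 + eps)

def independent_difference(eps, n, rng):
    """Per-sample finite differences built from two unrelated ensembles."""
    x0 = rng.normal(0.0, 1.0, n)
    x1 = rng.normal(0.0, 1.0 / np.sqrt(1.0 + eps), n)
    return (observable(x1) - observable(x0)) / eps

def flowed_difference(eps, n, rng):
    """Per-sample finite differences where the shifted sample is the flowed original."""
    x0 = rng.normal(0.0, 1.0, n)
    return (observable(exact_flow(x0, eps)) - observable(x0)) / eps

def linearized_estimator(n, rng):
    """Derivative from the instantaneous flow field: O'(x) * d(flow)/d(eps) at eps = 0."""
    x0 = rng.normal(0.0, 1.0, n)
    velocity = -0.5 * x0           # derivative of x / sqrt(1 + eps) at eps = 0
    return 2.0 * x0 * velocity     # chain rule with O(x) = x^2

rng = np.random.default_rng(0)
eps, n = 0.01, 100_000
for name, values in [
    ("independent", independent_difference(eps, n, rng)),
    ("flowed", flowed_difference(eps, n, rng)),
    ("linearized", linearized_estimator(n, rng)),
]:
    print(f"{name:12s} mean={values.mean():+.3f}  per-sample variance={values.var():.3g}")
```

Because the flowed sample is almost perfectly correlated with the original, the large fluctuations cancel in the difference. The linearized version removes the step size altogether. A trained flow is only approximately exact, and the corrections that keep the paper's estimators unbiased in that case are outside the scope of this sketch.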

The team used residual flow architectures, neural networks where each layer applies a small, invertible transformation to the field data and builds up a complex mapping through many successive steps. Training minimized the reverse Kullback-Leibler (KL) divergence, a standard measure of how much the learned distribution differs from the target. In the limit of an infinitesimally small parameter shift, the paper shows this is equivalent to training with a Physics-Informed Neural Network (PINN) loss, which incorporates known physical equations directly into the learning objective. That connection between the two communities’ methods is worth watching.
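To see what reverse-KL training optimizes, here is a minimal sketch in which a single rescaling parameter stands in for the residual network. It assumes a hypothetical target action $(1+\epsilon)x^2/2$ and base samples drawn from a unit Gaussian; none of the names below come from the paper. Up to a constant that does not depend on the flow, the loss is the average target action of the flowed samples minus the log-Jacobian of the map.

```python
import numpy as np

def target_action(x, eps):
    """Toy target: S_eps(x) = (1 + eps) * x^2 / 2 (an assumption for illustration)."""
    return 0.5 * (1.0 + eps) * x**2

def reverse_kl_loss(scale, x_base, eps):
    """Monte Carlo reverse KL, up to a scale-independent constant, for f(x) = scale * x."""
    log_det_jacobian = np.log(scale)   # log |df/dx| for a one-dimensional rescaling
    return np.mean(target_action(scale * x_base, eps)) - log_det_jacobian

rng = np.random.default_rng(0)
eps = 0.5
x_base = rng.normal(0.0, 1.0, 50_000)          # samples from the base distribution
scales = np.linspace(0.5, 1.2, 701)            # brute-force scan instead of gradient descent
losses = [reverse_kl_loss(s, x_base, eps) for s in scales]
best_scale = scales[int(np.argmin(losses))]

print("scale minimizing reverse KL:", best_scale)
print("exact map x / sqrt(1 + eps):", 1.0 / np.sqrt(1.0 + eps))
```

The minimum sits close to the exact rescaling, which is the sense in which minimizing this loss teaches the flow to map one distribution onto its neighbor. A real gauge-field flow replaces the grid scan with stochastic gradient descent over many network parameters.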

The researchers tested this on lattices up to 16³ × 32, approaching state-of-the-art phenomenological scales, in two-flavor QCD with dynamical fermions. Both glueball correlation functions (probing hypothetical bound states made entirely of gluons) and gluonic matrix elements (quantities describing how gluons contribute to the internal structure of hadrons like protons and neutrons) showed 10–60× variance reduction.

Why It Matters

A 10–60× variance reduction is not a technical nicety. The variance of a Monte Carlo average falls as 1/N with the number of samples (and the statistical error as 1/√N), so reaching the same precision requires 10 to 60 times fewer samples. Equivalently, error bars shrink by a factor of roughly 3 to 8 at fixed statistics. That translates directly into savings of one to nearly two orders of magnitude in sampling cost, or the ability to tackle harder problems with existing resources.
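The arithmetic can be made explicit. As a sketch, assume independent samples with per-sample variance $\sigma^2$, and let $R$ be the variance-reduction factor; the symbols $\sigma$, $N$, $R$, and $\delta$ are introduced here for illustration:

$$
\delta^2 = \frac{\sigma^2}{N}, \qquad
\sigma^2 \to \frac{\sigma^2}{R}
\;\Rightarrow\;
N_{\text{same precision}} = \frac{N}{R}, \qquad
\delta_{\text{same } N} = \frac{\delta}{\sqrt{R}}
$$

For $R$ between 10 and 60, the required sample count drops by that same factor at fixed precision, while at fixed sample count the error bar shrinks by $\sqrt{10} \approx 3.2$ to $\sqrt{60} \approx 7.7$.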

There’s a practical bonus worth highlighting: the variance reduction is approximately independent of lattice volume. Researchers can train flows on small, cheap lattices and transfer the trained model to larger volumes, a strategy called volume transfer, without losing the benefits. This removes a potential scaling barrier and keeps training costs modest even when the target calculation lives on a large lattice.
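One way to picture why a trained model can move between volumes is sketched below. It assumes, hypothetically, that the flow's parameters are local and identical at every site, as in a convolutional layer, and it uses a scalar field on a two-dimensional periodic lattice rather than SU(3) gauge links. The layer and its weights are invented for illustration and do not reproduce the paper's architecture. Invertibility and Jacobian bookkeeping are left out.

```python
import numpy as np

def residual_layer(field, weights, step=0.1):
    """One residual update: phi -> phi + step * tanh(local stencil), periodic boundaries.

    `weights` = (w_site, w_neighbor) are the same two numbers at every lattice site,
    so nothing in the layer depends on the lattice extent.
    """
    w_site, w_neighbor = weights
    # Sum of nearest neighbors in every direction, wrapping around the lattice.
    neighbors = sum(
        np.roll(field, shift, axis)
        for axis in range(field.ndim)
        for shift in (+1, -1)
    )
    return field + step * np.tanh(w_site * field + w_neighbor * neighbors)

weights = (0.3, -0.05)                  # hypothetical "trained" parameters
rng = np.random.default_rng(0)
small = rng.normal(size=(4, 4))         # cheap training volume
large = rng.normal(size=(8, 8))         # larger target volume

print(residual_layer(small, weights).shape)
print(residual_layer(large, weights).shape)
```

The same two weights act on both lattices without modification. Whether the variance reduction actually carries over to the larger volume is an empirical question, and that is what the paper reports for its gauge-field flows.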

The connection to PINN-style training suggests a future workflow where both training and evaluation happen in the exact linearized limit, simplifying the pipeline. And the framework extends to other derivative observables throughout lattice QCD, including quantities relevant to nuclear physics, electroweak matrix elements, and precision Standard Model tests.

Normalizing flows can now deliver 10–60× variance reduction in physically critical lattice QCD calculations at scale, with training costs that volume transfer keeps modest. That’s a genuine step toward making precision nuclear physics dramatically more computationally accessible.

IAIFI Research Highlights

This work merges deep generative modeling (normalizing flows with residual architectures) with nonperturbative QCD calculations, showing that modern machine learning can break through longstanding statistical bottlenecks in fundamental physics at real-world scales.

The paper establishes a formal equivalence between reverse-KL flow training and Physics-Informed Neural Network objectives, unifying two separate ML methodologies and pointing toward new hybrid training strategies for physics-constrained generative models.

Achieving 10–60× variance reduction in glueball correlators and gluonic matrix elements with dynamical fermions on lattices up to 16³ × 32 brings AI-assisted lattice QCD within reach of the precision regime needed for Standard Model tests and hadron structure physics.

Future extensions could target electroweak matrix elements and nuclear observables; the volume-transfer capability suggests the method will remain practical as lattice sizes grow. The preprint is available as FERMILAB-PUB-26-0130-T / MIT-CTP/6010 ([arXiv:2603.02984](https://arxiv.org/abs/2603.02984)).

Original Paper Details

Variance reduction in lattice QCD observables via normalizing flows

[arXiv:2603.02984](https://arxiv.org/abs/2603.02984)

Ryan Abbott, Denis Boyda, Yang Fu, Daniel C. Hackett, Gurtej Kanwar, Fernando Romero-López, Phiala E. Shanahan, Julian M. Urban
