Symmetry-via-Duality: Invariant Neural Network Densities from Parameter-Space Correlators

Authors

Anindita Maiti, Keegan Stoner, James Halverson

Abstract

Parameter-space and function-space provide two different duality frames in which to study neural networks. We demonstrate that symmetries of network densities may be determined via dual computations of network correlation functions, even when the density is unknown and the network is not equivariant. Symmetry-via-duality relies on invariance properties of the correlation functions, which stem from the choice of network parameter distributions. Input and output symmetries of neural network densities are determined, which recover known Gaussian process results in the infinite width limit. The mechanism may also be utilized to determine symmetries during training, when parameters are correlated, as well as symmetries of the Neural Tangent Kernel. We demonstrate that the amount of symmetry in the initialization density affects the accuracy of networks trained on Fashion-MNIST, and that symmetry breaking helps only when it is in the direction of ground truth.


The Big Picture

Imagine understanding a crowd’s personality without observing every individual. You could study each person one by one, or you could look at collective behaviors to infer something deeper about their shared nature. Physicists have used this trick for decades to uncover hidden symmetries of fundamental forces. A team at IAIFI is now applying it to neural networks.

Neural networks have a dirty secret: even though millions are trained every day, we understand very little about the population of all possible networks. The full range of behaviors that networks of a given architecture can exhibit is described by the network density: the probability distribution over functions induced by drawing the network's parameters at random. It encodes everything about what such networks can do before training begins. Knowing its symmetries (the transformations that leave it unchanged) would constrain predictions and guide better designs. The catch? The density is almost always unknown and, outside special limits, intractable to compute directly.

Researchers Anindita Maiti, Keegan Stoner, and James Halverson found a way around this. They borrow duality from theoretical physics, the idea that the same object can be described in two different but equivalent ways. With it, they can determine the symmetries of a neural network density without ever computing it.

Key Insight: Symmetries of an unknown neural network density can be read off from correlation functions computed in the more tractable parameter space. The technique, called symmetry-via-duality, connects quantum field theory with deep learning.

How It Works

The central idea exploits a duality in how you can think about a neural network. On one side is parameter space: a network as a collection of weights and biases drawn from some underlying distribution. On the other is function space: the same network viewed as a randomly sampled function from all possible input-output behaviors the architecture allows. Two frames for the same object, exactly the kind of duality physicists exploit in quantum field theory (QFT).

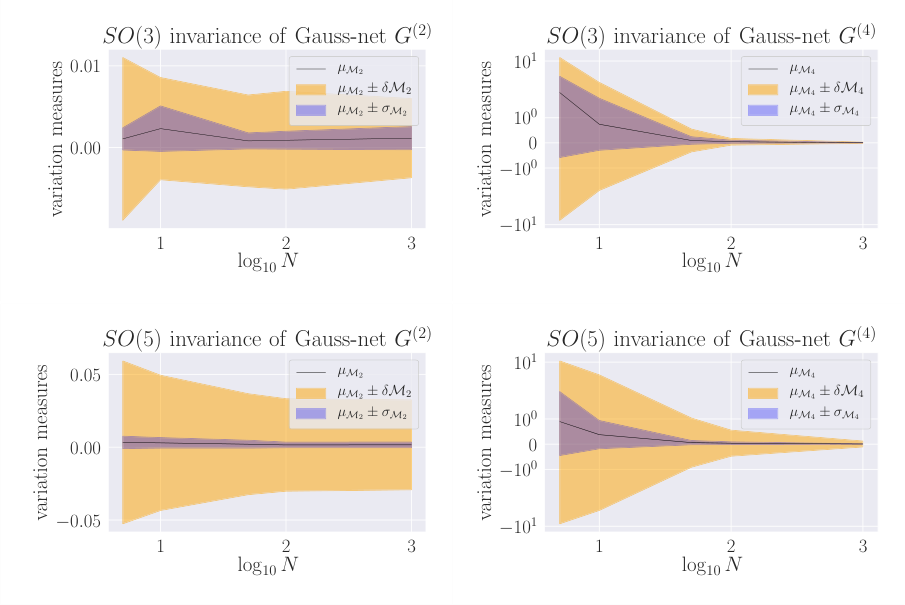

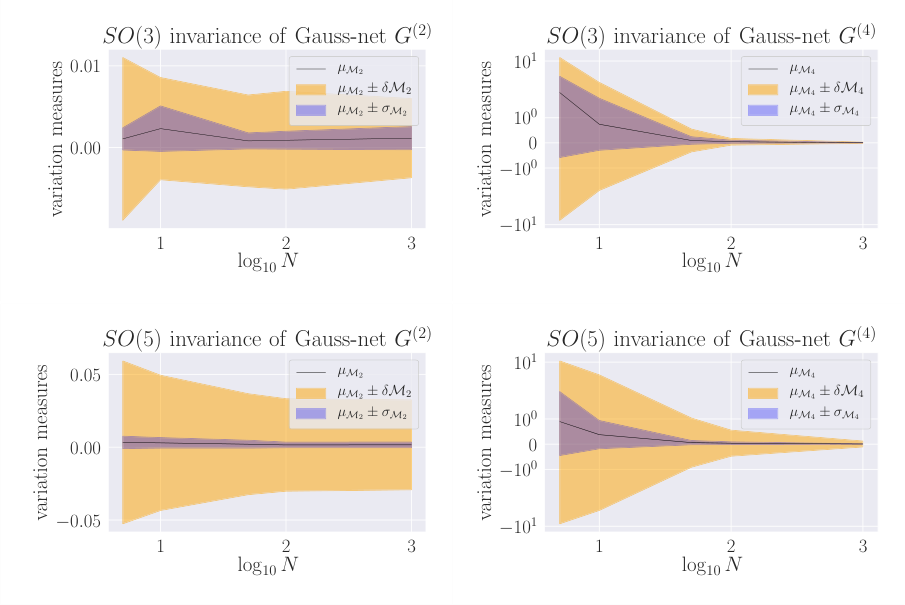

In QFT, physicists compute n-point correlation functions (averages of products of a field's values at n points, which measure how those values are related) to extract symmetry constraints without knowing the complete theory. Maiti, Stoner, and Halverson import this machinery directly. For a network f, the n-point correlators are:

$$G^{(n)}(x_1, \ldots, x_n) = \mathbb{E}[f(x_1) \cdots f(x_n)]$$
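Because the network's parameters are drawn at random, each correlator can be estimated by plain Monte Carlo over parameter draws, without ever touching the density. The sketch below assumes a hypothetical architecture (a single-hidden-layer tanh network with i.i.d. Gaussian weights and biases and a 1/√N readout); the names `sample_params`, `network`, and `correlator` are illustrative and not taken from the paper.

```python
import math
import random


def sample_params(d_in, width, rng, sigma_w=1.0, sigma_b=1.0):
    """Draw one network: hidden weights W, biases b, readout weights a (all i.i.d. Gaussian)."""
    W = [[rng.gauss(0.0, sigma_w / math.sqrt(d_in)) for _ in range(d_in)]
         for _ in range(width)]
    b = [rng.gauss(0.0, sigma_b) for _ in range(width)]
    a = [rng.gauss(0.0, 1.0) for _ in range(width)]
    return W, b, a


def network(params, x):
    """f(x) = (1/sqrt(N)) * sum_j a_j * tanh(W_j . x + b_j)."""
    W, b, a = params
    total = 0.0
    for w_row, b_j, a_j in zip(W, b, a):
        pre = sum(w_k * x_k for w_k, x_k in zip(w_row, x)) + b_j
        total += a_j * math.tanh(pre)
    return total / math.sqrt(len(a))


def correlator(xs, n_nets=10000, d_in=2, width=8, seed=0):
    """Monte Carlo estimate of G^(n)(x_1, ..., x_n) = E[f(x_1) ... f(x_n)],
    averaging over independent parameter draws (the parameter-space frame)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_nets):
        params = sample_params(d_in, width, rng)
        prod = 1.0
        for x in xs:
            prod *= network(params, x)
        total += prod
    return total / n_nets


x1, x2 = [1.0, 0.0], [0.0, 1.0]
print(correlator([x1]))      # 1-point function: near 0, since E[a_j] = 0
print(correlator([x1, x2]))  # 2-point function
```

Reusing the same seed means every call sees the same ensemble of networks, so estimates at different inputs are directly comparable.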

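The expectation in $G^{(n)}$ can be evaluated in either frame: as an average over the network density in function space, or as an average over random parameter draws in parameter space. The core result: if all of these correlation functions are invariant under a transformation, the underlying density over functions must also be invariant, assuming, as is standard in this field-theoretic approach, that the density is fully determined by its correlators. You don't need to know the density; the correlators, computed on the tractable parameter-space side, carry the symmetry information.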
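One way to see why the correlators suffice is through the generating functional, the standard QFT device that packages every correlator into a single object. This is a sketch of the argument under the assumption that the density is fully determined by its generating functional; the notation (source $J$, transformation $R$ acting on inputs) is illustrative rather than copied from the paper.

$$Z[J] = \mathbb{E}\left[\exp\left(\int d^dx\, J(x)\, f(x)\right)\right] = \sum_{n=0}^{\infty} \frac{1}{n!} \int d^dx_1 \cdots d^dx_n\, J(x_1) \cdots J(x_n)\, G^{(n)}(x_1, \ldots, x_n)$$

For the transformed ensemble $f_R(x) = f(Rx)$, each correlator becomes $G^{(n)}(Rx_1, \ldots, Rx_n)$, so

$$Z_R[J] = \sum_{n=0}^{\infty} \frac{1}{n!} \int d^dx_1 \cdots d^dx_n\, J(x_1) \cdots J(x_n)\, G^{(n)}(Rx_1, \ldots, Rx_n)$$

If $G^{(n)}(Rx_1, \ldots, Rx_n) = G^{(n)}(x_1, \ldots, x_n)$ for every $n$, then $Z_R[J] = Z[J]$ for every source $J$, so $f_R$ and $f$ share the same density. Output transformations work the same way, with the transformation acting on the output indices of the correlators instead of their arguments.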

The team applies this across two symmetry types:

- Input symmetries: transformations of the network’s inputs, such as translations, rotations, or other operations on data

- Output symmetries: transformations of the network’s outputs, like permuting output neurons
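The output-symmetry case can be made concrete with a minimal sketch under assumed conventions: a hypothetical two-output tanh network whose readout matrix $A$ has i.i.d. standard Gaussian entries. Rotating the outputs by $R$ is the same as replacing $A$ with $RA$, which has the same distribution, so the rotated ensemble is just the original ensemble reparametrized. The script checks that identity on one draw, then estimates the output two-point matrix $G_{ik}(x) = \mathbb{E}[f_i(x) f_k(x)]$, which should be close to a multiple of the identity and therefore unchanged by $R G R^T$. All names and sizes are illustrative.

```python
import math
import random


def sample(width, rng):
    """Hypothetical 2-input, 2-output network: hidden weights W, biases b, readout matrix A."""
    W = [[rng.gauss(0.0, 1.0) for _ in range(2)] for _ in range(width)]
    b = [rng.gauss(0.0, 1.0) for _ in range(width)]
    A = [[rng.gauss(0.0, 1.0) for _ in range(width)] for _ in range(2)]
    return W, b, A


def forward(params, x):
    """Outputs f_i(x) = (1/sqrt(N)) * sum_j A[i][j] * tanh(W_j . x + b_j), i = 0, 1."""
    W, b, A = params
    h = [math.tanh(w[0] * x[0] + w[1] * x[1] + b_j) for w, b_j in zip(W, b)]
    return [sum(a_ij * h_j for a_ij, h_j in zip(row, h)) / math.sqrt(len(h)) for row in A]


def matmul(P, Q):
    """Plain nested-list matrix product P @ Q."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q))) for j in range(len(Q[0]))]
            for i in range(len(P))]


angle = 0.7
R = [[math.cos(angle), -math.sin(angle)], [math.sin(angle), math.cos(angle)]]
R_T = [[R[0][0], R[1][0]], [R[0][1], R[1][1]]]
x = [0.3, -1.2]
rng = random.Random(1)

# 1) For one draw, rotating the outputs equals swapping the readout A for R @ A.
W, b, A = sample(16, rng)
out = forward((W, b, A), x)
rotated_out = [R[i][0] * out[0] + R[i][1] * out[1] for i in range(2)]
reparam_out = forward((W, b, matmul(R, A)), x)

# 2) Output two-point matrix G[i][k] = E[f_i(x) f_k(x)], averaged over parameter draws.
n_nets = 20000
G = [[0.0, 0.0], [0.0, 0.0]]
for _ in range(n_nets):
    f = forward(sample(8, rng), x)
    for i in range(2):
        for k in range(2):
            G[i][k] += f[i] * f[k] / n_nets

# Two-point matrix of the rotated outputs R f is R G R^T; invariance means it matches G.
G_rot = matmul(matmul(R, G), R_T)
print(G)
print(G_rot)
```

The Gaussian choice matters here: $RA$ has exactly the distribution of $A$ for any rotation. With i.i.d. symmetric non-Gaussian readout entries, this reparametrization argument would guarantee only output permutations and sign flips.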

In each case, invariance of the correlation functions, which are computed directly from the parameter distribution, implies invariance of the functional density. This holds even when no single network in the ensemble is equivariant: the symmetry is a property of the ensemble as a whole, not of any individual sampled function.
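The point that the density can be symmetric while individual networks are not is easy to check numerically. The sketch assumes a hypothetical single-hidden-layer tanh network whose first-layer weight rows $w_j$ are i.i.d. isotropic Gaussians, with $R$ a 2D rotation acting on inputs. For one draw $\theta$, $f_\theta(Rx) \neq f_\theta(x)$, but $f_\theta(Rx) = f_{\theta'}(x)$ with rows $w_j' = R^T w_j$; since $\theta'$ has the same distribution as $\theta$, every correlator is rotation-invariant. A Monte Carlo estimate of the two-point function confirms this up to sampling error.

```python
import math
import random


def sample(width, rng):
    """Hypothetical network: isotropic Gaussian rows w_j, Gaussian biases b_j and readout a_j."""
    W = [(rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)) for _ in range(width)]
    b = [rng.gauss(0.0, 1.0) for _ in range(width)]
    a = [rng.gauss(0.0, 1.0) for _ in range(width)]
    return W, b, a


def f(params, x):
    """f(x) = (1/sqrt(N)) * sum_j a_j * tanh(w_j . x + b_j) for 2D inputs x."""
    W, b, a = params
    s = sum(a_j * math.tanh(w[0] * x[0] + w[1] * x[1] + b_j) for w, b_j, a_j in zip(W, b, a))
    return s / math.sqrt(len(a))


def rotate(M, v):
    """Apply a 2x2 matrix M to a 2D vector v."""
    return (M[0][0] * v[0] + M[0][1] * v[1], M[1][0] * v[0] + M[1][1] * v[1])


angle = 1.0
R = ((math.cos(angle), -math.sin(angle)), (math.sin(angle), math.cos(angle)))
R_T = ((R[0][0], R[1][0]), (R[0][1], R[1][1]))
x1, x2 = (1.0, 0.0), (0.5, 0.5)
rng = random.Random(2)

# A single network is NOT invariant: f(Rx) differs from f(x).
W, b, a = sample(8, rng)
single_gap = abs(f((W, b, a), rotate(R, x1)) - f((W, b, a), x1))

# Rotating the input equals rotating the weights: w_j . (R x) = (R^T w_j) . x.
W_prime = [rotate(R_T, w) for w in W]
reparam_gap = abs(f((W, b, a), rotate(R, x1)) - f((W_prime, b, a), x1))

# Ensemble two-point function G2(x1, x2) vs G2(Rx1, Rx2) over the same parameter draws.
n_nets, g, g_rot = 20000, 0.0, 0.0
for _ in range(n_nets):
    p = sample(8, rng)
    g += f(p, x1) * f(p, x2) / n_nets
    g_rot += f(p, rotate(R, x1)) * f(p, rotate(R, x2)) / n_nets
print(single_gap, reparam_gap, g, g_rot)
```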

The approach extends beyond initialization. During training, parameters become correlated, which complicates many analytical treatments. Symmetry-via-duality still applies: if the correlated parameter distribution preserves the relevant invariances, the symmetry carries through. The framework also covers the Neural Tangent Kernel (NTK), the kernel that governs how a network's outputs evolve under gradient descent and that stays fixed during training in the infinite-width limit. Its symmetry properties follow by the same mechanism.
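Correlated parameters need not spoil a symmetry; what matters is whether the joint parameter distribution is invariant. As a stand-in for training-induced correlations (an illustrative assumption, not the paper's training setup), the sketch ties each readout weight $a_j$ to its hidden weight vector $w_j$ in two hypothetical ways: through the norm $\lVert w_j \rVert$, which rotations leave unchanged, or through the single component $w_{j,1}$, which they do not. A random sign on each $a_j$ keeps the network mean at zero. Only the norm coupling keeps $G^{(2)}(x, x)$ equal at $x$ and at the rotated input $Rx$.

```python
import math
import random


def sample(width, rng, coupling):
    """Hypothetical correlated draw: a_j = s_j * g(w_j) with a random sign s_j."""
    W, a = [], []
    for _ in range(width):
        w = (rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0))
        s = rng.choice((-1.0, 1.0))
        if coupling == "norm":
            a.append(s * math.hypot(w[0], w[1]))  # depends on |w_j|: joint law is rotation-invariant
        else:
            a.append(s * abs(w[0]))               # depends on one component: invariance broken
        W.append(w)
    return W, a


def f(params, x):
    """f(x) = (1/sqrt(N)) * sum_j a_j * tanh(w_j . x); no biases in this sketch."""
    W, a = params
    s = sum(a_j * math.tanh(w[0] * x[0] + w[1] * x[1]) for w, a_j in zip(W, a))
    return s / math.sqrt(len(a))


def two_point_same_input(x, coupling, n_nets=20000, width=4, seed=3):
    """Monte Carlo estimate of G2(x, x) = E[f(x)^2] under the chosen coupling."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_nets):
        value = f(sample(width, rng, coupling), x)
        total += value * value
    return total / n_nets


x, Rx = (1.0, 0.0), (0.0, 1.0)  # R = rotation by 90 degrees
for coupling in ("norm", "component"):
    print(coupling, two_point_same_input(x, coupling), two_point_same_input(Rx, coupling))
```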

In the infinite-width limit, the results recover known Neural Network Gaussian Process (NNGP) behavior, where the network density becomes a Gaussian process, fully specified by its mean and two-point function. This is a consistency check. The new framework generalizes the known story to finite-width networks with richer, non-Gaussian behavior.
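The approach to the Gaussian-process limit shows up in a small calculation. For a hypothetical one-hidden-layer network $f(x) = N^{-1/2}\sum_j a_j \phi_j(x)$ with i.i.d. neurons and readout weights $a_j \sim \mathcal{N}(0,1)$, expanding $\mathbb{E}[f^4]$ over index pairings gives the connected four-point function at coincident points,

$$G^{(4)}(x,x,x,x) - 3\left[G^{(2)}(x,x)\right]^2 = \frac{3}{N}\left(\mathbb{E}[\phi(x)^4] - \mathbb{E}[\phi(x)^2]^2\right),$$

which measures non-Gaussianity and vanishes as $N \to \infty$. The sketch assumes $\phi = \tanh$ with standard Gaussian weights and bias, estimates the two single-neuron moments by Monte Carlo, and evaluates the formula at several widths.

```python
import math
import random


def neuron_moments(x, n_samples=50000, seed=4):
    """Monte Carlo estimates of E[phi^2] and E[phi^4] for phi = tanh(w . x + b), w, b ~ N(0, 1)."""
    rng = random.Random(seed)
    m2 = m4 = 0.0
    for _ in range(n_samples):
        pre = sum(rng.gauss(0.0, 1.0) * x_k for x_k in x) + rng.gauss(0.0, 1.0)
        p2 = math.tanh(pre) ** 2
        m2 += p2 / n_samples
        m4 += p2 * p2 / n_samples
    return m2, m4


def connected_four_point(width, m2, m4):
    """G4(x,x,x,x) - 3 * G2(x,x)^2 for the one-hidden-layer network with N = width."""
    return 3.0 * (m4 - m2 * m2) / width


m2, m4 = neuron_moments((1.0, -0.5))
for width in (1, 10, 100, 1000):
    print(width, connected_four_point(width, m2, m4))
```

The two-point function $G^{(2)}(x,x) = \mathbb{E}[\phi(x)^2]$ does not depend on $N$ here, so only the non-Gaussian part shrinks with width.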

Why It Matters

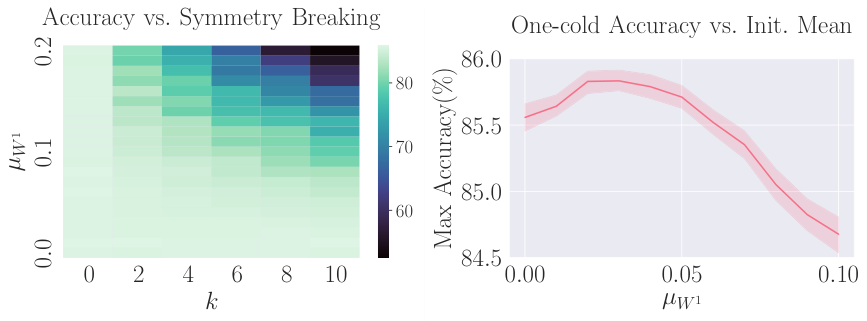

The practical stakes show up in Fashion-MNIST experiments. The team constructs initialization distributions with varying amounts of symmetry and measures classification accuracy after training. The results sharpen an intuition that had previously been fuzzy: more symmetry at initialization is not always better. What matters is whether the symmetry breaking points toward the true structure of the data.

When it does, breaking symmetry helps. When it doesn’t, it hurts.

For network design, the implication is concrete. If you know the symmetry structure of your problem (rotational symmetry in a physics dataset, permutation symmetry in a particle physics task), you can engineer the initialization distribution to match, or purposely break it in the right directions. Symmetry-via-duality lets you verify that your chosen parameter distribution produces the desired functional symmetry, all without computing the density itself.
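As a sketch of that verification step, consider two hypothetical first-layer initializations for a bias-free one-hidden-layer tanh network: i.i.d. Gaussian entries and i.i.d. uniform entries with matching variance. Both are invariant under permuting input coordinates, but only the Gaussian one is rotation-invariant, and the two-point function detects the difference. With independent zero-mean, unit-variance readout weights, $G^{(2)}(x,x) = \mathbb{E}[\tanh(w \cdot x)^2]$ exactly, so the sketch estimates that single-neuron average directly to keep sampling noise low. The input points are illustrative choices, not taken from the paper.

```python
import math
import random


def g2_same_input(x, draw_weight, n_samples=40000, seed=5):
    """Estimate G2(x, x) = E[tanh(w . x)^2] with i.i.d. weight entries from draw_weight(rng)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        pre = sum(draw_weight(rng) * x_k for x_k in x)
        total += math.tanh(pre) ** 2
    return total / n_samples


def gaussian_weight(rng):
    """I.i.d. Gaussian entry with variance 1/3 (the weight vector is rotation-invariant)."""
    return rng.gauss(0.0, 1.0 / math.sqrt(3.0))


def uniform_weight(rng):
    """I.i.d. uniform entry on [-1, 1], also variance 1/3 (permutation- but not rotation-invariant)."""
    return rng.uniform(-1.0, 1.0)


x = (3.0, 0.0)
x_perm = (0.0, 3.0)                                   # coordinates permuted
x_rot = (3.0 / math.sqrt(2.0), 3.0 / math.sqrt(2.0))  # rotated by 45 degrees

for name, draw in (("gaussian", gaussian_weight), ("uniform", uniform_weight)):
    base = g2_same_input(x, draw)
    print(name,
          "permutation gap:", abs(g2_same_input(x_perm, draw) - base),
          "rotation gap:", abs(g2_same_input(x_rot, draw) - base))
```

Both initializations pass the permutation check, but only the Gaussian one passes the rotation check, which illustrates how the choice of parameter distribution fixes which functional symmetries the density has.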

There’s a longer-term payoff too. QFT physicists have spent decades building tools for extracting symmetry constraints from correlation functions. Neural network theory is young enough that importing even the basic vocabulary of that framework could open up a lot of ground quickly.

Bottom Line: Symmetry-via-duality lets researchers determine the hidden symmetries of neural network densities from parameter-space correlation functions, with no explicit density required; the paper's experiments also show that initialization symmetry has measurable effects on trained network performance.

IAIFI Research Highlights

This work transplants duality and correlation-function machinery from quantum field theory into neural network theory, drawing a direct formal connection between fundamental physics and deep learning foundations.

Symmetry-via-duality gives researchers a practical diagnostic for network initialization: by computing parameter-space correlators, they can verify or engineer the symmetry properties of a network density before training begins, with measurable effects on accuracy.

By recovering NNGP and NTK symmetry results as special cases and extending them to finite-width non-Gaussian regimes, the framework advances a QFT-inspired understanding of neural network densities and tightens the formal link between the two fields.

Future work can extend symmetry-via-duality to characterize how symmetry evolves during gradient-descent training and to constrain non-Gaussian process models at finite width; the paper is available at [arXiv:2106.00694](https://arxiv.org/abs/2106.00694).

Original Paper Details

Symmetry-via-Duality: Invariant Neural Network Densities from Parameter-Space Correlators

[arXiv:2106.00694](https://arxiv.org/abs/2106.00694)

Anindita Maiti, Keegan Stoner, James Halverson
