Relaxed Equivariant Graph Neural Networks

Authors

Elyssa Hofgard, Rui Wang, Robin Walters, Tess Smidt

Abstract

3D Euclidean symmetry equivariant neural networks have demonstrated notable success in modeling complex physical systems. We introduce a framework for relaxed $E(3)$ graph equivariant neural networks that can learn and represent symmetry breaking within continuous groups. Building on the existing e3nn framework, we propose the use of relaxed weights to allow for controlled symmetry breaking. We show empirically that these relaxed weights learn the correct amount of symmetry breaking.

Concepts

The Big Picture

Imagine teaching a child to recognize a coffee mug. You’d want them to know it’s the same mug whether it’s right-side up or tilted. That consistency makes learning easier. Now imagine the mug is melting. The symmetry breaks, and a neural network that only knows about perfect mugs will struggle with the melting one.

This tension runs through modern physics-informed machine learning. Researchers have spent years building neural networks that respect nature’s fundamental symmetries: rotational, mirror, and translational. These symmetry-aware neural networks work well for modeling molecules, crystals, and quantum systems.

But nature isn’t always symmetric. Crystals undergo phase transitions, sudden structural changes like water turning to ice, where the regular symmetry of the material breaks down. Fluids respond to temperature gradients. Biological molecules feel the asymmetric tug of their environment. When symmetry breaks, the best physics-AI tools can become the least flexible.

A team from MIT and Northeastern University, led by Elyssa Hofgard, has built a framework that lets these networks learn how much symmetry to break, and when, directly from data.

Key Insight: Rather than forcing a neural network to be fully symmetric or fully asymmetric, these adaptive networks learn the degree of symmetry breaking that the data actually requires.

How It Works

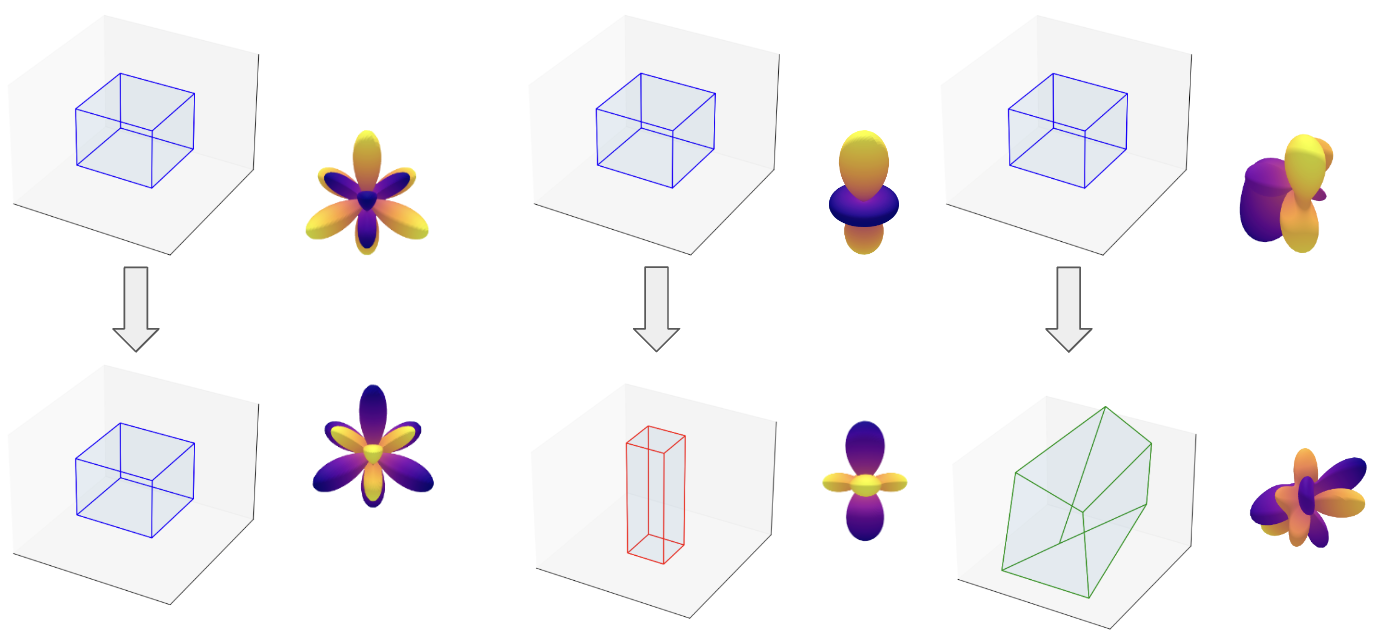

The starting point is the E(3) group, the mathematical structure encoding all symmetries of 3D Euclidean space: translations, rotations, and reflections. Standard equivariant neural networks are built so that rotating your input molecule produces a rotated but otherwise identical output. That makes them data-efficient and well-behaved.
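The equivariance property can be checked numerically. The sketch below is a dependency-free illustration, not code from the paper or from e3nn: `toy_layer` is a hypothetical layer that sums distance-weighted relative position vectors, and the check uses a single rotation about the z-axis plus a translation.

```python
import math

def rotate_z(v, angle):
    """Rotate a 3D vector about the z-axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    x, y, z = v
    return (c * x - s * y, s * x + c * y, z)

def toy_layer(positions):
    """Hypothetical equivariant layer: one output vector per atom.

    Each atom sums the relative vectors to the other atoms, weighted by a
    function of distance only. Relative vectors ignore global translations,
    and distances ignore rotations, so the output rotates with the input.
    """
    outputs = []
    for i, pi in enumerate(positions):
        acc = [0.0, 0.0, 0.0]
        for j, pj in enumerate(positions):
            if i == j:
                continue
            rel = [b - a for a, b in zip(pi, pj)]
            dist = math.sqrt(sum(r * r for r in rel))
            weight = math.exp(-dist)  # depends on distance only
            for k in range(3):
                acc[k] += weight * rel[k]
        outputs.append(tuple(acc))
    return outputs

def max_equivariance_error(positions, angle, shift):
    """Compare layer(transform(x)) with rotate(layer(x))."""
    moved = [
        tuple(c + t for c, t in zip(rotate_z(p, angle), shift))
        for p in positions
    ]
    lhs = toy_layer(moved)
    rhs = [rotate_z(v, angle) for v in toy_layer(positions)]
    return max(abs(a - b) for u, v in zip(lhs, rhs) for a, b in zip(u, v))

atoms = [(0.0, 0.0, 0.0), (1.0, 0.2, -0.3), (-0.4, 0.9, 0.5)]
error = max_equivariance_error(atoms, angle=0.7, shift=(2.0, -1.0, 0.5))
print(f"max equivariance error: {error:.2e}")
```

The reported error should sit at floating-point rounding level: transforming the input and then applying the layer agrees with applying the layer and then rotating the output.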

The e3nn framework is an open-source library for building these networks, and it’s what this work extends. It gets equivariance through a two-part recipe:

- Spherical harmonics project geometric relationships between atoms onto basis functions that capture directional information in a form that transforms predictably under rotation. Think of it as a mathematical language for encoding “which way things point.”

- Tensor product decompositions combine inputs and filters using Clebsch-Gordan coefficients, numbers that specify the rules for how geometric quantities (scalars, vectors, and their higher-dimensional relatives) combine while obeying the group’s symmetry rules.
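For the simplest case, two ordinary vectors, the tensor product paths are familiar operations: the vector-times-vector-to-scalar path is proportional to the dot product, and the vector-times-vector-to-vector path is proportional to the cross product. The sketch below is plain Python rather than e3nn, and the helper name `weighted_paths` and its weights are assumptions made for illustration. It weights each path by one scalar and checks that rotating both inputs leaves the scalar output unchanged and rotates the vector output. The third path, to a rank-2 symmetric traceless tensor, is omitted for brevity.

```python
import math

def rotate_z(v, angle):
    """Rotate a 3D vector about the z-axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    x, y, z = v
    return (c * x - s * y, s * x + c * y, z)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )

def weighted_paths(a, b, w_scalar, w_vector):
    """Combine two vectors along two tensor-product paths.

    Path 1 (vector x vector -> scalar) is the dot product.
    Path 2 (vector x vector -> vector) is the cross product.
    Each path is scaled by its own scalar weight.
    """
    scalar_out = w_scalar * dot(a, b)
    vector_out = tuple(w_vector * c for c in cross(a, b))
    return scalar_out, vector_out

a = (1.0, 2.0, -0.5)
b = (0.3, -1.0, 0.8)
angle = 1.1

s0, v0 = weighted_paths(a, b, w_scalar=0.7, w_vector=-1.3)
s1, v1 = weighted_paths(
    rotate_z(a, angle), rotate_z(b, angle), w_scalar=0.7, w_vector=-1.3
)

scalar_error = abs(s1 - s0)  # the scalar path is invariant
vector_error = max(abs(p - q) for p, q in zip(v1, rotate_z(v0, angle)))
print(f"scalar path error: {scalar_error:.2e}")
print(f"vector path error: {vector_error:.2e}")
```

Because each path is scaled by a single number, the weights can take any value without disturbing equivariance under rotation.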

Each “path” in this tensor product can be independently weighted by a scalar. Normally, that weight is a single number that does not depend on orientation, which is what guarantees equivariance. Hofgard and colleagues introduce relaxed weights: weights that are mostly equivariant but carry a small learnable perturbation that can break symmetry in a controlled way.
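To see how a small perturbation translates into a controlled loss of equivariance, consider the toy sketch below. It is an illustrative stand-in and not necessarily the parameterisation used in the paper: the hypothetical `relaxed_scale` multiplies each component of a vector by a shared weight plus a per-component correction, and the equivariance error is measured under a rotation about the x-axis.

```python
import math

def rotate_x(v, angle):
    """Rotate a 3D vector about the x-axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    x, y, z = v
    return (x, c * y - s * z, s * y + c * z)

def relaxed_scale(v, weight, correction):
    """Scale component m of v by weight + correction[m].

    Hypothetical parameterisation for illustration only. With a zero
    correction this reduces to an ordinary equivariant scalar weight.
    """
    return tuple((weight + d) * c for d, c in zip(correction, v))

def equivariance_error(weight, correction, v, angle):
    """Largest component-wise gap between f(Rv) and R f(v)."""
    lhs = relaxed_scale(rotate_x(v, angle), weight, correction)
    rhs = rotate_x(relaxed_scale(v, weight, correction), angle)
    return max(abs(a - b) for a, b in zip(lhs, rhs))

v = (0.5, -1.0, 2.0)
perturbations = (0.0, 0.01, 0.1)
errors = [
    # The correction acts on the z component only, singling out one axis.
    equivariance_error(1.0, (0.0, 0.0, eps), v, angle=0.9)
    for eps in perturbations
]
for eps, err in zip(perturbations, errors):
    print(f"perturbation {eps:<5} -> equivariance error {err:.4f}")
```

In this toy setting the error is zero when the correction vanishes and grows in proportion to the size of the perturbation, which is the sense in which the symmetry breaking is controlled.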

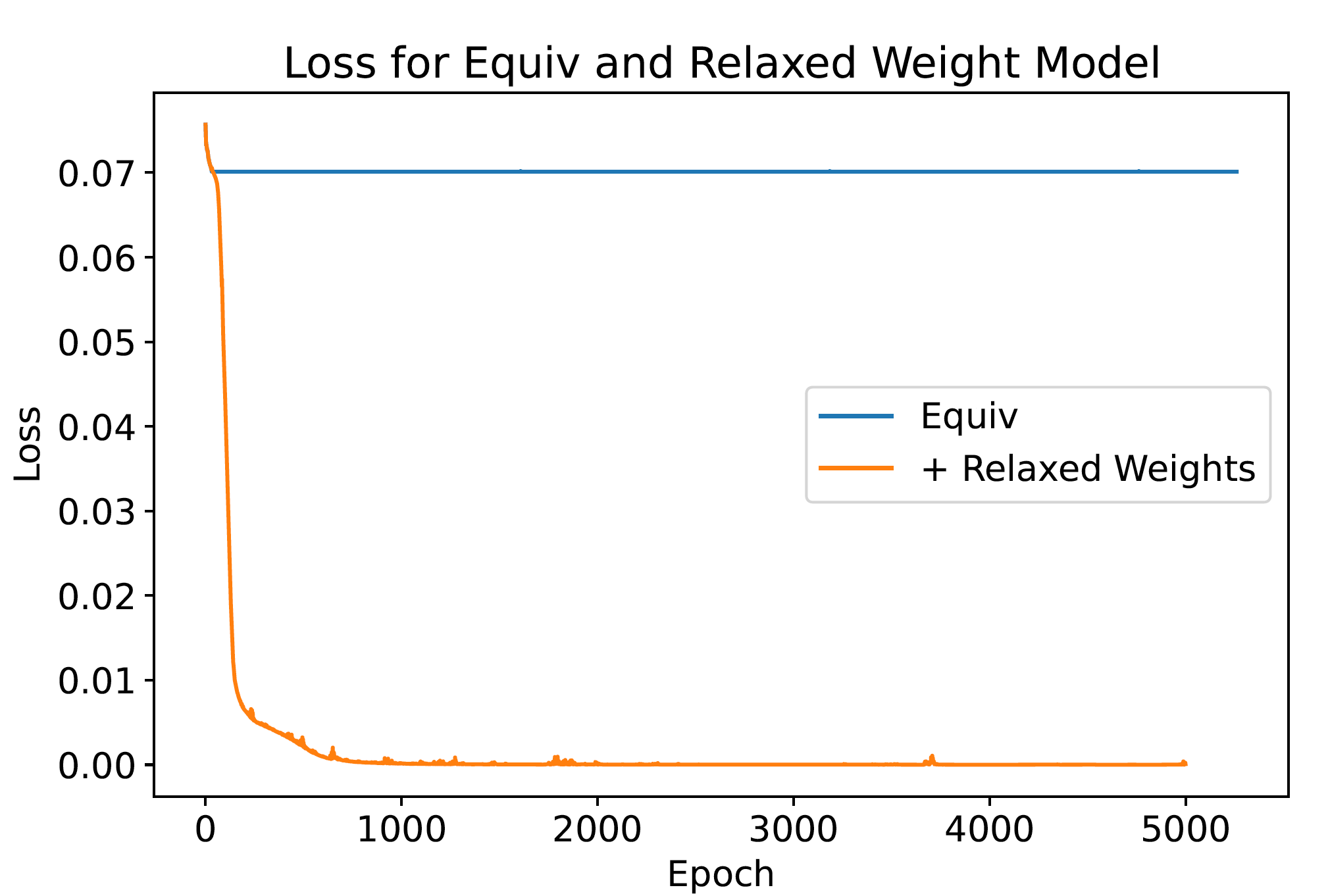

The relaxed weight for each tensor product path takes the standard equivariant weight and adds a symmetry-breaking correction. The network learns how large this correction should be from training data. If the physics truly respects E(3) symmetry, the correction learns to be zero. If the system has broken symmetry (say, a crystal undergoing a phase transition), the correction grows to capture that asymmetry.
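The idea that the correction is learned from data can be sketched with a deliberately small example. Everything here is an assumption made for illustration (the per-component weights, the synthetic data, and the choice to define the shared part as the mean weight); it is not the training setup from the paper. Gradient descent fits one weight per vector component, and the result is split into a shared part and a symmetry-breaking correction.

```python
import random

def make_data(true_weights, n=64, seed=0):
    """Synthetic pairs (x, y) with y[m] = true_weights[m] * x[m]."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = [rng.uniform(-1.0, 1.0) for _ in range(3)]
        y = [tw * xm for tw, xm in zip(true_weights, x)]
        data.append((x, y))
    return data

def fit_component_weights(samples, steps=200, lr=0.5):
    """Gradient descent on the mean squared error of y[m] ~ w[m] * x[m]."""
    w = [1.0, 1.0, 1.0]
    n = len(samples)
    for _ in range(steps):
        grad = [0.0, 0.0, 0.0]
        for x, y in samples:
            for m in range(3):
                grad[m] += 2.0 * x[m] * (w[m] * x[m] - y[m]) / n
        w = [wm - lr * gm for wm, gm in zip(w, grad)]
    return w

def split_weights(w):
    """Return (shared part, per-component correction) of the weights."""
    shared = sum(w) / len(w)
    return shared, [wm - shared for wm in w]

# Symmetric target: every component is scaled identically.
symmetric = split_weights(fit_component_weights(make_data([2.0, 2.0, 2.0])))
# Broken target: the z component is scaled differently.
broken = split_weights(fit_component_weights(make_data([2.0, 2.0, 2.6])))

print("symmetric data:", symmetric)
print("broken data:   ", broken)
```

For the symmetric dataset the fitted correction should come out close to zero around a shared weight of 2.0. For the broken dataset it should come out close to (-0.2, -0.2, 0.4) around a shared weight of 2.2, reflecting the asymmetry built into the data.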

There’s a nice connection to Landau theory, the classical physics framework for describing phase transitions. In Landau theory, an “order parameter” tracks how much symmetry a system has broken: zero in the disordered phase, nonzero below the transition temperature. The relaxed weights function as learned order parameters, measures of symmetry breaking that emerge from training rather than being specified by hand.
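For readers unfamiliar with Landau theory, the standard textbook form of the free energy makes the role of the order parameter concrete. The symbols below ($\eta$ for the order parameter, $T_c$ for the transition temperature, and positive constants $a$ and $b$) are conventional choices, not notation taken from the paper:

$$
F(\eta) = a\,(T - T_c)\,\eta^2 + b\,\eta^4, \qquad a, b > 0
$$

Setting $\partial F / \partial \eta = 0$ gives the equilibrium value:

$$
\eta^* =
\begin{cases}
0, & T \ge T_c \\
\pm\sqrt{\dfrac{a\,(T_c - T)}{2b}}, & T < T_c
\end{cases}
$$

Above $T_c$ the only minimum is the symmetric state $\eta^* = 0$. Below $T_c$ the system settles into one of two nonzero minima, and the magnitude of $\eta^*$ measures how strongly the symmetry is broken.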

In experiments on systems with known, controllable asymmetry, the relaxed weights converge to the correct amount of symmetry breaking. The network neither over-breaks nor under-breaks symmetry; it recovers the asymmetry that is actually present in the data.

Why It Matters

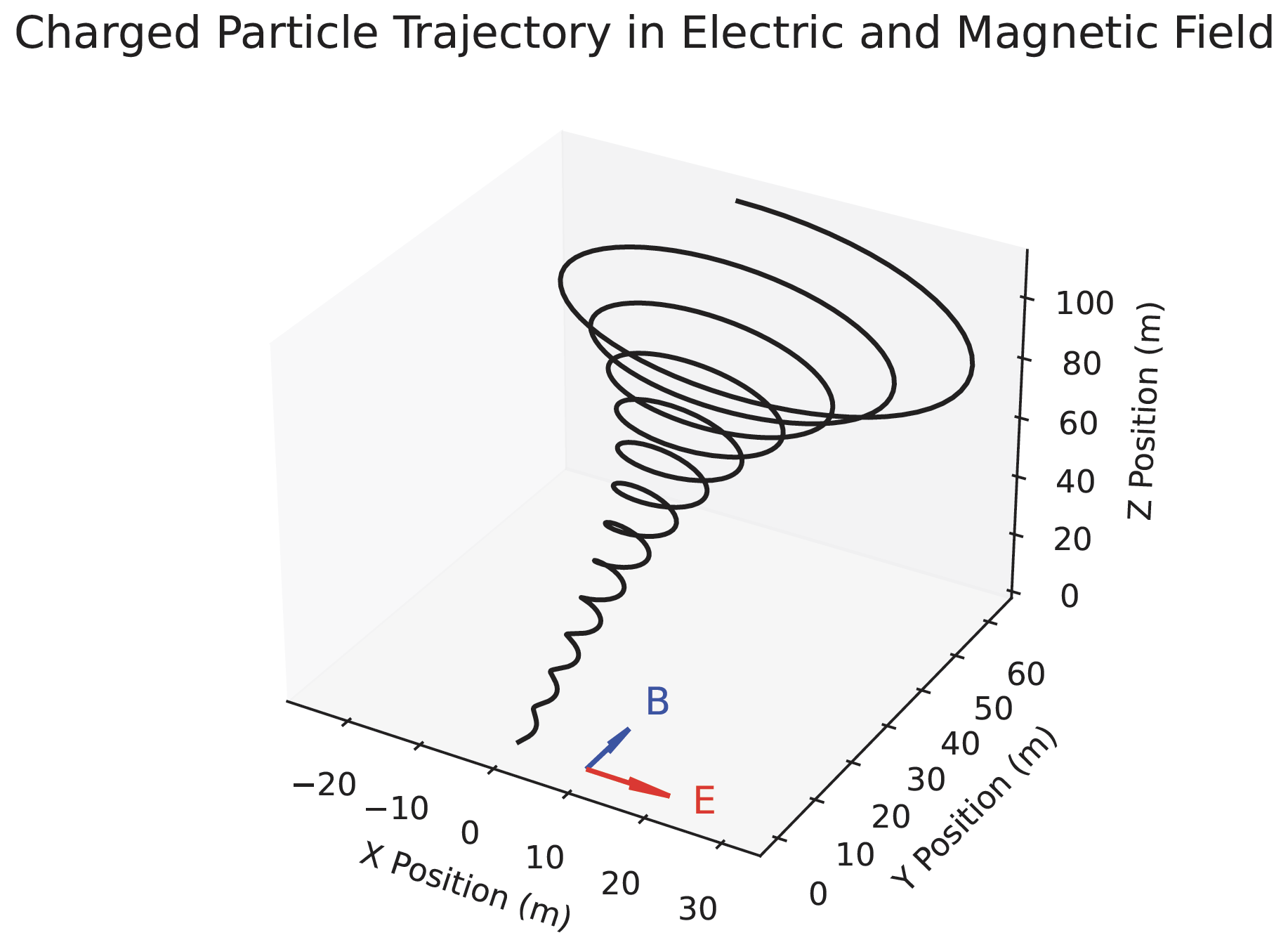

Many scientifically important systems live where symmetry is approximate rather than exact. Superconductors lose all electrical resistance below a critical temperature. Proteins fold in asymmetric cellular environments. Fluids driven by external fields break the symmetry of undisturbed flow.

Previous equivariant networks forced a binary choice: impose full symmetry and accept model errors, or abandon equivariance entirely and lose the efficiency and generalization that made these networks useful. Relaxed equivariant networks offer a middle path, keeping the symmetric structure while letting the data decide how far to depart from it.

In principle, a model trained on crystal structures can handle both the symmetric high-temperature phase and the symmetry-broken low-temperature phase within a single architecture. For fluid dynamics, a network can encode the baseline Euclidean symmetry of an undisturbed fluid while learning the asymmetry introduced by a temperature gradient. Materials science, climate modeling, and biophysics are natural candidates: wherever the physics happens at the boundary between order and disorder, this framework could apply.

Symmetry constraints remain one of the best tools for building models that generalize from limited data. What this work adds is a principled way to handle approximate symmetry, which is far more common in practice than perfect symmetry.

Bottom Line: Relaxed equivariant graph neural networks let physicists and AI researchers model symmetry breaking in continuous 3D space, learning the right amount of asymmetry from data without sacrificing the geometric structure that makes equivariant networks powerful.

IAIFI Research Highlights

This work connects abstract group representation theory with practical neural network architecture design, producing a framework equally at home with Clebsch-Gordan coefficients and PyTorch tensor operations.

Relaxed equivariant networks extend learnable symmetry structures in geometric deep learning from discrete groups to the continuous E(3) group, allowing more expressive inductive biases for physical data.

By tying learned symmetry-breaking weights to Landau order parameters, the framework provides a data-driven route to characterizing phase transitions and spontaneous symmetry breaking in condensed matter systems.

Future directions include applying relaxed equivariance to molecular dynamics simulations under external fields and crystallographic phase transition modeling. The work appeared at the Geometry-grounded Representation Learning workshop at ICML 2024.

Original Paper Details

Relaxed Equivariant Graph Neural Networks

[arXiv:2407.20471](https://arxiv.org/abs/2407.20471)

Elyssa Hofgard, Rui Wang, Robin Walters, Tess Smidt
