Pruning a restricted Boltzmann machine for quantum state reconstruction

Authors

Anna Golubeva, Roger G. Melko

Abstract

Restricted Boltzmann machines (RBMs) have proven to be a powerful tool for learning quantum wavefunction representations from qubit projective measurement data. Since the number of classical parameters needed to encode a quantum wavefunction scales rapidly with the number of qubits, the ability to learn efficient representations is of critical importance. In this paper we study magnitude-based pruning as a way to compress the wavefunction representation in an RBM, focusing on RBMs trained on data from the transverse-field Ising model in one dimension. We find that pruning can reduce the total number of RBM weights, but the threshold at which the reconstruction accuracy starts to degrade varies significantly depending on the phase of the model. In a gapped region of the phase diagram, the RBM admits pruning over half of the weights while still accurately reproducing relevant physical observables. At the quantum critical point however, even a small amount of pruning can lead to significant loss of accuracy in the physical properties of the reconstructed quantum state. Our results highlight the importance of tracking all relevant observables as their sensitivity varies strongly with pruning. Finally, we find that sparse RBMs are trainable and discuss how a successful sparsity pattern can be created without pruning.

The Big Picture

Take thousands of measurements of a quantum system: qubits flickering between states. From that data alone, you want to reconstruct the underlying wavefunction, the complete mathematical portrait of the system. It encodes every probability, every correlation, every possible measurement outcome. The problem is that this description grows exponentially with the number of particles: n qubits require 2^n amplitudes. For 50 qubits that is already about 10^15 numbers, and for a few hundred qubits it exceeds the number of atoms in the observable universe.
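To make the scaling concrete, here is a small arithmetic sketch. The function name and the figure of 10^80 atoms (a common order-of-magnitude estimate) are illustrative assumptions, not taken from the paper.

```python
def num_amplitudes(n_qubits: int) -> int:
    """Number of complex amplitudes in a general n-qubit state vector."""
    return 2 ** n_qubits

# Rough order-of-magnitude estimate, used only for comparison.
ATOMS_IN_UNIVERSE = 10 ** 80

for n in (10, 50, 100, 300):
    count = num_amplitudes(n)
    exceeds = count > ATOMS_IN_UNIVERSE
    print(f"{n:>3} qubits: {count:.3e} amplitudes, exceeds atom estimate: {exceeds}")
```

The count doubles with every added qubit, which is why an exact description stops being practical long before the system gets large.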

Machine learning can help, but only if it learns efficient representations: compressed summaries that capture what matters without drowning in parameters.

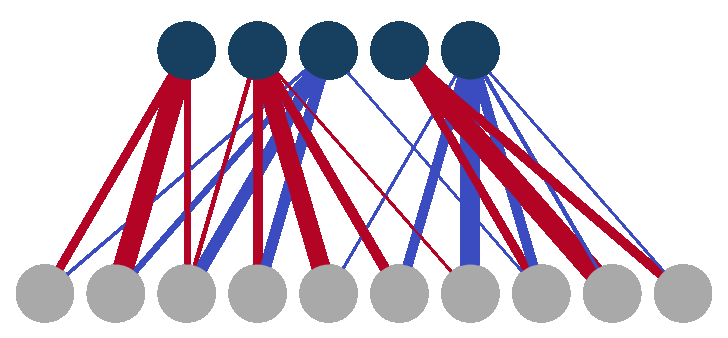

Restricted Boltzmann machines (RBMs) are a natural fit. They’re compact neural networks with two layers: one that “sees” raw measurement data and one that learns hidden patterns, connected by a matrix of tunable weights. More qubits means a bigger RBM. But does every connection actually matter? If you could snip away the weakest links without losing accuracy, you’d have a faster, leaner machine.
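A minimal sketch of how such a two-layer network can encode a wavefunction is shown below. It assumes a wavefunction with real, non-negative amplitudes, so that the amplitude is the square root of the RBM's probability. The function names, array shapes, and random initialization are illustrative choices, not the paper's code or the QuCumber API.

```python
import numpy as np

def log_unnormalized_prob(v, W, b, c):
    """Log of the unnormalized RBM probability of visible state v.

    v: (n_visible,) array of 0/1 measurement outcomes
    W: (n_hidden, n_visible) weight matrix
    b: (n_visible,) visible biases, c: (n_hidden,) hidden biases
    The binary hidden units are summed out analytically (softplus terms).
    """
    return v @ b + np.sum(np.logaddexp(0.0, c + W @ v))

def amplitude(v, W, b, c):
    """Unnormalized amplitude, assuming psi(v) = sqrt(p(v))."""
    return np.exp(0.5 * log_unnormalized_prob(v, W, b, c))

rng = np.random.default_rng(0)
n_visible, n_hidden = 4, 4
W = 0.1 * rng.standard_normal((n_hidden, n_visible))
b = np.zeros(n_visible)
c = np.zeros(n_hidden)

v = np.array([0, 1, 1, 0])
print("unnormalized amplitude:", amplitude(v, W, b, c))
print("number of weights:", W.size)
```

The weight matrix has n_hidden × n_visible entries, so it dominates the parameter count as the system grows. That is the part pruning targets.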

Anna Golubeva and Roger Melko tested exactly this. They found that whether pruning works depends on where you sit in the quantum phase diagram, the map of how a system behaves as you tune physical conditions like magnetic field strength. Get the phase wrong, and pruning falls apart.

Key Insight: Pruning an RBM works well in a gapped quantum phase, where more than half the weights can be removed without losing accuracy. But at a quantum critical point, even a small amount of pruning can significantly degrade the reconstructed wavefunction.

How It Works

The team trained RBMs on data from the one-dimensional transverse-field Ising model (TFIM), a workhorse of quantum physics. It describes a chain of interacting qubits subject to a tunable magnetic field, with two distinct phases. Spins in the ferromagnetic phase align like compass needles; in the paramagnetic phase, quantum randomness prevents any stable alignment. Between them lies a quantum critical point, a transition driven purely by quantum mechanics.
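For reference, the TFIM Hamiltonian is commonly written in the form below. The sign convention, the symbols J (coupling) and h (field strength), and the open-chain boundary are assumptions here; the paper may use a different but equivalent form.

```latex
H = -J \sum_{i=1}^{N-1} \sigma^z_i \sigma^z_{i+1} \;-\; h \sum_{i=1}^{N} \sigma^x_i
```

In this convention the ferromagnetic phase corresponds to h/J < 1 and the paramagnetic phase to h/J > 1. In the thermodynamic limit the quantum critical point sits at h/J = 1.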

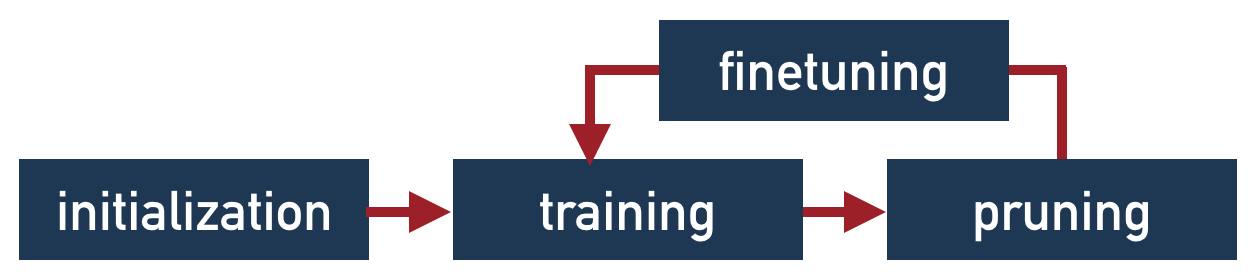

They used DMRG (Density Matrix Renormalization Group), a standard numerical method for finding the lowest-energy state of a quantum system, to generate realistic measurement samples. Training used the QuCumber software package. After training, they applied iterative magnitude-based pruning: find the weights smallest in magnitude, zero them out, let the remaining weights readjust, repeat. The same strategy is used to compress deep learning models for smartphones and edge devices.
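The prune-and-readjust loop can be sketched roughly as follows. This is a generic magnitude-pruning sketch, not the authors' implementation: the pruning fraction, the number of rounds, and the `retrain` callback (a hypothetical stand-in for further training steps) are all assumptions.

```python
import numpy as np

def prune_step(W, mask, fraction):
    """Deactivate the smallest-magnitude `fraction` of the still-active weights."""
    active = np.flatnonzero(mask)              # flat indices of surviving weights
    n_prune = int(np.floor(fraction * active.size))
    if n_prune == 0:
        return mask
    magnitudes = np.abs(W.flat[active])
    drop = active[np.argsort(magnitudes)[:n_prune]]
    new_mask = mask.copy()
    new_mask.flat[drop] = False
    return new_mask

def iterative_prune(W, retrain, fraction=0.1, rounds=5):
    """Alternate pruning and retraining; pruned weights stay at zero."""
    mask = np.ones_like(W, dtype=bool)
    for _ in range(rounds):
        mask = prune_step(W, mask, fraction)
        # `retrain` is a hypothetical callback: it receives the masked weights
        # and the mask, and returns updated weights of the same shape.
        W = retrain(W * mask, mask) * mask
    return W, mask

# Usage with a placeholder "retraining" that leaves the weights unchanged.
W = np.array([[0.1, -2.0], [3.0, -0.05]])
W_pruned, mask = iterative_prune(W, retrain=lambda W, mask: W, fraction=0.5, rounds=1)
print(W_pruned)
print("fraction of weights kept:", mask.mean())
```

Re-applying the mask after each retraining round keeps the removed connections at zero, so the network stays sparse while the surviving weights adapt.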

How much pruning can an RBM tolerate? They tracked four observables:

- KL divergence: how well the learned distribution matches the true one

- Fidelity: the overlap between the RBM wavefunction and the true ground state

- Energy: the system’s average energy, computed from the Hamiltonian (the operator that defines the system’s energy and governs how it evolves)

- Two-point correlation function: how spins are correlated across the chain
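For a system small enough to enumerate every basis state, three of these quantities can be computed directly, as in the sketch below. The function names, the convention of fidelity as the squared overlap, and the mapping of outcomes 0/1 to spins +1/-1 are assumptions; the paper's exact definitions may differ. The energy is omitted because it also needs the off-diagonal part of the Hamiltonian.

```python
import numpy as np

def kl_divergence(p_true, p_model):
    """KL(p_true || p_model) over the support of p_true."""
    p_true = np.asarray(p_true, dtype=float)
    p_model = np.asarray(p_model, dtype=float)
    support = p_true > 0
    return float(np.sum(p_true[support] * np.log(p_true[support] / p_model[support])))

def fidelity(psi_true, psi_model):
    """Squared overlap |<psi_true|psi_model>|^2 of two normalized state vectors."""
    return float(np.abs(np.vdot(psi_true, psi_model)) ** 2)

def two_point_correlation(samples, d):
    """Average of s_i * s_(i+d) over samples and sites, for distance d >= 1.

    samples: (n_samples, n_sites) array of 0/1 outcomes, mapped to spins +1/-1.
    """
    spins = 1 - 2 * np.asarray(samples)
    return float(np.mean(spins[:, :-d] * spins[:, d:]))

psi_true = np.array([1.0, 0.0])
psi_model = np.array([1.0, 1.0]) / np.sqrt(2)
print("KL:", kl_divergence(psi_true ** 2, psi_model ** 2))
print("fidelity:", fidelity(psi_true, psi_model))
print("C(1):", two_point_correlation(np.array([[0, 1, 0, 1]]), d=1))
```

Each quantity probes a different aspect of the learned state, so evaluating them side by side after every pruning round shows which one degrades first.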

In the ferromagnetic phase, pruning more than half the weights left all four observables intact. The RBM had learned a redundant representation. The extra connections were genuinely superfluous.

At the critical point, things break down. Quantum correlations become long-ranged, stretching across the entire system with no preferred scale. Capturing this structure requires full connectivity, and removing even a small fraction of weights caused measurable degradation.

Different observables broke at different pruning thresholds, too. The energy might look fine while correlation functions had already degraded significantly. A single metric can give false confidence. You need the full set of relevant physical observables, not just training loss.

The team also tried a more direct approach: start sparse and train from scratch, skipping the expensive detour of building a full model first. It works, but the sparsity pattern matters. A random sparse network fails. One informed by the known locality of TFIM interactions succeeds.
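One hypothetical way to build a locality-informed sparsity pattern is to let each hidden unit connect only to a window of nearby sites along the chain. The sketch below illustrates that idea. The window construction and the `width` parameter are assumptions for illustration and are not claimed to be the pattern used in the paper.

```python
import numpy as np

def local_mask(n_visible, n_hidden, width):
    """Boolean (n_hidden, n_visible) mask of allowed connections.

    Hidden units are given evenly spaced centres along the chain, and each
    one connects only to visible sites within `width` of its centre.
    """
    centres = np.linspace(0, n_visible - 1, n_hidden)
    sites = np.arange(n_visible)
    return np.abs(sites[None, :] - centres[:, None]) <= width

mask = local_mask(n_visible=8, n_hidden=8, width=1)
print(mask.astype(int))
print("fraction of weights kept:", mask.mean())

# A sparse RBM would be trained with weights W * mask, re-applying the
# mask after every update so that excluded connections stay at zero.
```

A random mask with the same density can be generated for comparison, which separates the effect of how many weights are kept from which ones are kept.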

Why It Matters

How well RBM representations compress determines how large a quantum system we can feasibly study. If a given phase admits highly sparse representations, computational resources can target only where quantum complexity actually lives.

Quantum physics also offers something unusual here: a test bed where “correct” has a rigorous definition. The known Hamiltonian specifies the exact ground state, so reconstruction accuracy is measurable with mathematical precision. Few other machine learning domains have this, making quantum state reconstruction a sharp setting for understanding when pruning works and when it doesn’t.

That pruning sensitivity tracks quantum phase structure is itself a physics result. It ties the physical complexity of a quantum state to the information-theoretic structure of the network representing it. Future work might ask whether pruning criteria based on physical symmetries, or on quantum entanglement (correlations linking distant qubits that have no classical analogue), could extend sparse RBMs into the critical regime.

Bottom Line: Pruning half an RBM’s weights is safe in a gapped quantum phase, but at a quantum critical point even light pruning costs accuracy. This matters both for scaling up quantum state reconstruction and for the theory of neural network compression.

IAIFI Research Highlights

This work connects classical machine learning compression to the physical structure of quantum phases, showing that neural network compressibility tracks the entanglement complexity of the underlying quantum state.

Standard pruning metrics can be misleading for generative models: different physical observables fail at different sparsity thresholds. This motivates physically informed pruning criteria for quantum ML.

By mapping how RBM pruning sensitivity varies across the quantum phase diagram of the transverse-field Ising model, the work reveals a concrete signature of quantum criticality in neural network structure.

Future directions include developing sparsity patterns informed by physical symmetries and extending these results to two-dimensional systems and experimental quantum devices. The full paper is available at [arXiv:2110.03676](https://arxiv.org/abs/2110.03676).

Original Paper Details

Pruning a restricted Boltzmann machine for quantum state reconstruction

2110.03676

Anna Golubeva, Roger G. Melko
