Overcoming the Spectral Bias of Neural Value Approximation

Authors

Ge Yang, Anurag Ajay, Pulkit Agrawal

Abstract

Value approximation using deep neural networks is at the heart of off-policy deep reinforcement learning, and is often the primary module that provides learning signals to the rest of the algorithm. While multi-layer perceptron networks are universal function approximators, recent works in neural kernel regression suggest the presence of a spectral bias, where fitting high-frequency components of the value function requires exponentially more gradient update steps than the low-frequency ones. In this work, we re-examine off-policy reinforcement learning through the lens of kernel regression and propose to overcome such bias via a composite neural tangent kernel. With just a single line-change, our approach, the Fourier feature networks (FFN), produces state-of-the-art performance on challenging continuous control domains with only a fraction of the compute. Faster convergence and better off-policy stability also make it possible to remove the target network without suffering catastrophic divergences, which further reduces TD(0)'s estimation bias on a few tasks.

Concepts

The Big Picture

Imagine tuning a radio receiver that only picks up bass frequencies. You’d catch the rhythm, maybe some melody, but the high-pitched details that make music rich and distinctive would be a blur. Now imagine this is your brain learning chess: you can grasp broad strategic ideas, but the subtle tactical patterns that separate a grandmaster from a club player stay perpetually fuzzy, no matter how long you study.

Neural networks in reinforcement learning have this same problem. When an AI agent learns to play a game or control a robot, it builds a value function, an internal map that scores every possible situation and tells the agent how favorable its position is. Deep neural networks approximate this function across vast, continuous spaces, but they carry a subtle flaw: they learn smooth, slowly varying patterns quickly, while sharp, high-frequency details take exponentially more training steps to acquire. Sometimes those details never get learned at all.

Researchers at MIT’s CSAIL and IAIFI identified this tendency, called spectral bias, as a root cause of inefficiency in deep reinforcement learning. Their fix requires a single line of code.

Key Insight: Standard neural networks are biased toward low-frequency value functions, causing them to miss high-frequency structure. Replacing the input layer with random Fourier features reshapes the learning dynamics across the full frequency spectrum, producing faster and more stable value approximation.

How It Works

The theoretical backbone comes from neural tangent kernel (NTK) theory, a framework that characterizes what kinds of patterns a neural network learns efficiently and which it struggles with. The core finding: training via gradient descent doesn’t learn all patterns at the same rate. Low-frequency components (gradual, broad trends) converge quickly. High-frequency components (sharp, rapid variations) converge exponentially slowly.
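To make the rate gap concrete, here is the standard kernel-regression picture, written as a sketch under simplifying assumptions: squared loss, gradient flow, and a kernel that stays fixed during training. The symbols $K$, $\lambda_i$, $v_i$, $\eta$ and $r_t$ are notation introduced here for illustration, not taken from the paper. Let $K$ be the kernel Gram matrix on the training inputs with eigenpairs $(\lambda_i, v_i)$, and let $r_t = f_t - y$ be the residual between predictions and targets at time $t$:

$$
\frac{d r_t}{dt} = -\eta K r_t
\quad\Longrightarrow\quad
v_i^\top r_t = e^{-\eta \lambda_i t}\, v_i^\top r_0,
\qquad
t_i(\varepsilon) = \frac{\ln(1/\varepsilon)}{\eta \lambda_i}.
$$

Here $t_i(\varepsilon)$ is the time needed to shrink the error along $v_i$ by a factor $\varepsilon$. Each direction converges at a rate set by its own eigenvalue, so if the eigenvalues attached to high-frequency directions are orders of magnitude smaller, those directions need correspondingly more training time.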

For value functions, this is trouble. Value functions tend to be complex and jagged because of the recursive structure of Bellman equations, the mathematical rules that govern how an agent’s value estimates feed into one another.
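For reference, the recursion in question is the Bellman optimality equation for the action-value function. The notation below (reward $r$, discount factor $\gamma \in [0, 1)$, next state $s'$) is the standard textbook form, not something specific to this article, and this $\gamma$ is unrelated to the embedding $\gamma(\mathbf{x})$ used later:

$$
Q^*(s, a) = \mathbb{E}_{s'}\!\left[\, r(s, a) + \gamma \max_{a'} Q^*(s', a') \,\right]
$$

Because the $\max$ switches between actions, $Q^*$ can have kinks wherever the best action changes, even when the rewards and dynamics themselves are smooth.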

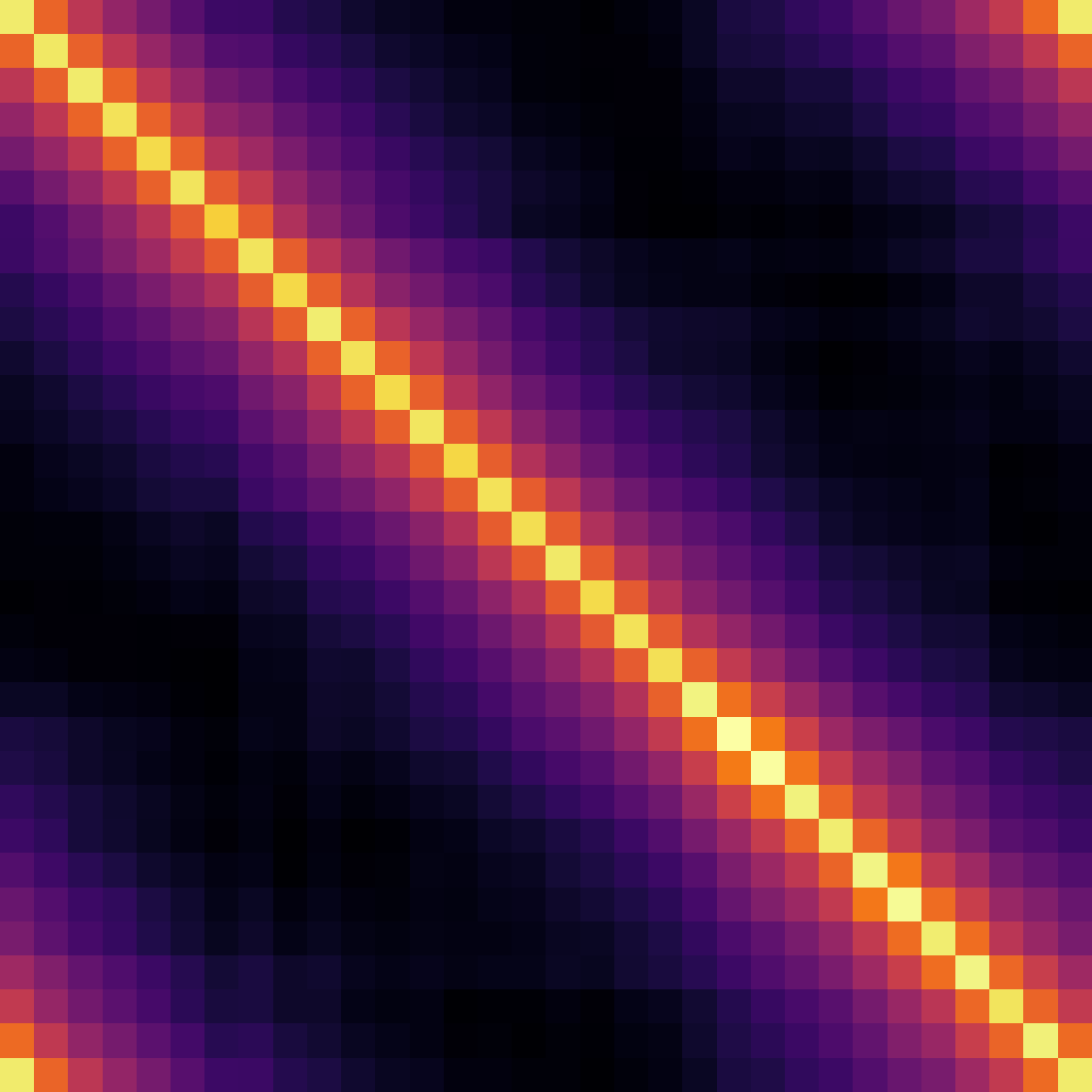

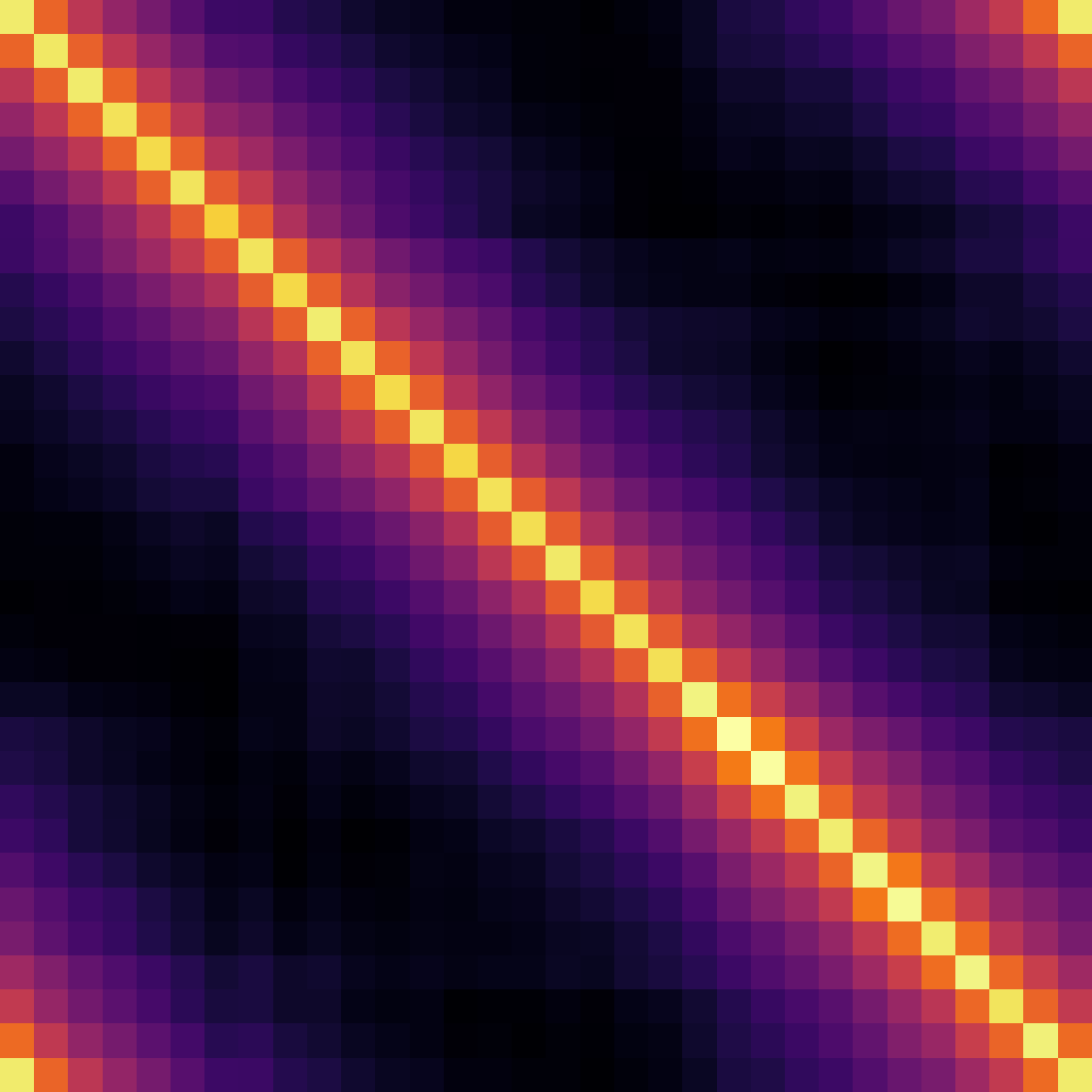

Toy experiments make this concrete. A standard 4-layer multi-layer perceptron (MLP) trained with fitted Q-iteration produces a smoothed-out, blurry approximation of the true value function. Making the network three times deeper or training five times longer doesn’t help. The architecture can’t capture the sharp value structure agents actually need.
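As a rough illustration of the fitted Q-iteration loop itself, the sketch below alternates between computing Bellman targets and regressing onto them. It is not the paper's experiment: it assumes a hypothetical 5-state deterministic chain and swaps the MLP for linear least squares on one-hot features, so the regression step is exact. The function name `fitted_q_iteration` and all arguments are illustrative.

```python
import numpy as np

def fitted_q_iteration(features, next_state, reward, gamma=0.9, n_iters=200):
    """Fitted Q-iteration with a linear least-squares regressor.

    features:   (S, A, D) feature vector for every state-action pair
    next_state: (S, A) integer index of the deterministic successor state
    reward:     (S, A) immediate reward
    """
    S, A, D = features.shape
    X = features.reshape(S * A, D)
    w = np.zeros(D)
    for _ in range(n_iters):
        q = (X @ w).reshape(S, A)
        # Bellman targets: r + gamma * max_a' Q(s', a'), using the current fit.
        targets = reward + gamma * q[next_state].max(axis=-1)
        # Regression step: refit the weights to the new targets.
        w, *_ = np.linalg.lstsq(X, targets.reshape(-1), rcond=None)
    return (X @ w).reshape(S, A)

# Hypothetical toy problem: a chain of 5 states, action 0 = left, 1 = right,
# with reward 1 whenever the move lands on the right-most state.
S, A = 5, 2
states = np.arange(S)
next_state = np.stack([np.maximum(states - 1, 0),
                       np.minimum(states + 1, S - 1)], axis=1)
reward = (next_state == S - 1).astype(float)
features = np.eye(S * A).reshape(S, A, S * A)  # one-hot, so the fit is exact

q = fitted_q_iteration(features, next_state, reward)
```

With one-hot features the loop reduces to plain value iteration. Replacing the regressor with a function approximator that cannot fit the targets exactly is where a smoothness bias would show up.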

The solution borrows from computer graphics, where researchers hit the same wall when neural networks tried to represent fine 3D scene details. The fix is to transform raw inputs through random Fourier features: sinusoidal functions at randomly sampled frequencies that lift low-dimensional inputs into a higher-dimensional, oscillation-rich space. Mathematically, this reshapes the network’s learning profile from a broad low-pass filter into a composite kernel tunable across a wide frequency range.

The resulting architecture, Fourier Feature Networks (FFN), works like this:

- Take the state (and action) as input

- Apply a fixed random Fourier feature embedding: $\gamma(\mathbf{x}) = [\sin(\mathbf{B}\mathbf{x}), \cos(\mathbf{B}\mathbf{x})]$, where $\mathbf{B}$ is a matrix of randomly sampled frequencies

- Feed the embedded input into a standard MLP

- Train with the same off-policy RL algorithm as before

The embedding is fixed, not trained, so the overhead is negligible. But the effect on learning dynamics is real. The network gains localized support: gradient updates for one state-action pair no longer bleed over and corrupt estimates for distant ones, which helps stabilize training.
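The steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the helper names (`sample_frequencies`, `fourier_features`, `init_mlp`, `q_value`), the Gaussian frequency scale, the layer widths, and the initialization are all hypothetical choices.

```python
import numpy as np

def sample_frequencies(rng, n_features, input_dim, scale=1.0):
    """Draw the frequency matrix B once; it is never updated afterwards."""
    return rng.normal(0.0, scale, size=(n_features, input_dim))

def fourier_features(x, B):
    """gamma(x) = [sin(Bx), cos(Bx)] for a batch x of shape (N, input_dim)."""
    proj = x @ B.T
    return np.concatenate([np.sin(proj), np.cos(proj)], axis=-1)

def init_mlp(rng, sizes):
    """Dense-layer parameters for an ordinary MLP (assumed He-style init)."""
    return [(rng.normal(0.0, np.sqrt(2.0 / m), size=(m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]

def q_value(params, B, state_action):
    """Q(s, a): Fourier embedding followed by a ReLU MLP with a scalar head."""
    h = fourier_features(state_action, B)  # the only change from a plain MLP
    for W, b in params[:-1]:
        h = np.maximum(h @ W + b, 0.0)
    W, b = params[-1]
    return (h @ W + b).squeeze(-1)

rng = np.random.default_rng(0)
state_dim, action_dim, n_features = 4, 1, 64
B = sample_frequencies(rng, n_features, state_dim + action_dim)
params = init_mlp(rng, [2 * n_features, 256, 256, 1])

batch = rng.normal(size=(8, state_dim + action_dim))  # concatenated (s, a)
q = q_value(params, B, batch)
```

Only `params` would be passed to the optimizer. `B` is sampled once and held constant, which is why the embedding adds little cost beyond one extra matrix product per forward pass.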

Why It Matters

On continuous control benchmarks (physics-simulated locomotion and manipulation tasks that stress-test modern RL algorithms), FFN achieves state-of-the-art results with a fraction of the compute. Faster convergence means the agent reaches the same performance level in fewer environment interactions, directly cutting training time and sample requirements.

The most surprising result is what becomes possible once learning stabilizes: the target network can be dropped. A target network, a second, slowly updated copy of the value network used to generate stable training targets, has been standard in deep RL since DQN. It exists because value network training tends to spiral into catastrophic divergence without it.
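For readers unfamiliar with the mechanism, here is a minimal sketch of how a target network typically enters the update. It assumes Polyak (exponential moving average) updates, which are common in continuous-control algorithms, whereas DQN itself copies the weights periodically. The function names and the values of `tau` and `gamma` are illustrative.

```python
import numpy as np

def td_target(reward, next_q_max, gamma=0.99, done=0.0):
    """One-step TD target: r + gamma * max_a' Q(s', a'), cut off at episode end."""
    return reward + gamma * (1.0 - done) * next_q_max

def polyak_update(target_params, online_params, tau=0.005):
    """Move each target parameter a small step toward its online counterpart."""
    return [(1.0 - tau) * t + tau * o
            for t, o in zip(target_params, online_params)]

# With a target network, next_q_max is computed from the lagging copy.
# Without one, it would come from the same parameters being trained.
online = [np.ones(3)]
target = [np.zeros(3)]
target = polyak_update(target, online, tau=0.1)

y = td_target(reward=1.0, next_q_max=2.0, gamma=0.9)
```

Dropping the target network amounts to computing `next_q_max` from the online parameters and deleting the `polyak_update` step.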

With FFN’s improved stability, the authors show successful training without this safety net on a few tasks. Removing the target network also reduces estimation bias, since bootstrapping from slowly updated, lagging parameters introduces a systematic distortion in the targets. On those tasks, this further improves accuracy.

Spectral bias is a fundamental property of how neural networks learn, not an artifact of any particular algorithm. Any system using neural value approximation, from robotics to game-playing, potentially suffers from it. The Fourier feature fix is cheap, principled, and compatible with essentially any existing RL framework.

Open questions remain. How should one choose the frequency distribution for the Fourier features? Random Gaussian sampling works well, but a learned or adaptive distribution might do better. And while removing the target network succeeds on some tasks, understanding exactly when it’s safe is still an active area of research.
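One way to see why the frequency distribution matters: for Gaussian frequencies with standard deviation $\sigma$, the normalized inner product of two embeddings approximates the Gaussian kernel $\exp(-\sigma^2 \lVert x - y \rVert^2 / 2)$, a classical random-features result. The sketch below checks this numerically for the feature layer alone. It says nothing about the full network's tangent kernel, and the points, scales, and feature count are arbitrary choices for illustration.

```python
import numpy as np

def fourier_features(x, B):
    """gamma(x) = [sin(Bx), cos(Bx)] for a single input vector x."""
    proj = x @ B.T
    return np.concatenate([np.sin(proj), np.cos(proj)], axis=-1)

def empirical_kernel(x, y, B):
    """Normalized inner product of embeddings: the mean of cos(b . (x - y))."""
    return fourier_features(x, B) @ fourier_features(y, B) / B.shape[0]

def gaussian_kernel(x, y, scale):
    """Limit of empirical_kernel as the number of frequencies grows."""
    return np.exp(-0.5 * scale ** 2 * np.sum((x - y) ** 2))

rng = np.random.default_rng(0)
x = np.array([0.3, -0.1])
y = np.array([0.8, 0.4])

results = []
for scale in (0.5, 2.0, 8.0):
    B = rng.normal(0.0, scale, size=(20000, 2))
    results.append((scale, empirical_kernel(x, y, B), gaussian_kernel(x, y, scale)))
```

A larger `scale` makes the kernel narrower, so two nearby inputs look less alike to the feature layer. A smaller `scale` pushes it back toward a broad, smooth kernel.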

Bottom Line: A single-line fix, replacing the input layer of a value network with random Fourier features, addresses a fundamental bias in deep reinforcement learning. It delivers state-of-the-art performance at reduced compute while opening up training regimes that were previously too unstable to attempt.

IAIFI Research Highlights

This work pulls theoretical tools from machine learning theory (neural tangent kernels) and practical techniques from computer graphics (Fourier feature embeddings) into reinforcement learning, the kind of cross-domain synthesis that IAIFI encourages.

Fourier Feature Networks match or beat the state of the art on continuous control benchmarks with less compute and enable stable training without the long-standard target network, improving both practical efficiency and theoretical understanding of how neural networks approximate value functions.

A more principled approach to neural function approximation could extend to physics-motivated AI applications, including those used to model complex dynamical systems in fundamental physics research.

Future work will explore adaptive frequency selection and broader applications across RL domains. The paper appeared at ICLR 2022 ([arXiv:2206.04672](https://arxiv.org/abs/2206.04672)) and code is available at [geyang.github.io/ffn](https://geyang.github.io/ffn). The theoretical analysis opens directions for further research into neural kernel methods in sequential decision-making.