Normalizing flows for lattice gauge theory in arbitrary space-time dimension

Authors

Ryan Abbott, Michael S. Albergo, Aleksandar Botev, Denis Boyda, Kyle Cranmer, Daniel C. Hackett, Gurtej Kanwar, Alexander G. D. G. Matthews, Sébastien Racanière, Ali Razavi, Danilo J. Rezende, Fernando Romero-López, Phiala E. Shanahan, Julian M. Urban

Abstract

Applications of normalizing flows to the sampling of field configurations in lattice gauge theory have so far been explored almost exclusively in two space-time dimensions. We report new algorithmic developments of gauge-equivariant flow architectures facilitating the generalization to higher-dimensional lattice geometries. Specifically, we discuss masked autoregressive transformations with tractable and unbiased Jacobian determinants, a key ingredient for scalable and asymptotically exact flow-based sampling algorithms. For concreteness, results from a proof-of-principle application to SU(3) lattice gauge theory in four space-time dimensions are reported.

Concepts

The Big Picture

Imagine trying to map every possible configuration of a jigsaw puzzle with trillions of pieces, then figure out which configurations are physically meaningful. That, in rough terms, is the sampling problem at the heart of lattice quantum chromodynamics (LQCD), the computational framework physicists use to study quarks, gluons, and the strong force from first principles.

The puzzle pieces are quantum fields: values assigned to every point on a discrete grid representing space and time. Traditional algorithms struggle as the lattice grows larger and finer. They suffer from critical slowing-down, where successive configurations stay strongly correlated: the simulation effectively cannot forget where it started, and progress grinds to a near halt.

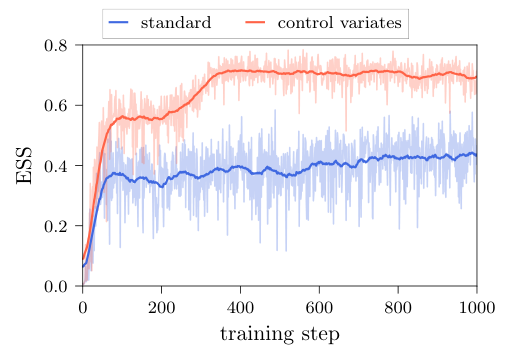

Machine learning offers a way out. Normalizing flows are generative models that produce physically valid field configurations in a single step rather than incrementally. Instead of proposing small changes and waiting for the simulation to wander through configuration space, a flow conjures a completely new configuration at once, bypassing the correlated-sample bottleneck that cripples traditional methods.

The catch: nearly all flow-based work in lattice gauge theory has only been demonstrated in two space-time dimensions. The real world has four. Going from two to four dimensions isn’t a matter of turning a dial. The geometry, symmetry constraints, and computational costs all scale brutally.

A team spanning MIT, NYU, the University of Wisconsin–Madison, Bern, and DeepMind now reports algorithmic advances that bring four-dimensional flow-based sampling within reach. They provide proof-of-principle results for SU(3) gauge theory, the mathematical structure underlying the strong force.

Key Insight: By designing flow architectures that respect the symmetries of gauge theory and compute Jacobians efficiently via masking, the team has opened a path to asymptotically exact sampling in four-dimensional lattice QCD.

How It Works

The central object is a normalizing flow: a learned, invertible function that maps samples from a simple “prior” distribution (a starting population of random, unphysical configurations) to configurations that look as if they were drawn from the physically correct distribution. For exact probability evaluation, the flow must allow efficient computation of its Jacobian determinant, a bookkeeping device that tracks how the transformation stretches or compresses different regions of a high-dimensional space. For large systems, this computation can become ruinously expensive.
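The role of the Jacobian determinant can be made concrete with the standard change-of-variables formula. The notation here is an assumption for illustration and is not taken from the paper: $z$ is a sample from the prior with density $r(z)$, $f$ is the invertible flow, and $U = f(z)$ is the output configuration with model density $q(U)$.

$$
q(U) = r(z)\,\left|\det \frac{\partial f(z)}{\partial z}\right|^{-1}, \qquad U = f(z).
$$

For a generic dense $N \times N$ Jacobian the determinant costs $O(N^3)$ operations, which is why architectures whose Jacobians have exploitable structure are needed once $N$ becomes large. For group-valued link variables the derivative is understood relative to the Haar measure rather than ordinary Euclidean coordinates.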

The team attacks this with two complementary flow architectures:

- Spectral flows transform the eigenvalue spectra of untraced Wilson loops (products of gauge link matrices around closed paths on the lattice). Think of a Wilson loop as “going around the block”: multiply field values along a closed path and extract eigenvalues that describe how the field twists space. These eigenvalues encode gauge-invariant information, and manipulating them lets the flow reshape the distribution while automatically obeying SU(3) symmetry constraints. Because the transformation acts on a lower-dimensional object (the spectrum, not the full matrix), the Jacobian stays manageable.

- Residual flows update gauge links directly using gradient information from a learned potential function. Each step is a small, invertible perturbation whose Jacobian can be estimated efficiently, without ever computing the full matrix.
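The gauge invariance of a Wilson-loop spectrum can be checked numerically. The sketch below is a toy under stated assumptions, not the paper's code: it uses SU(2) instead of SU(3) to keep the matrices small, considers a single 1×1 loop (a plaquette), and all function names are hypothetical. It builds the untraced loop from four links, applies an independent random gauge rotation at each corner, and compares the eigenvalue angle before and after.

```python
import math
import random

def random_su2(rng):
    """Random SU(2) matrix [[a, b], [-conj(b), conj(a)]] with |a|^2 + |b|^2 = 1."""
    v = [rng.gauss(0.0, 1.0) for _ in range(4)]
    norm = math.sqrt(sum(c * c for c in v))
    a = complex(v[0], v[1]) / norm
    b = complex(v[2], v[3]) / norm
    return [[a, b], [-b.conjugate(), a.conjugate()]]

def matmul(x, y):
    """Product of two 2x2 complex matrices."""
    return [[sum(x[i][k] * y[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def dagger(x):
    """Conjugate transpose of a 2x2 complex matrix."""
    return [[x[j][i].conjugate() for j in range(2)] for i in range(2)]

def plaquette(u_bottom, u_right, u_top, u_left):
    """Untraced 1x1 Wilson loop based at the lower-left corner x."""
    p = matmul(u_bottom, u_right)
    p = matmul(p, dagger(u_top))
    return matmul(p, dagger(u_left))

def spectral_angle(p):
    """SU(2) eigenvalues are exp(+i*theta) and exp(-i*theta); theta follows from the trace."""
    half_trace = (p[0][0] + p[1][1]).real / 2.0
    return math.acos(max(-1.0, min(1.0, half_trace)))

def gauge_transform(link, omega_start, omega_end):
    """U -> Omega(start) U Omega(end)^dagger for a link pointing start -> end."""
    return matmul(matmul(omega_start, link), dagger(omega_end))

rng = random.Random(1)
u_bottom, u_right, u_top, u_left = (random_su2(rng) for _ in range(4))
# One independent gauge rotation per corner: x, x+mu, x+mu+nu, x+nu.
w0, w1, w2, w3 = (random_su2(rng) for _ in range(4))

theta_before = spectral_angle(plaquette(u_bottom, u_right, u_top, u_left))
theta_after = spectral_angle(plaquette(
    gauge_transform(u_bottom, w0, w1),
    gauge_transform(u_right, w1, w2),
    gauge_transform(u_top, w3, w2),
    gauge_transform(u_left, w0, w3),
))
print(theta_before, theta_after)
```

The two angles agree up to floating-point error because the untraced loop only changes by conjugation with the rotation at its base point, which leaves eigenvalues untouched. A spectral flow acts on exactly this kind of invariant data.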

Both architectures rely on masked autoregressive decomposition to make the math tractable. The lattice is partitioned into subsets, and one subset is updated at a time while the others are held fixed and supply the conditioning information. This masking guarantees a block-triangular Jacobian at each step, so its determinant reduces to a product of the determinants of much smaller diagonal blocks. That avoids a dense determinant over all variables at once, whose cost would grow cubically with the number of variables.
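A minimal sketch of why masking makes the determinant cheap, under loud simplifying assumptions: the variables are real numbers on a periodic one-dimensional chain rather than SU(3) links, the mask is a simple even/odd checkerboard, and the `context` function is a fixed hypothetical stand-in for a neural network. None of this is the paper's architecture. Odd sites are rescaled and shifted using only their frozen even neighbors, so the log-determinant is just the sum of the log-scales, which the code checks against a brute-force finite-difference Jacobian.

```python
import math

def context(x, i):
    """Toy stand-in for a network: depends only on the two frozen neighbours (periodic)."""
    n = len(x)
    left, right = x[(i - 1) % n], x[(i + 1) % n]
    s = 0.5 * math.tanh(left + right)   # log-scale
    t = 0.3 * math.sin(left - right)    # shift
    return s, t

def coupling_forward(x):
    """Update odd sites conditioned on even sites; return (y, log|det J|)."""
    y, logdet = list(x), 0.0
    for i in range(1, len(x), 2):
        s, t = context(x, i)
        y[i] = x[i] * math.exp(s) + t
        logdet += s                     # diagonal entry of the triangular Jacobian
    return y, logdet

def coupling_inverse(y):
    """Invert the layer; frozen sites are unchanged, so the context can be recomputed."""
    x = list(y)
    for i in range(1, len(y), 2):
        s, t = context(y, i)
        x[i] = (y[i] - t) * math.exp(-s)
    return x

def numeric_logdet(f, x, eps=1e-6):
    """Brute-force log|det J| via central differences and Gaussian elimination."""
    n = len(x)
    jac = [[0.0] * n for _ in range(n)]
    for j in range(n):
        xp, xm = list(x), list(x)
        xp[j] += eps
        xm[j] -= eps
        yp, ym = f(xp), f(xm)
        for i in range(n):
            jac[i][j] = (yp[i] - ym[i]) / (2.0 * eps)
    logabs = 0.0
    for c in range(n):
        pivot = max(range(c, n), key=lambda r: abs(jac[r][c]))
        jac[c], jac[pivot] = jac[pivot], jac[c]
        logabs += math.log(abs(jac[c][c]))
        for r in range(c + 1, n):
            factor = jac[r][c] / jac[c][c]
            for k in range(c, n):
                jac[r][k] -= factor * jac[c][k]
    return logabs

x0 = [0.3, -1.2, 0.8, 0.5, -0.4, 1.1]   # even length keeps the periodic checkerboard consistent
y0, logdet_cheap = coupling_forward(x0)
x_back = coupling_inverse(y0)
logdet_brute = numeric_logdet(lambda v: coupling_forward(v)[0], x0)
print(logdet_cheap, logdet_brute)
```

The cheap value costs one pass over the updated sites, while the brute-force check needs the full Jacobian. A second layer with the roles of even and odd sites swapped would be required to update every variable.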

Getting the masking patterns right is nontrivial. The patterns must respect the periodic boundary conditions and gauge invariance of the lattice, and the constraints differ between two and four dimensions.

Four-dimensional geometry also introduces new design choices: how to tile the lattice with masks, how to parameterize the neural networks generating context-dependent transformations, and how to schedule training. The paper lays out these choices systematically and reports what works and what doesn’t.

Why It Matters

LQCD calculations are the gold standard for testing the Standard Model, connecting quark-level descriptions to measurable quantities like proton structure, nuclear binding energies, and rare decay rates. But they are bottlenecked by sampling efficiency.

The problem is worst at fine lattice spacings. There, topological freezing traps the simulation in a single topological sector (a broad class of field configurations sharing the same global winding structure) for very long stretches of simulation time, so estimates from any practical run are biased. Flow-based samplers that generate statistically independent configurations would solve this directly.
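A trained flow is never a perfect match to the target, so independent proposals are usually combined with a correction step to keep the sampling asymptotically exact. One standard option is an independence Metropolis accept/reject step, sketched below for a one-dimensional toy problem. This is an illustration under assumptions, not the scheme or code of the paper: `action` is a hypothetical toy target, and `sample_model` is a fixed Gaussian standing in for a trained flow that returns both a sample and its log-density.

```python
import math
import random

def action(x):
    """Toy target: p(x) is proportional to exp(-action(x))."""
    return 0.25 * x ** 4

def sample_model(rng):
    """Stand-in for a trained flow: draw x from q = N(0, 1), return (x, log q(x))."""
    x = rng.gauss(0.0, 1.0)
    log_q = -0.5 * x * x - 0.5 * math.log(2.0 * math.pi)
    return x, log_q

def independence_metropolis(n_steps, seed=0):
    """Markov chain whose proposals are independent model samples."""
    rng = random.Random(seed)
    x, log_q = sample_model(rng)
    log_w = -action(x) - log_q              # log of the unnormalized weight p/q
    chain, accepted = [], 0
    for _ in range(n_steps):
        x_new, log_q_new = sample_model(rng)
        log_w_new = -action(x_new) - log_q_new
        # Accept with probability min(1, w_new / w_old); 1 - random() lies in (0, 1].
        if math.log(1.0 - rng.random()) < log_w_new - log_w:
            x, log_w = x_new, log_w_new
            accepted += 1
        chain.append(x)                     # a rejection repeats the current state
    return chain, accepted / n_steps

chain, acceptance = independence_metropolis(20000)
mean_x2 = sum(v * v for v in chain) / len(chain)
print(acceptance, mean_x2)
```

For this toy target the exact value of the second moment is $2\,\Gamma(3/4)/\Gamma(1/4) \approx 0.676$, which the chain average should approach even though the proposal distribution is a unit Gaussian. The acceptance rate measures how well the model matches the target: the closer the match, the fewer repeated states and the weaker the residual correlations.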

On the machine learning side, this work pushes generative modeling into structured, symmetry-constrained territory. Gauge equivariance (the requirement that flows commute with local symmetry transformations) cannot be satisfied by off-the-shelf architectures. The masked autoregressive framework developed here is general enough to apply to other non-Abelian gauge theories and potentially to field theories beyond the Standard Model. The unbiased Jacobian estimators for high-dimensional group-valued variables are themselves a useful contribution to the normalizing flows literature.

The big open question is scaling. Production LQCD calculations involve lattices requiring $O(10^{11})$ floating-point numbers per configuration. Whether flow architectures can reach these sizes, or be usefully combined with traditional HMC algorithms in hybrid schemes, remains to be seen.

Bottom Line: This team has cracked open the door to normalizing-flow sampling in four-dimensional SU(3) gauge theory by building flow architectures that are both symmetry-respecting and computationally tractable, a real step toward solving critical slowing-down in lattice QCD.

IAIFI Research Highlights

This work combines masked autoregressive generative modeling with the symmetry constraints of non-Abelian lattice gauge theories, showing that modern AI architectures can be re-engineered to respect the fundamental structure of quantum field theory.

The team's gauge-equivariant coupling layers, with provably tractable Jacobians, extend normalizing flow methodology to a new class of high-dimensional, group-valued distributions. The techniques apply to any domain that requires symmetry-preserving generative models.

By extending flow-based sampling to SU(3) in four space-time dimensions, this research offers a concrete route to eliminating topological freezing and critical slowing-down, the two primary computational obstacles to high-precision, first-principles calculations of hadron and nuclear physics.

Future work will focus on scaling these architectures to production-level lattice volumes and exploring hybrid algorithms that combine normalizing flows with traditional HMC. Full details appear at [arXiv:2305.02402](https://arxiv.org/abs/2305.02402).

Original Paper Details

Normalizing flows for lattice gauge theory in arbitrary space-time dimension

arXiv:2305.02402
