Noisy dynamical systems evolve error correcting codes and modularity

Authors

Trevor McCourt, Ila R. Fiete, Isaac L. Chuang

Abstract

Noise is a ubiquitous feature of the physical world. As a result, the first prerequisite of life is fault tolerance: maintaining integrity of state despite external bombardment. Recent experimental advances have revealed that biological systems achieve fault tolerance by implementing mathematically intricate error-correcting codes and by organizing in a modular fashion that physically separates functionally distinct subsystems. These elaborate structures represent a vanishing volume in the massive genetic configuration space. How is it possible that the primitive process of evolution, by which all biological systems evolved, achieved such unusual results? In this work, through experiments in Boolean networks, we show that the simultaneous presence of error correction and modularity in biological systems is no coincidence. Rather, it is a typical co-occurrence in noisy dynamic systems undergoing evolution. From this, we deduce the principle of error correction enhanced evolvability: systems possessing error-correcting codes are more effectively improved by evolution than those without.

The Big Picture

Imagine building a computer from unreliable parts. Transistors that randomly flip, wires that occasionally short, memory cells that forget. How would you design a system that computes reliably despite the noise? Now imagine you couldn’t design it at all. You had to let random trial-and-error find the answer over millions of generations. Most engineers would call that impossible. Evolution apparently didn’t get the memo.

Life is a noisy business. Every cell in your body is bombarded by random heat energy, chemical mishaps, and radiation damage to DNA. Yet biological systems don’t just cope with this chaos; they thrive in it, maintaining extraordinary accuracy across billions of generations.

The puzzle is this: the tools biology uses to pull this off (error-correcting codes that detect and fix mistakes, modular architecture that physically separates distinct functions) represent a vanishingly tiny fraction of all possible genetic designs. Finding them by random search seems almost miraculous.

A new study from MIT researchers Trevor McCourt, Ila Fiete, and Isaac Chuang offers a clean explanation: these structures aren’t improbable outcomes of evolution. They’re the expected ones. In noisy environments, evolutionary processes don’t just stumble upon error-correcting codes and modularity. They are systematically drawn toward them, because error correction itself makes organisms better at evolving.

How It Works

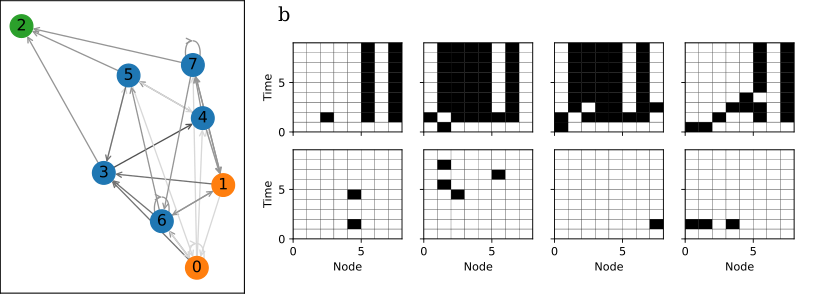

The team built their experiments around Boolean networks, a model originally developed to study how genes switch each other on and off. Picture a network of nodes, each either “on” or “off,” updating according to a logical rule that takes a handful of other nodes as inputs. Simple rules, chained across thousands of nodes, can perform surprisingly complex computations. These networks are cheap enough to simulate over millions of generations while still capturing essential features of how real biological regulatory systems behave.
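Here is a minimal sketch of the model described above. It assumes the classic random Boolean network setup: each node reads a fixed number `k` of randomly chosen nodes, looks up its next value in a random truth table, and all nodes update synchronously. The function names and parameters (`n`, `k`, `seed`) are illustrative choices, not the paper's code.

```python
import random


def random_boolean_network(n, k, seed=0):
    """Build a hypothetical random Boolean network: n nodes, each reading k inputs.

    Each node gets k distinct input nodes (chosen at random) and a random
    truth table with 2**k entries, one output bit per input combination.
    """
    rng = random.Random(seed)
    inputs = [rng.sample(range(n), k) for _ in range(n)]
    tables = [[rng.randint(0, 1) for _ in range(2 ** k)] for _ in range(n)]
    return inputs, tables


def step(state, inputs, tables):
    """Synchronously update every node from the current state."""
    new_state = []
    for node_inputs, table in zip(inputs, tables):
        # Pack the node's input bits into an integer that indexes its truth table.
        index = 0
        for i in node_inputs:
            index = (index << 1) | state[i]
        new_state.append(table[index])
    return new_state


inputs, tables = random_boolean_network(n=8, k=2, seed=42)
state = [0, 1, 0, 0, 1, 1, 0, 1]
for t in range(5):
    state = step(state, inputs, tables)
    print(t, state)
```

Because the update is deterministic, a noiseless network eventually falls into a repeating cycle of states; the experiments in the paper add noise on top of dynamics like these.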

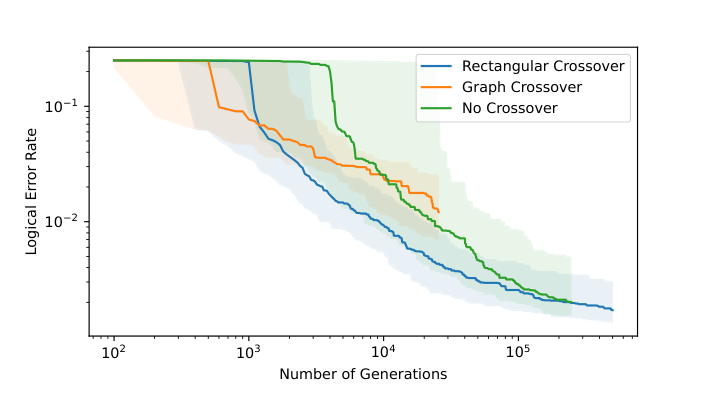

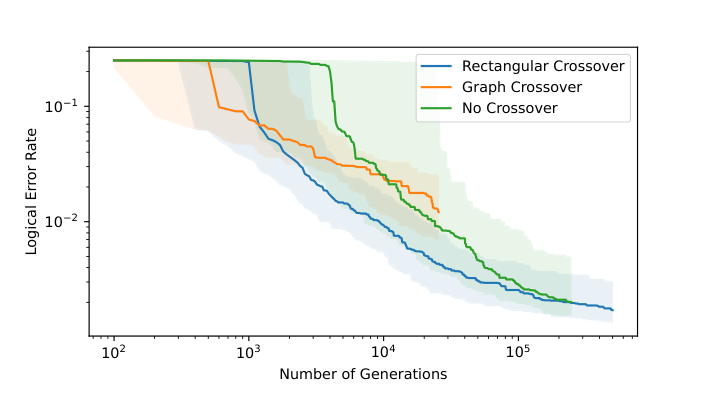

The researchers evolved these networks to perform primitive computational tasks (think simple reflexes or cell-state decisions) with noise cranked up. Networks that produced correct outputs despite the noise were more likely to survive; errors brought fitness penalties. Each generation, mutations tweaked the logical rules or rewired connections.
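The evolutionary loop can be sketched as a simple (1+1) hill climber. That selection scheme is an assumption here, not necessarily the one the authors used. The hypothetical task is to relay an input bit, clamped on node 0, to the last node after a few noisy update steps. Each node's output is flipped with probability `p`, and each mutation either flips one truth-table entry or rewires one input. All names and parameter values are illustrative.

```python
import random


def simulate(inputs, tables, x, steps, p, rng):
    """Run a noisy network on input bit x and return the last node's final bit."""
    n = len(tables)
    state = [x] + [0] * (n - 1)
    for _ in range(steps):
        new = [x]  # node 0 stays clamped to the input bit
        for node in range(1, n):
            index = 0
            for i in inputs[node]:
                index = (index << 1) | state[i]
            bit = tables[node][index]
            if rng.random() < p:
                bit ^= 1  # noise flips this node's output
            new.append(bit)
        state = new
    return state[-1]


def fitness(inputs, tables, steps, p, trials, rng):
    """Monte Carlo estimate: fraction of noisy trials where the output matches the input."""
    correct = 0
    for _ in range(trials):
        x = rng.randint(0, 1)
        correct += simulate(inputs, tables, x, steps, p, rng) == x
    return correct / trials


def mutate(inputs, tables, rng):
    """Return a mutated copy: one truth-table entry flipped or one input rewired."""
    inputs = [list(row) for row in inputs]
    tables = [list(row) for row in tables]
    node = rng.randrange(1, len(tables))  # never mutate the clamped input node
    if rng.random() < 0.5:
        tables[node][rng.randrange(len(tables[node]))] ^= 1
    else:
        inputs[node][rng.randrange(len(inputs[node]))] = rng.randrange(len(tables))
    return inputs, tables


def evolve(n=6, k=2, steps=4, p=0.02, generations=200, trials=100, seed=0):
    rng = random.Random(seed)
    inputs = [[rng.randrange(n) for _ in range(k)] for _ in range(n)]
    tables = [[rng.randint(0, 1) for _ in range(2 ** k)] for _ in range(n)]
    best = fitness(inputs, tables, steps, p, trials, rng)
    for _ in range(generations):
        child = mutate(inputs, tables, rng)
        f = fitness(*child, steps, p, trials, rng)
        if f >= best:  # ">=" lets fitness-neutral mutations drift through
            (inputs, tables), best = child, f
    return inputs, tables, best


_, _, final_fitness = evolve()
print(f"estimated fitness after evolution: {final_fitness:.2f}")
```

Accepting mutants that merely tie the parent (`>=` rather than `>`) lets neutral mutations accumulate, which is the ingredient the error correction-enhanced evolvability argument relies on. Because fitness is estimated from a finite number of noisy trials, the accepted score is itself noisy; a more careful version would re-evaluate the parent each generation.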

The same pattern showed up across different starting conditions and task setups. Networks evolved to implement error-correcting codes: structured redundancy that let them recover correct outputs even when noise scrambled internal states. This wasn’t a rare event. It happened almost universally, suggesting error correction is an attractor of noisy evolution, not a lucky accident. And when tasks had multiple components, networks spontaneously developed modular architectures, physically separating the sub-networks responsible for each sub-task.
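The simplest example of structured redundancy is a three-copy repetition code decoded by majority vote. It is far cruder than the codes the evolved networks found and appears here only to make the idea concrete. If each copy flips independently with probability p, the vote fails only when at least two copies flip, which happens with probability 3p²(1 − p) + p³ = 3p² − 2p³. That is smaller than p whenever p < 1/2; for p = 0.1 it is 0.028 instead of 0.1. The sketch below checks the formula by simulation.

```python
import random
from math import comb


def encode(bit, n=3):
    """Repetition code: store n copies of the bit."""
    return [bit] * n


def noisy_channel(codeword, p, rng):
    """Flip each stored bit independently with probability p."""
    return [b ^ (rng.random() < p) for b in codeword]


def decode(codeword):
    """Majority vote: correct whenever fewer than half of the copies flipped."""
    return int(sum(codeword) > len(codeword) // 2)


def exact_error(p, n=3):
    """Probability that a majority of n copies flip (n odd)."""
    return sum(comb(n, j) * p**j * (1 - p) ** (n - j) for j in range(n // 2 + 1, n + 1))


rng = random.Random(0)
p, trials = 0.1, 100_000
errors = sum(decode(noisy_channel(encode(1), p, rng)) != 1 for _ in range(trials))
print("unprotected bit error:", p)
print("3-copy majority error (simulated):", errors / trials)
print("3-copy majority error (exact):", exact_error(p))
```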

The mechanism behind all of this is a feedback loop the authors call error correction-enhanced evolvability. A network with error correction tolerates genetic mutations better: many mutations that would be lethal in a fragile system get quietly absorbed by the redundant coding, becoming neutral mutations that don’t affect fitness. This expanded neutral zone lets the network wander through more of the genetic configuration space without dying. Some of that wandering finds paths to better error-correcting codes. Error correction begets more error correction.
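The neutral-mutation part of this argument can be checked by brute force on a toy example. The example is hypothetical and not taken from the paper. A fragile design maps an input bit to the output through a single gate, while a redundant design runs three copy gates into a majority-vote gate. The code flips every truth-table entry one at a time and counts how many single mutations leave the noiseless input-output behavior unchanged.

```python
from itertools import product

IDENTITY = [0, 1]  # 1-input truth table: output = input
# 3-input majority table, indexed by the bits (a, b, c) read as a binary number.
MAJORITY = [int(sum(bits) >= 2) for bits in product([0, 1], repeat=3)]


def bare_output(tables, x):
    """Fragile design: one gate maps the input straight to the output."""
    return tables[0][x]


def redundant_output(tables, x):
    """Redundant design: three copy gates feed a majority-vote gate."""
    a, b, c = (tables[i][x] for i in range(3))
    return tables[3][(a << 2) | (b << 1) | c]


def neutral_fraction(tables, output):
    """Fraction of single truth-table bit flips that leave the input-output map unchanged."""
    target = [output(tables, x) for x in (0, 1)]
    neutral = total = 0
    for g, table in enumerate(tables):
        for entry in range(len(table)):
            mutant = [list(t) for t in tables]  # copy so the original is untouched
            mutant[g][entry] ^= 1
            neutral += [output(mutant, x) for x in (0, 1)] == target
            total += 1
    return neutral / total


print("bare:", neutral_fraction([IDENTITY], bare_output))
print("redundant:", neutral_fraction([IDENTITY] * 3 + [MAJORITY], redundant_output))
```

In the fragile design every single flip breaks the function (neutral fraction 0.0). In the redundant design, 12 of the 14 possible flips are neutral (6/7). Any flip in a copy gate is outvoted, and only the two majority-gate entries that are actually reached (000 and 111) matter.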

Why It Matters

Why do biological systems keep converging on elaborate, low-probability structures? This paper gives a mechanistic answer. Noise actively reshapes the fitness landscape, making error-correcting configurations easier to reach and easier to improve once reached.

The idea fits with what biologists already see. Knockout experiments show most genes are individually dispensable, a signature of neutral redundancy baked into the genome. Fiete’s own prior work on grid cells in the brain suggests they implement an error-correcting code for representing spatial position, another case of biology landing on the same mathematical trick.

For AI, the implications could be significant. Machine learning systems keep getting larger, more distributed, and more exposed to real-world noise. Understanding how biology evolved reliable computation through selection pressure rather than top-down engineering could point toward new fault-tolerant architectures.

There’s a quantum computing angle too. Error correction is existential for quantum hardware, and building self-correcting systems from noisy components is exactly the evolutionary challenge studied here. The co-emergence of modularity and error correction under noise may inform how we design future neuromorphic and quantum systems.

Boolean networks are, of course, a simplified proxy for real genetics. Does error correction-enhanced evolvability hold up in continuous, high-dimensional systems? Could it apply to the evolution of learning itself, helping explain why biological brains generalize from noisy data so much better than current artificial networks? Those are the next questions worth chasing.

Noise isn’t evolution’s enemy. It’s the pressure that forces life to build error-correcting codes and modular architectures, and those structures then make further evolution easier. This self-reinforcing loop may explain why such organization is ubiquitous in biology, and it could change how we design fault-tolerant AI systems.

IAIFI Research Highlights

This work connects quantum information theory, evolutionary biology, and computational neuroscience, bringing the theory of error-correcting codes, central to both classical and quantum computing, to bear on how biological systems become fault-tolerant. It fits within IAIFI's mission of connecting AI with fundamental physics.

Error correction-enhanced evolvability suggests a new principle for designing AI architectures. Building redundancy into artificial networks may not just improve reliability but could actively accelerate learning and adaptation.

By modeling evolutionary dynamics in noisy Boolean networks, the researchers show that stochastic environments systematically select for mathematical structures (error-correcting codes) that are otherwise vanishingly rare, connecting thermodynamic noise to the emergence of complex biological organization.

Future work should test these principles in continuous dynamical systems and neural network models, and explore whether error correction-enhanced evolvability applies to the evolution of learning algorithms themselves. The paper is available at [arXiv:2303.14448](https://arxiv.org/abs/2303.14448).

Original Paper Details

Noisy dynamical systems evolve error correcting codes and modularity

arXiv:2303.14448

Trevor McCourt, Ila R. Fiete, Isaac L. Chuang