Moment Unfolding

Authors

Krish Desai, Benjamin Nachman, Jesse Thaler

Abstract

Deconvolving ("unfolding") detector distortions is a critical step in the comparison of cross section measurements with theoretical predictions in particle and nuclear physics. However, most existing approaches require histogram binning while many theoretical predictions are at the level of statistical moments. We develop a new approach to directly unfold distribution moments as a function of another observable without having to first discretize the data. Our Moment Unfolding technique uses machine learning and is inspired by Generative Adversarial Networks (GANs). We demonstrate the performance of this approach using jet substructure measurements in collider physics. With this illustrative example, we find that our Moment Unfolding protocol is more precise than bin-based approaches and is as or more precise than completely unbinned methods.

Concepts

The Big Picture

Imagine you’re trying to measure the true height of a mountain, but your ruler keeps warping in the cold. Every measurement comes back distorted, and the distortion isn’t uniform. To get at the real height, you need to undo what your faulty instrument did. Now scale that up to the Large Hadron Collider, where particles collide millions of times per second and every detector is, in some sense, a warped ruler. Correcting for these distortions is called unfolding, and it’s essential for comparing experimental data with theoretical predictions in particle physics.

Theorists often express their most precise predictions not as full probability distributions, but as statistical moments: the mean, variance, skewness, and higher-order summaries of a distribution. Moments are compressed fingerprints. A few numbers capture a distribution’s shape without spelling out every detail. For certain measurements in quantum chromodynamics (QCD), the theory of how quarks and gluons interact through the strong nuclear force, moments are easier to compute from first principles than the full distribution. But experimentalists have been stuck unfolding entire histograms first, then extracting moments afterward. That two-step process introduces unnecessary noise and systematic bias.

A paper from researchers at MIT, Lawrence Berkeley National Laboratory, and UC Berkeley proposes a smarter path: skip the histogram entirely and unfold directly to the moments you actually want.

Key Insight: Moment Unfolding uses machine learning to extract corrected statistical moments straight from particle physics data, with no histograms needed. Removing the binning step eliminates a major source of discretization bias and sharpens measurement precision.

How It Works

The core idea draws on Boltzmann weight factors from statistical mechanics. A Boltzmann weight tells you how likely a system is to sit in a particular state, expressed as an exponential function of energy. The Moment Unfolding team realized that a reweighting function of this same form, an exponential of a polynomial in the observable, has a useful property: among all reweightings that fix the first few moments, it is the one that distorts the starting distribution the least (a maximum-entropy argument). Each polynomial coefficient controls one moment, much as inverse temperature controls average energy. You don’t need to reconstruct the full shape. Just fit the coefficients in the exponent, and the moments follow from the reweighted simulation.
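
One way to write down a reweighting function of this kind is sketched below. The notation is an assumption made for illustration and may differ from the paper's: $z$ is the particle-level observable, $q(z)$ the simulated particle-level density, $\beta_a$ the trainable coefficients, $n$ the highest moment of interest, and $z_i$ the simulated events.

```latex
% Boltzmann-style reweighting: exponential of a degree-n polynomial in z
g_\beta(z) = \frac{1}{Z(\beta)} \exp\!\Big(-\sum_{a=1}^{n} \beta_a z^a\Big),
\qquad
Z(\beta) = \int \mathrm{d}z\, q(z)\, \exp\!\Big(-\sum_{a=1}^{n} \beta_a z^a\Big)

% k-th moment of the reweighted particle-level distribution,
% estimated as a weighted average over simulated events z_i
\langle z^k \rangle_\beta
  = \int \mathrm{d}z\, q(z)\, g_\beta(z)\, z^k
  \approx \frac{\sum_i g_\beta(z_i)\, z_i^k}{\sum_i g_\beta(z_i)}
```

The normalization $Z(\beta)$ plays the role of a partition function, and it cancels in the weighted average on the last line, so it never needs to be computed explicitly when estimating moments from events.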

The procedure has four steps:

- Start with simulation. A Monte Carlo simulation models both the true underlying physics and the detector’s response through random sampling. This is the bridge between theory and experiment.

- Learn a reweighting function. A generator with a Boltzmann-inspired functional form reweights simulated particle-level events (what the physics actually produced, before the detector touched it). It has only a small set of trainable parameters, each tied to a moment of the unfolded distribution.

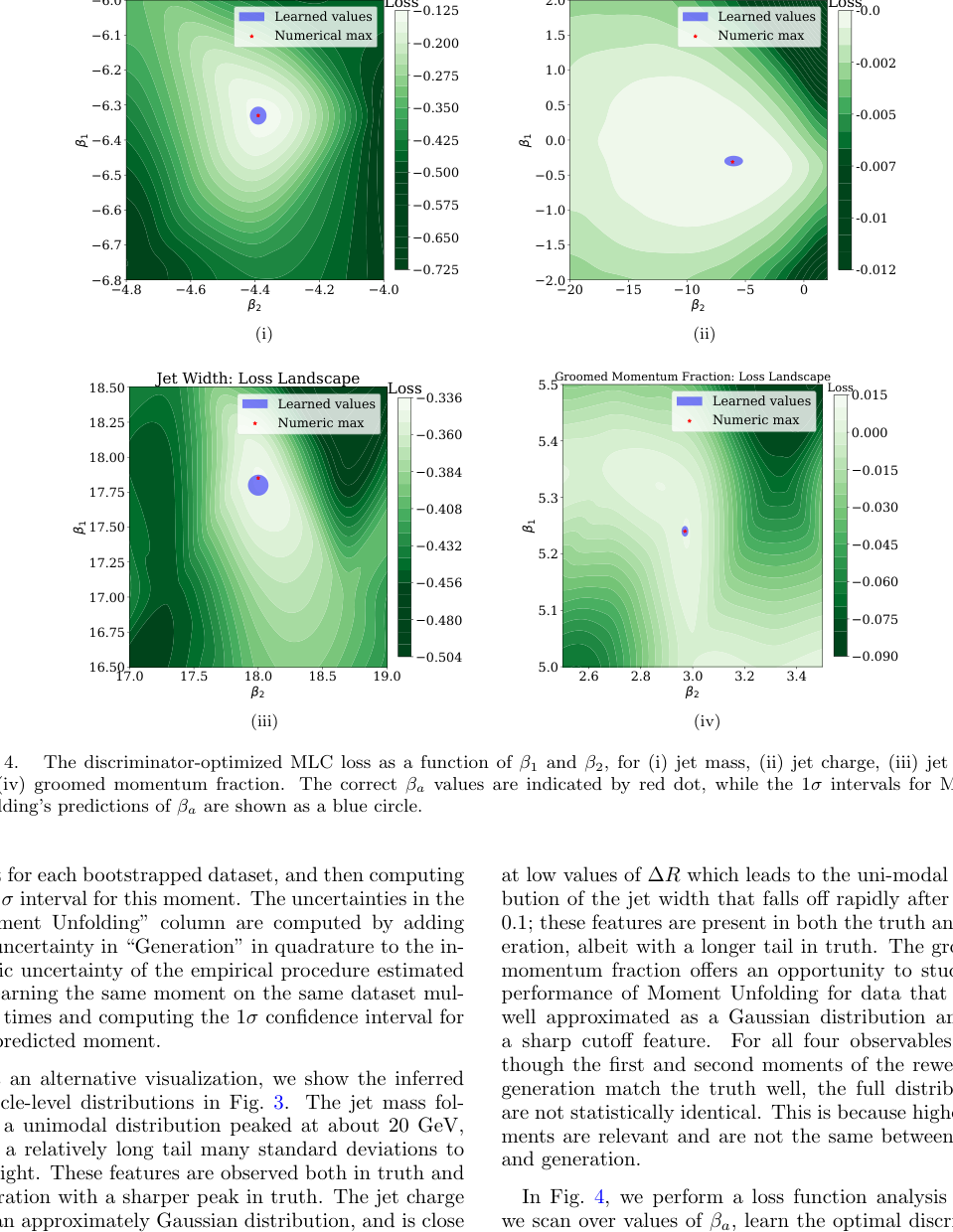

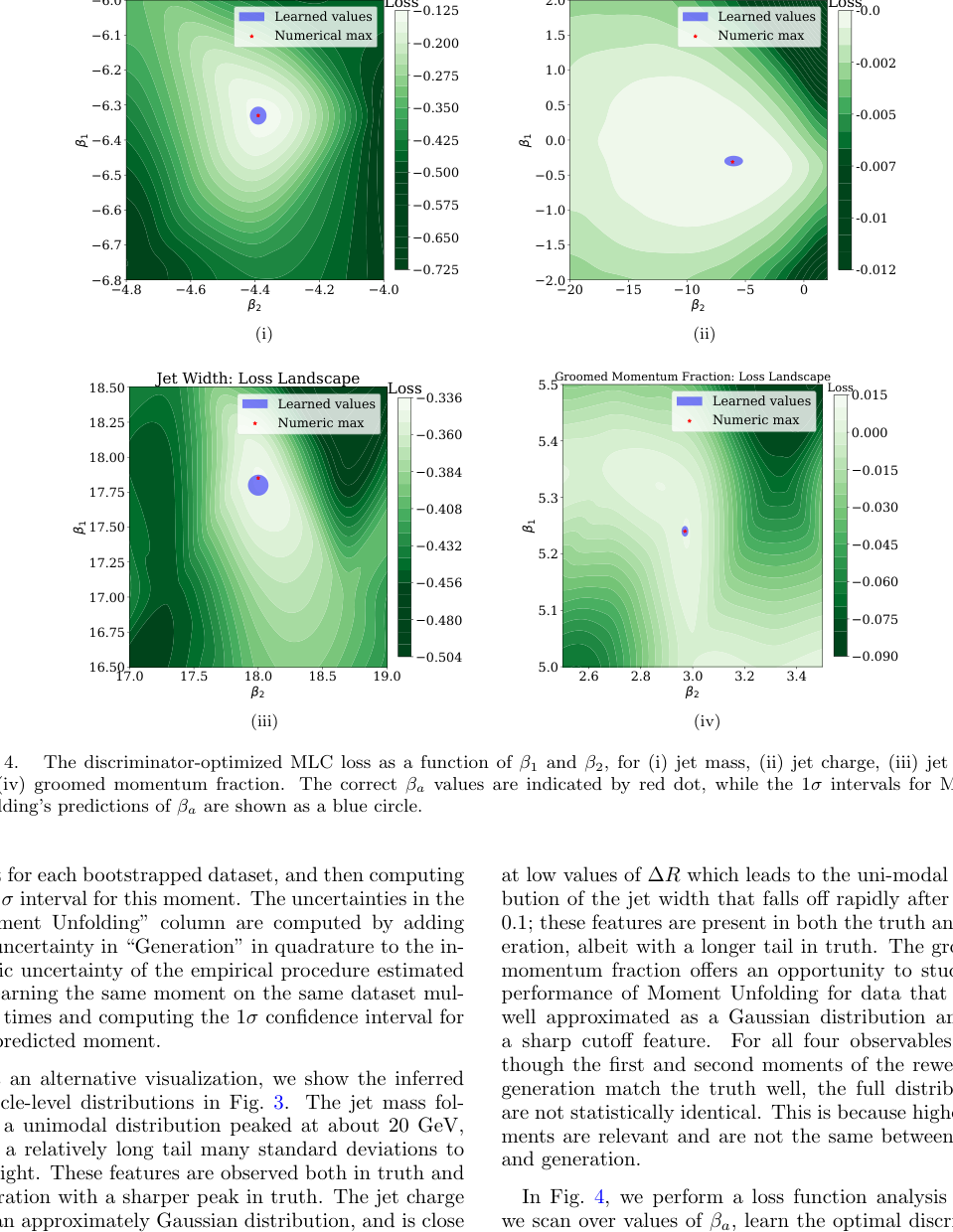

- Adversarial optimization. A second network acts as a discriminator, trained to tell apart the reweighted detector-level simulation from real data. This borrows from Generative Adversarial Networks (GANs): the reweighting function is tuned to fool the discriminator, and the discriminator tries not to be fooled.

- Read off the moments. Once training converges, the unfolded moments are computed as weighted averages over the reweighted particle-level simulation. No histogram required.
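
The four steps above can be illustrated with a deliberately simplified toy. This is a sketch under strong assumptions, not the paper's implementation: the Gaussian "physics" and detector smearing, the names `sim_z`, `sim_x`, `data_x`, and the single parameter `beta` are all hypothetical. Most importantly, the adversarial neural-network discriminator is replaced by a much cruder stand-in: a bisection search that matches the reweighted detector-level mean to the data mean. Only the first moment is unfolded.

```python
import math
import random

random.seed(0)
N = 20000

# Step 1: simulation with paired particle-level z and detector-level x.
# (Toy assumption: Gaussian truth, additive Gaussian smearing.)
sim_z = [random.gauss(0.0, 1.0) for _ in range(N)]
sim_x = [z + random.gauss(0.0, 0.5) for z in sim_z]

# "Data": only the detector level is observed. TRUE_MEAN is what we hope to recover.
TRUE_MEAN = 0.3
data_x = [random.gauss(TRUE_MEAN, 1.0) + random.gauss(0.0, 0.5) for _ in range(N)]
data_mean = sum(data_x) / N


def weights(beta):
    """Step 2: Boltzmann-style weights exp(-beta * z) on particle-level simulation."""
    return [math.exp(-beta * z) for z in sim_z]


def weighted_mean(values, w):
    """Weighted average; the normalization of the weights cancels here."""
    return sum(wi * v for wi, v in zip(w, values)) / sum(w)


def mismatch(beta):
    """Reweighted detector-level simulation mean minus the data mean."""
    return weighted_mean(sim_x, weights(beta)) - data_mean


# Step 3 (stand-in for adversarial training, NOT a GAN): bisection on beta.
# mismatch() decreases as beta grows because x and z are positively correlated.
lo, hi = -2.0, 2.0
for _ in range(40):
    mid = 0.5 * (lo + hi)
    if mismatch(mid) > 0.0:
        lo = mid
    else:
        hi = mid
beta_fit = 0.5 * (lo + hi)

# Step 4: read off the unfolded moment at particle level with the same weights.
unfolded_mean = weighted_mean(sim_z, weights(beta_fit))
print(f"beta = {beta_fit:.3f}, unfolded mean = {unfolded_mean:.3f}")
```

The key structural point survives the simplification: the weights are a function of the particle-level quantity only, they are judged at detector level through the paired simulated events, and the moment is then a weighted average at particle level with no binning anywhere. A learned discriminator, as in the actual method, would remove the need to hand-pick which detector-level statistic to match.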

This setup has a concrete advantage over existing methods. OmniFold, a popular unbinned unfolding approach, iterates back and forth between particle level and detector level. Moment Unfolding instead solves the problem in a single adversarial training. With no iteration, there is no iteration count to tune and no sequence of retrainings to run.

The team tested their method on jet substructure observables: properties of the collimated sprays of particles that form when quarks and gluons scatter at high energy. They looked at moments of the jet groomed momentum fraction ($z_g$) as a function of jet transverse momentum $p_T$. The groomed momentum fraction measures how unevenly a jet’s energy splits between its two main branches once you strip away soft, wide-angle radiation. Moments here carry precise theoretical predictions from DGLAP evolution equations (the rules governing how quark and gluon distributions shift with collision energy), but unfolding the full spectrum is overkill.

The results: Moment Unfolding achieves lower statistical uncertainty than bin-based approaches like Iterative Bayesian Unfolding (IBU), and matches or beats fully unbinned methods like OmniFold while targeting only the quantities you actually want.

Why It Matters

Precision in fundamental physics measurements comes down to cleanly separating what the detector did from what nature produced. Binning has been a workhorse for decades, but it’s a blunt instrument. When you place data into discrete bins and represent each bin by its center rather than its true mean, you introduce bias. That bias never fully vanishes unless you can make infinitely narrow bins, and you never have enough data for that in practice. Moment Unfolding sidesteps this entire class of systematic error.
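
The bin-center effect described above is easy to reproduce in a small experiment. The sketch below is illustrative only: the exponential distribution, the bin width, and the helper `bin_center` are assumptions chosen to make the bias visible, not values taken from the paper.

```python
import random

random.seed(1)

# Hypothetical steeply falling observable: exponential with unit mean.
samples = [random.expovariate(1.0) for _ in range(100_000)]

BIN_WIDTH = 1.0  # deliberately coarse so the effect is easy to see


def bin_center(x, width=BIN_WIDTH):
    """Center of the bin [k*width, (k+1)*width) that contains x."""
    return (int(x // width) + 0.5) * width


# Mean computed directly from the events.
unbinned_mean = sum(samples) / len(samples)

# Mean computed after replacing every event by the center of its bin,
# which is what a histogram-based estimate effectively does.
binned_mean = sum(bin_center(x) for x in samples) / len(samples)
bias = binned_mean - unbinned_mean

print(f"unbinned mean = {unbinned_mean:.3f}")
print(f"binned mean   = {binned_mean:.3f}")
print(f"bias          = {bias:+.3f}")
```

Because the distribution falls within each bin, events pile up toward the lower edge and the bin center overestimates the true in-bin mean. For this particular toy the expected shift is about 0.08, and it shrinks as `BIN_WIDTH` is reduced, at the price of fewer events per bin.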

The payoff reaches well beyond jet physics. Some of the most precise extractions of the strong coupling constant $\alpha_s$ come from comparing measured jet shape moments to theoretical predictions. Sharper moment extraction means tighter tests of the Standard Model and greater sensitivity to new physics hiding in small deviations. The same technique could, in principle, extend to deep-inelastic scattering, heavy-ion collisions, and any setting where moments matter more than full spectra.

Open questions remain. The current implementation handles moments of one observable at a time. Extending to multiple correlated observables, or recovering full distributions rather than just their summaries, are directions the authors flag for future work.

Bottom Line: By combining Boltzmann weight factors with adversarial machine learning, Moment Unfolding delivers statistical moments from collider data that, in the jet substructure example studied, are more precise than bin-based unfolding and as precise as or more precise than fully unbinned methods, with no histogram and no iterative algorithm needed.

IAIFI Research Highlights

This work connects the mathematical structure of statistical mechanics (Boltzmann distributions) with adversarial machine learning to solve a core problem in experimental particle physics, bringing together ideas from multiple fields.

The paper introduces a constrained GAN architecture where the generator's functional form is physically motivated, so its few learned parameters are tied directly to the statistical moments being measured. The result is an ML model whose internals are interpretable by design, not just accurate.

Moment Unfolding enables more precise extractions of QCD observables like jet substructure moments, tightening the measurements used to test quantum chromodynamics and pin down the strong coupling constant.

Future extensions could target multi-observable moment unfolding and full distribution recovery; the paper and code are available at [arXiv:2407.11284](https://arxiv.org/abs/2407.11284).

Original Paper Details

Moment Unfolding

2407.11284

Krish Desai, Benjamin Nachman, Jesse Thaler