Modern Machine Learning and Particle Physics

Authors

Matthew D. Schwartz

Abstract

Over the past five years, modern machine learning has been quietly revolutionizing particle physics. Old methodology is being outdated and entirely new ways of thinking about data are becoming commonplace. This article will review some aspects of the natural synergy between modern machine learning and particle physics, focusing on applications at the Large Hadron Collider. A sampling of examples is given, from signal/background discrimination tasks using supervised learning to direct data-driven approaches. Some comments on persistent challenges and possible future directions for the field are included at the end.

Concepts

The Big Picture

Imagine searching for a single specific grain of sand on every beach on Earth, simultaneously. That’s roughly the challenge physicists face at the Large Hadron Collider, where only one in every billion proton collisions produces a Higgs boson, and only one in ten thousand of those is easy to detect.

For decades, physicists handled this by compressing the torrent of detector data into a few key numbers: carefully chosen measurements a trained eye could interpret. Think of it like describing a symphony using only its tempo and loudest note. Tractable, but lossy.

Over the past five years, something has shifted. Modern machine learning is transforming how physicists analyze collision data. Harvard physicist Matthew Schwartz’s review makes the case that this isn’t just a new tool for old problems. ML is opening up new ways to think about particle physics data, from smarter signal detection to analyses that sidestep errors introduced when simulations don’t perfectly match reality.

Particle physics turns out to be a near-perfect laboratory for modern ML. It generates enormous, richly structured datasets backed by world-class simulation tools. But it also poses challenges that push ML methods in unexpected directions.

How It Works

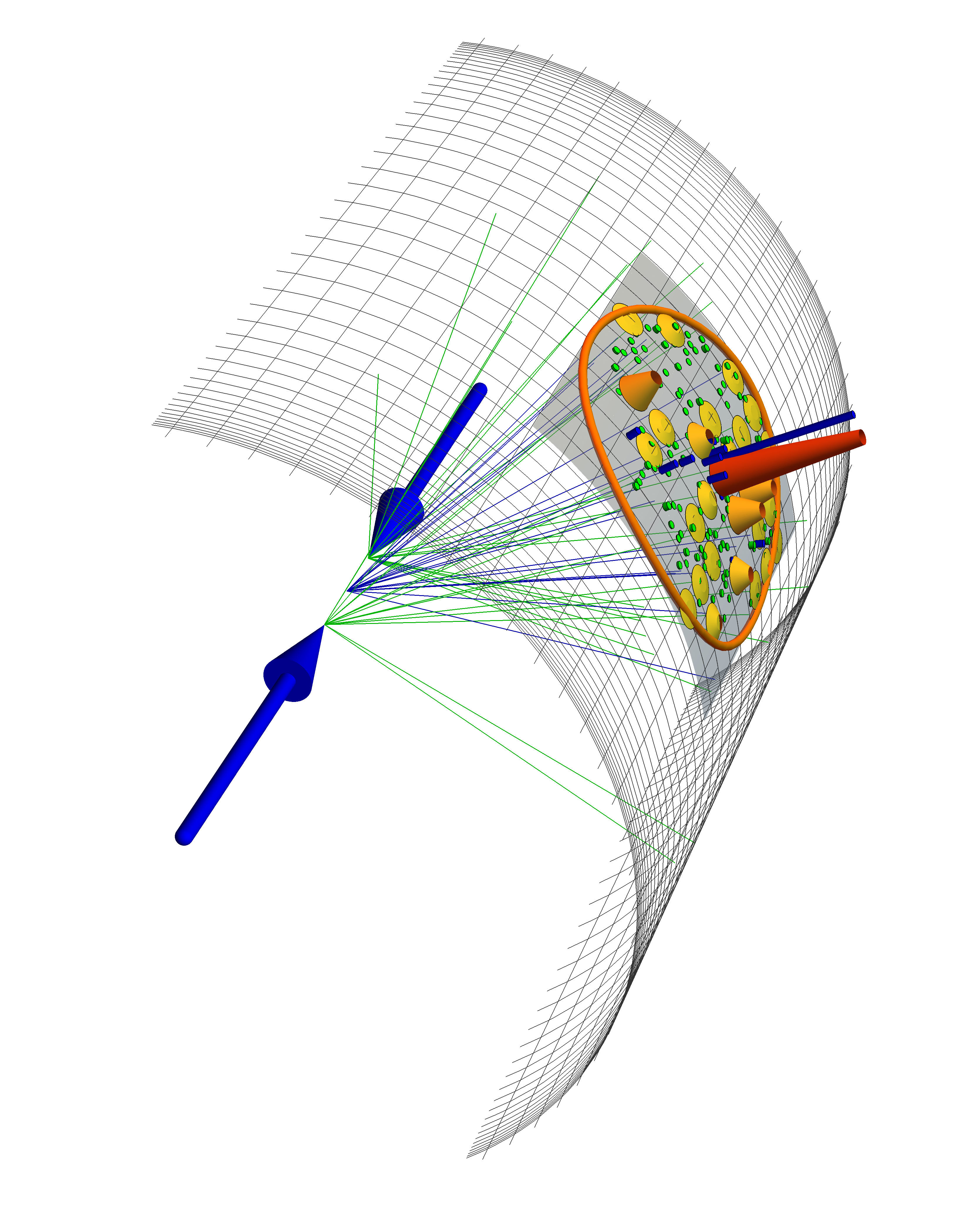

The LHC’s detectors read out roughly 100 million electronic channels per collision event, producing data in a space so high-dimensional that no single visualization can capture it. Traditional approaches collapse all of this into a handful of composite features: total energy deposited in a region, combined particle mass, the angle between two jets. These hand-crafted features are reliable and well-understood, but they inevitably discard information.
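As a rough illustration of what such hand-crafted features look like, the sketch below computes two of them from a pair of jet four-vectors: the combined invariant mass and an angular separation. The function names, the `(E, px, py, pz)` tuple layout, and the choice of ΔR as the angle measure are assumptions made for this example, not something specified in the review.

```python
import math

# Four-vectors are assumed to be (E, px, py, pz) tuples in natural units (GeV).

def invariant_mass(p1, p2):
    """Combined invariant mass of two four-vectors."""
    e, px, py, pz = (a + b for a, b in zip(p1, p2))
    m2 = e * e - px * px - py * py - pz * pz
    return math.sqrt(max(m2, 0.0))  # clamp tiny negative values from rounding

def pseudorapidity(p):
    """Pseudorapidity eta = asinh(pz / pT)."""
    _, px, py, pz = p
    return math.asinh(pz / math.hypot(px, py))

def azimuth(p):
    """Azimuthal angle phi around the beam axis."""
    return math.atan2(p[2], p[1])

def delta_r(p1, p2):
    """Angular separation sqrt(d_eta^2 + d_phi^2), with d_phi wrapped to [-pi, pi)."""
    d_eta = pseudorapidity(p1) - pseudorapidity(p2)
    d_phi = azimuth(p1) - azimuth(p2)
    d_phi = (d_phi + math.pi) % (2 * math.pi) - math.pi
    return math.hypot(d_eta, d_phi)

# Two massless, back-to-back jets of 50 GeV each (toy numbers).
jet_a = (50.0, 50.0, 0.0, 0.0)
jet_b = (50.0, -50.0, 0.0, 0.0)
mass = invariant_mass(jet_a, jet_b)
separation = delta_r(jet_a, jet_b)
```

Each function reduces the full event to a single number, which is what makes these features easy to interpret and also why they discard information.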

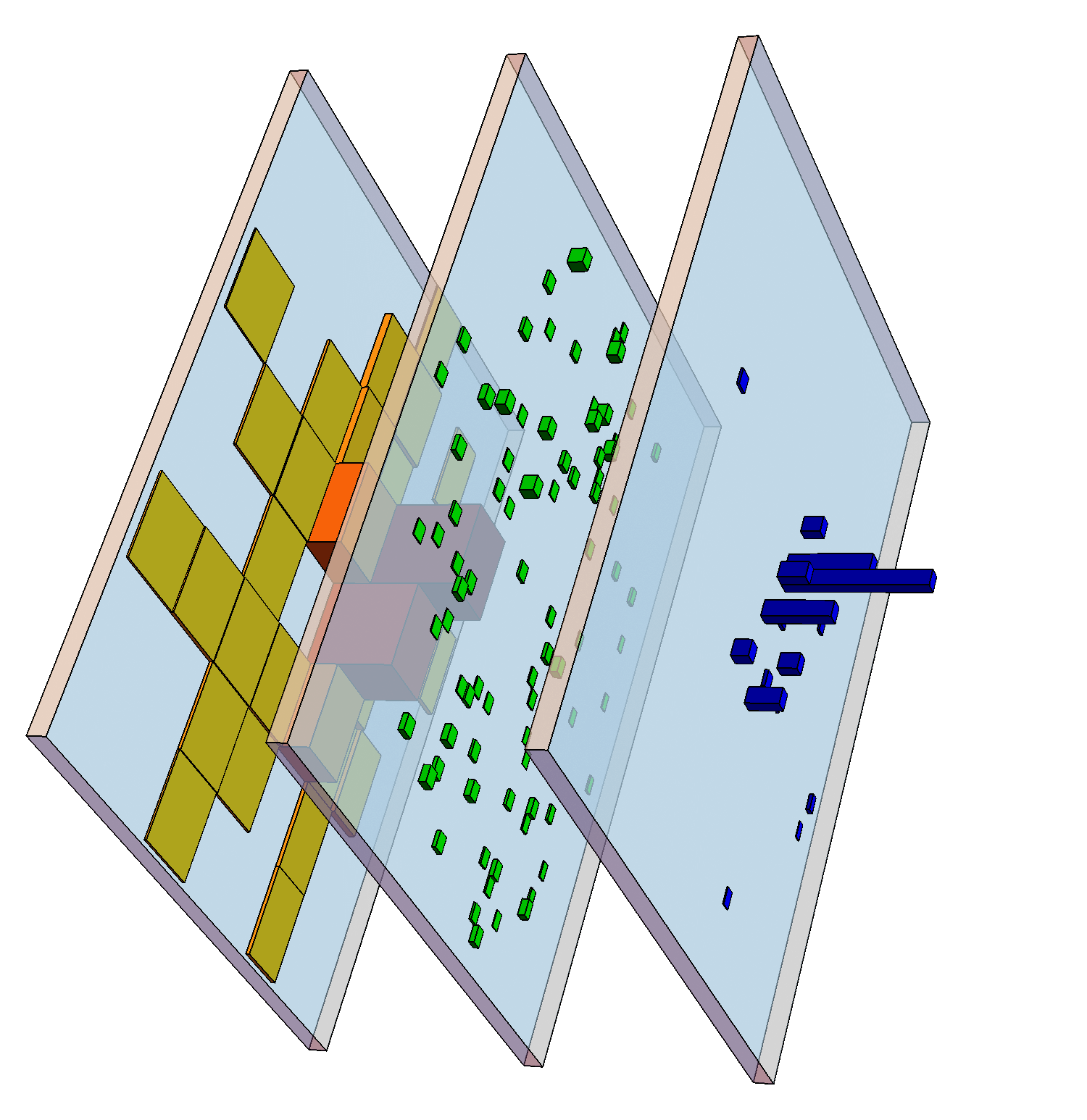

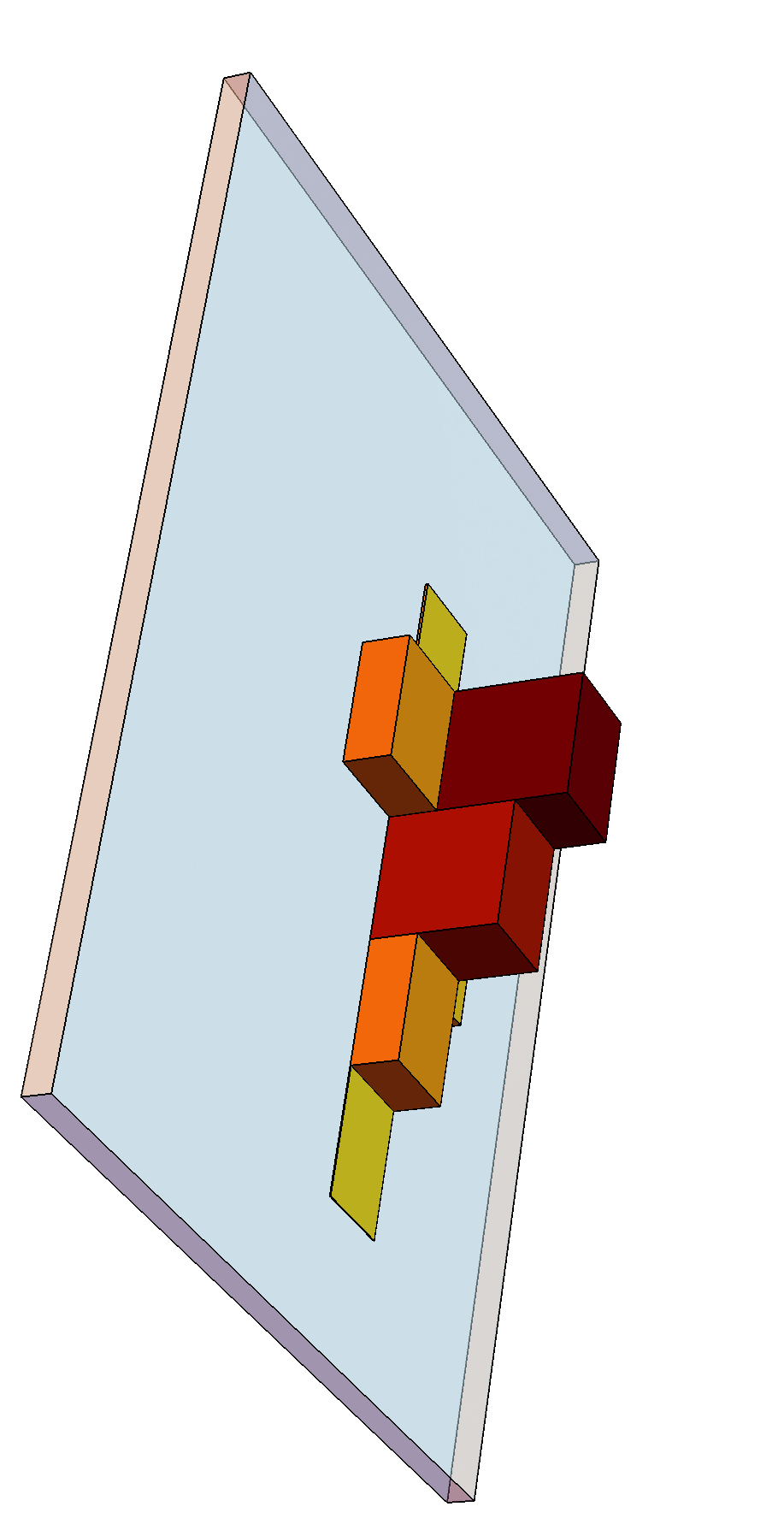

Modern ML takes the opposite approach: feed the network everything. Jets, the sprays of particles produced when quarks and gluons are ejected at high energy, carry detailed information about the underlying physics. A jet from a decaying top quark has different internal substructure than one from an ordinary background quark. Neural networks can learn to read this directly.

Schwartz surveys a range of ML approaches:

- Supervised classifiers trained on labeled signal and background events, achieving performance that approaches the likelihood-ratio test, which the Neyman-Pearson lemma shows to be optimal

- Jet image classifiers that treat the calorimeter’s energy-measuring grid as a literal image, applying convolutional neural networks (the architecture that revolutionized computer vision)

- Graph neural networks that treat each particle as a node with edges encoding pairwise relationships, a natural fit when particle counts vary and ordering shouldn’t matter

- Transformers, the architecture behind modern language models, applied to particle sequences, learning which particles are most relevant to each other
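To make the jet-image idea from the list above concrete, here is a minimal sketch of how a set of particles might be pixelated into an image that a convolutional network could consume. The function name `jet_image`, the 8×8 grid, the window size, and the normalization are illustrative assumptions; real analyses typically add further preprocessing such as rotation and flipping.

```python
import numpy as np

def jet_image(eta, phi, pt, n_pixels=8, half_width=0.8):
    """Bin particle transverse momenta onto an (eta, phi) grid centered on the jet.

    Assumes the jet does not straddle the phi = +/-pi boundary.
    """
    eta = np.asarray(eta, dtype=float)
    phi = np.asarray(phi, dtype=float)
    pt = np.asarray(pt, dtype=float)

    # Center on the pT-weighted centroid so every jet sits mid-image.
    eta_c = np.average(eta, weights=pt)
    phi_c = np.average(phi, weights=pt)

    edges = np.linspace(-half_width, half_width, n_pixels + 1)
    image, _, _ = np.histogram2d(
        eta - eta_c, phi - phi_c, bins=[edges, edges], weights=pt
    )

    # Normalize so pixel intensities sum to one (when any pT falls in the window).
    total = image.sum()
    return image / total if total > 0 else image

# Three toy particles: two hard ones and a softer one offset in phi.
image = jet_image(eta=[0.1, -0.1, 0.0], phi=[0.0, 0.0, 0.3], pt=[10.0, 10.0, 5.0])
```

The fixed grid is what lets image architectures apply directly, at the cost of imposing a pixel size. Graph- and set-based networks avoid that choice by working on the particle list itself.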

Here’s what makes particle physics genuinely unusual as an ML domain: individual events don’t have truth labels. Because of quantum mechanical interference, a collision event isn’t purely “signal” or purely “background.” It’s a quantum superposition of both, with probability proportional to |M_S + M_B|², where M_S and M_B are the signal and background amplitudes and the cross term in the square encodes the interference between them. Only mixture fractions can be inferred, never per-event identity.
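Expanding the square makes the interference term explicit. This is the standard identity for the squared modulus of a sum of two complex amplitudes, written with the symbols used above:

```latex
\[
  |\mathcal{M}_S + \mathcal{M}_B|^2
  = |\mathcal{M}_S|^2 + |\mathcal{M}_B|^2
  + 2\,\mathrm{Re}\!\left(\mathcal{M}_S^{*}\,\mathcal{M}_B\right)
\]
```

The first two terms are what a pure signal or pure background process would contribute on its own. The last term belongs to neither, which is why an individual event cannot be assigned a definite label.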

What saves the field is more than 40 years of expert-built simulation tools that model collisions across 20 orders of magnitude in length scale, from 10⁻¹⁸ meters where perturbative quantum field theory governs, up to the 100-meter scale of the ATLAS detector. These simulators generate billions of synthetic events whose origin is known, enabling supervised training even though real events carry no event-level labels.

The most active frontier is weakly supervised and unsupervised learning: methods that reduce simulation dependence or eliminate it entirely. Techniques like CWoLa (Classification Without Labels) exploit the fact that different data regions contain different signal fractions, training classifiers directly on real data rather than simulations. Anomaly detection methods search for events that look unlike any simulated background, finding unexpected signals without specifying in advance what new physics should look like.
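The CWoLa idea can be sketched on toy data. In the example below, a classifier is trained only to tell apart two mixed samples with different signal fractions, and is then checked against the hidden truth labels. Everything here is an assumption made for illustration: the two-dimensional Gaussian "events", the signal fractions of 0.8 and 0.2, and the hand-written logistic regression standing in for whatever classifier a real analysis would use.

```python
import numpy as np

rng = np.random.default_rng(0)

def sample(n, signal_fraction):
    """Draw n toy events; returns features and hidden truth (True = signal)."""
    is_signal = rng.random(n) < signal_fraction
    # Signal is centered at (+1, +1), background at (-1, -1), both with unit width.
    centers = np.where(is_signal[:, None], 1.0, -1.0)
    return centers + rng.normal(size=(n, 2)), is_signal

def fit_logistic(x, y, steps=500, lr=0.1):
    """Plain gradient-descent logistic regression with a bias term."""
    xb = np.hstack([x, np.ones((len(x), 1))])
    w = np.zeros(xb.shape[1])
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-xb @ w))
        w -= lr * xb.T @ (p - y) / len(y)
    return w

def score(w, x):
    """Classifier output before the sigmoid (monotonic in the probability)."""
    return x @ w[:2] + w[2]

def auc(signal_scores, background_scores):
    """Probability that a random signal event outscores a random background event."""
    return np.mean(signal_scores[:, None] > background_scores[None, :])

# Two mixed samples; the training labels say only which sample an event came from.
mixed_1, _ = sample(5000, signal_fraction=0.8)
mixed_2, _ = sample(5000, signal_fraction=0.2)
x_train = np.vstack([mixed_1, mixed_2])
y_train = np.concatenate([np.ones(len(mixed_1)), np.zeros(len(mixed_2))])
w = fit_logistic(x_train, y_train)

# Evaluate on events whose truth is known (possible only in a toy like this).
x_test, truth = sample(4000, signal_fraction=0.5)
cwola_auc = auc(score(w, x_test[truth]), score(w, x_test[~truth]))
```

The classifier never sees a signal or background label, yet separating the two mixtures as well as possible pushes it toward the same ordering of events that separates signal from background.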

Why It Matters

The Standard Model of particle physics leaves enormous questions unanswered. What is dark matter? Why is there more matter than antimatter? What lies beyond the energy scales we’ve probed?

Already, the LHC has recorded more data than physicists can fully analyze with traditional methods. Machine learning can extract more physics from existing datasets, and find signatures that hand-crafted analyses might never have thought to seek.

The influence runs both directions. Particle physics poses unusual demands on ML: high-dimensional, physically structured, quantum-mechanical data without individual ground truth. These demands are pushing ML toward new architectures, training paradigms, and theoretical frameworks.

Networks that respect the symmetries of special relativity. Generative models that faithfully reproduce complex multi-particle collisions. Classifiers calibrated rigorously enough for scientific inference. Particle physics is shaping all of these.

Open challenges remain. Systematic uncertainties, such as those arising from differences between simulation and reality, can bias ML classifiers in subtle, hard-to-detect ways. Interpretability is a persistent concern: when a deep network finds a signal, can physicists understand why? The field is still developing the statistical frameworks needed to rigorously combine ML outputs with traditional hypothesis testing.

The upshot goes beyond speed. Machine learning is enabling analyses that were flatly impossible with traditional methods. And the physics is returning the favor, pushing AI research toward architectures built on physical law.

IAIFI Research Highlights

This review captures how the demands of particle physics (quantum-mechanical data, massive simulation infrastructure, extreme signal-to-background ratios) have pushed AI methods and fundamental physics into unusually close collaboration at the LHC.

Particle physics has motivated new ML architectures including Lorentz-equivariant networks, physically-constrained generative models, and weakly supervised classifiers that learn without per-event ground truth labels. These ideas are finding uses well beyond particle physics.

ML-based analyses are increasing the sensitivity of LHC searches for new physics and enabling model-agnostic anomaly detection approaches that could surface signatures no one has predicted.

The field is moving toward data-driven methods that minimize simulation dependence, with open questions around systematic uncertainties and interpretability; see [arXiv:2103.12226](https://arxiv.org/abs/2103.12226) for the full review.

Original Paper Details

Modern Machine Learning and Particle Physics

2103.12226

Matthew D. Schwartz
