Modeling early-universe energy injection with Dense Neural Networks

Authors

Yitian Sun, Tracy R. Slatyer

Abstract

We show that Dense Neural Networks can be used to accurately model the cooling of high-energy particles in the early universe, in the context of the public code package DarkHistory. DarkHistory self-consistently computes the temperature and ionization history of the early universe in the presence of exotic energy injections, such as might arise from the annihilation or decay of dark matter. The original version of DarkHistory uses large pre-computed transfer function tables to evolve photon and electron spectra in redshift steps, which require a significant amount of memory and storage space. We present a light version of DarkHistory that makes use of simple Dense Neural Networks to store and interpolate the transfer functions, which performs well on small computers without heavy memory or storage usage. This method anticipates future expansion with additional parametric dependence in the transfer functions without requiring exponentially larger data tables.


The Big Picture

Imagine trying to trace every ripple in a pond after throwing a stone. Not just the first splash, but every secondary wave bouncing off every edge, for billions of years. That’s roughly the challenge physicists face when modeling the early universe. If dark matter (the invisible substance making up most of the universe’s mass) occasionally transforms into bursts of high-energy particles, those particles slam into the hot, charged gas that filled the young cosmos. The result: cascades of secondary particles whose effects echo through cosmic history.

Tracking those cascades could tell us what dark matter actually is. But the computational cost has long been a bottleneck.

The code package DarkHistory was built for exactly this problem. It computes how the temperature and ionization level (the fraction of atoms stripped of their electrons) of the intergalactic medium (IGM), the gas between galaxies, evolve when dark matter injects energy that wouldn’t exist under standard physics. The catch: roughly 18 gigabytes of pre-computed lookup tables must sit in memory during every simulation. If you don’t have a beefy workstation, you’re out of luck.

Yitian Sun and Tracy Slatyer at MIT found a smarter way to store all that information. They trained compact neural networks to replace the tables entirely, shrinking the memory footprint by a factor of roughly 400 without meaningfully sacrificing accuracy.

Key Insight: Dense Neural Networks can replace multi-gigabyte physics lookup tables in cosmological simulations, matching their accuracy at a fraction of the storage cost and making advanced dark matter research possible on a laptop.

How It Works

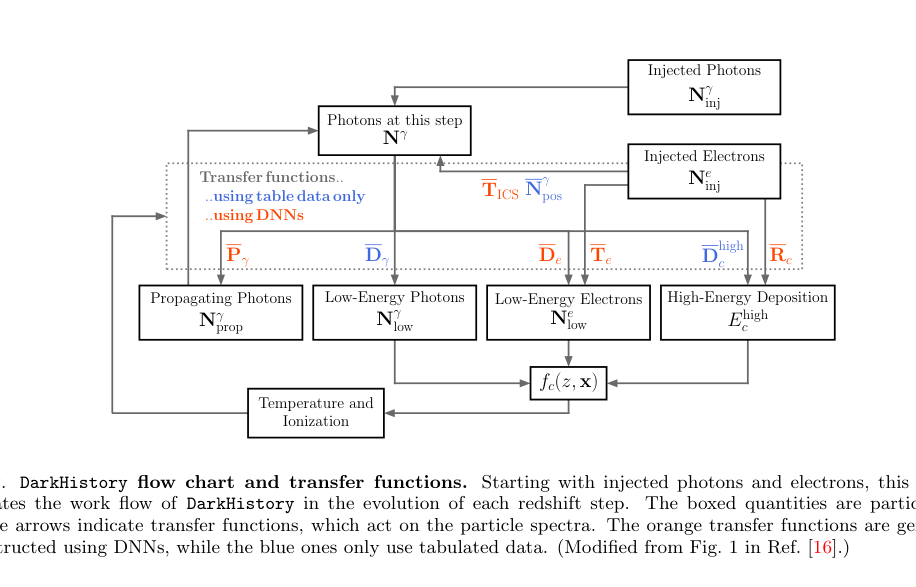

At the core of DarkHistory sits a set of transfer functions: mathematical objects that take an input bundle of particles (say, a burst of photons from annihilating dark matter) and output the secondary particles produced after one step of cosmic evolution. Think of them as detailed recipes: given this mix of energetic particles at this moment in cosmic history, here’s what you get after the universe expands a little further. The storage problem is obvious: each transfer function table runs about 1.5 gigabytes, and a standard run needs 12 of them loaded at once, which is where the 18-gigabyte total comes from.
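On a discretized energy grid, one redshift step of a transfer function acts like a matrix applied to a spectrum. The sketch below uses a hypothetical 4-bin grid and an invented matrix (rows are input bins, columns are output bins in this sketch's convention); the real DarkHistory tables are much larger, use their own conventions, and also depend on redshift and ionization state. The last line checks the storage arithmetic quoted above.

```python
import numpy as np

# Hypothetical transfer matrix for ONE redshift step at ONE (z, x_HII, x_HeII) point.
# Row i: particles produced in each output bin per particle injected in input bin i.
# Bins are ordered from low energy (index 0) to high energy (index 3).
transfer = np.array([
    [1.0, 0.0, 0.0, 0.0],
    [0.3, 0.6, 0.0, 0.0],
    [0.1, 0.4, 0.5, 0.0],
    [0.0, 0.2, 0.5, 0.4],
])

def step(spectrum, tf):
    """Propagate a spectrum (counts per bin) through one redshift step."""
    # out_j = sum_i spectrum_i * tf[i, j]
    return spectrum @ tf

injected = np.array([0.0, 0.0, 0.0, 1.0])  # one particle in the highest-energy bin
after_one = step(injected, transfer)       # cascade after one step
after_two = step(after_one, transfer)      # ...and after a second step

# Storage arithmetic from the text: 12 tables at about 1.5 GB each.
total_gb = 12 * 1.5
```

Repeating `step` once per redshift step is what makes the full set of tables necessary in memory during a run.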

Sun and Slatyer’s fix is straightforward. Instead of storing a giant pre-computed matrix, they train a Dense Neural Network (DNN), a standard multi-layer network where every unit connects to every unit in adjacent layers, to learn each transfer function as a smooth mathematical mapping.

The network takes five inputs: the energy of the incoming particle, the energy of the outgoing particle, the current redshift (a measure of how much the universe has expanded, used here as a proxy for cosmic time), the fraction of hydrogen atoms that have lost their electrons, and the equivalent fraction for helium. It outputs a single number: the log of the transfer function value at that point. To reconstruct the full matrix at a given redshift and ionization state, you evaluate the DNN at every pair of incoming and outgoing energies on the grid.
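A minimal sketch of that mapping, assuming a small fully connected network with made-up layer widths and random (untrained) weights; the paper's actual architecture, activation functions, and input scaling may differ. Feeding log10(1 + z) instead of raw redshift and treating the output as a natural log are also assumptions made here. The point is the shape of the computation: five numbers in, one log-value out, and a full matrix rebuilt by evaluating every (E_in, E_out) pair.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical layer widths: 5 inputs -> two hidden layers -> 1 output.
layer_sizes = [5, 64, 64, 1]
weights = [rng.normal(0.0, 0.1, size=(m, n))
           for m, n in zip(layer_sizes[:-1], layer_sizes[1:])]
biases = [np.zeros(n) for n in layer_sizes[1:]]

def dnn_log_tf(features):
    """Dense network: (N, 5) inputs -> (N,) predicted log transfer-function values."""
    h = features
    for w, b in zip(weights[:-1], biases[:-1]):
        h = np.tanh(h @ w + b)                   # hidden layer: affine map + nonlinearity
    return (h @ weights[-1] + biases[-1])[:, 0]  # linear output layer

def rebuild_matrix(log_e_in, log_e_out, z, x_hii, x_heii):
    """Evaluate the network on every (E_in, E_out) pair at fixed (z, x_HII, x_HeII)."""
    ein, eout = np.meshgrid(log_e_in, log_e_out, indexing="ij")
    n = ein.size
    features = np.column_stack([
        ein.ravel(),
        eout.ravel(),
        np.full(n, np.log10(1.0 + z)),  # assumed redshift scaling
        np.full(n, x_hii),
        np.full(n, x_heii),
    ])
    log_tf = dnn_log_tf(features)
    return np.exp(log_tf).reshape(ein.shape)     # undo the log to get matrix entries

log_e = np.linspace(3.0, 12.0, 50)               # hypothetical log10(energy / eV) grid
tf_matrix = rebuild_matrix(log_e, log_e, z=1000.0, x_hii=0.9, x_heii=0.01)
```

Predicting the log rather than the raw value keeps every reconstructed entry positive and lets the network handle entries spanning many orders of magnitude.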

The training process:

- Generate a dense grid of training data from the original high-fidelity tables

- Train separate DNNs for each transfer function type (photon-to-photon, electron-to-photon, etc.)

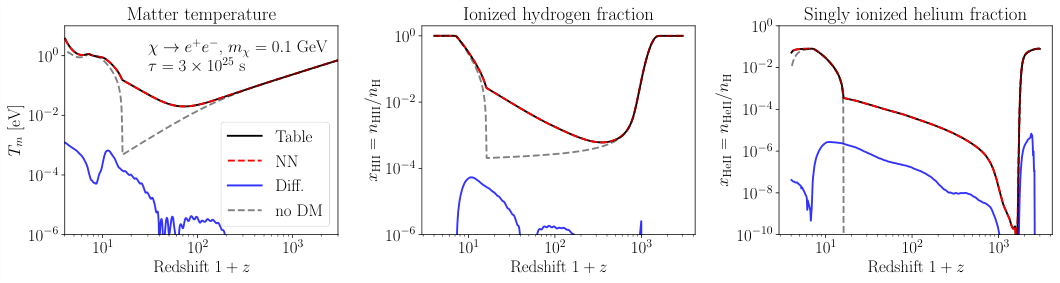

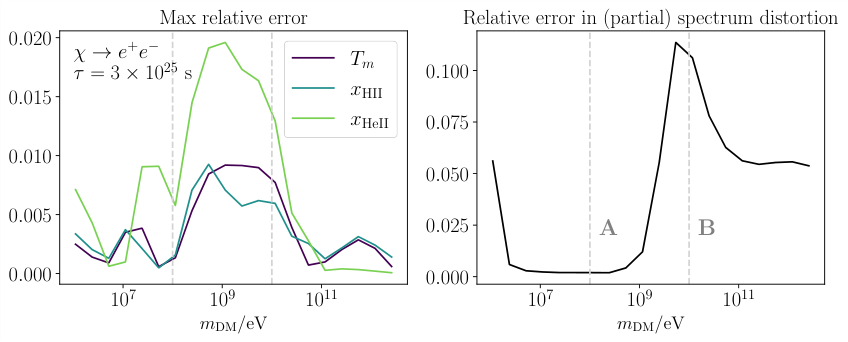

- Validate against held-out data, confirming that temperature and ionization histories match the original results to within a few percent
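The data-preparation side of that recipe can be sketched as turning a tabulated transfer function into training rows and setting aside a held-out subset. Everything here is hypothetical: the axis sizes, the random stand-in table, and the 10% split. The text above describes validation in terms of the resulting temperature and ionization histories, which this sketch does not compute.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical table axes: (log10 E_in, log10 E_out, log10(1+z), x_HII, x_HeII).
log_e = np.linspace(3.0, 12.0, 8)
log_1pz = np.linspace(0.7, 3.5, 6)
x_hii = np.linspace(0.0, 1.0, 4)
x_heii = np.linspace(0.0, 0.1, 3)
axes = [log_e, log_e, log_1pz, x_hii, x_heii]

# Random positive stand-in values; real values would come from the original tables.
table = rng.uniform(1e-6, 1.0, size=[len(a) for a in axes])

def table_to_dataset(axes, table):
    """Flatten an N-d table into (features, targets): one row per grid point."""
    grids = np.meshgrid(*axes, indexing="ij")    # same index order as `table`
    features = np.column_stack([g.ravel() for g in grids])
    targets = np.log(table.ravel())              # train on log values
    return features, targets

# One such dataset (and one network) per transfer function type.
X, y = table_to_dataset(axes, table)

# Hold out 10% of grid points for validation.
perm = rng.permutation(len(y))
n_val = len(y) // 10
val_idx, train_idx = perm[:n_val], perm[n_val:]
X_train, y_train = X[train_idx], y[train_idx]
X_val, y_val = X[val_idx], y[val_idx]
```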

In the regions that matter most (when species are more than 10% ionized), the DNN-based DarkHistory reproduces the hydrogen ionization history and IGM temperature to sub-percent accuracy. Everywhere else, it stays within a few percent.
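That accuracy statement splits the comparison by ionization level. A sketch of the bookkeeping, assuming two ionization histories sampled at the same redshifts; the arrays below are invented for illustration and are not DarkHistory output.

```python
import numpy as np

def relative_error(reference, approx):
    """Pointwise |approx - reference| / |reference|."""
    return np.abs(approx - reference) / np.abs(reference)

def split_accuracy(x_ref, x_dnn, threshold=0.1):
    """Max relative error where the species is more than `threshold` ionized, and elsewhere."""
    err = relative_error(x_ref, x_dnn)
    mostly_ionized = x_ref > threshold
    return err[mostly_ionized].max(), err[~mostly_ionized].max()

# Hypothetical ionization fractions from a table-based run and a DNN-based run.
x_ref = np.array([1.0, 0.8, 0.3, 0.05, 0.001])
x_dnn = np.array([1.002, 0.799, 0.301, 0.051, 0.00103])

in_region, elsewhere = split_accuracy(x_ref, x_dnn)
```

Relative errors grow where the ionization fraction itself is tiny, since a small absolute difference is divided by a small number; reporting the two regions separately keeps that effect from masking the accuracy where it matters.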

The approach also reproduces subtle distortions in the CMB (Cosmic Microwave Background, the faint glow of ancient light still detectable today), matching the original to within 10%. Current experimental uncertainties from CMB observations are larger than that, so the approximation is more than good enough for real science.

There’s a bonus baked into the neural network approach: automatic interpolation. The original tables were defined on a fixed grid, and values between grid points required manual interpolation. DNNs are continuous functions; they return sensible values at any parameter combination within the training range. Future versions of DarkHistory could work with flexible binning schemes or sparse training data, an option that rigid lookup tables simply don’t offer.
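To illustrate the difference, the sketch below contrasts a table, which needs an explicit rule (here linear interpolation via `np.interp`) for off-grid inputs, with a continuous model that can simply be called at any point in its training range. A low-degree polynomial fit stands in for a trained DNN purely to keep the example short; it is not what DarkHistory uses. The range check reflects the caveat that values are sensible only within the training range.

```python
import numpy as np

# Hypothetical 1-D slice of a transfer function on a coarse fixed grid.
grid = np.linspace(0.0, 1.0, 6)
values = np.exp(-3.0 * grid)   # stand-in for tabulated values

def table_lookup(x):
    """Table approach: off-grid values need an explicit interpolation rule."""
    return np.interp(x, grid, values)

# Stand-in for a trained DNN: any smooth function fitted to the grid data.
fit = np.polynomial.Polynomial.fit(grid, values, deg=4)

def surrogate(x):
    """Continuous model: callable at any x inside the training range."""
    x = np.asarray(x, dtype=float)
    if np.any((x < grid[0]) | (x > grid[-1])):
        raise ValueError("outside training range; extrapolation is not trustworthy")
    return fit(x)

x_off_grid = 0.33              # falls between grid points 0.2 and 0.4
from_table = table_lookup(x_off_grid)
from_model = surrogate(x_off_grid)
```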

Why It Matters

The practical payoff is immediate: a researcher with a standard laptop can now run DarkHistory without needing 18 gigabytes of RAM. But the deeper point is about how these methods scale.

The table-based approach has a built-in problem. Adding a new physical parameter (say, a dependence on helium temperature or the density of ordinary matter) multiplies the table size by the number of steps sampled in that new parameter. Storage demands therefore grow exponentially with the number of parameters.

Neural networks sidestep this. Adding a new input increases the network’s size only modestly, roughly a fixed bump in parameters regardless of how finely you sample the new parameter. That’s the difference between a method that scales and one that hits a wall.
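The scaling argument can be checked with simple counting, using hypothetical grid sizes and layer widths (not the paper's). A table needs one entry per grid point, so a new axis multiplies its size; a dense network gains only one extra weight per first-layer unit for each new input, independent of how finely that input is sampled.

```python
from math import prod

def table_entries(grid_sizes):
    """A table stores one number per grid point: the product of all axis sizes."""
    return prod(grid_sizes)

def dense_params(layer_sizes):
    """Weights plus biases of a fully connected network with the given layer widths."""
    return sum(m * n + n for m, n in zip(layer_sizes[:-1], layer_sizes[1:]))

# Hypothetical sizes, chosen only to illustrate the scaling.
base_grid = [500, 500, 50, 10, 10]   # (E_in, E_out, z, x_HII, x_HeII)
new_axis = 20                        # steps sampled in a new parameter

tables_before = table_entries(base_grid)
tables_after = table_entries(base_grid + [new_axis])   # grows by a factor of new_axis

net_before = dense_params([5, 128, 128, 128, 1])
net_after = dense_params([6, 128, 128, 128, 1])        # one more input: +128 weights
```

Doubling `new_axis` doubles `tables_after` but leaves `net_after` unchanged. A richer dependence might still call for wider layers, but that cost is tied to the complexity of the physics rather than to the sampling resolution of the new parameter.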

This work is part of a broader trend in theoretical physics, where neural networks stand in for expensive computations across particle collisions, galaxy formation, and more. What Sun and Slatyer are doing is different from the typical “AI finds patterns in data” story. They’re compressing and generalizing hard-won physical knowledge so more scientists can use it. Future extensions of DarkHistory, from dark matter decaying into multiple channels to position-dependent energy injection, now have a viable computational path forward.

Bottom Line: Swapping 18 GB of lookup tables for a handful of compact neural networks makes dark matter cosmology simulations far more portable, while giving DarkHistory room to grow into more complex physics without blowing up its storage requirements.

IAIFI Research Highlights

This work sits at the intersection of machine learning and early-universe cosmology. Dense Neural Networks act as physics emulators, compressing complex particle-cascade physics into lightweight models that run on consumer hardware.

Simple fully-connected DNNs turn out to be accurate, continuously interpolating surrogates for high-dimensional physical lookup tables. The same approach could work for any simulation built around pre-computed transfer matrices.

Making `DarkHistory` run without heavy computing infrastructure lowers the barrier to studying dark matter annihilation and decay through the CMB and IGM temperature history, expanding who can contribute to the search for physics beyond the Standard Model.

Future work will extend the DNN transfer functions to handle additional parameters, such as helium temperature or other IGM conditions, without the exponential storage costs of conventional tables; see [arXiv:2207.06425](https://arxiv.org/abs/2207.06425) for the full paper.

Original Paper Details

Modeling early-universe energy injection with Dense Neural Networks

arXiv:2207.06425

Yitian Sun, Tracy R. Slatyer
