Mixture Model Auto-Encoders: Deep Clustering through Dictionary Learning

Authors

Alexander Lin, Andrew H. Song, Demba Ba

Abstract

State-of-the-art approaches for clustering high-dimensional data utilize deep auto-encoder architectures. Many of these networks require a large number of parameters and suffer from a lack of interpretability, due to the black-box nature of the auto-encoders. We introduce Mixture Model Auto-Encoders (MixMate), a novel architecture that clusters data by performing inference on a generative model. Derived from the perspective of sparse dictionary learning and mixture models, MixMate comprises several auto-encoders, each tasked with reconstructing data in a distinct cluster, while enforcing sparsity in the latent space. Through experiments on various image datasets, we show that MixMate achieves competitive performance compared to state-of-the-art deep clustering algorithms, while using orders of magnitude fewer parameters.

The Big Picture

You’ve got a massive box of unlabeled photographs: thousands of images of handwritten digits, animal faces, street scenes. Your job is to sort them into neat piles without anyone telling you what the categories are. That’s clustering: finding hidden structure in data without a teacher.

Deep learning has made clustering far more powerful in recent years. But the neural networks driving these advances are enormous, often containing millions of parameters, and they operate as black boxes. You get an answer but can’t easily explain why the model grouped things the way it did. For high-stakes applications in science and medicine, that opacity is a real problem.

Researchers at Harvard and MIT developed MixMate (Mixture Model Auto-Encoders), a new architecture that takes a different approach. Rather than building a bigger, more opaque network, they derived a transparent clustering system directly from a mathematical model of how data is produced. The result clusters competitively with state-of-the-art methods while using hundreds to thousands of times fewer parameters.

Key Insight: MixMate grounds deep clustering in classical signal processing theory, where each cluster of data can be described by a small set of reusable building blocks. Every component maps to something interpretable, and the parameter count drops by orders of magnitude.

How It Works

The starting point isn’t a neural network. It’s a generative story about how images come to be. The team assumes that data in each cluster can be described by a sparse dictionary: a set of basic building blocks (called atoms) where any image is a sparse combination of just a few atoms. Think of describing a face using a handful of archetypal facial features rather than raw pixels.

For a dataset with K natural categories, MixMate posits K distinct dictionaries, one per cluster. The result is a probabilistic mixture of sparse dictionary models. Cluster membership is a hidden variable inferred from the data, and each image is generated by combining a few atoms from whichever dictionary governs its cluster. The sparse codes get a Laplace prior, a distribution sharply peaked at zero. Under this prior, the most probable code for an image typically has most of its coefficients exactly zero, so only a small number of atoms contribute to any given image.
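Written out, one way to formalize this generative story is shown below. The Gaussian observation noise with variance σ² and the symbols π_k (cluster prior), A_k (dictionary of cluster k) and λ (Laplace rate) are our notation and assumptions, not necessarily the paper's:

$$
k \sim \mathrm{Categorical}(\pi_1, \ldots, \pi_K), \qquad
p(\mathbf{z}) \propto \exp\!\left(-\lambda \lVert \mathbf{z} \rVert_1\right), \qquad
\mathbf{x} \mid \mathbf{z}, k \sim \mathcal{N}\!\left(\mathbf{A}_k \mathbf{z}, \sigma^2 \mathbf{I}\right)
$$

$$
-\log p(\mathbf{x}, \mathbf{z}, k) = \frac{1}{2\sigma^2} \lVert \mathbf{x} - \mathbf{A}_k \mathbf{z} \rVert_2^2 + \lambda \lVert \mathbf{z} \rVert_1 - \log \pi_k + \text{const}
$$

For a fixed cluster k, minimizing this over z is an ℓ₁-penalized least-squares problem (with effective penalty weight σ²λ after multiplying through by σ²). The −log π_k term only matters when clusters are compared against each other.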

Training uses the Expectation-Maximization (EM) algorithm, a standard technique for fitting probabilistic models when some variables are unobserved. Each iteration has two steps:

- E-Step (encoding): For each cluster k, estimate the sparse code that best explains a given data point using that cluster’s dictionary.

- M-Step (decoding): Use those codes to reconstruct the data and update the dictionary to minimize reconstruction error.
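The M-step can be sketched as a single gradient step on the reconstruction error of the points currently assigned to a cluster, followed by rescaling each atom to unit length. The function name `m_step`, the learning rate, and the unit-norm constraint are illustrative assumptions. Unit-norm atoms are a common convention in dictionary learning that stops the ℓ₁ penalty from being sidestepped by shrinking codes while growing atoms. The paper's actual update may differ.

```python
import math


def m_step(A, X, Z, lr=0.1):
    """One gradient step on sum_i 0.5 * ||x_i - A z_i||^2 with respect to A.

    A is the dictionary (n x m, list of rows); X and Z hold the data points
    assigned to this cluster and their sparse codes from the E-step.
    Hypothetical helper: the learning rate and the unit-norm rescaling of
    atoms are assumptions, not necessarily the paper's update rule.
    """
    n, m = len(A), len(A[0])
    G = [[0.0] * m for _ in range(n)]  # accumulates sum_i r_i z_i^T
    for x, z in zip(X, Z):
        recon = [sum(A[i][j] * z[j] for j in range(m)) for i in range(n)]  # decoder: A z
        r = [xi - ri for xi, ri in zip(x, recon)]  # reconstruction residual
        for i in range(n):
            for j in range(m):
                G[i][j] += r[i] * z[j]
    # The gradient of the loss is -G, so descending means adding lr * G.
    A_new = [[A[i][j] + lr * G[i][j] for j in range(m)] for i in range(n)]
    # Assumption: rescale every atom (column) to unit length.
    for j in range(m):
        norm = math.sqrt(sum(A_new[i][j] ** 2 for i in range(n))) or 1.0
        for i in range(n):
            A_new[i][j] /= norm
    return A_new
```

In a full training loop, each cluster's dictionary would be updated using only the points that the assignment step currently gives to that cluster.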

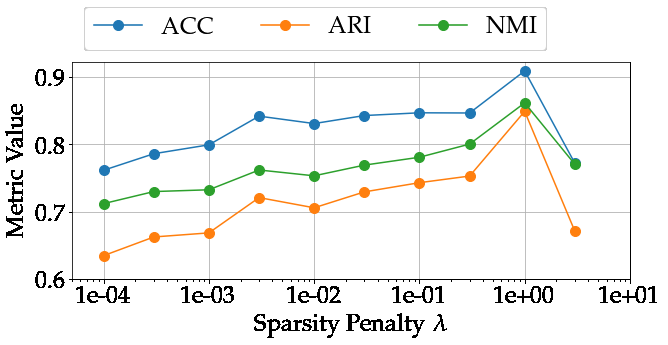

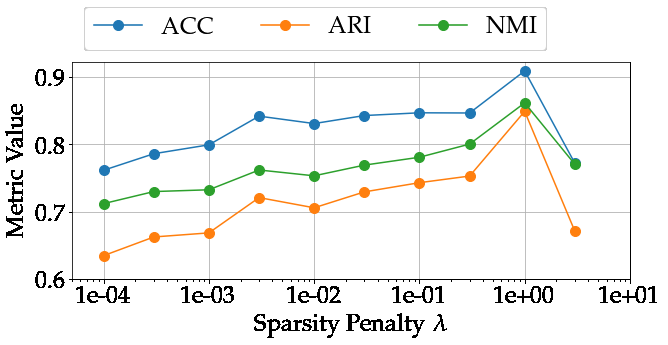

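The encoding step solves a sparse coding problem: minimize reconstruction error plus an ℓ₁ penalty that pushes most code values to zero. The researchers solve this with FISTA (Fast Iterative Shrinkage-Thresholding Algorithm), an efficient iterative method in which every step has a simple closed form: a gradient step on the reconstruction error, a soft-thresholding (shrinkage) operation that sets small values to zero, and a momentum update.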
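A minimal pure-Python sketch of FISTA for the encoding problem, minimizing ½‖x − Az‖² + λ‖z‖₁ over z, is shown below. The function names, the fixed iteration count, and the use of the squared Frobenius norm of A as a cheap upper bound on the Lipschitz constant are our assumptions, not code from the MixMate repository:

```python
import math


def matvec(A, z):
    """A @ z for a matrix stored as a list of rows."""
    return [sum(a * b for a, b in zip(row, z)) for row in A]


def rmatvec(A, r):
    """A^T @ r without forming the transpose."""
    return [sum(A[i][j] * r[i] for i in range(len(A))) for j in range(len(A[0]))]


def soft_threshold(v, t):
    """Closed-form proximal step for t * ||z||_1: shrink toward zero by t."""
    return [math.copysign(max(abs(a) - t, 0.0), a) for a in v]


def fista(A, x, lam, n_iter=100):
    """Approximately solve min_z 0.5 * ||x - A z||^2 + lam * ||z||_1."""
    # Assumption: the squared Frobenius norm is used as a cheap upper bound on
    # ||A||_2^2, the Lipschitz constant of the gradient of the data-fit term.
    lip = sum(a * a for row in A for a in row)
    z = [0.0] * len(A[0])  # current sparse code
    y = z[:]               # extrapolated (momentum) point
    t = 1.0
    for _ in range(n_iter):
        residual = [xi - ri for xi, ri in zip(x, matvec(A, y))]
        # Gradient step on the reconstruction error, evaluated at y.
        step = [yi + gi / lip for yi, gi in zip(y, rmatvec(A, residual))]
        z_next = soft_threshold(step, lam / lip)
        t_next = (1.0 + math.sqrt(1.0 + 4.0 * t * t)) / 2.0
        # Momentum: extrapolate along the direction of the latest update.
        y = [a + ((t - 1.0) / t_next) * (a - b) for a, b in zip(z_next, z)]
        z, t = z_next, t_next
    return z


z = fista([[1.0, 0.0], [0.0, 1.0]], [3.0, 0.2], lam=0.5)
print(z)  # ≈ [2.5, 0.0]
```

With an identity dictionary, the exact solution is x soft-thresholded by λ. The large entry shrinks from 3.0 to 2.5, and the small one is set exactly to zero.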

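Here’s where it clicks. Each FISTA iteration is a linear map (plus a momentum term that mixes in the previous iterate) followed by a shrinkage nonlinearity, which is the same form as a neural network layer. Running FISTA for L iterations is therefore mathematically equivalent to passing data through an L-layer neural network. The algorithm unfolds into a deep encoder, with the dictionary matrix as learnable weights.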
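To see the unrolling concretely, the sketch below rewrites each FISTA step as a network layer. Each layer is a linear map with weights W_e = Aᵀ/c and W_s = I − AᵀA/c (c being the Lipschitz bound), followed by soft-thresholding, which acts like a two-sided ReLU with bias λ/c. Both weight matrices are computed from the dictionary A, so A is the only learnable quantity. This sketch covers the forward pass only, and the function names are hypothetical. A trainable version would update A by backpropagating through these layers.

```python
import math


def soft_threshold(v, t):
    # Shrinkage nonlinearity: behaves like a two-sided ReLU with bias t.
    return [math.copysign(max(abs(a) - t, 0.0), a) for a in v]


def matvec(W, v):
    return [sum(w * a for w, a in zip(row, v)) for row in W]


def build_layer_weights(A):
    """Derive the encoder's weight matrices from the dictionary A (n x m)."""
    n, m = len(A), len(A[0])
    lip = sum(a * a for row in A for a in row)  # upper bound on ||A||_2^2
    W_e = [[A[i][j] / lip for i in range(n)] for j in range(m)]  # A^T / lip
    W_s = [[(1.0 if j == k else 0.0) - sum(A[i][j] * A[i][k] for i in range(n)) / lip
            for k in range(m)] for j in range(m)]                # I - A^T A / lip
    return W_e, W_s, lip


def unrolled_encoder(A, x, lam, n_layers):
    """Forward pass of a hypothetical n_layers-deep encoder; each layer is one FISTA step."""
    W_e, W_s, lip = build_layer_weights(A)
    bias = lam / lip
    Wx = matvec(W_e, x)  # input injection, reused by every layer
    z = [0.0] * len(A[0])
    y, t = z[:], 1.0
    for _ in range(n_layers):
        pre = [s + e for s, e in zip(matvec(W_s, y), Wx)]  # linear part of the layer
        z_next = soft_threshold(pre, bias)                  # nonlinearity
        t_next = (1.0 + math.sqrt(1.0 + 4.0 * t * t)) / 2.0
        # Momentum acts as a skip connection from the previous layer's output.
        y = [a + ((t - 1.0) / t_next) * (a - b) for a, b in zip(z_next, z)]
        z, t = z_next, t_next
    return z
```

With an identity dictionary, a single layer returns [1.25, 0.0] for x = [3.0, 0.2] and λ = 0.5. With enough layers, the output converges to the sparse code [2.5, 0.0]. Depth trades compute for a more exact solution.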

The full MixMate architecture runs K parallel encoders, one per cluster, each producing its own sparse code. Each encoder feeds a decoder (a linear multiplication by the dictionary) that reconstructs the input. An attention module then computes cluster assignment probabilities by comparing an “energy” across all K auto-encoders. That energy combines reconstruction error, code sparsity, and the cluster’s prior probability. The cluster with the lowest energy, the best balance of accurate reconstruction, compact code, and high prior probability, wins.
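The energy comparison can be sketched as follows. It assumes unit noise variance (σ = 1), a shared sparsity weight λ, and a softmax over negative energies with unit temperature for the assignment probabilities. These are our assumptions, and the attention module in the paper may scale or parameterize the energies differently.

```python
import math


def cluster_energy(x, A, z, lam, prior):
    """0.5 * ||x - A z||^2 + lam * ||z||_1 - log(prior), assuming sigma = 1."""
    recon = [sum(a * c for a, c in zip(row, z)) for row in A]  # decoder: A z
    sq_err = sum((xi - ri) ** 2 for xi, ri in zip(x, recon))
    return 0.5 * sq_err + lam * sum(abs(c) for c in z) - math.log(prior)


def assignment_probs(x, dictionaries, codes, lam, priors):
    """Assumption: softmax of negative energies gives the cluster probabilities."""
    energies = [cluster_energy(x, A, z, lam, p)
                for A, z, p in zip(dictionaries, codes, priors)]
    lowest = min(energies)  # subtract the minimum for numerical stability
    weights = [math.exp(-(e - lowest)) for e in energies]
    total = sum(weights)
    return [w / total for w in weights]


I2 = [[1.0, 0.0], [0.0, 1.0]]
# Cluster 0 reconstructs x perfectly at a small sparsity cost, so it gets more weight.
print(assignment_probs([1.0, 0.0], [I2, I2], [[1.0, 0.0], [0.0, 0.0]], lam=0.1, priors=[0.5, 0.5]))
```

A hard assignment simply takes the cluster with the highest probability, which is the same as taking the one with the lowest energy.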

One neat property: the encoder and decoder in each auto-encoder share the same dictionary matrix. The decoder multiplies by it, and the encoder uses its transpose. This is known as weight tying, and it isn’t a design choice imposed by the researchers. It drops out of the mathematics on its own, and it cuts the parameter count even further.
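To see what tying saves, the hypothetical count below compares K tied auto-encoders with an untied variant in which each encoder learns its own W_e and W_s (shared across layers, as in a LISTA-style network). It counts dictionary weights only. The sizes (10 clusters, 784-pixel images, 100 atoms) are illustrative and do not reproduce the paper's reported parameter counts.

```python
def dictionary_params(n_clusters, signal_dim, n_atoms, tied=True):
    """Count learnable weights in K parallel auto-encoders (hypothetical helper)."""
    decoder = signal_dim * n_atoms  # A_k: signal_dim x n_atoms
    if tied:
        encoder = 0  # encoder weights are derived from A_k (A_k^T and A_k^T A_k)
    else:
        # Assumption: a separate W_e (n_atoms x signal_dim) and W_s (n_atoms x n_atoms).
        encoder = n_atoms * signal_dim + n_atoms * n_atoms
    return n_clusters * (decoder + encoder)


print(dictionary_params(10, 784, 100, tied=True))   # 784000
print(dictionary_params(10, 784, 100, tied=False))  # 1668000
```

In this toy setting, tying roughly halves the count, because the encoder adds no weights beyond the dictionary itself.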

Why It Matters

On standard image clustering datasets, MixMate matches or approaches the performance of much larger networks while using two to three orders of magnitude fewer parameters. That’s 100 to 1,000 times fewer. This isn’t just an engineering win. It suggests the assumptions baked into the generative model are doing real computational work that would otherwise require brute-force scaling.

Interpretability matters especially in scientific applications. At IAIFI, researchers cluster astrophysical observations, particle physics events, and other complex signals. In all of these domains, understanding why a clustering decision was made is as important as the decision itself. A model in which every weight belongs to an inspectable dictionary atom is far easier to interrogate than a generic deep network.

MixMate can also handle incomplete data (images with missing pixels) without retraining, by adjusting the optimization problem at inference time. That kind of flexibility is useful in experimental settings where data is often noisy or partially observed.
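The article doesn't spell out the adjusted problem. One natural version, assumed here, multiplies the data-fit term by a binary mask M so that only observed pixels count: minimize ½‖M ⊙ (x − Az)‖² + λ‖z‖₁. The dictionary stays fixed, so nothing is retrained, and the missing pixels can then be filled in from the reconstruction Az. For brevity, the sketch uses plain ISTA (FISTA without momentum), and the function name is hypothetical.

```python
import math


def masked_sparse_code(A, x, mask, lam, n_iter=200):
    """ISTA on 0.5 * ||mask * (x - A z)||^2 + lam * ||z||_1 (assumed formulation)."""
    n, m = len(A), len(A[0])
    lip = sum(a * a for row in A for a in row)  # bound on ||A||_2^2; masking can only lower it
    z = [0.0] * m
    for _ in range(n_iter):
        recon = [sum(a * c for a, c in zip(row, z)) for row in A]
        # Residual only on observed entries: missing pixels contribute nothing.
        r = [mk * (xi - ri) for mk, xi, ri in zip(mask, x, recon)]
        grad = [sum(A[i][j] * r[i] for i in range(n)) for j in range(m)]  # A^T r
        step = [zj + gj / lip for zj, gj in zip(z, grad)]
        z = [math.copysign(max(abs(v) - lam / lip, 0.0), v) for v in step]
    return z


A = [[1.0], [1.0], [1.0]]           # a single atom spanning three pixels
x = [2.0, 2.0, 0.0]                 # third pixel is missing (placeholder value ignored)
z = masked_sparse_code(A, x, [1, 1, 0], lam=0.1)
filled = [row[0] * z[0] for row in A]
print(filled)  # every entry ≈ 1.95, including the missing pixel
```

The placeholder for a missing pixel must be a finite number such as 0.0. A NaN would survive multiplication by a zero mask and contaminate the result.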

The work points beyond clustering, too. If other probabilistic models of scientific data can be similarly “unfolded” into neural networks, the payoff is interpretable deep learning tools shaped by domain-specific assumptions, not repurposed general-purpose architectures.

Bottom Line: Grounding deep clustering in a principled generative model need not sacrifice performance. MixMate delivers both interpretability and a dramatic reduction in parameter count, a combination well-suited for scientific data analysis.

IAIFI Research Highlights

MixMate uses sparse dictionary learning to derive interpretable neural network architectures, linking classical signal processing theory with modern deep learning.

Model-based deep learning can match the clustering performance of black-box approaches with orders of magnitude fewer parameters, evidence that principled generative assumptions can substitute for sheer network size.

The architecture's interpretable cluster representations and natural handling of missing observations make it a strong fit for scientific domains like astrophysics and particle physics, where both properties matter.

Future directions include extending MixMate to larger-scale scientific datasets and more complex generative models. Code is available at [github.com/al5250/mixmate](https://github.com/al5250/mixmate). The paper ([arXiv:2110.04683](https://arxiv.org/abs/2110.04683)) was authored by Alexander Lin, Andrew H. Song, and Demba Ba from Harvard and MIT.
