Mapping Machine-Learned Physics into a Human-Readable Space

Authors

Taylor Faucett, Jesse Thaler, Daniel Whiteson

Abstract

We present a technique for translating a black-box machine-learned classifier operating on a high-dimensional input space into a small set of human-interpretable observables that can be combined to make the same classification decisions. We iteratively select these observables from a large space of high-level discriminants by finding those with the highest decision similarity relative to the black box, quantified via a metric we introduce that evaluates the relative ordering of pairs of inputs. Successive iterations focus only on the subset of input pairs that are misordered by the current set of observables. This method enables simplification of the machine-learning strategy, interpretation of the results in terms of well-understood physical concepts, validation of the physical model, and the potential for new insights into the nature of the problem itself. As a demonstration, we apply our approach to the benchmark task of jet classification in collider physics, where a convolutional neural network acting on calorimeter jet images outperforms a set of six well-known jet substructure observables. Our method maps the convolutional neural network into a set of observables called energy flow polynomials, and it closes the performance gap by identifying a class of observables with an interesting physical interpretation that has been previously overlooked in the jet substructure literature.

Concepts

The Big Picture

Imagine hiring a chess grandmaster to improve your game, but instead of explaining strategy, they just silently win every match. Their results are undeniable. You’ve learned nothing you can use yourself.

This is the situation physicists face when deploying deep neural networks to analyze particle collision data. The machine wins. The physicists scratch their heads.

At the Large Hadron Collider, neural networks classify jets (sprays of particles produced when quarks and gluons fly apart after high-energy collisions) with impressive accuracy. But that accuracy comes with a frustrating asterisk: nobody knows exactly how the machine does it. The network ingests thousands of detector readings and produces a classification through layers of opaque mathematics. Physicists need to validate that the machine is using real physical information, not artifacts of the training data. They need to estimate systematic uncertainties. They need to understand the physics.

Taylor Faucett, Jesse Thaler, and Daniel Whiteson built a systematic method to crack open that black box, not by peering inside, but by finding simple, human-readable quantities that make the same decisions as the neural network.

Key Insight: Rather than interpreting the neural network directly, the researchers use it as a guide to discover which human-understandable physical quantities capture the same information, closing the performance gap and revealing physics the community had missed.

How It Works

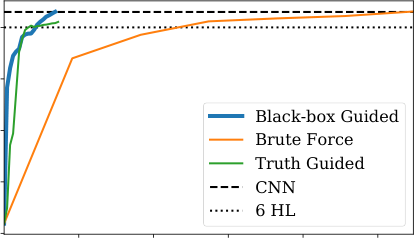

The core challenge is defining what “makes the same decisions” means mathematically. The team introduces a metric called Average Decision Ordering (ADO), inspired by classical rank-correlation statistics. Instead of asking whether two classifiers assign the same numerical score to each event, ADO asks a subtler question: for any pair of events, do the two classifiers agree on which one is more signal-like? This pairwise comparison measures agreement in ranking order, independent of absolute scores.
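A minimal sketch of the pairwise comparison in Python with NumPy. Two choices here are assumptions for illustration, not taken from the text above: pairs are formed by matching each signal event with each background event, and a tie in either classifier counts as half-agreement. The function names `decision_ordering` and `average_decision_ordering` are hypothetical.

```python
import numpy as np

def decision_ordering(f_sig, f_bkg, g_sig, g_bkg):
    """Pairwise decision ordering between classifiers f and g.

    Returns an (n_sig, n_bkg) array with 1 where f and g agree on which event
    of the (signal, background) pair is more signal-like, 0 where they
    disagree, and 0.5 where either classifier ties (an assumed convention).
    """
    df = f_sig[:, None] - f_bkg[None, :]
    dg = g_sig[:, None] - g_bkg[None, :]
    return np.heaviside(df * dg, 0.5)

def average_decision_ordering(f_sig, f_bkg, g_sig, g_bkg):
    """Fraction of (signal, background) pairs ordered the same way by f and g."""
    return float(decision_ordering(f_sig, f_bkg, g_sig, g_bkg).mean())

# Usage: a strictly increasing transform of g leaves the ADO unchanged.
f_sig, f_bkg = np.array([0.9, 0.4]), np.array([0.2, 0.5])
g_sig, g_bkg = np.array([0.9, 0.6]), np.array([0.2, 0.5])
print(average_decision_ordering(f_sig, f_bkg, g_sig, g_bkg))                  # 0.75
print(average_decision_ordering(f_sig, f_bkg, np.exp(g_sig), np.exp(g_bkg)))  # 0.75
```

Because only the sign of each pairwise difference enters, the result depends on the two classifiers' rankings rather than their score scales, which is what allows a CNN output to be compared with a physics observable measured in entirely different units.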

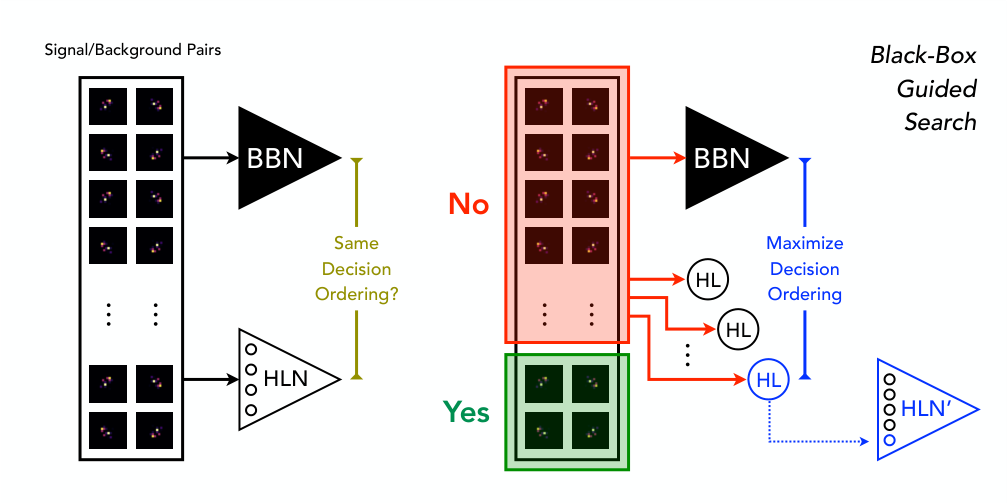

Using ADO as their metric, the researchers build an iterative search algorithm:

- Start with a large library of candidate high-level physics observables

- Find the single observable with the highest ADO relative to the neural network

- Add it to the working set and combine the selected observables into a new classifier

- Identify the event pairs that the current combination still misorders relative to the neural network

- Repeat, focusing only on those remaining misordered pairs

This focus on “hard cases” is what makes the search efficient. The algorithm doesn’t waste iterations on pairs already handled correctly; it drives directly toward the gaps.
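The loop above can be sketched as follows. Several choices are assumptions for illustration, not the authors' implementation: the candidate library is a dictionary of precomputed observable values on signal and background events, an observable that is anti-correlated with the guide counts as useful because its sign can be flipped, tied pairs are treated as still misordered, and the selected observables are combined with a Fisher linear discriminant as a simple stand-in for a trained combiner. The names `guided_search` and `fisher_combine` are hypothetical.

```python
import numpy as np

def decision_ordering(f_sig, f_bkg, g_sig, g_bkg):
    # 1 = same ordering of the (signal, background) pair, 0 = opposite, 0.5 = tie.
    df = f_sig[:, None] - f_bkg[None, :]
    dg = g_sig[:, None] - g_bkg[None, :]
    return np.heaviside(df * dg, 0.5)

def fisher_combine(sig_feats, bkg_feats):
    """Project (n_events, k) feature arrays onto the Fisher discriminant direction."""
    k = sig_feats.shape[1]
    scatter = np.atleast_2d(np.cov(sig_feats, rowvar=False) + np.cov(bkg_feats, rowvar=False))
    w = np.linalg.solve(scatter + 1e-9 * np.eye(k), sig_feats.mean(0) - bkg_feats.mean(0))
    return sig_feats @ w, bkg_feats @ w

def guided_search(library, bb_sig, bb_bkg, n_iter):
    """Greedily pick observables that reproduce the black box's pair orderings.

    library: dict name -> (values on signal events, values on background events)
    bb_sig, bb_bkg: black-box scores on the same events
    """
    selected = []
    # First iteration considers every (signal, background) pair.
    focus = np.ones((len(bb_sig), len(bb_bkg)), dtype=bool)
    ado = 0.0
    for _ in range(n_iter):
        best_name, best_score = None, -1.0
        for name, (o_sig, o_bkg) in library.items():
            if name in selected:
                continue
            # ADO restricted to the pairs the current set still gets wrong.
            score = decision_ordering(o_sig, o_bkg, bb_sig, bb_bkg)[focus].mean()
            score = max(score, 1.0 - score)  # flipping the sign turns 1 - ADO into ADO
            if score > best_score:
                best_name, best_score = name, score
        if best_name is None:
            break
        selected.append(best_name)
        # Combine all selected observables into a single classifier.
        sig_feats = np.column_stack([library[n][0] for n in selected])
        bkg_feats = np.column_stack([library[n][1] for n in selected])
        c_sig, c_bkg = fisher_combine(sig_feats, bkg_feats)
        do = decision_ordering(c_sig, c_bkg, bb_sig, bb_bkg)
        ado = float(do.mean())
        # The next iteration looks only at misordered (or tied) pairs.
        focus = do < 1.0
        if not focus.any():
            break
    return selected, ado

# Usage: a toy "black box" that secretly scores events by x1 + x2.
rng = np.random.default_rng(0)
n = 300
x1_s, x2_s = rng.normal(1.0, 1.0, n), rng.normal(1.0, 1.0, n)
x1_b, x2_b = rng.normal(0.0, 1.0, n), rng.normal(0.0, 1.0, n)
library = {
    "x1": (x1_s, x1_b),
    "x2": (x2_s, x2_b),
    "noise": (rng.normal(size=n), rng.normal(size=n)),
}
selected, ado = guided_search(library, x1_s + x2_s, x1_b + x2_b, n_iter=2)
print(selected, round(ado, 3))
```

In the toy example, once one of `x1` or `x2` is chosen, every pair it misorders relative to `x1 + x2` is one where the other feature dominates the difference, so the restricted ADO singles out the missing ingredient rather than the uninformative `noise` column.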

The case study is boosted W boson classification: distinguishing jets initiated by W bosons (which decay into two quarks, producing a two-pronged spray) from jets initiated by ordinary quarks and gluons, which typically produce a single-pronged spray. The black box is a convolutional neural network trained on 37×37-pixel calorimeter images. The human-readable candidates are Energy Flow Polynomials (EFPs), which form a complete linear basis for infrared- and collinear-safe jet observables.
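To make the EFP idea concrete: each EFP corresponds to a multigraph, with one sum over particles per vertex, a factor of that particle's energy fraction for each vertex, and a factor of the pairwise angle raised to a power β for each edge. The brute-force evaluator below is a sketch under stated assumptions: energy fractions are transverse-momentum fractions, angles are distances in the rapidity-azimuth plane, and β defaults to 1. It is not the optimized algorithm used in practice (the `energyflow` Python package provides one), and `efp` and `pairwise_delta_r` are hypothetical names.

```python
import numpy as np
from string import ascii_lowercase

def pairwise_delta_r(y, phi):
    """Angular distances Delta R_ij in the (rapidity, azimuth) plane, with phi wrapped."""
    dy = y[:, None] - y[None, :]
    dphi = np.abs(phi[:, None] - phi[None, :]) % (2 * np.pi)
    dphi = np.minimum(dphi, 2 * np.pi - dphi)
    return np.sqrt(dy**2 + dphi**2)

def efp(pt, y, phi, edges, n_vertices, beta=1.0):
    """Brute-force energy flow polynomial for a multigraph.

    edges: list of (a, b) vertex pairs; repeating a pair makes a multigraph.
    Computes the sum over particle indices i_1..i_N of
        z_{i_1} ... z_{i_N} * prod over edges (a, b) of theta_{i_a i_b}**beta.
    Cost grows as (number of particles)**n_vertices, so keep graphs small.
    """
    z = pt / pt.sum()                       # energy (pT) fractions summing to 1
    theta_beta = pairwise_delta_r(y, phi) ** beta
    letters = ascii_lowercase[:n_vertices]  # one einsum index per vertex
    subscripts = list(letters)
    operands = [z] * n_vertices
    for a, b in edges:
        subscripts.append(letters[a] + letters[b])
        operands.append(theta_beta)
    return float(np.einsum(",".join(subscripts) + "->", *operands))

# Usage: a "dumbbell" (single edge) and a "triangle" graph on a toy 3-particle jet.
pt = np.array([50.0, 30.0, 20.0])
y = np.array([0.0, 0.1, -0.1])
phi = np.array([0.0, 0.1, 0.05])
dumbbell = efp(pt, y, phi, edges=[(0, 1)], n_vertices=2)
triangle = efp(pt, y, phi, edges=[(0, 1), (1, 2), (2, 0)], n_vertices=3)
print(dumbbell, triangle)
```

Any term in which two vertices land on the same particle vanishes because that particle's angle to itself is zero, so the triangle graph only receives contributions from triples of distinct, mutually separated particles. That is why a triangle EFP probes three-particle angular structure.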

The team applies their method in two modes. In supplementation mode, the algorithm starts from six well-known jet substructure observables and searches for what’s missing. It quickly identifies a seventh: a specific EFP with a “triangle” graph topology that the jet substructure community had not previously considered for this task. Adding it nearly closes the performance gap between the human-engineered approach and the CNN. Physically, this EFP encodes a three-particle angular correlation matching the geometry expected from a W boson’s two-pronged decay.

In from-scratch mode, the algorithm starts from an empty set of observables and, guided only by the CNN trained on raw jet images, iteratively assembles a set of EFPs that matches CNN performance within statistical uncertainty. The selected EFPs tell a coherent physical story about what distinguishes W jets from quark and gluon (QCD) jets.

The black-box guided search also far outperforms both brute-force search and “truth-label guiding,” where the known particle labels serve as the guide instead of the neural network. The black box turns out to be a better teacher than the ground truth itself, because it encodes decision boundaries tuned to the actual structure of the data, not just the labels.
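One way to see why the choice of guide matters: if the truth label plays the role of the reference classifier, every (signal, background) pair has a fixed "correct" order, so an observable's ADO against the truth reduces to the Mann-Whitney form of the ROC AUC, and the pairs targeted next are simply those where a signal event scores at or below a background event. With a black-box guide, the reference order for each pair is instead the network's own ranking, which can place a signal-like background event above a background-like signal event. The sketch assumes pairs are formed from one signal and one background event, with ties counted as 0.5; `ado_vs_truth` and `truth_misordered_pairs` are hypothetical names.

```python
import numpy as np

def ado_vs_truth(o_sig, o_bkg):
    """ADO of an observable against truth labels (signal = 1, background = 0).

    The label difference is +1 for every (signal, background) pair, so only the
    sign of the observable's difference matters; ties count as 0.5. This is
    the Mann-Whitney estimate of the ROC AUC.
    """
    return float(np.heaviside(o_sig[:, None] - o_bkg[None, :], 0.5).mean())

def truth_misordered_pairs(o_sig, o_bkg):
    """Boolean (n_sig, n_bkg) mask of pairs a truth-guided search would target next."""
    return o_sig[:, None] <= o_bkg[None, :]

o_sig, o_bkg = np.array([0.9, 0.4]), np.array([0.2, 0.5])
print(ado_vs_truth(o_sig, o_bkg))                       # 0.75
print(int(truth_misordered_pairs(o_sig, o_bkg).sum()))  # 1
```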

Why It Matters

Neural networks and interpretability are in tension throughout particle physics. The networks win the performance contest, but physics requires more than winning.

Results must be validated against physical models. Systematic uncertainties must be estimated and reported. Theoretical predictions must be tested. None of that is straightforward when your classifier is layers of convolutions.

Faucett, Thaler, and Whiteson’s method avoids the tradeoff entirely. Train the powerful network first and let it find the optimal decision boundary. Then use it as a guide to find the simplest human-readable approximation of that boundary. You end up with a compact set of physically interpretable observables that can be individually calibrated, theoretically predicted, and experimentally validated, all while capturing essentially the same discriminating power as the original machine.

The same iterative search could apply wherever a black-box classifier operates on high-dimensional data but a human-readable summary would be more useful, as long as a good library of candidate observables exists.

Bottom Line: By treating a neural network as a guide rather than an oracle, this work turns black-box classification into a tool for discovery, uncovering a jet substructure observable that physicists had overlooked for years.

IAIFI Research Highlights

This work sits at the intersection of machine learning and experimental particle physics. Average Decision Ordering gives physicists a concrete way to extract interpretable observables from opaque neural network classifiers trained on collider data.

The black-box guided search is a general interpretability technique: it can approximate a high-dimensional classifier with a compact, human-readable model at little cost in performance, provided a sufficiently rich library of candidate observables is available. The approach applies well beyond particle physics.

Applied to jet classification at colliders, the method recovered essentially all the discriminating power of a CNN while identifying a previously overlooked three-particle angular correlation, pointing toward discriminating information that standard jet substructure observables had missed.

Future work could extend this framework to more complex tasks such as full event reconstruction or flavor tagging, and to larger EFP libraries; the full paper is available at [arXiv:2010.11998](https://arxiv.org/abs/2010.11998).