Machine Learning for Quantum-Enhanced Gravitational-Wave Observatories

Authors

Chris Whittle, Ge Yang, Matthew Evans, Lisa Barsotti

Abstract

Machine learning has become an effective tool for processing the extensive data sets produced by large physics experiments. Gravitational-wave detectors are now listening to the universe with quantum-enhanced sensitivity, accomplished with the injection of squeezed vacuum states. Squeezed state preparation and injection is operationally complicated, as well as highly sensitive to environmental fluctuations and variations in the interferometer state. Achieving and maintaining optimal squeezing levels is a challenging problem and will require development of new techniques to reach the lofty targets set by design goals for future observing runs and next-generation detectors. We use machine learning techniques to predict the squeezing level during the third observing run of the Laser Interferometer Gravitational-Wave Observatory (LIGO) based on auxiliary data streams, and offer interpretations of our models to identify and quantify salient sources of squeezing degradation. The development of these techniques lays the groundwork for future efforts to optimize squeezed state injection in gravitational-wave detectors, with the goal of enabling closed-loop control of the squeezer subsystem by an agent based on machine learning.

The Big Picture

Imagine trying to hear a whisper from a billion light-years away through a roar of noise. That’s what LIGO does every time it listens for gravitational waves, the faint ripples in spacetime produced when black holes collide or neutron stars merge. Catching those whispers means operating at the very edge of what physics permits.

One of LIGO’s most powerful tools is quantum squeezing. Quantum mechanics places a fundamental noise floor on any measurement, a limit you can’t beat by building better equipment alone. Squeezing finds a loophole: by deliberately making one aspect of a light beam noisier, you can make another aspect quieter. LIGO cares about the quiet one.

During LIGO’s third observing run (O3), squeezing cut quantum noise by up to 3.2 dB. That’s a real gain in a world where every fraction of a decibel matters. But the squeezer is finicky. Keeping it at peak performance over months-long observing runs is still an open problem.
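
To put those decibel figures in context, here is a small conversion sketch. It assumes the usual convention that squeezing in dB is minus ten times the base-10 logarithm of the ratio of squeezed to unsqueezed noise power. The function names are illustrative and not taken from the paper.

```python
def remaining_noise_power(squeezing_db: float) -> float:
    """Fraction of the unsqueezed quantum noise power left after squeezing."""
    return 10 ** (-squeezing_db / 10)


def remaining_noise_amplitude(squeezing_db: float) -> float:
    """The same reduction expressed in amplitude (square root of power)."""
    return 10 ** (-squeezing_db / 20)


# Squeezing levels quoted in this article for LIGO Livingston during O3.
for label, level_db in (("peak", 3.2), ("average", 2.23)):
    power = remaining_noise_power(level_db)
    amplitude = remaining_noise_amplitude(level_db)
    print(f"{label}: {level_db} dB -> power x{power:.3f}, amplitude x{amplitude:.3f}")
```

Under that convention, 3.2 dB of squeezing leaves roughly 48% of the quantum noise power, or about 69% of the noise amplitude.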

A team from MIT and IAIFI turned to machine learning, training models to predict squeezing performance from environmental sensor data. The long-term goal: AI systems that can automatically tune these quantum instruments in real time.

Key Insight: Machine learning models trained on historical LIGO data can accurately predict how well the quantum squeezer is performing at any given moment and identify which environmental factors are degrading it. That prediction capability is what you need before you can build AI-driven, closed-loop control of quantum noise reduction.

How It Works

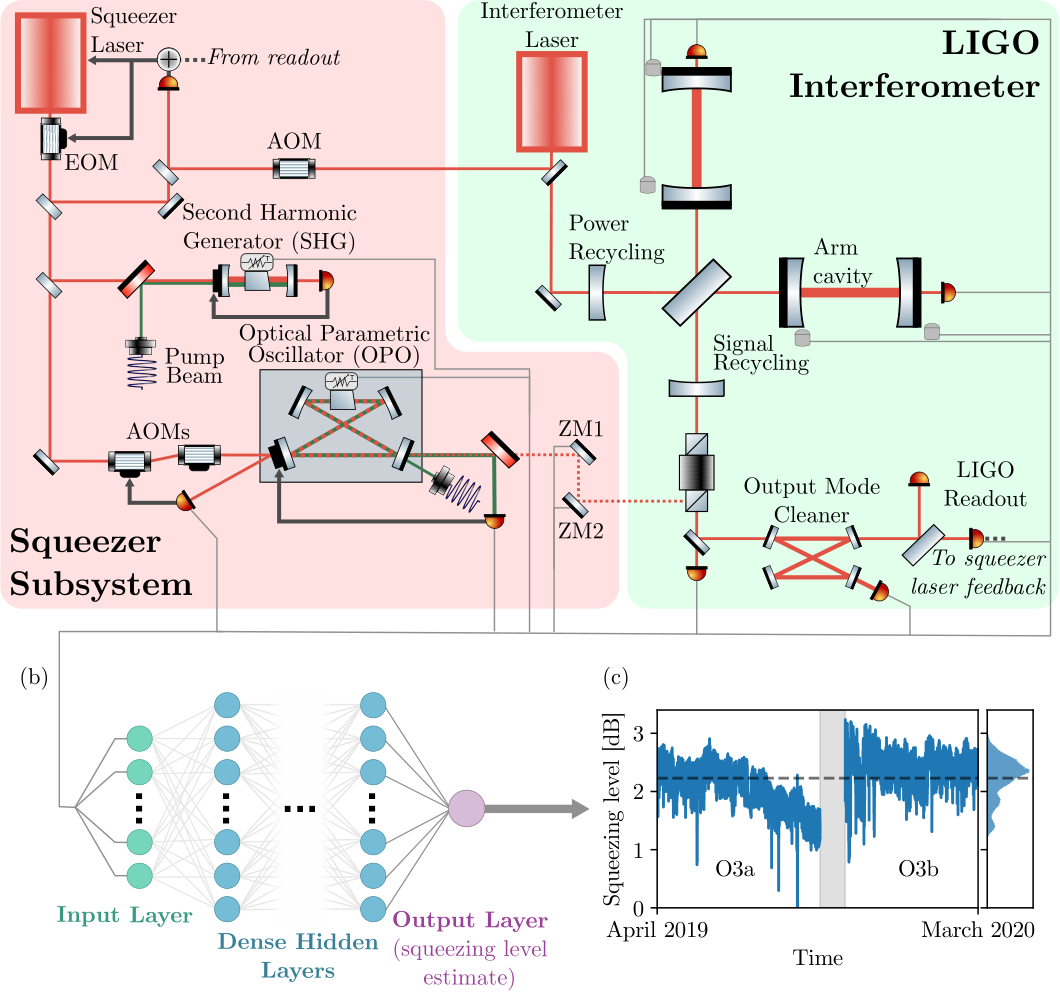

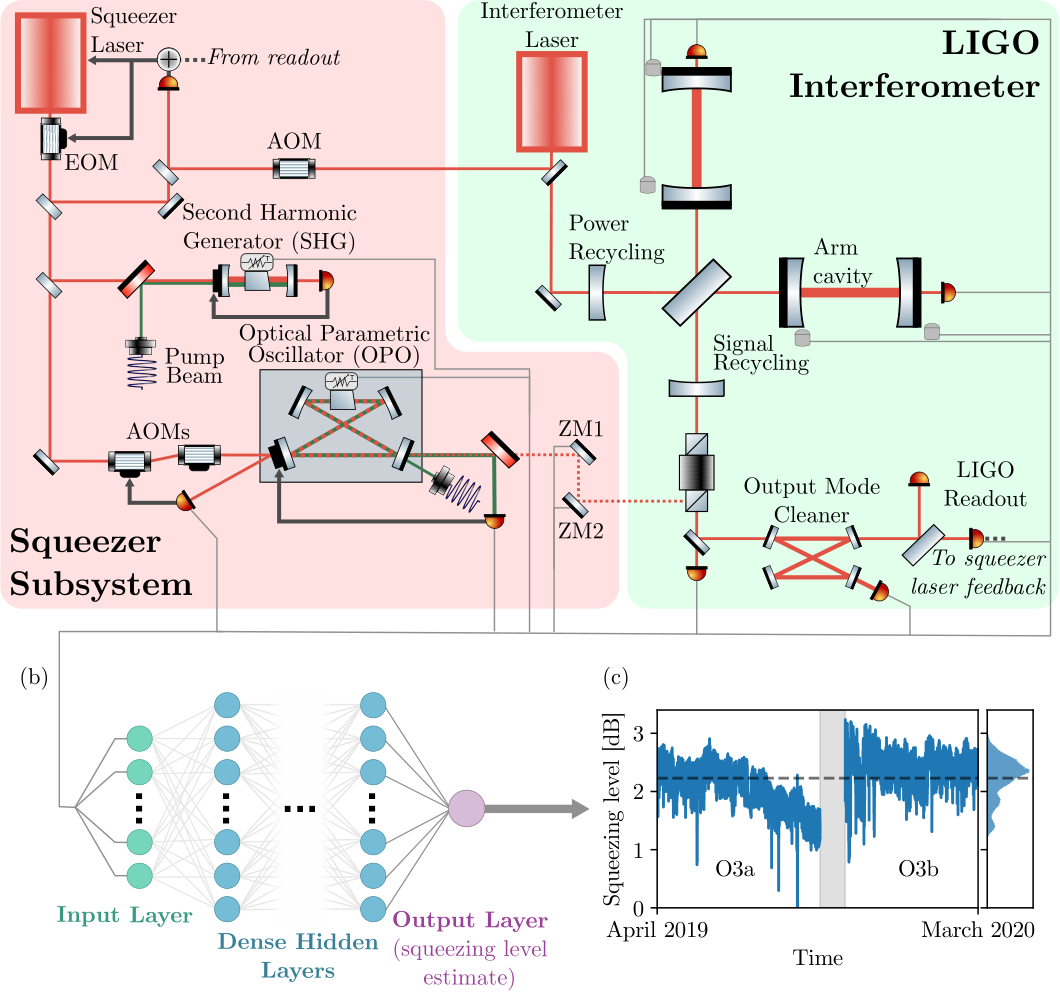

LIGO’s squeezed light comes from an optical parametric oscillator (OPO), an optical cavity built around a nonlinear crystal that converts pump light into pairs of photons with correlated quantum properties. The squeezing level that actually reaches the interferometer depends on many interacting factors: crystal temperature, pump power, mirror alignment, ground motion, thermal drifts, and dozens more.

Over O3, the Livingston detector averaged only 2.23 dB of squeezing, nearly 1 dB below peak, with a standard deviation of 0.36 dB. For next-generation detectors targeting 10 dB, that kind of variability would be devastating.

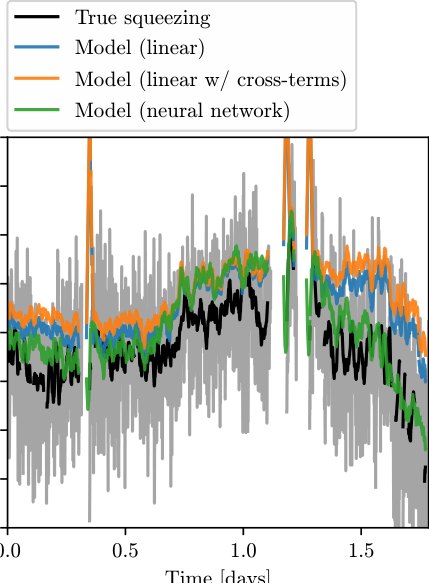

The researchers framed this as a prediction problem. They trained neural networks on O3 data, using auxiliary channels (sensor streams monitoring the detector’s physical environment) as inputs to predict squeezing level.
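
As a rough illustration of the prediction setup, the sketch below trains a tiny one-hidden-layer network on synthetic data. Everything here is an assumption for demonstration: the three "channels", the formula generating the squeezing level, the network size, and the learning rate are invented, and the paper's actual architecture and data are not reproduced.

```python
import math
import random


def make_dataset(n_samples, rng):
    """Synthetic stand-in for (auxiliary channels -> squeezing level in dB)."""
    data = []
    for _ in range(n_samples):
        x = [rng.uniform(-1.0, 1.0) for _ in range(3)]  # 3 hypothetical normalized channels
        squeezing_db = 2.2 - 0.3 * x[0] ** 2 - 0.2 * x[1] + rng.gauss(0.0, 0.02)
        data.append((x, squeezing_db))
    return data


def init_mlp(n_in, n_hidden, rng):
    """Small random weights for a network with one tanh hidden layer."""
    return {
        "w1": [[rng.gauss(0, 0.5) for _ in range(n_in)] for _ in range(n_hidden)],
        "b1": [0.0] * n_hidden,
        "w2": [rng.gauss(0, 0.5) for _ in range(n_hidden)],
        "b2": 0.0,
    }


def forward(params, x):
    """Return the prediction and the hidden activations (needed for backprop)."""
    hidden = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(params["w1"], params["b1"])]
    y = sum(w * h for w, h in zip(params["w2"], hidden)) + params["b2"]
    return y, hidden


def sgd_step(params, x, target, lr):
    """One stochastic gradient step on the squared error 0.5 * (y - target)^2."""
    y, hidden = forward(params, x)
    err = y - target
    for j, h in enumerate(hidden):
        grad_pre = err * params["w2"][j] * (1.0 - h * h)  # gradient through tanh
        params["w2"][j] -= lr * err * h
        params["b1"][j] -= lr * grad_pre
        for i, xi in enumerate(x):
            params["w1"][j][i] -= lr * grad_pre * xi
    params["b2"] -= lr * err


def mse(params, data):
    return sum((forward(params, x)[0] - t) ** 2 for x, t in data) / len(data)


rng = random.Random(0)
train, held_out = make_dataset(300, rng), make_dataset(100, rng)
params = init_mlp(3, 6, rng)
mse_before = mse(params, held_out)
for epoch in range(100):
    rng.shuffle(train)
    for x, target in train:
        sgd_step(params, x, target, lr=0.05)
mse_after = mse(params, held_out)
print(f"held-out MSE before: {mse_before:.4f}, after: {mse_after:.4f}")
```

Evaluating on held-out samples rather than the training set is the important habit here: a model meant to diagnose a detector has to generalize to stretches of data it was not fitted on.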

They didn’t pick the inputs by hand. Instead, a genetic algorithm, a search strategy modeled on natural selection, sifted through the available channels. Candidate input combinations competed against each other, and only the most informative survived. The process automatically identified which channels carry the most signal about squeezing performance.
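
A minimal sketch of genetic-algorithm feature selection follows. Each candidate is a bitmask over channels, and the population evolves by tournament selection, uniform crossover, and bit-flip mutation. The fitness function is a deliberately cheap stand-in (absolute correlation with the target minus a per-channel cost). A real pipeline would presumably score each mask by training and validating a model on the selected channels. The data, channel count, and all hyperparameters are invented for illustration.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
    vx = sum((a - mx) ** 2 for a in xs)
    vy = sum((b - my) ** 2 for b in ys)
    return cov / (vx * vy) ** 0.5


rng = random.Random(1)
N_CHANNELS, N_SAMPLES = 8, 500
# Synthetic channels: only 0, 3 and 5 actually drive the target (an assumption).
channels = [[rng.uniform(-1, 1) for _ in range(N_SAMPLES)] for _ in range(N_CHANNELS)]
target = [channels[0][t] - 0.8 * channels[3][t] + 0.5 * channels[5][t] + rng.gauss(0, 0.1)
          for t in range(N_SAMPLES)]
relevance = [abs(pearson(ch, target)) for ch in channels]


def fitness(mask):
    """Stand-in score: reward informative channels, charge a cost per channel used."""
    return sum(r for r, bit in zip(relevance, mask) if bit) - 0.2 * sum(mask)


def tournament(population, k=3):
    """Pick the fittest of k randomly drawn candidates."""
    return max(rng.sample(population, k), key=fitness)


def crossover(a, b):
    """Uniform crossover: each bit comes from one parent at random."""
    return [rng.choice(pair) for pair in zip(a, b)]


def mutate(mask, rate=0.1):
    """Flip each bit independently with the given probability."""
    return [1 - bit if rng.random() < rate else bit for bit in mask]


population = [[rng.randint(0, 1) for _ in range(N_CHANNELS)] for _ in range(30)]
for generation in range(40):
    elite = max(population, key=fitness)  # elitism: the best candidate always survives
    children = [mutate(crossover(tournament(population), tournament(population)))
                for _ in range(len(population) - 1)]
    population = [elite] + children

best = max(population, key=fitness)
selected = [i for i, bit in enumerate(best) if bit]
print("selected channels:", selected)
```

The per-channel cost in the fitness plays the role of a parsimony pressure: without it, adding an uninformative channel would never hurt, and the search would have no reason to drop it.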

The workflow breaks into three connected stages:

- Data curation: pulling relevant auxiliary channels from the O3 dataset at LIGO Livingston Observatory

- Model training: fitting neural networks to predict squeezing level, with genetic algorithm-driven feature selection

- Interpretation: sensitivity analysis to rank and quantify the sources of squeezing degradation

The models weren’t black boxes. The team ran sensitivity analysis, systematically varying each input to measure its effect on the predicted output. That turned the model from a predictor into a diagnostic tool: it can tell engineers what’s degrading squeezing right now and by how much.
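
One simple way to run this kind of sensitivity analysis is a finite-difference scan: nudge each input up and down around an operating point and record how much the prediction moves. The sketch below assumes a hypothetical analytic function standing in for a trained model, with made-up channel names. It is not the paper's exact procedure.

```python
def predict_squeezing_db(x):
    """Hypothetical stand-in for a trained model; inputs are normalized channels."""
    return 2.2 - 0.3 * x["chan_a"] ** 2 - 0.2 * x["chan_b"] + 0.0 * x["chan_c"]


def sensitivities(model, operating_point, step=1e-3):
    """Central finite-difference slope of the model output for each input."""
    result = {}
    for name in operating_point:
        up = dict(operating_point)
        down = dict(operating_point)
        up[name] += step
        down[name] -= step
        result[name] = (model(up) - model(down)) / (2 * step)
    return result


operating_point = {"chan_a": 0.5, "chan_b": 0.0, "chan_c": 0.0}
slopes = sensitivities(predict_squeezing_db, operating_point)

# Rank inputs by how strongly the predicted squeezing responds to them.
ranking = sorted(slopes, key=lambda name: abs(slopes[name]), reverse=True)
for name in ranking:
    print(f"{name}: {slopes[name]:+.3f} dB per unit change")
```

Because the slopes are local, the ranking depends on the chosen operating point. In this toy model `chan_a` matters only when it sits away from zero, which is why a diagnostic tool needs to evaluate sensitivities at the detector's current state rather than once and for all.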

Why It Matters

Future detectors like Einstein Telescope and Cosmic Explorer will need up to 10 dB of squeezing. The light-loss fluctuations behind O3’s variability, extrapolated to a 10 dB source, would drag performance well below target. That isn’t a minor inconvenience. It’s a barrier to the science these detectors exist to do.
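
The effect of optical loss can be sketched with the standard single-mode loss model, in which a fraction of the squeezed light is replaced by ordinary vacuum noise. The function name and the loss values below are illustrative assumptions rather than figures from the paper, and the model ignores other degradation mechanisms such as phase noise.

```python
import math


def observed_squeezing_db(injected_db: float, loss: float) -> float:
    """Squeezing left after a fractional power loss (vacuum variance normalized to 1)."""
    if not 0.0 <= loss <= 1.0:
        raise ValueError("loss must be a fraction between 0 and 1")
    injected_variance = 10 ** (-injected_db / 10)
    # Lost light is replaced by unsqueezed vacuum, which has variance 1.
    observed_variance = (1.0 - loss) * injected_variance + loss
    return -10 * math.log10(observed_variance)


# Illustrative loss values (assumptions) applied to a 10 dB source.
for loss in (0.05, 0.10, 0.20):
    print(f"loss {loss:.0%}: {observed_squeezing_db(10.0, loss):.2f} dB observed")
```

In this model, 10% loss caps a 10 dB source at about 7.2 dB, and the penalty grows with the injected level because the vacuum contribution becomes a larger share of the total noise.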

The natural next step is a self-correcting AI control system for quantum instruments. This paper handles the sensing side: predicting squeezing from auxiliary data. What remains is actuation, where the model doesn’t just watch but intervenes, adjusting squeezer parameters to push performance back toward optimum.

That’s a harder problem. Real-time control has physical consequences, and the stakes are high. But you can’t close the loop without first knowing where the degradation is coming from. Squeezed light is used across quantum sensing more broadly, and the challenge of maintaining quantum performance under real-world conditions reaches well beyond gravitational-wave detectors.

Bottom Line: By training neural networks to predict LIGO’s quantum squeezing performance from environmental sensors, this team built a diagnostic AI that pinpoints the sources of squeezing degradation. It is the sensing half of the AI-controlled quantum instruments that next-generation detectors will need.

IAIFI Research Highlights

This is quantum optics engineering meeting modern machine learning. The team applied neural networks and genetic algorithms to predict and diagnose the performance of one of the most sensitive quantum instruments ever built.

Neural network prediction, genetic algorithm-based feature selection, and post-hoc sensitivity analysis work together here as a scientific diagnostic tool, not just a black-box predictor. It's a case study in making ML interpretable for high-stakes experimental physics.

Identifying and quantifying the dominant sources of squeezing degradation in LIGO's O3 data feeds directly into the design of next-generation gravitational-wave observatories, where squeezing targets reach up to 10 dB.

The authors see this as the sensing half of a future closed-loop control system, with actuation on the squeezer subsystem as the next step. The full paper is available at [arXiv:2305.13780](https://arxiv.org/abs/2305.13780).

Original Paper Details

Machine Learning for Quantum-Enhanced Gravitational-Wave Observatories

2305.13780

Chris Whittle, Ge Yang, Matthew Evans, Lisa Barsotti
