LtU-ILI: An All-in-One Framework for Implicit Inference in Astrophysics and Cosmology

Authors

Matthew Ho, Deaglan J. Bartlett, Nicolas Chartier, Carolina Cuesta-Lazaro, Simon Ding, Axel Lapel, Pablo Lemos, Christopher C. Lovell, T. Lucas Makinen, Chirag Modi, Viraj Pandya, Shivam Pandey, Lucia A. Perez, Benjamin Wandelt, Greg L. Bryan

Abstract

This paper presents the Learning the Universe Implicit Likelihood Inference (LtU-ILI) pipeline, a codebase for rapid, user-friendly, and cutting-edge machine learning (ML) inference in astrophysics and cosmology. The pipeline includes software for implementing various neural architectures, training schemata, priors, and density estimators in a manner easily adaptable to any research workflow. It includes comprehensive validation metrics to assess posterior estimate coverage, enhancing the reliability of inferred results. Additionally, the pipeline is easily parallelizable and is designed for efficient exploration of modeling hyperparameters. To demonstrate its capabilities, we present real applications across a range of astrophysics and cosmology problems, such as: estimating galaxy cluster masses from X-ray photometry; inferring cosmology from matter power spectra and halo point clouds; characterizing progenitors in gravitational wave signals; capturing physical dust parameters from galaxy colors and luminosities; and establishing properties of semi-analytic models of galaxy formation. We also include exhaustive benchmarking and comparisons of all implemented methods as well as discussions about the challenges and pitfalls of ML inference in astronomical sciences. All code and examples are made publicly available at https://github.com/maho3/ltu-ili.

Concepts

The Big Picture

Most of astrophysics boils down to an inverse problem: you observe something (a blurry X-ray image, a faint gravitational wave signal) and you want to know what produced it. The standard approach writes down a mathematical formula, called a likelihood, that predicts how the data should look for any given set of physical parameters. Then you work backwards from the data to figure out which parameters fit best. But for a growing number of problems, no one can write that formula down. The physics is just too complicated.

Telescopes like the Rubin Observatory and space missions like Euclid are about to flood researchers with petabytes of data, and the old approach of hand-crafting exact prediction formulas can’t keep up. Some physical processes are too messy. The turbulent collapse of dark matter halos, the tangled magnetic fields inside galaxy clusters: no clean mathematical description exists for any of it.

A team of fifteen researchers from institutions across Paris, New York, Cambridge, and elsewhere built a tool to handle exactly this situation: the Learning the Universe Implicit Likelihood Inference pipeline, or LtU-ILI. It’s a unified, open-source software framework that lets scientists train machine learning models to draw statistical conclusions directly from simulations, without ever writing down an analytic likelihood.

Key Insight: LtU-ILI packages simulation-based inference into a single pipeline so that any researcher can extract Bayesian posteriors from complex astronomical data, even when no closed-form likelihood exists.

How It Works

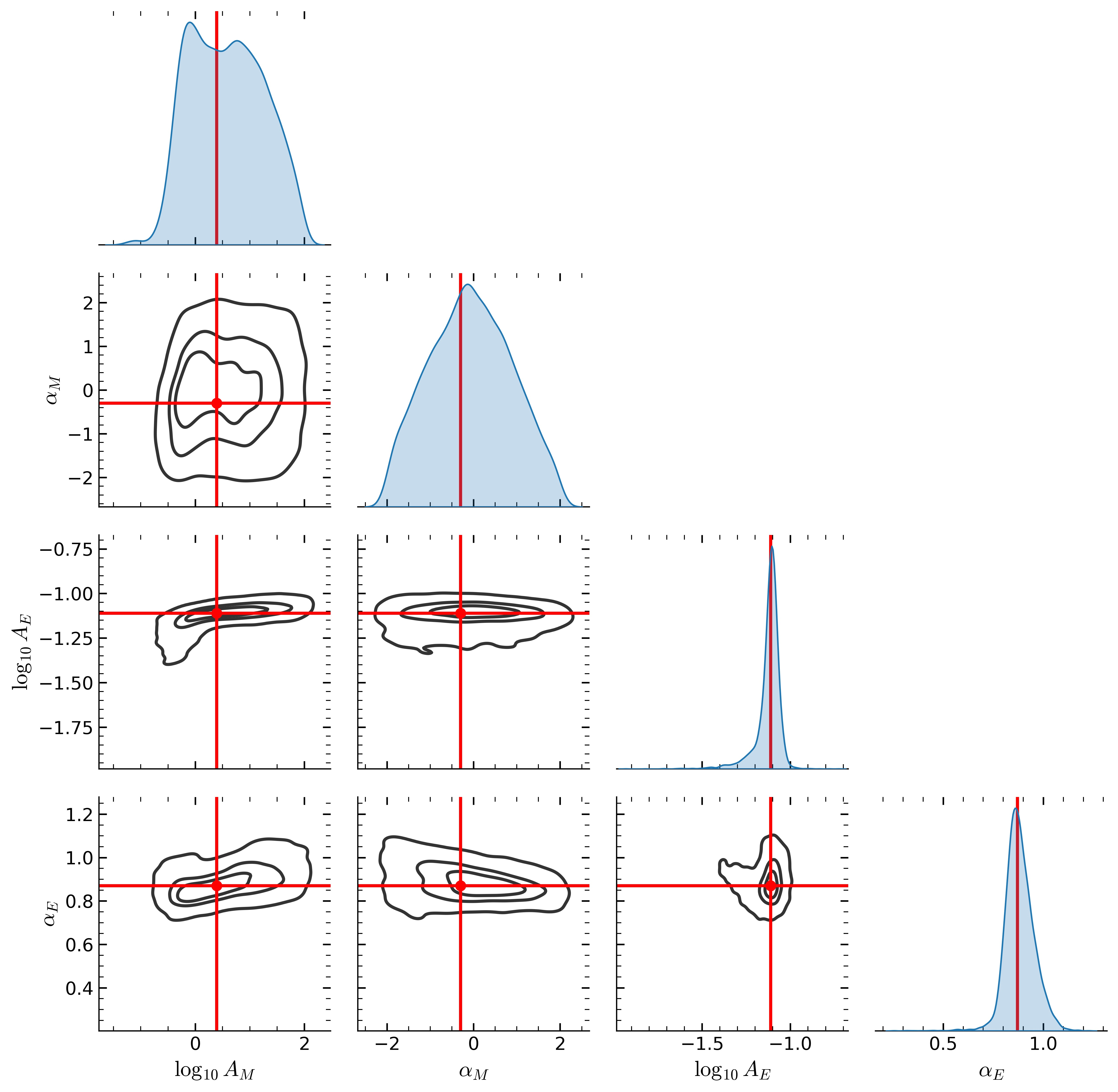

The core idea behind LtU-ILI is Implicit Likelihood Inference (ILI), also called simulation-based inference or likelihood-free inference. Instead of deriving a formula for how likely your observations are given a model, you train a neural network to learn that relationship from thousands of simulated examples. Feed it enough pairs of (simulation inputs, simulation outputs), and the network learns to invert the process: given new observations, it produces a full probability distribution over the underlying parameters. This distribution, called the posterior, is the central goal of Bayesian statistical inference. It tells you not just a best-fit value, but which parameter values are plausible and by how much.
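The train-on-simulations idea described above can be sketched in a few lines. The example below is a toy under stated assumptions, not the LtU-ILI API: the simulator, the noise level `sigma`, and the helper `sample_posterior` are all hypothetical, and a linear-Gaussian model stands in for the neural network. It fits a conditional density q(theta | x) by maximum likelihood on simulated (parameter, data) pairs, then draws posterior samples for a new observation without ever evaluating a likelihood.

```python
import numpy as np

rng = np.random.default_rng(42)
sigma = 0.5        # noise level of the toy simulator (assumed for illustration)
n_sims = 50_000    # number of simulated (parameter, data) pairs

def simulator(theta, rng):
    """Toy forward model: the observation is the parameter plus Gaussian noise."""
    return theta + sigma * rng.normal(size=theta.shape)

# 1. Draw parameters from the prior N(0, 1) and push them through the simulator.
theta = rng.normal(size=n_sims)
x = simulator(theta, rng)

# 2. Fit q(theta | x) = N(a*x + b, s^2). For a Gaussian with fixed variance,
#    maximising the log-probability of the training pairs is least squares.
design = np.column_stack([x, np.ones_like(x)])
(a, b), *_ = np.linalg.lstsq(design, theta, rcond=None)
s = np.std(theta - (a * x + b))

def sample_posterior(x_obs, n_draws, rng):
    """Draw samples from the fitted posterior for a new observation."""
    return a * x_obs + b + s * rng.normal(size=n_draws)

# 3. Amortized inference: sampling for any new observation is immediate.
draws = sample_posterior(1.0, 10_000, rng)
print(f"fitted slope={a:.3f}, intercept={b:.3f}, variance={s**2:.3f}")
print(f"posterior mean for x_obs=1.0: {draws.mean():.3f}")
```

For this toy the exact posterior is known, a Gaussian with mean x / (1 + sigma^2) and variance sigma^2 / (1 + sigma^2), so the fitted slope and variance can be checked against 0.8 and 0.2. Real applications replace the linear model with a flexible neural density estimator because no such closed form exists.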

The pipeline is modular. Researchers can swap in different neural architectures, different density estimators (the mathematical machinery representing probability distributions), and different training strategies. The three main approaches are:

- Neural Posterior Estimation (NPE): Train a network to directly output the posterior distribution.

- Neural Likelihood Estimation (NLE): Train a network to learn the likelihood, then sample the posterior using MCMC, a standard algorithm that explores a probability distribution by taking many small random steps.

- Neural Ratio Estimation (NRE): Train a classifier to distinguish parameter-data pairs that were simulated together from mismatched pairs, then use its output as an estimate of the likelihood-to-evidence ratio.
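In symbols, the three approaches differ in which quantity the network approximates. The notation here is an assumption introduced for illustration: θ denotes the physical parameters, x the data, and φ the network weights.

$$
\begin{aligned}
\text{NPE:}\quad & q_\phi(\theta \mid x) \approx p(\theta \mid x) \\
\text{NLE:}\quad & q_\phi(x \mid \theta) \approx p(x \mid \theta), \qquad p(\theta \mid x) \propto q_\phi(x \mid \theta)\, p(\theta) \\
\text{NRE:}\quad & r_\phi(\theta, x) \approx \frac{p(x \mid \theta)}{p(x)}, \qquad p(\theta \mid x) \approx r_\phi(\theta, x)\, p(\theta)
\end{aligned}
$$

NPE yields the posterior directly, while NLE and NRE yield a quantity that must still be combined with the prior p(θ) and sampled.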

Each method has different strengths. NPE is fast at inference time. NLE handles complex posteriors well. NRE works even when simulations are expensive. LtU-ILI lets you run all three and compare.
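The two-stage NLE workflow (learn the likelihood, then sample with MCMC) can be illustrated with a toy. This is a minimal sketch under assumptions, not the pipeline's implementation: the simulator and all function names are hypothetical, a linear-Gaussian fit stands in for the neural likelihood estimator, and a plain random-walk Metropolis sampler stands in for whatever MCMC scheme a real analysis would use.

```python
import numpy as np

rng = np.random.default_rng(7)
sigma, n_sims = 0.5, 50_000   # toy noise level and training-set size (assumed)

# Simulated training pairs: prior theta ~ N(0, 1), data x = theta + noise.
theta = rng.normal(size=n_sims)
x = theta + sigma * rng.normal(size=n_sims)

# Stage 1: learn a likelihood model q(x | theta) = N(c*theta + d, s^2)
# by least squares, a stand-in for a neural likelihood estimator.
design = np.column_stack([theta, np.ones_like(theta)])
(c, d), *_ = np.linalg.lstsq(design, x, rcond=None)
s = np.std(x - (c * theta + d))

def log_posterior(t, x_obs):
    """Unnormalised log posterior: log prior plus learned log likelihood."""
    log_prior = -0.5 * t**2
    log_like = -0.5 * ((x_obs - (c * t + d)) / s) ** 2
    return log_prior + log_like

# Stage 2: random-walk Metropolis, exploring the posterior in small random steps.
def metropolis(x_obs, n_steps=20_000, step=0.8, rng=rng):
    chain = np.empty(n_steps)
    current = 0.0
    current_lp = log_posterior(current, x_obs)
    for i in range(n_steps):
        proposal = current + step * rng.normal()
        proposal_lp = log_posterior(proposal, x_obs)
        # Accept with probability min(1, posterior ratio).
        if np.log(rng.uniform()) < proposal_lp - current_lp:
            current, current_lp = proposal, proposal_lp
        chain[i] = current
    return chain

chain = metropolis(x_obs=1.0)[2_000:]   # discard the first steps as burn-in
print(f"posterior mean={chain.mean():.3f}, variance={chain.var():.3f}")
```

The sampling loop runs once per observation, which is the extra cost NLE pays at inference time compared with NPE. For this toy the chain can be checked against the exact posterior, whose mean is 0.8 and variance 0.2 for an observation of 1.0.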

Raw inference isn’t enough, though. A posterior that looks confident can still be wrong. LtU-ILI bakes in validation metrics, including simulation-based calibration (SBC) tests that check whether the pipeline’s credible intervals actually deliver on their promise. If your 90% credible interval only captures the truth 60% of the time, you’ve got a problem, and LtU-ILI will flag it.
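A coverage check of this kind can be sketched as follows. The code is an illustration under assumptions, not the validation suite shipped with LtU-ILI: the function `empirical_coverage` is hypothetical, and the two "posteriors" are constructed by hand on a toy problem where the exact answer is known, one correct and one deliberately overconfident with half the correct width.

```python
import numpy as np

def empirical_coverage(samples, truths, level=0.9):
    """Fraction of test cases whose true value falls inside the central
    credible interval of the given level.

    samples: array of shape (n_cases, n_draws), posterior draws per test case
    truths:  array of shape (n_cases,), the parameter that generated each case
    """
    lo, hi = np.quantile(samples, [(1 - level) / 2, (1 + level) / 2], axis=1)
    return np.mean((truths >= lo) & (truths <= hi))

rng = np.random.default_rng(0)
n_cases, n_draws, sigma = 2_000, 1_000, 0.5   # toy settings (assumed)

# Held-out simulations: true parameters from the prior, then noisy data.
theta = rng.normal(size=n_cases)
x = theta + sigma * rng.normal(size=n_cases)

# Exact posterior for this toy: N(x / (1 + sigma^2), sigma^2 / (1 + sigma^2)).
post_mean = x / (1 + sigma**2)
post_std = np.sqrt(sigma**2 / (1 + sigma**2))

# A calibrated estimator and an overconfident one (intervals half as wide).
calibrated = post_mean[:, None] + post_std * rng.normal(size=(n_cases, n_draws))
overconfident = post_mean[:, None] + 0.5 * post_std * rng.normal(size=(n_cases, n_draws))

cov_good = empirical_coverage(calibrated, theta)
cov_bad = empirical_coverage(overconfident, theta)
print(f"calibrated: {cov_good:.2f}, overconfident: {cov_bad:.2f}")
```

The calibrated estimator should land near the nominal 0.90, while the overconfident one falls to roughly 0.6, the failure mode described above. Repeating the check over a grid of credibility levels gives a full coverage curve.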

The team tested the pipeline on five real astrophysics problems: galaxy cluster masses from X-ray photometry, cosmological parameters from matter power spectra and dark matter halo point clouds, gravitational wave signals from merging black holes and neutron stars, dust physics from galaxy colors, and semi-analytic models of galaxy formation. In each case, they benchmarked multiple ILI methods head-to-head. That kind of apples-to-apples comparison is uncommon in a field where methods are usually tested in isolation.

Why It Matters

Next-generation surveys like Rubin and Euclid need inference pipelines that are fast, scalable, and scientifically reliable. Traditional MCMC methods can take days or weeks to converge on a single posterior. A trained ILI network produces posteriors in seconds. The catch has always been trust: how do you know the network learned the right distribution? LtU-ILI’s validation suite addresses this directly, letting researchers certify a neural posterior estimator before deploying it on real data.

The problems the authors document (model misspecification, hyperparameter sensitivity, epistemic uncertainty) aren’t unique to astrophysics. They come up wherever ML models are deployed, ground truth is scarce, and stakes are high. The pipeline’s modular design and built-in validation transfer readily to other fields with similar constraints. All the code is publicly available, and the team built it so that a researcher with no neural network experience can get a working pipeline running with minimal friction.

Bottom Line: LtU-ILI gives astrophysicists a plug-and-play toolkit for machine-learning-powered Bayesian inference, and its open design means the next generation of cosmic surveys won’t have to start from scratch every time they face a new inference problem.

IAIFI Research Highlights

arXiv:2402.05137