Learning to Unknot

Authors

Sergei Gukov, James Halverson, Fabian Ruehle, Piotr Sułkowski

Abstract

We introduce natural language processing into the study of knot theory, as made natural by the braid word representation of knots. We study the UNKNOT problem of determining whether or not a given knot is the unknot. After describing an algorithm to randomly generate $N$-crossing braids and their knot closures and discussing the induced prior on the distribution of knots, we apply binary classification to the UNKNOT decision problem. We find that the Reformer and shared-QK Transformer network architectures outperform fully-connected networks, though all perform well. Perhaps surprisingly, we find that accuracy increases with the length of the braid word, and that the networks learn a direct correlation between the confidence of their predictions and the degree of the Jones polynomial. Finally, we utilize reinforcement learning (RL) to find sequences of Markov moves and braid relations that simplify knots and can identify unknots by explicitly giving the sequence of unknotting actions. Trust region policy optimization (TRPO) performs consistently well for a wide range of crossing numbers and thoroughly outperformed other RL algorithms and random walkers. Studying these actions, we find that braid relations are more useful in simplifying to the unknot than one of the Markov moves.

Concepts

The Big Picture

Imagine handing a tangled ball of string to a mathematician and asking: “Is this actually knotted, or could you untangle it into a simple loop with enough patience?” This is the UNKNOT problem, one of the oldest open challenges in mathematics. It’s easy to state but brutally hard to solve, and it turns out to be connected to the same technology powering your email autocomplete.

Knots show up throughout fundamental science: in protein folding, in the search for possible string theory universes, and in deep unsolved questions about four-dimensional geometry like the smooth Poincaré conjecture. Existing algorithms scale explosively with complexity. Human intuition breaks down for knots with many crossings.

A team from Caltech, Northeastern, CERN, Oxford, and Warsaw tried something different: they taught a neural network to read knots like sentences, then trained a reinforcement learning agent to untangle them move by move. Their system classifies knots with high accuracy and, when a knot is trivial, can prove it by producing the explicit sequence of simplifying moves.

Key Insight: Knots encoded as braid words are formally equivalent to sentences in a language, and transformer neural networks trained on natural language turn out to be very good at detecting the unknot, with accuracy that improves as the knot gets more complex.

How It Works

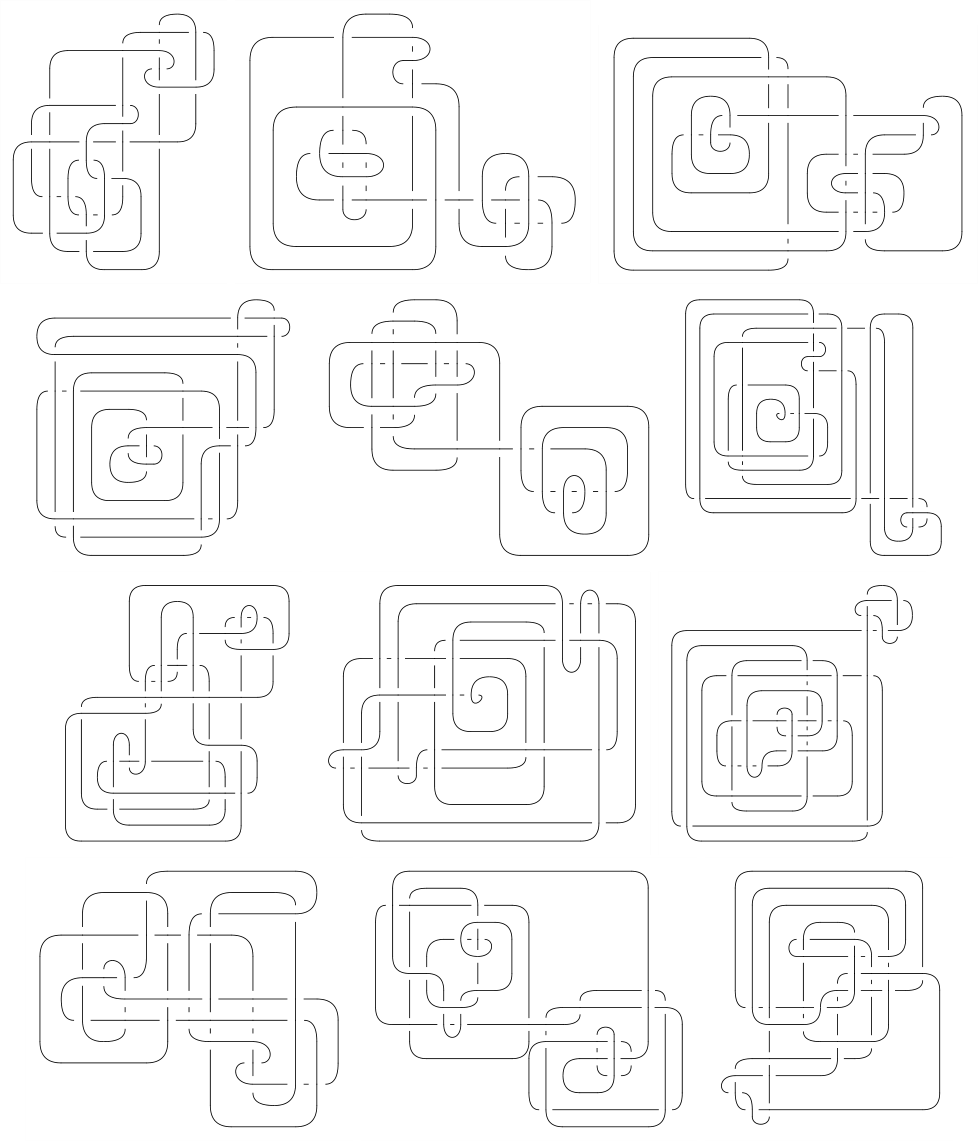

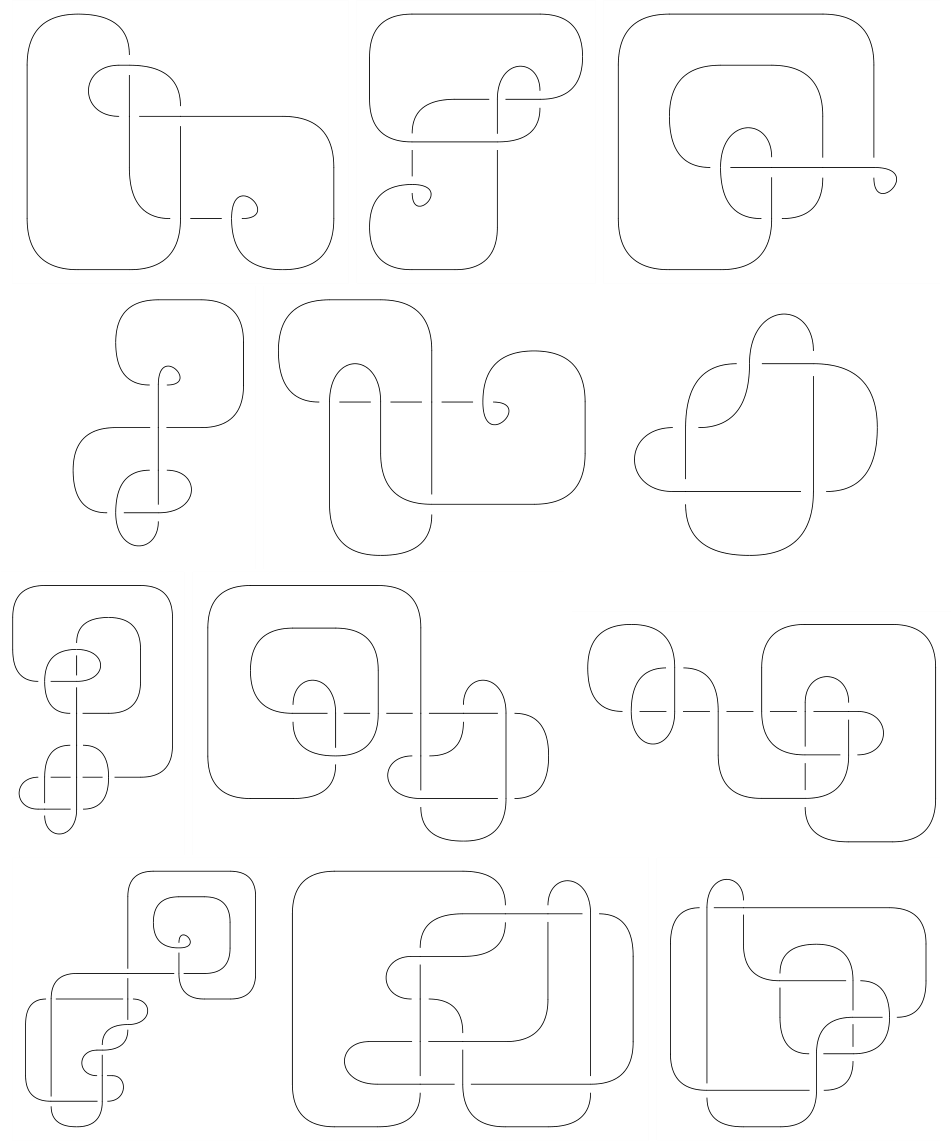

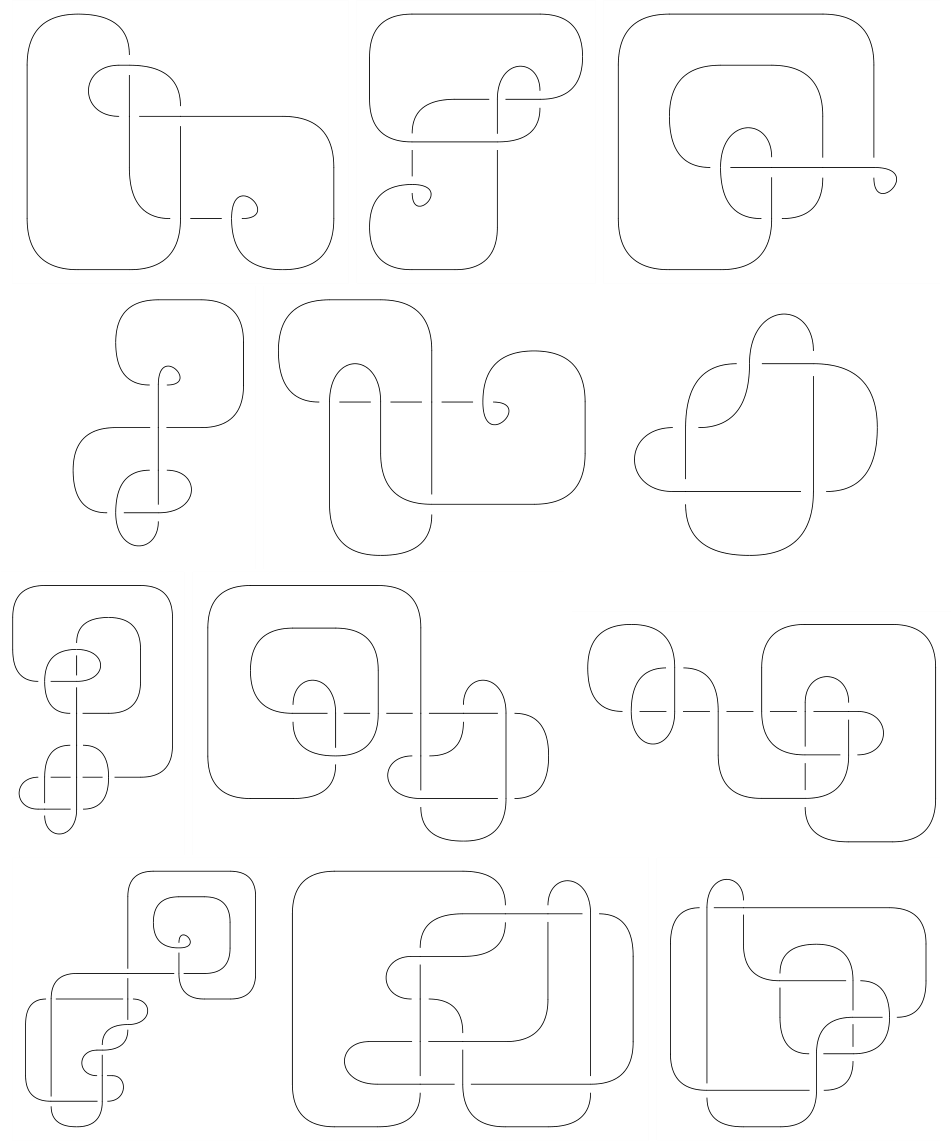

The central trick is representing knots as braid words: sequences of symbols like σ₁, σ₂⁻¹, σ₁, σ₂, where each symbol describes how strands cross over or under each other. Close the ends of a braid and you get a knot. A braid word is just a sequence of characters drawn from a finite alphabet, which is exactly what language models were built to process.

The researchers generated N-crossing braids and balanced datasets of unknots and genuine knots, then trained several neural architectures on binary classification: given this braid word, is it the unknot?

- Fully-connected networks, the baseline, which performed well but not best

- Shared-QK Transformers, attention models where the query and key matrices are tied together, reducing parameter count

- Reformers, a memory-efficient transformer variant that uses locality-sensitive hashing to handle long sequences without blowing up memory

Both the Reformer and the shared-QK Transformer outperformed the baseline. Against expectation, longer braid words yielded higher classification accuracy. You’d expect more crossings to make the problem harder, but longer braid words carry more redundancy and context for the attention mechanism to exploit.

The team found that classifier confidence tracks the Jones polynomial, a knot invariant (a quantity unchanged by any deformation that doesn’t cut the knot) introduced in 1984. High-confidence predictions correlated with the degree of the Jones polynomial. The network was never told about this invariant. It picked up the correlation from braid words alone.

Unknotting as a Game

Classification tells you whether a knot is trivial. Reinforcement learning tells you how to untangle it.

They set up unknotting as a game. An RL agent observes the current braid and picks from legal moves: Markov moves (which change the number of strands while preserving the knot type) and braid relations (local rewriting rules that simplify crossings). Simplify the braid, earn points. Reduce it to the trivial braid, and win.

Among several RL algorithms tested (PPO, A2C, random walkers), Trust Region Policy Optimization (TRPO) dominated. TRPO constrains how much the policy can shift per update, which prevents catastrophic forgetting and keeps learning stable across a wide range of crossing numbers.

Analyzing the agent’s move choices turned up a useful result: braid relations were far more effective than stabilization (one of the two Markov moves), which rarely helped. That’s a practical finding for anyone building knot simplification algorithms.

The RL agent also provides something the classifier cannot: a proof. When it unknots a braid, it outputs an explicit, mechanically verifiable sequence of moves, turning a probabilistic prediction into a mathematical certificate.

Why It Matters

The computational complexity of the UNKNOT problem remains open. It’s known to be decidable but not known to be in NP. Tools that rapidly classify or simplify knots give mathematicians new ways to probe this question. The Jones polynomial connection raises a natural follow-up: if networks are implicitly approximating this invariant, could they be reverse-engineered to find new invariants?

On the physics side, knots appear in string theory compactifications, lattice gauge theories, and topological quantum computing. The NLP framing suggests a broader program: other mathematical structures with discrete symbolic representations (Lie algebras, Calabi-Yau manifolds, Diophantine equations) could be treated as languages and fed to transformers. This paper is a proof of concept for that entire approach.

Bottom Line: By treating knots as sentences and unknotting as a game, this team showed that modern AI can both classify and actively solve one of mathematics’ oldest topological puzzles, while turning up an unexpected link between neural network confidence and the Jones polynomial.

IAIFI Research Highlights

This work spans topology, NLP, and reinforcement learning. Encoding knots as braid-word "sentences" and training transformer architectures and RL agents on them shows that AI tools developed for language can make real progress on problems in fundamental mathematics and physics.

Attention-based architectures (Reformers, shared-QK Transformers) outperform fully-connected networks on combinatorial mathematical problems, and classification accuracy *increases* with sequence length, a counterintuitive result with implications for how transformers handle structured symbolic data.

The RL agent's move analysis reveals that braid relations are more useful than stabilization for unknotting, and the classifier's confidence correlates with the degree of the Jones polynomial, tying deep learning to one of knot theory's central invariants.

Future work could extend these methods to harder knot invariants, more complex topological problems, and other symbolic mathematical structures. The paper is available at [arXiv:2010.16263](https://arxiv.org/abs/2010.16263).

Original Paper Details

Learning to Unknot

2010.16263

Sergei Gukov, James Halverson, Fabian Ruehle, Piotr Sułkowski

We introduce natural language processing into the study of knot theory, as made natural by the braid word representation of knots. We study the UNKNOT problem of determining whether or not a given knot is the unknot. After describing an algorithm to randomly generate $N$-crossing braids and their knot closures and discussing the induced prior on the distribution of knots, we apply binary classification to the UNKNOT decision problem. We find that the Reformer and shared-QK Transformer network architectures outperform fully-connected networks, though all perform well. Perhaps surprisingly, we find that accuracy increases with the length of the braid word, and that the networks learn a direct correlation between the confidence of their predictions and the degree of the Jones polynomial. Finally, we utilize reinforcement learning (RL) to find sequences of Markov moves and braid relations that simplify knots and can identify unknots by explicitly giving the sequence of unknotting actions. Trust region policy optimization (TRPO) performs consistently well for a wide range of crossing numbers and thoroughly outperformed other RL algorithms and random walkers. Studying these actions, we find that braid relations are more useful in simplifying to the unknot than one of the Markov moves.