KAN: Kolmogorov-Arnold Networks

Authors

Ziming Liu, Yixuan Wang, Sachin Vaidya, Fabian Ruehle, James Halverson, Marin Soljačić, Thomas Y. Hou, Max Tegmark

Abstract

Inspired by the Kolmogorov-Arnold representation theorem, we propose Kolmogorov-Arnold Networks (KANs) as promising alternatives to Multi-Layer Perceptrons (MLPs). While MLPs have fixed activation functions on nodes ("neurons"), KANs have learnable activation functions on edges ("weights"). KANs have no linear weights at all -- every weight parameter is replaced by a univariate function parametrized as a spline. We show that this seemingly simple change makes KANs outperform MLPs in terms of accuracy and interpretability. For accuracy, much smaller KANs can achieve comparable or better accuracy than much larger MLPs in data fitting and PDE solving. Theoretically and empirically, KANs possess faster neural scaling laws than MLPs. For interpretability, KANs can be intuitively visualized and can easily interact with human users. Through two examples in mathematics and physics, KANs are shown to be useful collaborators helping scientists (re)discover mathematical and physical laws. In summary, KANs are promising alternatives for MLPs, opening opportunities for further improving today's deep learning models which rely heavily on MLPs.

Concepts

The Big Picture

Imagine teaching someone to draw by giving them a single universal pencil stroke and telling them to combine it in different ways. That’s how most neural networks learn. Every neuron applies the same fixed mathematical rule, a formula baked in from the start. The network’s intelligence lives entirely in how those neurons connect.

It works. It’s driven virtually every AI breakthrough of the past decade. But a team of researchers from MIT, Caltech, Northeastern, and IAIFI asked a different question: what if the connections themselves could learn?

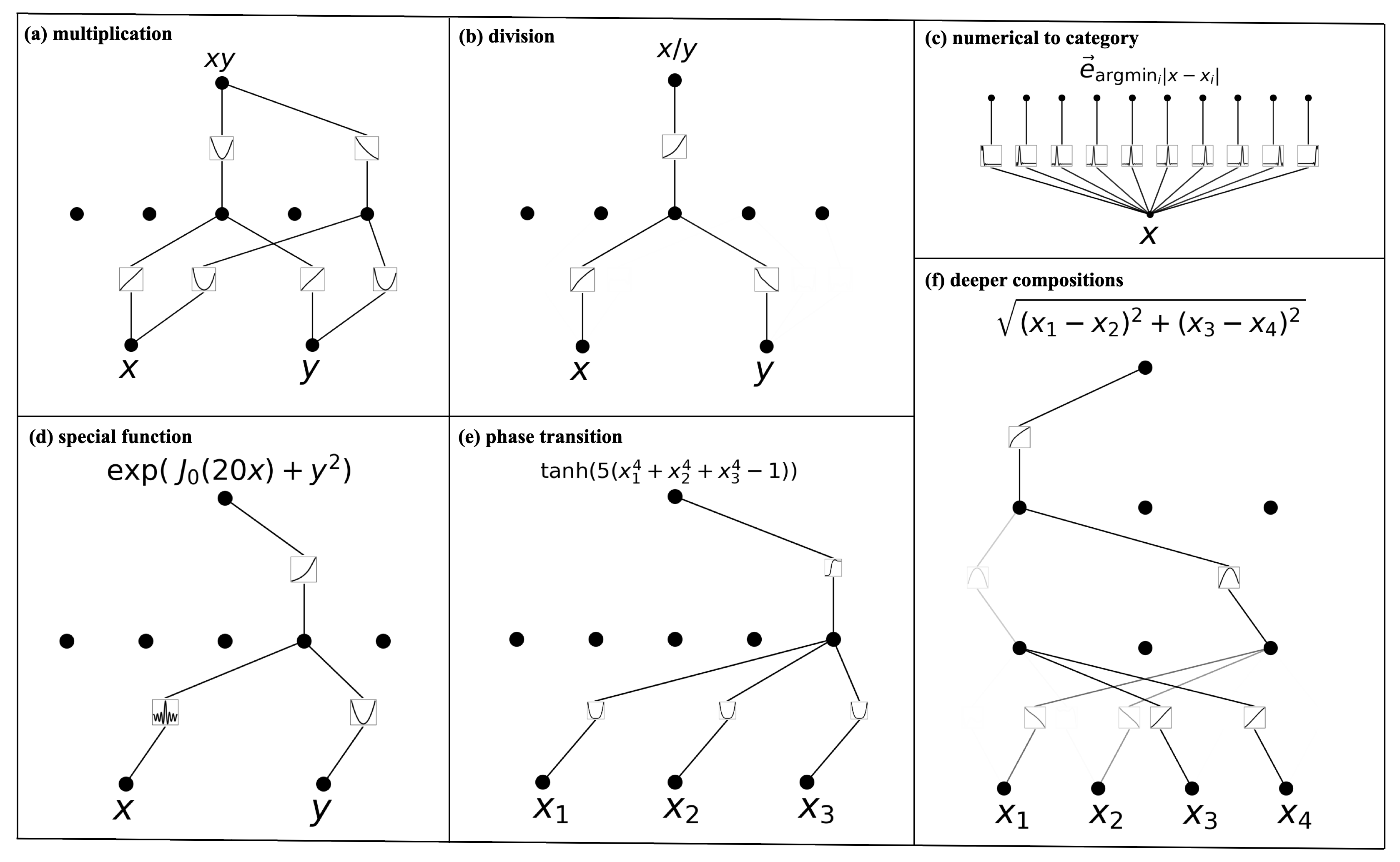

The answer is Kolmogorov-Arnold Networks (KANs), a different blueprint for neural networks. In a standard network, each neuron applies a fixed activation function to transform its input. KANs flip this: neurons become simple summation points, and the connections carry learnable rules instead.

It sounds like a subtle change. The consequences are not.

The result: smaller networks that match or beat much larger conventional ones, readable internal logic, and a path toward rediscovering known mathematical and physical laws from raw data.

Key Insight: KANs replace every fixed connection formula in a neural network with a learnable function, producing networks that are more accurate, more efficient, and more interpretable.

How It Works

The mathematical foundation comes from a 1957 theorem by Andrey Kolmogorov and Vladimir Arnold. The Kolmogorov-Arnold representation theorem states that any function of multiple variables can be written as a finite composition of single-variable functions. No matter how complex a relationship is, you can always decompose it into nested, one-dimensional pieces.

Classical neural networks draw from a different result, the universal approximation theorem, which says you can approximate any function by stacking enough neurons with fixed nonlinearities. Both theorems guarantee expressive power, but they point toward radically different architectures.

Here’s the practical difference:

- In a standard MLP (Multi-Layer Perceptron): neurons apply a fixed activation function (say, ReLU), and the network learns by adjusting linear weights on the connections between them.

- In a KAN: every connection carries a learnable univariate function, parametrized as a spline, a smooth curve built from adjustable mathematical segments. Nodes simply sum their incoming signals. All the learning happens on the edges.

A KAN has no fixed linear weight matrices. Each “weight” is a flexible curve the network sculpts during training.

Why splines? They approximate smooth functions in low dimensions well, adjusting locally and converging fast. The catch is the curse of dimensionality: fitting a high-dimensional function directly with splines requires exponentially more parameters as dimensions grow.

MLPs sidestep this by learning compositional structure. KANs get both advantages. Splines handle individual univariate pieces with high precision, while the MLP-like outer structure discovers how those pieces compose.

On a benchmark function (a high-dimensional sum of sines wrapped in an exponential), a small KAN learns it accurately. A much larger MLP struggles because ReLU activations are a poor fit for smooth trigonometric and exponential shapes.

Why It Matters

Accuracy gains alone would justify interest, but the interpretability story is what sets KANs apart. After training, each learned edge function can be visualized directly as a curve you can inspect, prune, or symbolically identify. The team combines this with simplification techniques: pruning unimportant connections, matching learned functions to known mathematical operations, and collapsing redundant structure.

KANs can help scientists find structure in data. The researchers tested this in two case studies.

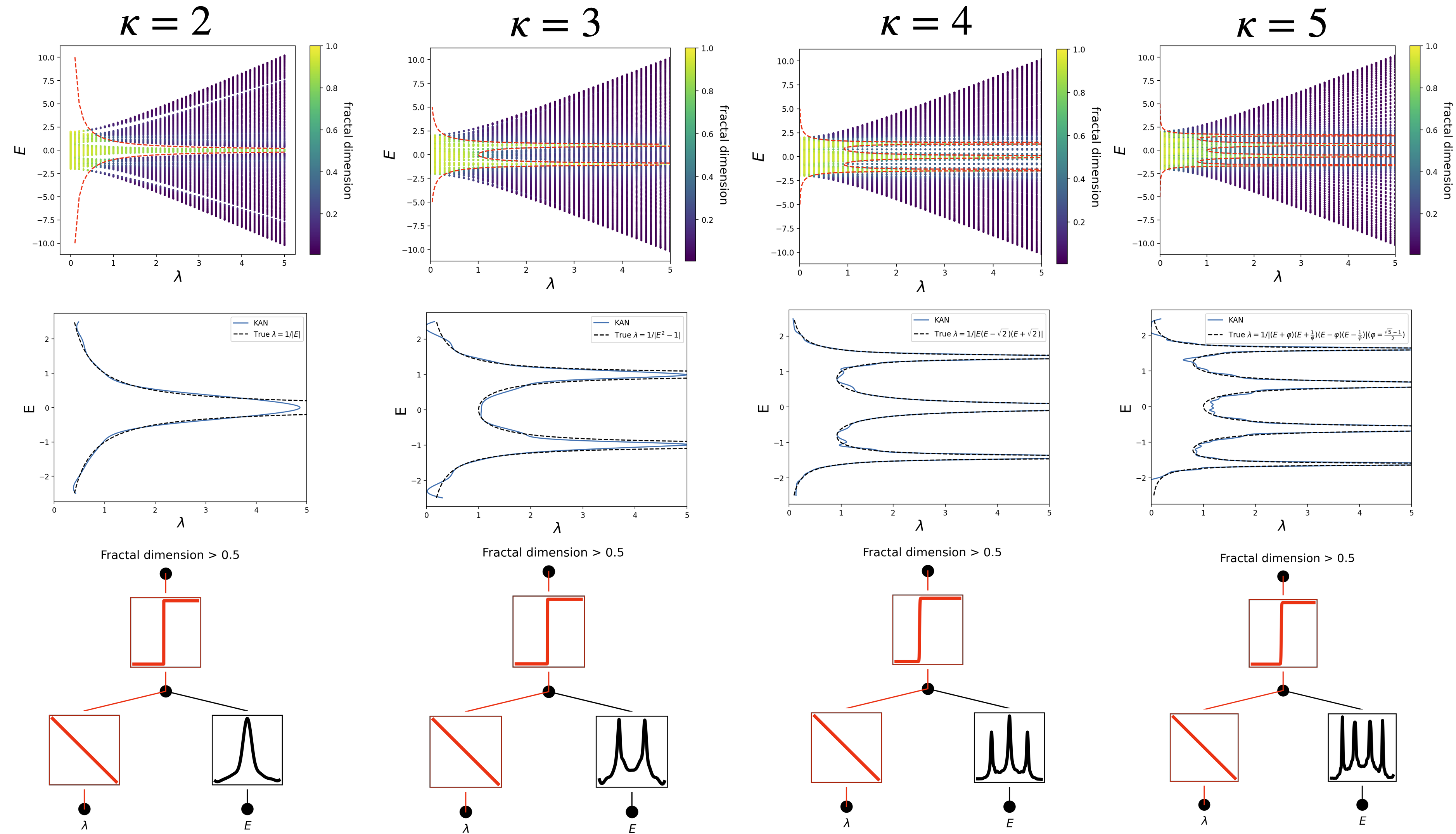

In knot theory, a KAN recovered a known relationship between topological invariants, one that took human mathematicians years to establish. A separate KAN trained on condensed matter data identified the functional form governing Anderson localization, the quantum phenomenon where electrons become trapped in disordered materials. It reproduced the known scaling law from scratch.

These aren’t curve-fits. They’re structured mathematical hypotheses that humans can verify.

KANs also show faster neural scaling laws than comparable MLPs: a fixed computational budget yields more accuracy. On PDE-solving tasks, small KANs match the accuracy of networks orders of magnitude larger.

There are real caveats. Current results focus on small-scale scientific tasks like function fitting, PDE solving, and symbolic discovery. Whether KANs scale to the massive transformer architectures behind large language models remains open. Training can be slower per step due to spline evaluation overhead, though the team argues these are engineering problems, not fundamental limits.

For AI research, KANs open a new design axis. Modern deep learning has converged on MLPs as the default nonlinear building block, tucked inside every transformer, every graph network, every diffusion model. An alternative with different built-in assumptions and genuine interpretability could change how the next generation of models gets built.

The stakes are higher in physics and mathematics. Science produces more data than humans can digest: particle colliders, gravitational wave detectors, cosmological surveys, quantum simulators. Black-box predictions are useful, but symbolic, human-readable structure is what drives real discovery. KANs are a serious step toward neural networks that don’t just predict but explain.

Bottom Line: KANs replace fixed activations with learnable spline functions on every edge, achieving better accuracy with smaller models while producing networks whose internal structure can be read, pruned, and interpreted as mathematical laws.

IAIFI Research Highlights

KANs translate a classical theorem from pure mathematics into a working neural network architecture, then use it to rediscover known laws in knot theory and condensed matter physics from data alone.

KANs show faster neural scaling laws than MLPs and match or exceed MLP accuracy with far fewer parameters, while producing networks whose learned functions can be directly visualized and symbolically identified.

By recovering known physical scaling laws (such as those governing Anderson localization) from experimental data without prior knowledge, KANs offer a new approach to symbolic scientific discovery in physics and mathematics.

Future work will test whether KANs scale to large transformer architectures and whether their interpretability advantages hold in higher-dimensional regimes. The paper ([arXiv:2404.19756](https://arxiv.org/abs/2404.19756)) has open-source code available via `pip install pykan`.

Original Paper Details

KAN: Kolmogorov-Arnold Networks

2404.19756

Ziming Liu, Yixuan Wang, Sachin Vaidya, Fabian Ruehle, James Halverson, Marin Soljačić, Thomas Y. Hou, Max Tegmark

Inspired by the Kolmogorov-Arnold representation theorem, we propose Kolmogorov-Arnold Networks (KANs) as promising alternatives to Multi-Layer Perceptrons (MLPs). While MLPs have fixed activation functions on nodes ("neurons"), KANs have learnable activation functions on edges ("weights"). KANs have no linear weights at all -- every weight parameter is replaced by a univariate function parametrized as a spline. We show that this seemingly simple change makes KANs outperform MLPs in terms of accuracy and interpretability. For accuracy, much smaller KANs can achieve comparable or better accuracy than much larger MLPs in data fitting and PDE solving. Theoretically and empirically, KANs possess faster neural scaling laws than MLPs. For interpretability, KANs can be intuitively visualized and can easily interact with human users. Through two examples in mathematics and physics, KANs are shown to be useful collaborators helping scientists (re)discover mathematical and physical laws. In summary, KANs are promising alternatives for MLPs, opening opportunities for further improving today's deep learning models which rely heavily on MLPs.