Introduction to Normalizing Flows for Lattice Field Theory

Authors

Michael S. Albergo, Denis Boyda, Daniel C. Hackett, Gurtej Kanwar, Kyle Cranmer, Sébastien Racanière, Danilo Jimenez Rezende, Phiala E. Shanahan

Abstract

This notebook tutorial demonstrates a method for sampling Boltzmann distributions of lattice field theories using a class of machine learning models known as normalizing flows. The ideas and approaches proposed in arXiv:1904.12072, arXiv:2002.02428, and arXiv:2003.06413 are reviewed and a concrete implementation of the framework is presented. We apply this framework to a lattice scalar field theory and to U(1) gauge theory, explicitly encoding gauge symmetries in the flow-based approach to the latter. This presentation is intended to be interactive and working with the attached Jupyter notebook is recommended.

Concepts

The Big Picture

Imagine describing thousands of subatomic particles at once: not just tracking their positions but capturing every possible configuration they might be in, weighted by probability. In quantum field theory, the physics governing how fundamental particles arise and interact, this is the problem physicists face every time they want to make a prediction.

The traditional tools are running out of road.

For decades, physicists have relied on Markov Chain Monte Carlo (MCMC), a random walk through an enormous space of possible physical configurations, guided by the laws of physics. Picture a hiker mapping a vast, foggy mountain range one careful step at a time, always favoring more plausible terrain. It works, but it’s slow.

Near phase transitions, when matter changes its fundamental character (water freezing into ice, say), MCMC gets stuck. Successive configurations become strongly correlated, and the number of steps needed to reach a genuinely new one grows dramatically as the critical point is approached. Physicists call this critical slowing down, and the field has been searching for something faster.

A team from MIT, NYU, DeepMind, and Argonne National Laboratory has written a detailed tutorial showing how normalizing flows, a class of machine learning models, can fill that role. Instead of a random walk, these models generate realistic field configurations directly, in a single step, on a computational lattice: a grid that discretizes space so the physics becomes computable. The researchers show how to encode gauge symmetry directly into the model’s architecture, so transformations that leave the physics unchanged are respected by construction.

Key Insight: Normalizing flows learn to map simple random noise directly into physically meaningful field configurations, bypassing the slow random walk of traditional MCMC, with gauge symmetry baked into the model’s structure from the start.

How It Works

Rather than stumbling through configuration space step by step, a normalizing flow learns a smooth, invertible transformation that maps a simple distribution (like a Gaussian) into something matching the target physics.

Think of a rubber sheet. Start flat and featureless. A normalizing flow stretches, compresses, and bends it, without tearing it or folding it over itself, until it matches the complicated shape of your target distribution. Because the transformation is invertible and differentiable, you can track exactly how much any region gets stretched. That local stretching is measured by the Jacobian determinant: it tells you how volumes change under the transformation, which lets you compute the exact probability density of any sample you draw.
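To make the density bookkeeping concrete, here is a minimal sketch using a one-dimensional affine map in place of a trained flow. The function names and the choice of map are illustrative assumptions, not taken from the tutorial notebook. The model log density is the prior log density minus the log of the Jacobian factor.

```python
import math

def standard_normal_logpdf(z):
    """Log density of the prior r(z) = N(0, 1)."""
    return -0.5 * z * z - 0.5 * math.log(2.0 * math.pi)

def affine_flow(z, log_scale, shift):
    """Invertible map x = exp(log_scale) * z + shift and its log-Jacobian."""
    x = math.exp(log_scale) * z + shift
    log_jacobian = log_scale  # log |dx/dz|
    return x, log_jacobian

def sample_logpdf(z, log_scale, shift):
    """Push z through the flow; return x and the model log density log q(x)."""
    x, log_jacobian = affine_flow(z, log_scale, shift)
    return x, standard_normal_logpdf(z) - log_jacobian

# This particular flow turns N(0, 1) into N(mu, sigma^2), so the result can
# be checked against the closed-form Gaussian log density.
mu, sigma = 1.0, 2.0
x, log_q = sample_logpdf(0.3, math.log(sigma), mu)
exact = (-0.5 * ((x - mu) / sigma) ** 2
         - math.log(sigma) - 0.5 * math.log(2.0 * math.pi))
```

Here `log_q` and `exact` agree to floating-point precision. A real flow replaces the affine map with many stacked layers, but the same rule applies: subtract each layer's log-Jacobian from the prior log density.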

The practical challenge is building flows that are both expressive and computationally cheap. The answer is coupling layers, a modular construction that splits the field variables into two groups. One passes through unchanged. The other gets transformed using functions of the first group, which gives the layer’s Jacobian matrix a triangular structure. Its determinant is then just the product of the diagonal entries, so no full determinant calculation is needed. Stack enough of these layers, alternating which group is held fixed, and the composite flow can represent very complicated distributions.
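A coupling layer can be sketched in a few lines. In the version below, `toy_context` is a hypothetical stand-in for the neural network that would normally produce the scale and shift, and the 0/1 mask convention (1 = frozen, 0 = transformed) is an assumption made for illustration.

```python
import math

def toy_context(frozen_values):
    """Hypothetical stand-in for a neural network: returns (log_scale, shift)
    computed only from the frozen variables."""
    total = sum(frozen_values)
    return math.tanh(total), 0.5 * total

def coupling_forward(x, mask):
    """Affine coupling layer: entries with mask == 1 pass through unchanged,
    entries with mask == 0 are rescaled and shifted."""
    frozen = [xi for xi, m in zip(x, mask) if m == 1]
    s, t = toy_context(frozen)
    y = [xi if m == 1 else math.exp(s) * xi + t for xi, m in zip(x, mask)]
    # Triangular Jacobian: the diagonal is 1 for frozen entries and exp(s)
    # for transformed ones, so log|det J| is s times the transformed count.
    log_jacobian = mask.count(0) * s
    return y, log_jacobian

def coupling_inverse(y, mask):
    """Exact inverse: the frozen entries of y equal those of x, so the same
    scale and shift can be recomputed and undone."""
    frozen = [yi for yi, m in zip(y, mask) if m == 1]
    s, t = toy_context(frozen)
    x = [yi if m == 1 else (yi - t) * math.exp(-s) for yi, m in zip(y, mask)]
    return x, -mask.count(0) * s

x_in = [0.5, -1.2, 0.3, 2.0]
mask = [1, 0, 1, 0]
y_out, log_j = coupling_forward(x_in, mask)
x_back, log_j_inv = coupling_inverse(y_out, mask)
```

The round trip recovers `x_in`, and the two log-Jacobians cancel. Stacking a second layer with the mask flipped to `[0, 1, 0, 1]` would let the other half of the variables be updated in turn.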

For scalar field theory on a 2D lattice, training proceeds in four steps:

- Draw samples from a simple Gaussian prior.

- Pass them through the normalizing flow to get candidate field configurations.

- Compare the output distribution to the target Boltzmann distribution (the physics-derived probability law that assigns lower probability to higher-energy configurations) using KL divergence, a measure of how far apart two distributions are.

- Backpropagate through the entire flow to update its parameters.
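The quantity minimized in the comparison step can be sketched as follows. This assumes one common lattice discretization of the scalar φ⁴ action with periodic boundaries; the parameter names `m2` and `lam` and both function names are illustrative choices. The loss is the sample mean of log q(x) + S(x), which equals the KL divergence from the model to the target up to an additive constant (the log of the unknown normalization).

```python
def phi4_action(phi, m2, lam):
    """Action of a scalar field on a periodic L x L lattice (list of lists),
    using a nearest-neighbor discretization of the kinetic term."""
    L = len(phi)
    total = 0.0
    for x in range(L):
        for y in range(L):
            p = phi[x][y]
            neighbors = (phi[(x + 1) % L][y] + phi[(x - 1) % L][y]
                         + phi[x][(y + 1) % L] + phi[x][(y - 1) % L])
            kinetic = p * (4.0 * p - neighbors)
            total += kinetic + m2 * p ** 2 + lam * p ** 4
    return total

def reverse_kl_loss(log_q, actions):
    """Estimate of KL(q || p) up to a constant: the mean of log q(x) + S(x)
    over configurations x drawn from the flow."""
    return sum(lq + s for lq, s in zip(log_q, actions)) / len(log_q)

# Sanity check on a constant field: the kinetic term vanishes, leaving
# L * L * (m2 * c**2 + lam * c**4).
constant_field = [[1.0] * 4 for _ in range(4)]
action_constant = phi4_action(constant_field, m2=-4.0, lam=8.0)
loss_example = reverse_kl_loss([-1.0, -3.0], [2.0, 4.0])
```

In practice the loss would be evaluated inside an automatic-differentiation framework so that the final step, backpropagation, can compute gradients with respect to the flow parameters; this plain-Python version only shows what is being averaged.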

After training, the flow generates independent, nearly correct samples in a single forward pass. Any residual error is corrected by using the flow samples as proposals in a Metropolis-Hastings accept/reject step, which guarantees that the statistics converge to the exact target even if the learned approximation isn’t perfect.
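The correction step can be sketched as an independence Metropolis sampler. The tuple layout `(config, log_q, log_p)` and the function name are assumptions made for this example; `log_p` stands for the unnormalized target log density, that is, minus the action.

```python
import math
import random

def metropolis_independence(proposals, seed=0):
    """Build a Markov chain from independent flow proposals.

    proposals: list of (config, log_q, log_p), where log_q is the model log
    density and log_p = -S(config) is the unnormalized target log density.
    Returns the chain of configurations and the acceptance rate.
    """
    rng = random.Random(seed)
    current = proposals[0]
    chain = [current[0]]
    accepted = 0
    for proposal in proposals[1:]:
        _, log_q_new, log_p_new = proposal
        _, log_q_old, log_p_old = current
        # Accept with probability min(1, [p(x') q(x)] / [p(x) q(x')]).
        log_ratio = (log_p_new - log_q_new) - (log_p_old - log_q_old)
        if log_ratio >= 0.0 or rng.random() < math.exp(log_ratio):
            current = proposal
            accepted += 1
        chain.append(current[0])  # on rejection the old config is repeated
    return chain, accepted / max(1, len(proposals) - 1)

# If q matches p up to a constant, every proposal is accepted.
perfect = [("a", -1.0, -0.5), ("b", -2.0, -1.5), ("c", -3.0, -2.5)]
chain_perfect, rate_perfect = metropolis_independence(perfect)

# A proposal the model wildly over-produces is rejected.
poor = [("a", 0.0, 0.0), ("b", 0.0, -1000.0)]
chain_poor, rate_poor = metropolis_independence(poor)
```

The acceptance rate doubles as a diagnostic: the closer the trained flow is to the target, the closer the rate is to one and the fewer repeated configurations appear in the chain.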

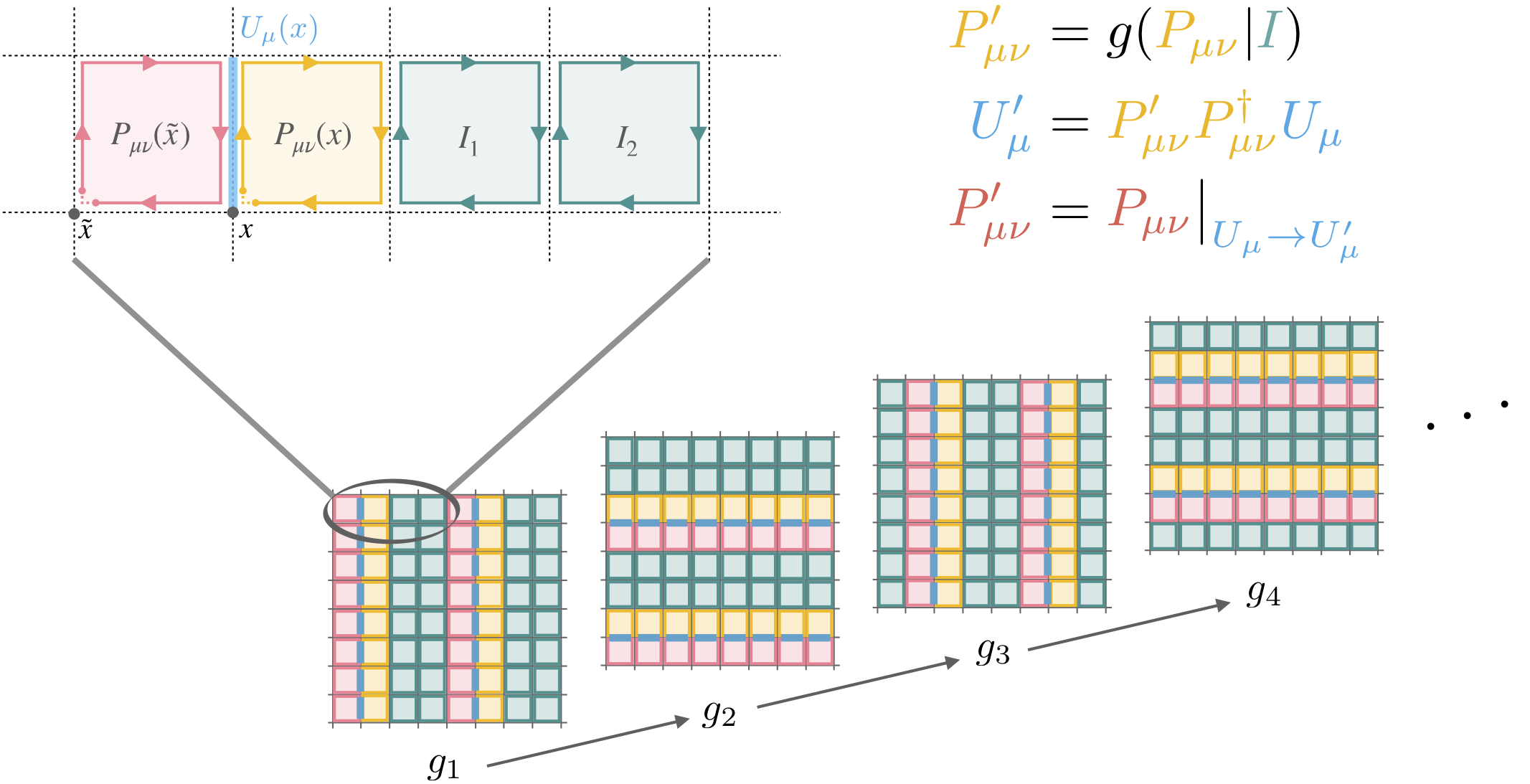

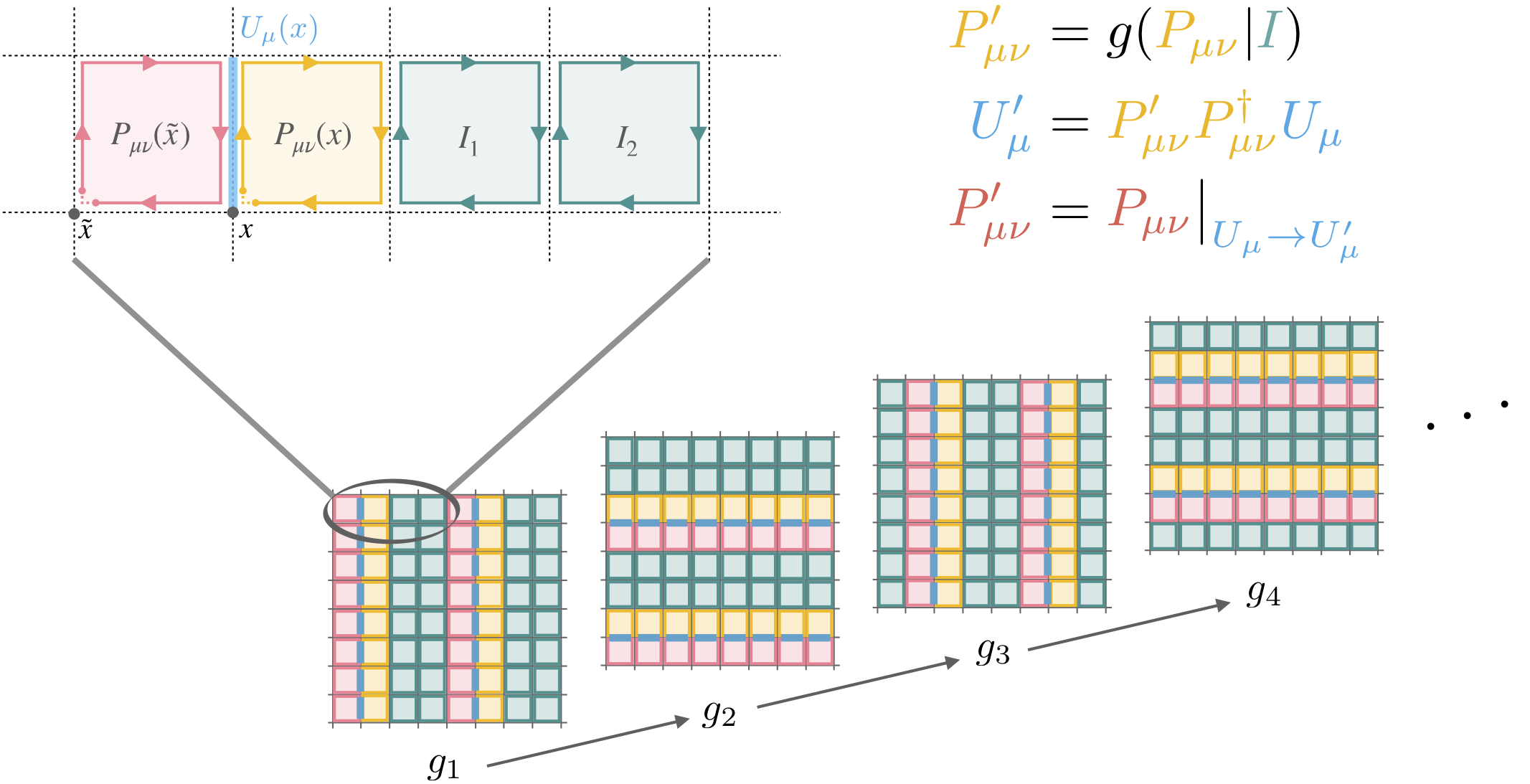

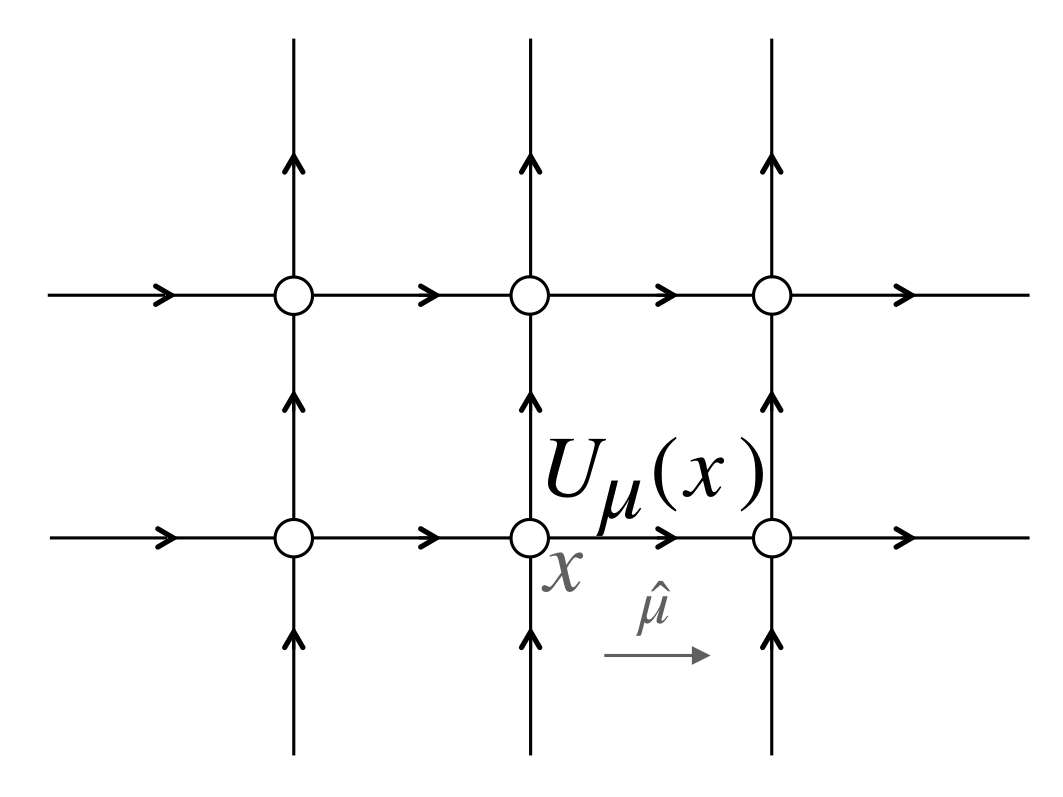

The gauge theory case is harder. In U(1) gauge theory, the simplest theory with local gauge symmetry, the field variables live on the circle (angles from 0 to 2π) rather than on the real line. The physics must also be invariant under local gauge transformations. A naive flow would ignore this structure entirely, wasting capacity on configurations that are physically identical.

The solution is architectural. The tutorial builds its flow from gauge-equivariant coupling layers: applying a gauge transformation to a layer’s input has the same effect as applying it to the layer’s output. These layers build their transformations from plaquettes, the smallest closed loops on the lattice and the natural building blocks of gauge-invariant quantities. Starting from a gauge-invariant prior, the learned distribution then respects gauge invariance automatically. The model never needs to learn what gauge symmetry is; it comes for free.
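To see why plaquettes are a natural building block, the sketch below computes them for a two-dimensional U(1) field stored as angles and checks that a random local gauge transformation leaves them unchanged. The data layout `links[mu][x][y]`, the function names, and the sign convention for the transformation are assumptions chosen for this illustration.

```python
import math
import random

def plaquettes(links, L):
    """Oriented sum of the four link angles around each elementary square.
    links[mu][x][y] is the angle on the link leaving site (x, y) along mu."""
    plaq = [[0.0] * L for _ in range(L)]
    for x in range(L):
        for y in range(L):
            xp, yp = (x + 1) % L, (y + 1) % L
            plaq[x][y] = (links[0][x][y] + links[1][xp][y]
                          - links[0][x][yp] - links[1][x][y])
    return plaq

def gauge_transform(links, alpha, L):
    """Local transformation: theta_mu(x) -> theta_mu(x) + alpha(x) - alpha(x + mu)."""
    out = [[[0.0] * L for _ in range(L)] for _ in range(2)]
    for x in range(L):
        for y in range(L):
            xp, yp = (x + 1) % L, (y + 1) % L
            out[0][x][y] = (links[0][x][y] + alpha[x][y] - alpha[xp][y]) % (2 * math.pi)
            out[1][x][y] = (links[1][x][y] + alpha[x][y] - alpha[x][yp]) % (2 * math.pi)
    return out

def wilson_action(links, beta, L):
    """Action built only from plaquettes: -beta * sum of cos(plaquette angle)."""
    return -beta * sum(math.cos(p) for row in plaquettes(links, L) for p in row)

L = 4
rng = random.Random(1)
links = [[[rng.uniform(0.0, 2 * math.pi) for _ in range(L)] for _ in range(L)]
         for _ in range(2)]
alpha = [[rng.uniform(0.0, 2 * math.pi) for _ in range(L)] for _ in range(L)]
transformed = gauge_transform(links, alpha, L)

# Angles are only defined modulo 2*pi, so compare through cos and sin.
before = [p for row in plaquettes(links, L) for p in row]
after = [p for row in plaquettes(transformed, L) for p in row]
max_diff = max(max(abs(math.cos(a) - math.cos(b)), abs(math.sin(a) - math.sin(b)))
               for a, b in zip(before, after))
action_diff = abs(wilson_action(links, 1.0, L) - wilson_action(transformed, 1.0, L))
```

Both `max_diff` and `action_diff` come out at the level of rounding error: the individual link angles change, but every plaquette, and therefore any function built from plaquettes, does not.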

Why It Matters

The stakes here go well beyond any single calculation. Lattice quantum chromodynamics (QCD), the theory of quarks and gluons behind the strong nuclear force, is the primary tool for first-principles predictions about protons, neutrons, and nuclear matter. Those calculations run on some of the world’s largest supercomputers and are extraordinarily expensive.

If normalizing flows can replace or accelerate the sampling step, the payoff would be large: faster calculations, access to parameter regimes where MCMC breaks down entirely, and the ability to study phenomena near phase transitions that are currently out of reach.

The tutorial format matters too. The authors released a working Jupyter notebook alongside the mathematical exposition, lowering the barrier for physicists to try these methods and for ML researchers to engage with the specific challenges of gauge invariance. Their treatment of U(1) gauge theory lays groundwork for tackling SU(2) and SU(3), the more complex symmetry groups governing the strong force.

Open questions remain. Current implementations work on small lattices; scaling to the large volumes needed for precision QCD is an unsolved engineering problem. Training costs are high, and whether they pay off at production scale is not yet clear. But the framework is in place, and the community now has a hands-on guide to build on.

Bottom Line: This tutorial makes normalizing flows accessible to the lattice field theory community, showing how machine learning models can generate field configurations that respect physical symmetries, with potential to reshape some of the most computationally demanding calculations in particle physics.

IAIFI Research Highlights

This work combines probabilistic machine learning with quantum field theory, embedding gauge symmetry constraints into neural network architectures to make ML-based samplers physically meaningful.

The paper shows how to encode continuous symmetry groups, specifically U(1) local gauge invariance, as hard architectural constraints rather than soft training objectives, extending what normalizing flows can do.

Flow-based sampling offers a path beyond critical slowing down in lattice QCD, potentially enabling first-principles calculations of hadronic properties at parameter regimes inaccessible to traditional MCMC.

Future work will need to scale these methods to SU(3) gauge theory and larger lattice volumes; the foundational approaches are detailed in [arXiv:2101.08176](https://arxiv.org/abs/2101.08176) and prior works [arXiv:1904.12072](https://arxiv.org/abs/1904.12072), [arXiv:2002.02428](https://arxiv.org/abs/2002.02428), and [arXiv:2003.06413](https://arxiv.org/abs/2003.06413).

Original Paper Details

Introduction to Normalizing Flows for Lattice Field Theory

[arXiv:2101.08176](https://arxiv.org/abs/2101.08176)

Michael S. Albergo, Denis Boyda, Daniel C. Hackett, Gurtej Kanwar, Kyle Cranmer, Sébastien Racanière, Danilo Jimenez Rezende, Phiala E. Shanahan
