Inferring subhalo effective density slopes from strong lensing observations with neural likelihood-ratio estimation

Authors

Gemma Zhang, Siddharth Mishra-Sharma, Cora Dvorkin

Abstract

Strong gravitational lensing has emerged as a promising approach for probing dark matter models on sub-galactic scales. Recent work has proposed the subhalo effective density slope as a more reliable observable than the commonly used subhalo mass function. The subhalo effective density slope is a measurement independent of assumptions about the underlying density profile and can be inferred for individual subhalos through traditional sampling methods. To go beyond individual subhalo measurements, we leverage recent advances in machine learning and introduce a neural likelihood-ratio estimator to infer an effective density slope for populations of subhalos. We demonstrate that our method is capable of harnessing the statistical power of multiple subhalos (within and across multiple images) to distinguish between characteristics of different subhalo populations. The computational efficiency afforded by the neural likelihood-ratio estimator over traditional sampling enables statistical studies of dark matter perturbers and is particularly useful as we expect an influx of strong lensing systems from upcoming surveys.


The Big Picture

Imagine trying to understand the contents of a room by watching shadows on a wall. You can’t see the objects directly, only the distorted shapes they cast. At cosmic scales, galaxies bend light from even more distant galaxies into rings, arcs, and multiple images. This is strong gravitational lensing, and it’s one of the few tools we have for probing dark matter.

Dark matter makes up about 84% of all matter in the universe, yet it emits no light, reflects none, and, as far as we can tell, interacts with ordinary matter only through gravity. We know it exists from its gravitational pull, but pinning down its fundamental nature requires looking at the smallest scales, far below individual galaxies. Sub-galactic clumps of dark matter called subhalos can be so small that they host few or no stars. They’re invisible except through their gravitational imprint on passing light, and strong lensing can pick up exactly that signal.

The problem: existing methods are either imprecise or grind to a halt computationally when analyzing more than one or two subhalos at a time. Researchers at Harvard and MIT have introduced a machine learning approach, a neural network trained to estimate how much more likely an observed lensing image is under one dark matter population than under another, that analyzes entire populations of subhalos simultaneously. The result is a way to pool the statistical power of many subhalos that older techniques could not reach at scale.

Key Insight: By replacing traditional sampling with a trained neural network, this approach combines information from many subhalos across multiple lensing images at once, making population-level inferences about dark matter computationally tractable.

How It Works

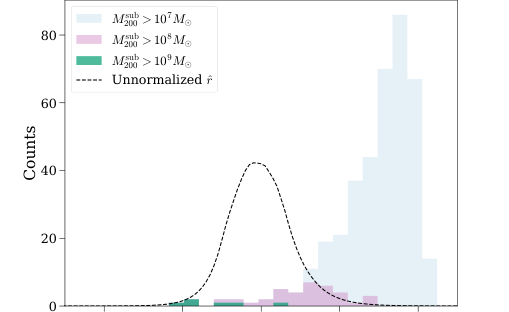

Most previous studies measured the subhalo mass function, a census of subhalo masses, but doing so requires assumptions about internal density profiles in regions not directly observed. That introduces systematic errors. The team instead focused on the effective density slope: how steeply a subhalo’s density falls off from its center, measured at the scale where lensing is most sensitive. This slope can be extracted directly from lensing data without assuming any particular profile shape. It’s a cleaner observable, and it encodes information about dark matter physics that pure mass measurements miss.
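To make "cuspy" versus "cored" concrete, here is a minimal sketch that assumes the local three-dimensional logarithmic slope, -d ln(rho)/d ln(r), as a stand-in. This is a simplification, not the paper's exact definition of the effective density slope. The NFW form is the standard cuspy profile; the cored profile rho ∝ 1/(1 + (r/r_c)²) and all function names are illustrative choices.

```python
import math

def local_log_slope(rho, r, h=1e-5):
    """Return -d ln(rho) / d ln(r) at radius r via a central finite difference in ln r."""
    lo, hi = r * math.exp(-h), r * math.exp(h)
    return -(math.log(rho(hi)) - math.log(rho(lo))) / (2 * h)

def nfw(r, r_s=1.0):
    """Cuspy NFW profile (unnormalized): density rises like r^-1 toward the center."""
    x = r / r_s
    return 1.0 / (x * (1.0 + x) ** 2)

def cored(r, r_c=1.0):
    """Illustrative cored profile (unnormalized): density flattens inside r_c."""
    x = r / r_c
    return 1.0 / (1.0 + x ** 2)

for r in (0.1, 1.0):
    print(f"r = {r}: NFW slope = {local_log_slope(nfw, r):.2f}, "
          f"cored slope = {local_log_slope(cored, r):.2f}")
# r = 0.1: NFW slope = 1.18, cored slope = 0.02
# r = 1.0: NFW slope = 2.00, cored slope = 1.00
```

Near the center the NFW slope stays close to 1 while the cored slope approaches 0; a slope measured at the scale where lensing is most sensitive is meant to capture this kind of difference without committing to either functional form.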

The full pipeline works like this:

- Simulate a lensing system: Use lenstronomy to generate mock 100×100 pixel images at 0.08 arcseconds per pixel, including a background source galaxy, a foreground lens galaxy, and a population of dark matter subhalos drawn from a population distribution.

- Vary the population parameter: The key parameter is the mean effective density slope, a number that distinguishes cold dark matter (cuspy, concentrated profiles) from alternatives like warm or self-interacting dark matter (cored, less concentrated profiles).

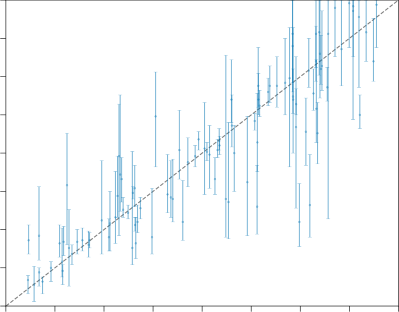

- Train a neural network: The network learns to estimate the likelihood ratio between two hypotheses: that an image came from a population with slope θ₁ versus slope θ₂.

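- Deploy across many images: After training, the network processes new lensing images rapidly. Because images are independent, their log likelihood ratios simply add, so combining evidence across many images costs one cheap network evaluation per image and requires no retraining.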
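The training and deployment steps can be illustrated with a deliberately tiny, hypothetical example. Each "image" is reduced to one noisy summary number s drawn from a Gaussian centered on the population slope θ (an assumption made purely for illustration), and a logistic-regression classifier on quadratic features stands in for the paper's neural network. The classifier learns to tell jointly simulated pairs (s, θ) apart from pairs whose θ has been shuffled; with balanced classes its logit approximates log p(s | θ) - log p(s), the likelihood-to-evidence ratio that this style of ratio estimator learns. This is a sketch of the general technique, not the authors' implementation.

```python
import math
import random

random.seed(0)
SIGMA = 0.3                      # hypothetical noise on a one-number image summary
THETA_MIN, THETA_MAX = 1.0, 2.0  # hypothetical uniform prior range for the population slope

def simulate(theta):
    """Toy simulator: an 'image' reduced to one summary s ~ Normal(theta, SIGMA)."""
    return random.gauss(theta, SIGMA)

def features(s, theta):
    """Quadratic features, centered at 1.5 to keep the fit well conditioned."""
    a, b = s - 1.5, theta - 1.5
    return [1.0, a, b, a * a, b * b, a * b]

def solve(A, rhs):
    """Solve A x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    M = [row[:] + [v] for row, v in zip(A, rhs)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x

def fit_logistic(X, y, iters=15):
    """Logistic regression fitted by Newton's method (IRLS)."""
    d = len(X[0])
    w = [0.0] * d
    for _ in range(iters):
        grad = [0.0] * d
        H = [[1e-8 * (j == k) for k in range(d)] for j in range(d)]  # tiny ridge for stability
        for xi, yi in zip(X, y):
            z = max(-35.0, min(35.0, sum(wj * xj for wj, xj in zip(w, xi))))
            p = 1.0 / (1.0 + math.exp(-z))
            for j in range(d):
                grad[j] += (yi - p) * xi[j]
                for k in range(d):
                    H[j][k] += p * (1.0 - p) * xi[j] * xi[k]
        w = [wj + step for wj, step in zip(w, solve(H, grad))]
    return w

# Label 1: (s, theta) simulated jointly. Label 0: same s paired with shuffled theta (marginals).
thetas = [random.uniform(THETA_MIN, THETA_MAX) for _ in range(2000)]
summaries = [simulate(t) for t in thetas]
shuffled = random.sample(thetas, len(thetas))
X = [features(s, t) for s, t in zip(summaries, thetas)]
X += [features(s, t) for s, t in zip(summaries, shuffled)]
y = [1.0] * len(thetas) + [0.0] * len(thetas)
w = fit_logistic(X, y)

def log_ratio(s, theta):
    """Classifier logit, approximating log p(s | theta) - log p(s)."""
    return sum(wj * xj for wj, xj in zip(w, features(s, theta)))

# Deployment: independent images combine by summing their log ratios.
observed = [simulate(1.4) for _ in range(100)]   # hypothetical true population slope 1.4
grid = [THETA_MIN + 0.01 * i for i in range(101)]
total = [sum(log_ratio(s, t) for s in observed) for t in grid]
theta_map = grid[max(range(len(grid)), key=lambda i: total[i])]
print(f"estimated population slope: {theta_map:.2f}")
```

A ratio between two specific hypotheses follows by subtraction: log_ratio(s, θ₁) - log_ratio(s, θ₂) estimates log p(s | θ₁) - log p(s | θ₂). With a uniform prior, exp(total) is proportional to the posterior over the grid.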

A lensing image contains enormous variation in individual subhalo details: exact masses, positions, individual slopes. None of that directly reveals the population-level pattern. The network sidesteps measuring any of those individual properties. Because these details vary freely across the training simulations, the network implicitly marginalizes over them and learns to extract only the signal that distinguishes one dark matter population from another. This is simulation-based inference at work: train on realistic simulations, then run the trained network on new observations.
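The split between the population parameter and the individual (latent) subhalo properties can be sketched with a hypothetical toy forward model. Here the population mean slope only sets the distribution from which each subhalo's own slope is drawn, while masses and positions vary independently. The scatter of 0.2, the mass range, and the ±4 arcsecond field (chosen to match 100 pixels at 0.08 arcseconds per pixel) are illustrative assumptions, not values from the paper.

```python
import random
import statistics

def simulate_subhalos(mean_slope, n_subhalos, scatter=0.2, rng=random):
    """Toy hierarchical model (hypothetical): population mean slope -> latent per-subhalo properties."""
    return [
        {
            "slope": rng.gauss(mean_slope, scatter),  # individual slope, scattered around the population mean
            "log10_mass": rng.uniform(7.0, 9.0),      # hypothetical mass range, log10(M / M_sun)
            "x": rng.uniform(-4.0, 4.0),              # position in arcsec (8 arcsec field assumed)
            "y": rng.uniform(-4.0, 4.0),
        }
        for _ in range(n_subhalos)
    ]

rng = random.Random(1)
for n in (5, 50, 5000):
    slopes = [h["slope"] for h in simulate_subhalos(1.8, n, rng=rng)]
    spread = statistics.stdev(slopes)
    print(f"N = {n:5d}: mean slope = {statistics.mean(slopes):.3f}, "
          f"per-subhalo spread = {spread:.3f}, uncertainty on mean ~ {spread / n ** 0.5:.3f}")
```

Any single subhalo's slope says little about the population, but the uncertainty on the mean shrinks roughly as 1/√N as more subhalos contribute, whether within one image or across many. During simulation-based training the network never sees these latent dictionaries, only the resulting image and θ, which is why the learned ratio is implicitly marginalized over them.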

Why It Matters

The timing here is no accident. Upcoming sky surveys from the Euclid space telescope and the Rubin Observatory’s Legacy Survey of Space and Time are expected to increase known strong lensing systems from the current few hundred to potentially hundreds of thousands. Each system carries information about dark matter substructure. Without a fast analysis method, that information just sits there.

Traditional Markov chain Monte Carlo (MCMC) sampling explores the space of model parameters with a guided random walk, spending time in each region in proportion to its posterior probability, that is, how well it explains the data given prior expectations. Because every subhalo adds its own parameters (such as mass, position, and slope) to that space, MCMC scales badly with both the number of subhalos per image and the number of images per dataset. The neural likelihood-ratio approach pays a one-time training cost, then processes each new image in a fraction of a second. That’s the difference between a method that handles a handful of systems and one that operates at survey scale.
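The scaling argument can be made concrete by counting parameters. In this hypothetical bookkeeping, a joint MCMC fit must sample every lens-model, source, and subhalo parameter in every image, whereas a trained ratio estimator only evaluates the network once per image and per trial value of the population slope. The counts (10 lens parameters, 10 source parameters, 4 per subhalo, 5 subhalos per image) are illustrative assumptions, not the paper's model.

```python
def mcmc_dimension(subhalos_per_image, n_lens=10, n_source=10, per_subhalo=4):
    """Parameters a joint MCMC fit must explore (all counts hypothetical)."""
    return sum(n_lens + n_source + per_subhalo * n for n in subhalos_per_image)

def nre_evaluations(n_images, n_theta_grid):
    """Network evaluations after training: one per image per trial population slope."""
    return n_images * n_theta_grid

for n_images in (1, 10, 100):
    images = [5] * n_images  # assume 5 subhalos per image
    print(n_images, mcmc_dimension(images), nre_evaluations(n_images, 101))
# 1 40 101
# 10 400 1010
# 100 4000 10100
```

Sampling gets harder as the space MCMC must explore grows (4,000 dimensions for 100 images in this toy count), while the ratio estimator's inference problem stays one-dimensional in the population slope and its cost grows only linearly in cheap forward passes.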

The approach also provides a template for similar problems elsewhere in astrophysics, wherever population-level inference from high-dimensional data runs into the same computational wall.

Bottom Line: By pairing a carefully chosen dark matter observable with neural likelihood-ratio estimation, this method makes population-level dark matter science from strong gravitational lensing practical, and it grows more powerful with every new lensing system future surveys discover.

IAIFI Research Highlights

This work combines simulation-based inference and neural likelihood-ratio estimation with gravitational lensing analysis to extract population-level dark matter constraints, sitting at the intersection of modern machine learning and fundamental astrophysics.

The neural likelihood-ratio estimator handles high-dimensional astrophysical data with large latent spaces, providing a scalable alternative to traditional sampling that extends naturally to population-level inference problems in other scientific domains.

By inferring the effective density slope of entire subhalo populations, a quantity that does not require assuming a particular density profile, this method gives researchers a new probe of dark matter's fundamental properties at sub-galactic scales.

Future work could extend this approach to real observational data and incorporate additional systematics such as line-of-sight perturbers; the paper is available at [arXiv:2208.13796](https://arxiv.org/abs/2208.13796).