How Do Transformers "Do" Physics? Investigating the Simple Harmonic Oscillator

Authors

Subhash Kantamneni, Ziming Liu, Max Tegmark

Abstract

How do transformers model physics? Do transformers model systems with interpretable analytical solutions, or do they create "alien physics" that are difficult for humans to decipher? We take a step in demystifying this larger puzzle by investigating the simple harmonic oscillator (SHO), $\ddot{x}+2γ\dot{x}+ω_0^2x=0$, one of the most fundamental systems in physics. Our goal is to identify the methods transformers use to model the SHO, and to do so we hypothesize and evaluate possible methods by analyzing the encoding of these methods' intermediates. We develop four criteria for the use of a method within the simple testbed of linear regression, where our method is $y = wx$ and our intermediate is $w$: (1) Can the intermediate be predicted from hidden states? (2) Is the intermediate's encoding quality correlated with model performance? (3) Can the majority of variance in hidden states be explained by the intermediate? (4) Can we intervene on hidden states to produce predictable outcomes? Armed with these two correlational (1,2), weak causal (3) and strong causal (4) criteria, we determine that transformers use known numerical methods to model trajectories of the simple harmonic oscillator, specifically the matrix exponential method. Our analysis framework can conveniently extend to high-dimensional linear systems and nonlinear systems, which we hope will help reveal the "world model" hidden in transformers.

Concepts

The Big Picture

Hand a black box to a physicist and ask: “Does this thing actually understand the laws of motion, or is it just memorizing patterns?” That’s one of the big open questions in AI research.

Transformers (the architecture behind GPT, Claude, and a growing number of scientific models) have proven eerily good at predicting physical systems. But nobody really knows how. Do they rediscover the same equations physicists use? Or do they invent some alien internal calculus that happens to give the right answers?

Subhash Kantamneni, Ziming Liu, and Max Tegmark at MIT set out to answer this by studying one of the simplest physical systems imaginable: the simple harmonic oscillator (SHO), whose equation of motion describes everything from pendulums to quantum fields. Rather than guess what the transformer is doing, they built a framework to test specific hypotheses about its internal computations.

What they found: transformers appear to solve the SHO the same way a careful physicist would, by computing the matrix exponential, the standard recipe for projecting a linear system’s state forward in time. The “alien physics” worry, at least for this system, doesn’t hold up.

Key Insight: When trained to predict oscillator trajectories, transformers internally compute the same matrix exponential that physicists use. They appear to learn recognizable methods, not inscrutable shortcuts.

How It Works

The researchers framed their investigation as a hypothesis-testing problem. Given a known solution method g (say, the matrix exponential), does the transformer actually use g internally? If so, g’s intermediate computational quantities should be encoded somewhere in the transformer’s hidden states.

They started with a simpler stand-in problem: in-context linear regression, where a model estimates the relationship between inputs and outputs (a slope w) purely from examples, without being told the underlying formula. The model never sees w directly but must implicitly compute it to predict y = wx correctly. Three possibilities describe how an intermediate can appear in a hidden state:

- Linearly encoded: a linear probe (a linear map fit from hidden states to the target) can recover w from the hidden state

- Nonlinearly encoded: w itself is not linearly recoverable, but some function f(w) is. A Taylor probe finds it by searching over polynomial combinations of w, using a statistical technique that identifies shared structure between two sets of measurements

- Not encoded: neither approach finds the intermediate
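The first two cases can be illustrated with a minimal sketch on synthetic data. Everything here is an assumption made for illustration: the "hidden states" are random vectors built by hand rather than taken from a trained transformer, the helper `probe_r2` is hypothetical, and the polynomial probe is a simplified stand-in that tests one fixed term (w²) instead of searching over combinations as a Taylor probe does.

```python
import numpy as np

def probe_r2(H, target, n_train):
    """Fit a least-squares linear probe on the first n_train rows of H,
    then report R^2 for predicting `target` on the held-out rows."""
    X = np.hstack([H, np.ones((len(H), 1))])  # append a bias column
    coef, *_ = np.linalg.lstsq(X[:n_train], target[:n_train], rcond=None)
    resid = target[n_train:] - X[n_train:] @ coef
    centered = target[n_train:] - target[n_train:].mean()
    return 1.0 - (resid @ resid) / (centered @ centered)

rng = np.random.default_rng(0)
n, d, n_train = 2000, 16, 1000
w = rng.uniform(-1.0, 1.0, size=n)           # the intermediate we probe for
noise = 0.01 * rng.normal(size=(n, d))

# Case A (assumed): w is written linearly along one direction.
H_linear = np.outer(w, rng.normal(size=d)) + noise
# Case B (assumed): only w^2 is written, so w is nonlinearly encoded.
H_nonlinear = np.outer(w**2, rng.normal(size=d)) + noise

r2_linear_case = probe_r2(H_linear, w, n_train)           # probe for w
r2_missed = probe_r2(H_nonlinear, w, n_train)             # probe for w fails
r2_polynomial = probe_r2(H_nonlinear, w**2, n_train)      # probe for w^2 works

print(f"linear probe, linear encoding:    R^2 = {r2_linear_case:.3f}")
print(f"linear probe, nonlinear encoding: R^2 = {r2_missed:.3f}")
print(f"w^2 probe, nonlinear encoding:    R^2 = {r2_polynomial:.3f}")
```

In this toy setup the linear probe recovers w only in case A, while the w² target is recoverable in case B. The "not encoded" case corresponds to both probes scoring near zero.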

From there, they laid out four criteria for establishing that a transformer actually uses a given method, ordered from weak to strong:

- Correlational: Can the intermediate be predicted from hidden states?

- Correlational: Does encoding quality correlate with model performance?

- Weak causal: Does the intermediate explain most of the variance in the hidden states?

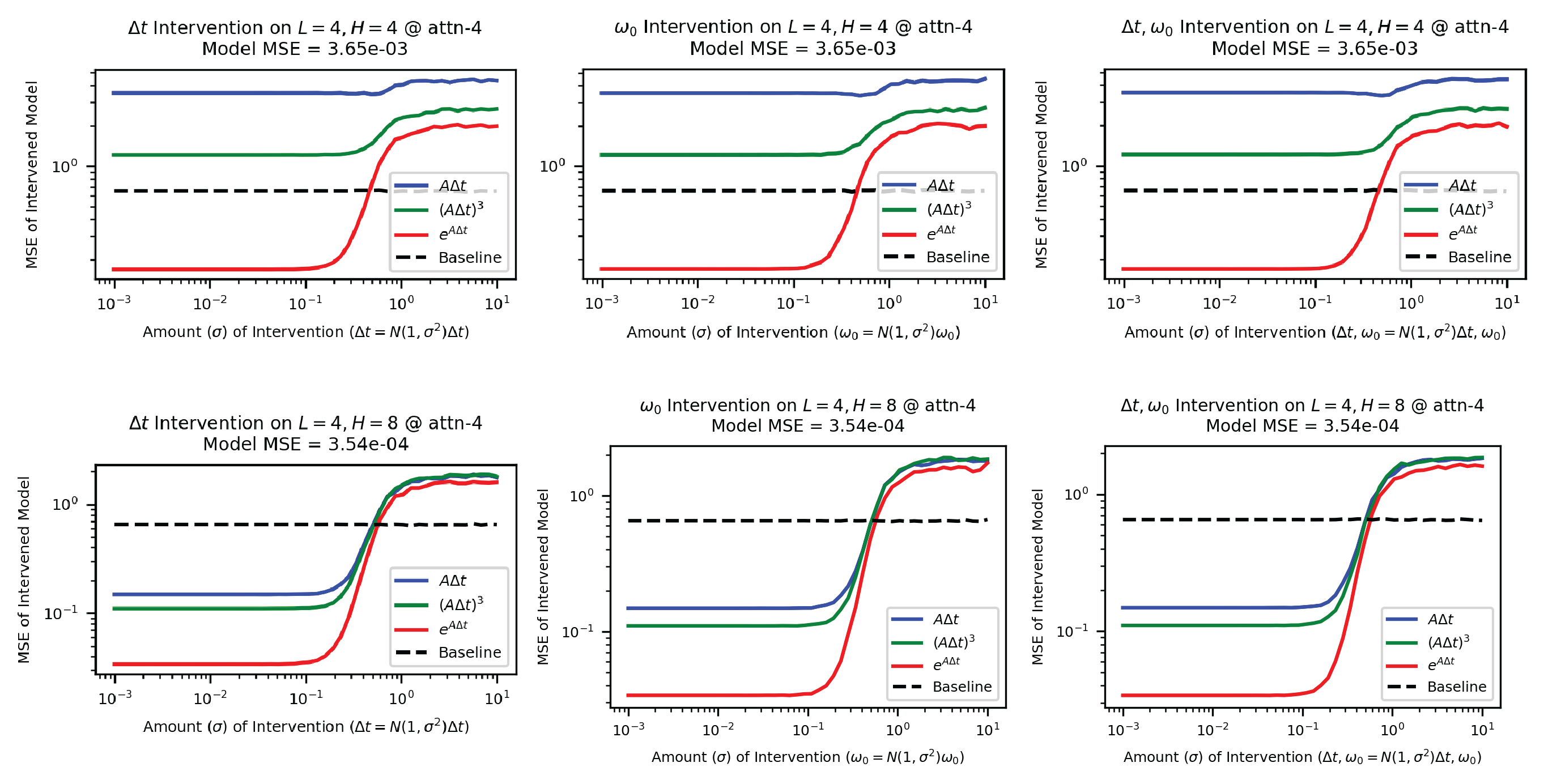

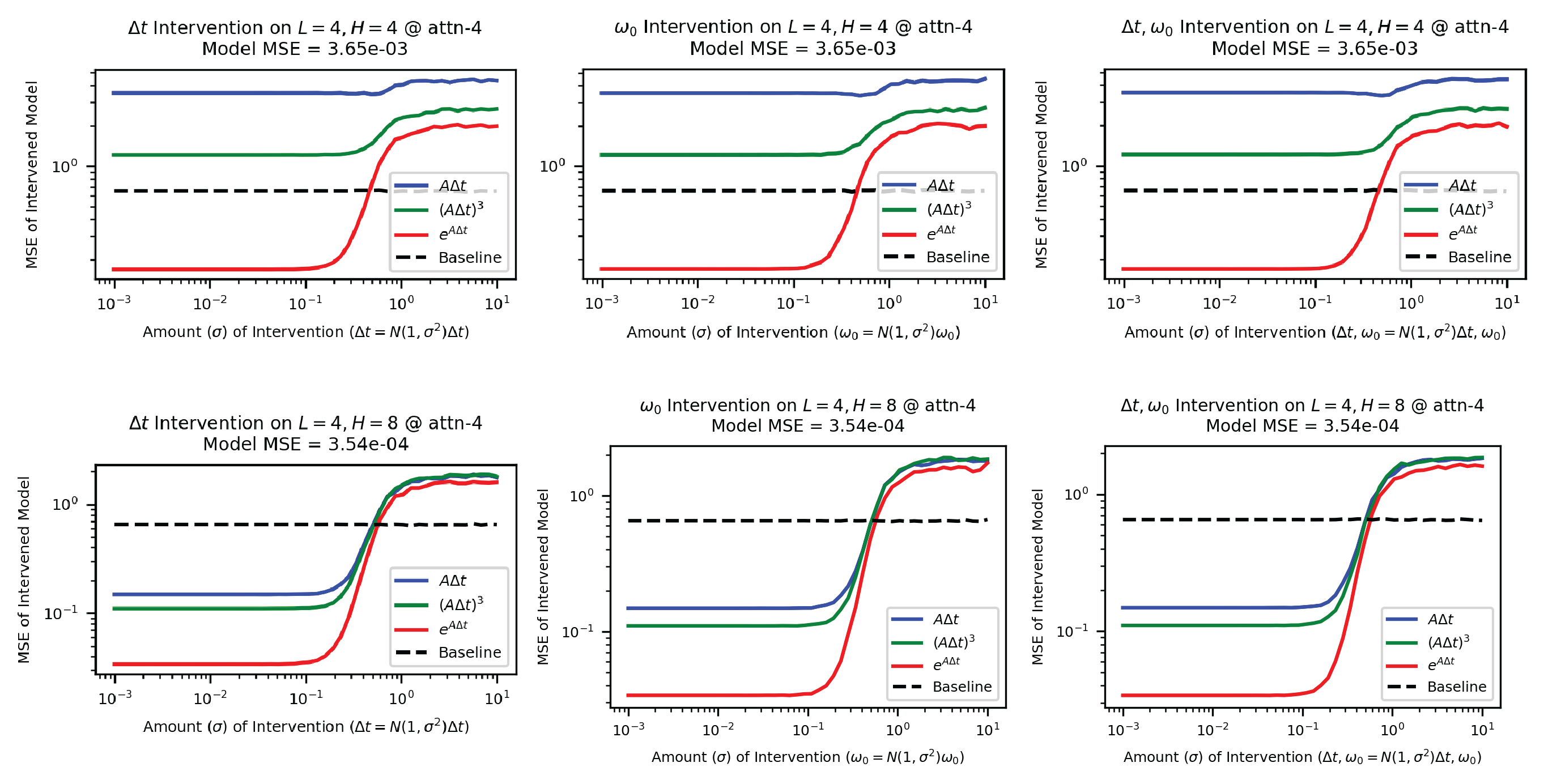

- Strong causal: Can you intervene on the hidden states to predictably change model output?

That fourth criterion is the gold standard. If you can surgically edit the transformer’s hidden representation of an intermediate and watch the output change in exactly the predicted way, you have genuine causal evidence, not just correlation.
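The two causal criteria can be sketched on a toy model of in-context linear regression. This is an illustration under stated assumptions, not the authors' procedure: the hidden states are synthetic, the encoding direction `enc` is known by construction, and `toy_model_output` is a hypothetical stand-in for the rest of the network. With a real transformer, one would patch an internal activation and rerun the remaining layers.

```python
import numpy as np

rng = np.random.default_rng(1)
n, d = 1000, 8
w = rng.uniform(-1.0, 1.0, size=n)   # true slope for each example
x = rng.uniform(-1.0, 1.0, size=n)   # query input for each example

enc = rng.normal(size=d)             # assumed direction that stores w
readout = enc / (enc @ enc)          # decodes w back out: readout @ enc == 1
H = np.outer(w, enc) + 0.02 * rng.normal(size=(n, d))

def toy_model_output(H, x):
    """Hypothetical downstream computation: decode w from h, return w * x."""
    return (H @ readout) * x

# Weak causal check: fraction of hidden-state variance explained by w.
W = np.column_stack([w, np.ones(n)])
coef, *_ = np.linalg.lstsq(W, H, rcond=None)
resid = H - W @ coef
var_explained = 1.0 - np.sum(resid**2) / np.sum((H - H.mean(axis=0)) ** 2)

# Strong causal check: overwrite the encoded slope with w_new and
# confirm the output moves to w_new * x, as the method y = wx predicts.
w_new = 0.5
w_decoded = H @ readout
H_patched = H + np.outer(w_new - w_decoded, enc)
y_patched = toy_model_output(H_patched, x)
max_error = np.max(np.abs(y_patched - w_new * x))

print(f"variance explained by w: {var_explained:.3f}")
print(f"max deviation from predicted output after patching: {max_error:.2e}")
```

Because the toy readout is linear and exact by construction, the intervention succeeds trivially here. The point is the shape of the test: edit the representation of the intermediate, then compare the new output against what the hypothesized method says it should be.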

The team then turned to the main event: the simple harmonic oscillator, governed by ẍ + 2γẋ + ω₀²x = 0. They trained transformers to predict the next position and velocity from past observations, varying the damping parameter γ and natural frequency ω₀ across training examples. Five candidate hypotheses for how the model might solve the problem ranged from directly inferring γ and ω₀ to running numerical solvers like Euler’s method (a step-by-step approximation) or the matrix exponential.
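To see where Euler's method and the matrix exponential come from, it helps to rewrite the second-order equation as a first-order linear system. The following is the standard textbook reduction; the symbols v (velocity), A (dynamics matrix), and Δt (time step) are notation chosen here for illustration and may differ from the paper's.

```latex
% State vector: position and velocity, with v = \dot{x}
\frac{d}{dt}
\begin{pmatrix} x \\ v \end{pmatrix}
= A \begin{pmatrix} x \\ v \end{pmatrix},
\qquad
A = \begin{pmatrix} 0 & 1 \\ -\omega_0^2 & -2\gamma \end{pmatrix}

% Exact one-step update (matrix exponential):
\begin{pmatrix} x(t+\Delta t) \\ v(t+\Delta t) \end{pmatrix}
= e^{A\,\Delta t}
\begin{pmatrix} x(t) \\ v(t) \end{pmatrix}

% Euler's method keeps only the first-order term of the exponential:
e^{A\,\Delta t} = I + A\,\Delta t + \tfrac{1}{2}(A\,\Delta t)^2 + \cdots
\;\approx\; I + A\,\Delta t
```

The second row of A reproduces the original equation, since it reads $\dot{v} = -\omega_0^2 x - 2\gamma v$.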

The matrix exponential hypothesis won on all four criteria. Hidden states in the transformer encode the matrix exponential of the system’s dynamics matrix, which captures how position and velocity evolve together. Encoding quality tracks model accuracy across training checkpoints, and the matrix exponential explains the dominant variance in hidden states.
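As a rough numerical illustration of why the matrix exponential is an attractive method, the sketch below propagates a damped oscillator with both the matrix exponential and Euler's method and compares each against the closed-form underdamped solution. The parameter values, the function names, and the scaling-and-squaring routine are all choices made for this example; they do not come from the paper, and a library routine such as SciPy's `expm` would normally replace the hand-written exponential.

```python
import numpy as np

def dynamics_matrix(gamma, omega0):
    """Matrix A for d/dt (x, v) = A (x, v) with x'' + 2*gamma*x' + omega0^2*x = 0."""
    return np.array([[0.0, 1.0], [-omega0**2, -2.0 * gamma]])

def matrix_exponential(M, squarings=10, terms=12):
    """exp(M) via a truncated Taylor series with scaling and squaring."""
    scaled = M / (2**squarings)
    result = np.eye(M.shape[0])
    term = np.eye(M.shape[0])
    for k in range(1, terms + 1):
        term = term @ scaled / k          # (scaled^k) / k!
        result = result + term
    for _ in range(squarings):
        result = result @ result          # undo the scaling
    return result

def rollout(step_matrix, state0, n_steps):
    """Apply the same one-step update repeatedly; returns all states."""
    states = [np.asarray(state0, dtype=float)]
    for _ in range(n_steps):
        states.append(step_matrix @ states[-1])
    return np.array(states)

def exact_position(t, x0, v0, gamma, omega0):
    """Closed-form underdamped solution (valid for gamma < omega0)."""
    omega = np.sqrt(omega0**2 - gamma**2)
    envelope = np.exp(-gamma * t)
    return envelope * (x0 * np.cos(omega * t)
                       + (v0 + gamma * x0) / omega * np.sin(omega * t))

gamma, omega0, dt, n_steps = 0.1, 2.0, 0.05, 200   # illustrative values
A = dynamics_matrix(gamma, omega0)
t = dt * np.arange(n_steps + 1)
x_exact = exact_position(t, 1.0, 0.0, gamma, omega0)

x_expm = rollout(matrix_exponential(A * dt), [1.0, 0.0], n_steps)[:, 0]
x_euler = rollout(np.eye(2) + A * dt, [1.0, 0.0], n_steps)[:, 0]

expm_error = np.max(np.abs(x_expm - x_exact))
euler_error = np.max(np.abs(x_euler - x_exact))
print(f"matrix exponential max error: {expm_error:.2e}")
print(f"Euler max error:              {euler_error:.2e}")
```

The matrix exponential update is exact for a linear system up to floating-point error, whereas the Euler update accumulates error at every step, so the gap between the two grows with the length of the trajectory.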

The clincher: when the researchers manually modified the hidden state’s representation of the matrix exponential, model predictions shifted in exactly the direction the formula predicted. The model’s internal representation isn’t just correlated with the matrix exponential. It’s driving the output.

Why It Matters

A persistent worry in AI-for-science is that neural networks might build world models that are functionally correct but conceptually alien: right answers arrived at through representations so foreign that humans can never audit, trust, or build on them. Here, a transformer trained on physics data reached for the same mathematical tool a physicist would. That’s reassuring, at least for this system.

The framework itself may be the bigger contribution. The four-criteria methodology (two correlational, one weak causal, one strong causal) gives researchers a clear ladder for making mechanistic claims about what any model computes internally. The same approach can probe transformers trained on high-dimensional linear systems, nonlinear dynamics, and more complex physical scenarios.

If future work confirms that transformers consistently rediscover standard numerical methods, it would point to some built-in inductive bias in these architectures toward human-interpretable computation. That would be a surprising result, and one worth paying attention to.

Bottom Line: Transformers trained on oscillator physics internally compute the matrix exponential, the same method physicists use. A new four-criteria framework can rigorously test such claims, giving researchers a way to audit the “world models” hidden inside large AI systems.

IAIFI Research Highlights

The work brings mechanistic interpretability and classical physics into direct contact, using the simple harmonic oscillator as a testbed. The result: transformer architectures rediscover standard numerical methods that physicists have used for decades.

The four-criteria framework (two correlational, two causal) provides a general method for probing whether any transformer uses a specific computational approach, expanding the toolkit available for mechanistic interpretability research.

That transformers learn the matrix exponential for oscillator dynamics suggests that AI models trained on physical data can encode interpretable physics, which could make them easier to trust for scientific simulation.

Future work will extend this framework to high-dimensional linear systems and nonlinear dynamics, with the goal of mapping the full "world model" encoded in transformers trained on physical data. See the full paper: [arXiv:2405.17209](https://arxiv.org/abs/2405.17209) by Kantamneni, Liu, and Tegmark.

Original Paper Details

How Do Transformers "Do" Physics? Investigating the Simple Harmonic Oscillator

2405.17209

Subhash Kantamneni, Ziming Liu, Max Tegmark
