High-dimensional inference for the $γ$-ray sky with differentiable programming

Authors

Siddharth Mishra-Sharma, Tracy R. Slatyer, Yitian Sun, Yuqing Wu

Abstract

We motivate the use of differentiable probabilistic programming techniques in order to account for the large model-space inherent to astrophysical $γ$-ray analyses. Targeting the longstanding Galactic Center $γ$-ray Excess (GCE) puzzle, we construct a differentiable forward model and likelihood that make liberal use of GPU acceleration and vectorization in order to simultaneously account for a continuum of possible spatial morphologies consistent with the GCE emission in a fully probabilistic manner. Our setup allows for efficient inference over the large model space using variational methods. Beyond application to $γ$-ray data, a goal of this work is to showcase how differentiable probabilistic programming can be used as a tool to enable flexible analyses of astrophysical datasets.

Concepts

The Big Picture

Imagine trying to solve a crime with a blurry photograph taken from space, where the suspect might be hiding behind a thick fog of unrelated activity, and the fog itself keeps shifting. That’s roughly the situation astronomers face when studying a decades-old mystery at the heart of our galaxy: an unexplained glow of high-energy gamma rays that shouldn’t quite be there.

This glow, called the Galactic Center Gamma-ray Excess (GCE), was first spotted over a decade ago in data from NASA’s Fermi space telescope. Its properties look tantalizingly like what you’d expect from dark matter particles annihilating each other in the dense gravitational core of the Milky Way. But another explanation competes: a large population of dim, rapidly spinning neutron stars called millisecond pulsars, too faint to see individually but collectively producing the same signal. Telling the two apart has been nearly impossible, partly because the analysis tools themselves introduce subtle biases tied to rigid assumptions about the signal’s shape.

A new paper from Siddharth Mishra-Sharma, Tracy Slatyer, Yitian Sun, and Yuqing Wu takes this problem on directly. The team built a flexible, high-speed framework, powered by graphics processors and grounded in rigorous probabilistic statistics, that can explore a vast space of possible gamma-ray sky models simultaneously.

Key Insight: By connecting every step of their gamma-ray analysis into one system that can be optimized end to end, the researchers can efficiently explore a continuum of possible signal shapes at once, rather than committing to a single rigid template. The result is a far more honest picture of what the Fermi data actually tell us.

How It Works

Traditional gamma-ray analyses fit spatial templates (pre-computed maps of where emission is expected) to observed photon counts. The problem: these templates are fixed. Choose the wrong shape and your results become contaminated, because the analysis can’t distinguish a real signal from a modeling artifact.
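
To make the template problem concrete, here is a minimal sketch of a Poissonian template fit in plain Python. The four-pixel sky, the template shapes, the counts, and the function names are all made up for illustration and are not taken from the paper. The sketch compares how well a centrally peaked signal template and a mis-shaped one explain the same counts when both use the same normalizations.

```python
import math

def expected_counts(norms, templates):
    """Sum the templates, each scaled by its normalization, pixel by pixel."""
    n_pix = len(templates[0])
    return [sum(a * t[p] for a, t in zip(norms, templates)) for p in range(n_pix)]

def poisson_loglike(counts, mu):
    """Poisson log-likelihood of the observed counts, summed over pixels."""
    return sum(k * math.log(m) - m - math.lgamma(k + 1) for k, m in zip(counts, mu))

# Hypothetical 4-pixel sky (illustrative numbers only).
background = [1.0, 1.0, 1.0, 1.0]   # flat diffuse template
peaked = [0.1, 0.4, 0.4, 0.1]       # signal template concentrated in the center
edge_heavy = [0.4, 0.1, 0.1, 0.4]   # same total signal, wrong shape
counts = [5, 8, 9, 5]               # observed photons per pixel
norms = [5.0, 8.0]                  # background and signal normalizations

ll_right = poisson_loglike(counts, expected_counts(norms, [background, peaked]))
ll_wrong = poisson_loglike(counts, expected_counts(norms, [background, edge_heavy]))
print(ll_right > ll_wrong)  # prints True
```

Both signal templates predict the same total number of photons, so the difference in likelihood comes entirely from shape. A fit locked to the wrong template has no way to recover from that choice.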

The leading statistical technique for this problem, Non-Poissonian Template Fitting (NPTF), was built to handle both the smooth glow of diffuse emission and the irregular, clumpy statistics produced by faint point sources. But all previous NPTF applications suffered from exactly this template rigidity.

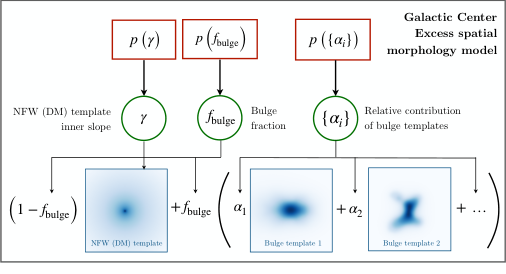

The new framework replaces static templates with a dynamic differentiable forward model: a mathematical description of the sky where every parameter can be continuously adjusted and the entire system optimized automatically. Here’s the pipeline:

- Differentiable forward model: Sky maps for different emission components (diffuse cosmic-ray backgrounds, the GCE signal, unresolved point sources) are constructed as smooth, mathematically connected functions of their parameters. Every step, from physical assumptions to predicted photon counts, can be efficiently tuned without manual intervention.

- GPU acceleration: Each model evaluation requires computing expected photon counts across thousands of pixels while accounting for uncertainty across all model parameters. The team uses graphics processing units (GPUs), the same chips that power video games, to run these computations in parallel.

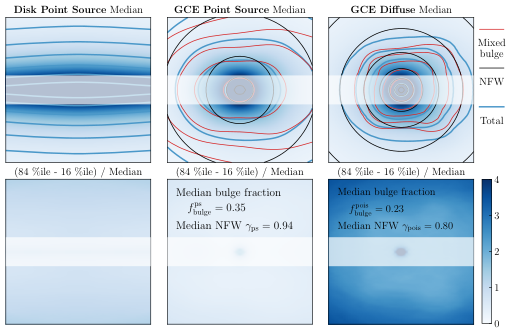

- Flexible morphology: Rather than fixing one spatial profile for the GCE, the framework treats the signal’s shape as a continuous parameter. It simultaneously considers a whole family of plausible forms, weighted by their consistency with the data.

- Variational inference: The model space is too large for traditional sampling. Instead, the team uses variational inference, a technique that approximates complex probability distributions with simpler, tractable ones optimized via gradient descent, to efficiently characterize the full range of likely models.
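
The pipeline above can be sketched on a toy problem. The following is an illustration under loud assumptions, not the authors' code: the one-dimensional "sky", the counts, the power-law signal profile with slope `gamma`, the fixed normalizations, and every function name are hypothetical. A central finite difference stands in for the exact gradients that an automatic differentiation library would provide, and the approximating distribution is a single Gaussian over `gamma`.

```python
import math
import random

# Hypothetical 1D "sky": pixel radii and photon counts (made-up numbers).
RADII = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0]
COUNTS = [48, 22, 14, 11, 9, 7, 6, 6]
BACKGROUND, SIGNAL = 2.0, 20.0  # fixed normalizations, assumed known here

def log_joint(gamma):
    """Poisson log-likelihood plus a Normal(1, 1) log-prior on the slope gamma."""
    logp = -0.5 * (gamma - 1.0) ** 2
    for r, k in zip(RADII, COUNTS):
        mu = BACKGROUND + SIGNAL * r ** (-gamma)  # forward model for one pixel
        logp += k * math.log(mu) - mu - math.lgamma(k + 1)
    return logp

def grad_log_joint(gamma, h=1e-5):
    """Central finite difference; an autodiff library would supply this exactly."""
    return (log_joint(gamma + h) - log_joint(gamma - h)) / (2.0 * h)

def elbo(mean, log_std, eps):
    """Sample-average ELBO (up to an additive constant) for q = Normal(mean, std)."""
    std = math.exp(log_std)
    return sum(log_joint(mean + std * e) for e in eps) / len(eps) + log_std

def fit(mean=0.5, log_std=math.log(0.3), steps=1500, lr=0.002, seed=0):
    """Gradient ascent on the ELBO with reparameterized samples gamma = mean + std * eps."""
    rng = random.Random(seed)
    half = [rng.gauss(0.0, 1.0) for _ in range(16)]
    eps = half + [-e for e in half]  # antithetic pairs, reused at every step
    start = elbo(mean, log_std, eps)
    for _ in range(steps):
        std = math.exp(log_std)
        grads = [grad_log_joint(mean + std * e) for e in eps]
        g_mean = sum(grads) / len(eps)
        # Chain rule through gamma = mean + std * eps, plus the entropy term (+1).
        g_log_std = sum(g * e for g, e in zip(grads, eps)) / len(eps) * std + 1.0
        mean += lr * g_mean
        log_std += lr * g_log_std
    return mean, math.exp(log_std), start, elbo(mean, log_std, eps)

mean, std, elbo_start, elbo_end = fit()
print(f"q(gamma) ~ Normal({mean:.2f}, {std:.2f})")
```

The fitted Gaussian summarizes which slopes are consistent with the toy counts, which is the sense in which the signal's shape is treated as a continuous parameter weighted by the data. A real analysis would have many more parameters and would lean on automatic differentiation and GPU vectorization rather than Python loops.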

The point source component uses a flexible source count function (SCF) parameterized as a broken power law, characterizing how many sources contribute a given number of photons. This captures the statistics of faint, unresolved point sources without detecting them individually. The combined model handles both Poissonian emission (the smooth, predictable statistics of diffuse light) and the non-Poissonian statistics of point source populations. The entire pipeline remains differentiable end-to-end.
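
As a rough illustration of a broken power law source count function, the sketch below uses a single break. The function names, the parameter names, and the convention that one index applies above the break and another below it are assumptions made for this example. The parameterization used in the paper may differ, for instance in the number of breaks.

```python
def source_count_function(s, amplitude, s_break, n_above, n_below):
    """Broken power law dN/dS: index n_above for s >= s_break, n_below otherwise."""
    index = n_above if s >= s_break else n_below
    return amplitude * (s / s_break) ** (-index)

def total_expected_counts(amplitude, s_break, n_above, n_below):
    """Analytic integral of S * dN/dS over all S; finite only if n_above > 2 > n_below."""
    if not (n_above > 2.0 > n_below):
        raise ValueError("integral diverges unless n_above > 2 > n_below")
    return amplitude * s_break**2 * (1.0 / (2.0 - n_below) + 1.0 / (n_above - 2.0))

# Hypothetical parameter values, chosen only to make the numbers easy to follow.
params = dict(amplitude=2.0, s_break=10.0, n_above=3.0, n_below=0.5)

for s in (2.5, 10.0, 20.0):
    print(s, source_count_function(s, **params))
print(total_expected_counts(**params))
```

The two indices control different things. The steep index above the break limits how many bright sources exist. The shallow index below it sets how much of the emission comes from sources too faint to detect individually.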

Applied to real Fermi-LAT data, the framework finds results consistent with a significant GCE, but with uncertainty about the signal’s shape properly accounted for rather than hidden behind fixed assumptions. Validation on simulated datasets shows that the method produces somewhat wider posteriors in some regimes, an expected feature of variational approximations, while avoiding the systematic biases that plague rigid-template approaches. The framework also lets researchers assess whether apparent evidence for point sources might arise from diffuse emission mismodeling, a longstanding concern in the field.

Why It Matters

The GCE puzzle is one of the most tantalizing open questions in astroparticle physics. A dark matter annihilation origin would be the first indirect detection of a particle making up roughly 27% of the universe’s total energy content. A millisecond pulsar origin reshapes our understanding of neutron star populations in the galactic core. The stakes are high enough that getting the statistical methodology right isn’t academic bookkeeping; it determines whether we’re seeing new fundamental physics or a known astrophysical population we’ve underestimated.

This work also points toward a shift in how astrophysics handles complex inference more broadly. The same approach (automatic differentiation, GPU hardware, and variational methods borrowed from machine learning) can in principle be applied to any dataset where the model space is large and rigid templates fall short. Cosmic microwave background analysis, gravitational wave parameter estimation, and galaxy survey cosmology all face similar challenges. The authors have released their pipeline as public code, so other groups can build on it directly.

Bottom Line: By making gamma-ray sky modeling fully differentiable and GPU-native, this work breaks the constraint that forced astronomers to assume a single rigid spatial template for the Galactic Center Excess, and provides a blueprint for honest high-dimensional Bayesian inference across astrophysics.

IAIFI Research Highlights

This work imports differentiable programming and variational inference from machine learning into high-stakes astrophysical inference, showing that GPU-native AI infrastructure can resolve longstanding methodological bottlenecks in fundamental physics data analysis.

The framework puts differentiable probabilistic programming to work as a practical tool for high-dimensional scientific inference, extending variational methods to problems with complex, physically-motivated likelihood functions that were previously intractable.

By enabling flexible, morphology-agnostic analysis of the Galactic Center Gamma-ray Excess, this work advances the search for dark matter annihilation signals and brings new rigor to one of astroparticle physics' most contested open questions.

Future work could integrate Gaussian process priors on spatial morphology and extend the framework to multi-wavelength datasets; the paper is available at [arXiv:2604.08648](https://arxiv.org/abs/2604.08648) with public code released alongside publication.

Original Paper Details

High-dimensional inference for the $γ$-ray sky with differentiable programming

2604.08648

Siddharth Mishra-Sharma, Tracy R. Slatyer, Yitian Sun, Yuqing Wu

We motivate the use of differentiable probabilistic programming techniques in order to account for the large model-space inherent to astrophysical $γ$-ray analyses. Targeting the longstanding Galactic Center $γ$-ray Excess (GCE) puzzle, we construct a differentiable forward model and likelihood that make liberal use of GPU acceleration and vectorization in order to simultaneously account for a continuum of possible spatial morphologies consistent with the GCE emission in a fully probabilistic manner. Our setup allows for efficient inference over the large model space using variational methods. Beyond application to $γ$-ray data, a goal of this work is to showcase how differentiable probabilistic programming can be used as a tool to enable flexible analyses of astrophysical datasets.