Grayscale to Hyperspectral at Any Resolution Using a Phase-Only Lens

Authors

Dean Hazineh, Federico Capasso, Todd Zickler

Abstract

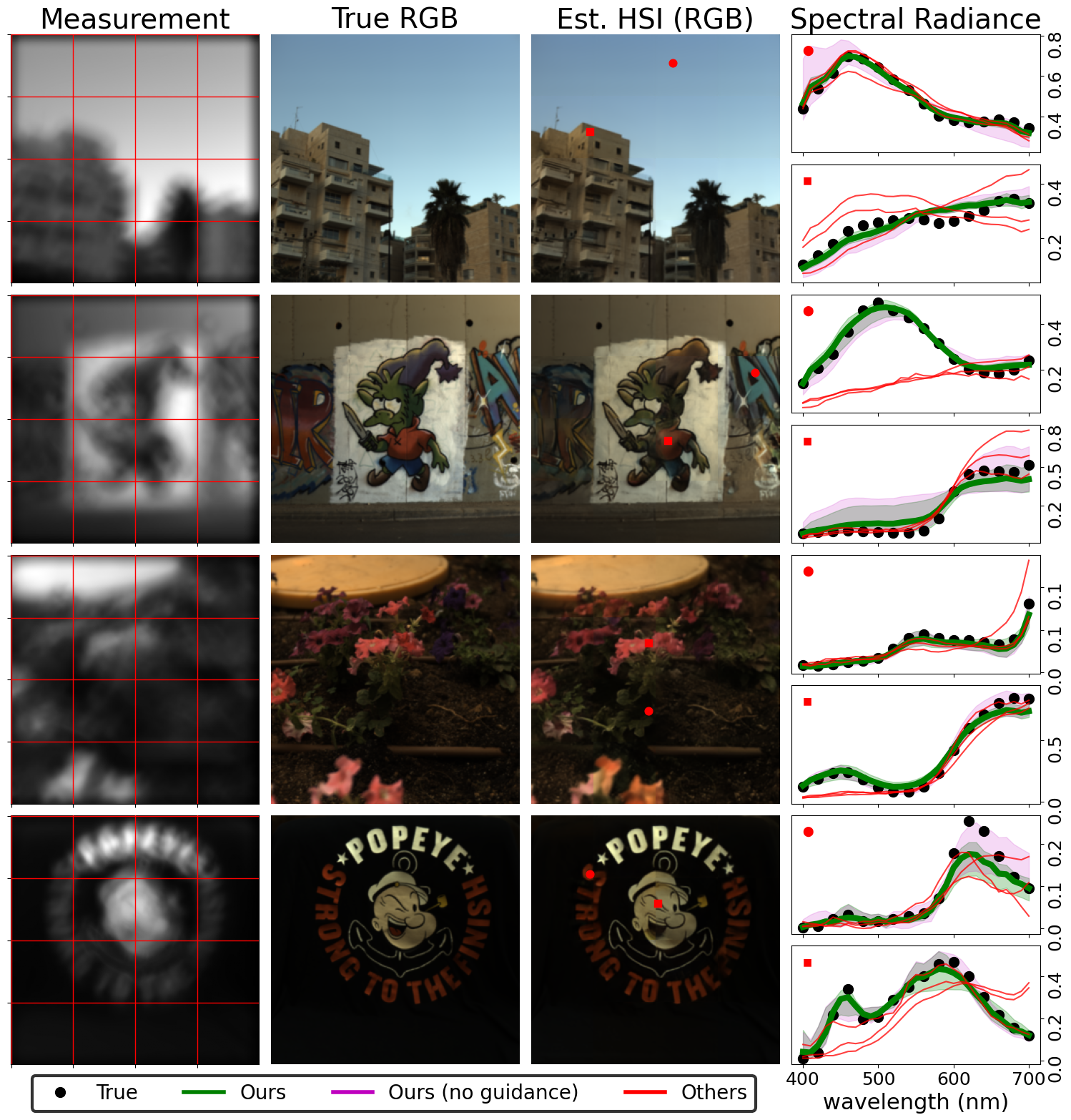

We consider the problem of reconstructing a HxWx31 hyperspectral image from a HxW grayscale snapshot measurement that is captured using only a single diffractive optic and a filterless panchromatic photosensor. This problem is severely ill-posed, but we present the first model that produces high-quality results. We make efficient use of limited data by training a conditional denoising diffusion model that operates on small patches in a shift-invariant manner. During inference, we synchronize per-patch hyperspectral predictions using guidance derived from the optical point spread function. Surprisingly, our experiments reveal that patch sizes as small as the PSF's support achieve excellent results, and they show that local optical cues are sufficient to capture full spectral information. Moreover, by drawing multiple samples, our model provides per-pixel uncertainty estimates that strongly correlate with reconstruction error. Our work lays the foundation for a new class of high-resolution snapshot hyperspectral imagers that are compact and light-efficient.

Concepts

The Big Picture

Imagine trying to reconstruct a full rainbow from a single black-and-white photograph. Not just guessing which objects might be red or blue based on context, but recovering the precise spectral fingerprint of every pixel across 31 distinct wavelength bands, from a grayscale image with no color information at all. A team of Harvard researchers just pulled it off.

Where a standard camera captures three color channels (red, green, blue), a hyperspectral imager records dozens, producing a rich spectral fingerprint of every point in a scene. These cameras detect crop disease from aircraft, identify minerals on Mars, and guide cancer surgeons in the operating room.

The catch? Traditional hyperspectral cameras are bulky, expensive, and light-hungry. They rely on complex optical systems, stacks of color filters, or sensors with far more pixels than the final image. Researchers at Harvard’s School of Engineering and Applied Sciences have now done something that probably shouldn’t work: reconstructing a full, high-resolution hyperspectral image from a plain grayscale snapshot, using nothing but a single thin flat lens and a camera sensor with no color filters.

Key Insight: A single diffractive flat optic imprints subtle chromatic aberrations into an ordinary grayscale image, and a patch-based diffusion model can decode those hidden spectral signatures to reconstruct full hyperspectral information at any image resolution.

How It Works

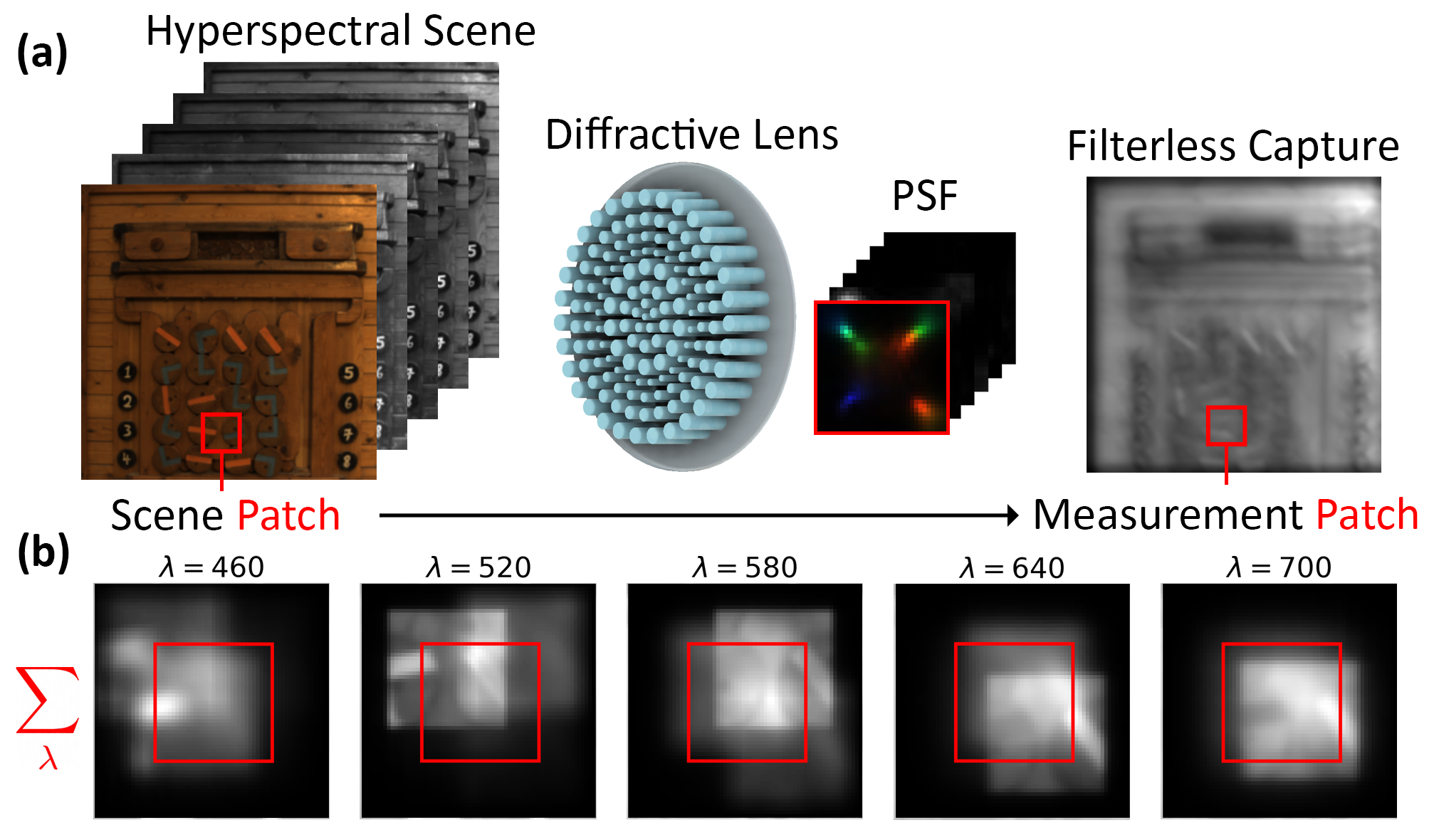

It starts with the lens. A diffractive optical element (a flat surface patterned with fine structures; a metalens is one example) bends different wavelengths of light by different amounts. This isn’t a bug; it’s the feature.

Traditional camera designers work hard to eliminate chromatic aberration, the tendency of a lens to focus different colors at slightly different points. Here, the researchers deliberately embrace it. The lens smears each wavelength into a slightly different blur pattern on the sensor, encoding spectral information directly into the spatial structure of the grayscale image.

The optical encoding works like this: each pixel’s recorded brightness is a weighted sum over wavelengths of the scene’s spectral content, with each band blurred by its own wavelength-dependent kernel. That kernel is the point spread function (PSF), which describes how a single point of light spreads on the sensor, and it differs across all 31 wavelength bands. The sensor records a single grayscale value at each pixel, but that value carries a scrambled mix of spectral signals. The reconstruction problem is to unscramble them.
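The encoding described above can be sketched as a small forward model. This is an illustrative sketch, not the authors' simulator: the function names, the per-band `sensitivity` weights, the random toy PSFs, and the circular (wrap-around) boundary handling are all assumptions made for the example.

```python
import numpy as np

def circular_convolve2d(image, kernel):
    """2D circular convolution via FFT, with the kernel centered on the origin."""
    padded = np.zeros(image.shape)
    kh, kw = kernel.shape
    padded[:kh, :kw] = kernel
    # Move the kernel's center pixel to index (0, 0) so the output is not shifted.
    padded = np.roll(padded, (-(kh // 2), -(kw // 2)), axis=(0, 1))
    return np.real(np.fft.ifft2(np.fft.fft2(image) * np.fft.fft2(padded)))

def grayscale_measurement(cube, psfs, sensitivity):
    """Simulate a filterless snapshot (assumed model, wrap-around borders).

    cube:        (H, W, C) hyperspectral scene
    psfs:        (C, k, k) one blur kernel per wavelength band
    sensitivity: (C,) assumed sensor response per band
    """
    gray = np.zeros(cube.shape[:2])
    for c in range(cube.shape[2]):
        # Each band is blurred by its own PSF, then all bands are summed.
        gray += sensitivity[c] * circular_convolve2d(cube[:, :, c], psfs[c])
    return gray

# Toy usage with random data standing in for a real scene and real PSFs.
rng = np.random.default_rng(0)
cube = rng.random((32, 32, 31))
psfs = rng.random((31, 7, 7))
psfs /= psfs.sum(axis=(1, 2), keepdims=True)  # each PSF conserves energy
sensitivity = np.full(31, 1.0 / 31)
gray = grayscale_measurement(cube, psfs, sensitivity)
print(gray.shape)  # (32, 32)
```

The 31 input bands collapse into one number per pixel, which is why the inverse problem is so underdetermined: the only spectral cue left is how the blur shape differs from band to band.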

To decode this, the team trained a conditional denoising diffusion model (the same class of generative model behind modern image synthesis), repurposed here for a physical inverse problem. Three design choices make it work:

- Patch-based training: Rather than full images (scarce in hyperspectral research), the model trains on 64×64 spatial patches.

- Shift-invariant PSF: Because the diffractive lens produces the same blur response across the entire image plane, a patch-trained model generalizes to any image size, from a small test crop to a 1280×1280 full-field scene.

- PSF-guided inference: Patches are denoised in parallel and assembled into a full image; a guidance signal derived from the PSF then pushes the result to be consistent with the original grayscale measurement. This resolves the ambiguity that arises because the blur spreads light from each patch across its boundaries into neighboring patches.
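The three design choices above can be sketched together in a toy loop: tile the current estimate into patches, run a per-patch denoiser, reassemble, and take one gradient step on the measurement mismatch. This is a simplified sketch under stated assumptions, not the paper's sampler: `denoise_patch` is a hypothetical stand-in for the trained network, the noise schedule of a real diffusion sampler is omitted, borders wrap around, and the guidance is plain gradient descent on the squared residual.

```python
import numpy as np

def psf_to_otf(psf, shape):
    """Zero-pad a PSF to the image size, center it on the origin, and FFT it."""
    padded = np.zeros(shape)
    kh, kw = psf.shape
    padded[:kh, :kw] = psf
    padded = np.roll(padded, (-(kh // 2), -(kw // 2)), axis=(0, 1))
    return np.fft.fft2(padded)

def forward(cube, otfs, sens):
    """Grayscale measurement: blur each band with its PSF, then weighted sum."""
    spec = np.fft.fft2(cube, axes=(0, 1))                      # (H, W, C)
    return np.real(np.fft.ifft2(np.einsum('hwc,chw,c->hw', spec, otfs, sens)))

def adjoint(residual, otfs, sens):
    """Adjoint of `forward`: correlate the residual with each band's PSF."""
    back = np.conj(otfs) * np.fft.fft2(residual)[None] * sens[:, None, None]
    return np.moveaxis(np.real(np.fft.ifft2(back, axes=(1, 2))), 0, -1)

def split_patches(cube, p):
    """(H, W, C) -> (num_patches, p, p, C), non-overlapping tiles."""
    H, W, C = cube.shape
    return cube.reshape(H // p, p, W // p, p, C).swapaxes(1, 2).reshape(-1, p, p, C)

def merge_patches(patches, H, W):
    """Inverse of split_patches."""
    p, C = patches.shape[1], patches.shape[3]
    return patches.reshape(H // p, W // p, p, p, C).swapaxes(1, 2).reshape(H, W, C)

def synchronized_sampling(gray, otfs, sens, denoise_patch, p, n_steps, step, seed=0):
    """Alternate per-patch denoising with a global PSF consistency step."""
    H, W = gray.shape
    cube = np.random.default_rng(seed).standard_normal((H, W, len(sens)))
    gray_patches = split_patches(gray[..., None], p)[..., 0]
    for t in reversed(range(n_steps)):
        patches = split_patches(cube, p)
        # Hypothetical network call: each patch sees only its own gray patch.
        patches = np.stack([denoise_patch(x, g, t)
                            for x, g in zip(patches, gray_patches)])
        cube = merge_patches(patches, H, W)
        # Guidance: gradient step on 0.5 * ||forward(cube) - gray||^2.
        cube = cube - step * adjoint(forward(cube, otfs, sens) - gray, otfs, sens)
    return cube
```

Because the denoiser only ever sees fixed-size patches while the guidance step acts on the whole image, the same loop runs unchanged on any height and width divisible by the patch size.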

The team tested eight different PSF designs and found that even the simplest phase-only configurations, with PSF supports as small as 32×32 pixels, produced strong spectral reconstructions.

Drawing multiple samples from the model gives per-pixel uncertainty estimates, a probabilistic map of where the model is confident and where it’s guessing. These estimates correlate strongly with actual reconstruction error, so users get a built-in quality check that needs no ground truth, at the cost of running the sampler several times.
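One simple way to turn several samples into an uncertainty map is to take the per-pixel standard deviation across them. The sketch below assumes that choice (the paper may define its uncertainty measure differently) and uses synthetic samples with a known noise level in place of real model outputs; the function names are illustrative.

```python
import numpy as np

def uncertainty_map(samples):
    """samples: (N, H, W, C) reconstructions drawn for the same measurement.

    Returns the mean reconstruction (H, W, C) and a per-pixel uncertainty
    (H, W): the across-sample standard deviation, averaged over bands.
    """
    mean = samples.mean(axis=0)
    spread = samples.std(axis=0).mean(axis=-1)
    return mean, spread

def error_correlation(mean, spread, truth):
    """Pearson correlation between the uncertainty map and the true error."""
    error = np.abs(mean - truth).mean(axis=-1)
    return np.corrcoef(spread.ravel(), error.ravel())[0, 1]

# Synthetic check: pixels farther to the right are given noisier samples.
rng = np.random.default_rng(0)
truth = rng.random((16, 16, 3))
noise_scale = np.linspace(0.01, 1.0, 16)[None, :, None]   # varies across columns
samples = truth + noise_scale * rng.standard_normal((16, 16, 16, 3))
mean, spread = uncertainty_map(samples)
corr = error_correlation(mean, spread, truth)
```

In a real evaluation `truth` would be a held-out hyperspectral image; at deployment only `spread` is available, which is what makes its correlation with error useful.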

Why It Matters

The results cut two ways: one for hardware, one for AI.

For imaging hardware, this is a design blueprint for a new class of snapshot hyperspectral cameras, far simpler than anything currently available. A single flat optic, potentially mass-produced like a computer chip, paired with an off-the-shelf grayscale sensor, could replace instruments that need multiple optical stages, dispersive prisms, and custom photosensor arrays. Cheap, compact, light-efficient hyperspectral sensing would open up applications from environmental monitoring to medical diagnostics.

For AI methods, the contribution is subtler but no less significant. Patch-based diffusion can succeed even when blur kernels are large relative to patch size, a regime where previous approaches fell apart. The PSF guidance technique could transfer to other computational imaging problems where a physical forward model is known but the inverse is highly underdetermined. Getting competitive reconstruction out of models trained from scratch on limited data suggests a general recipe for physics-informed generative modeling when data is scarce.

Bottom Line: By combining a minimalist single-optic design with patch-based diffusion and physics-guided inference, this Harvard team achieved what was previously out of reach: high-quality hyperspectral reconstruction from a grayscale snapshot, opening the door to compact spectral cameras built from a single flat lens and a plain photosensor.

IAIFI Research Highlights

This work brings together diffractive optics design and generative AI, showing that purposeful chromatic aberration from a flat diffractive lens can be decoded by a physics-informed diffusion model to recover full spectral information from a grayscale image.

The paper introduces a patch-based conditional diffusion framework with PSF-guided inference synchronization. It is the first to show that patch-based diffusion models can solve inverse problems involving blur even when the blur kernel is large relative to the patch size, with uncertainty quantification that reliably tracks reconstruction error.

By enabling snapshot hyperspectral imaging with a single flat optic and no color filters, this work could bring compact spectral sensing to scientific settings from astrophysical observation to materials characterization, where light efficiency and portability are critical.

Future directions include co-optimizing the diffractive lens design jointly with the reconstruction network, extending the approach to RGB measurements, and validating the system with physical hardware prototypes. The paper is available at [arXiv:2412.02798](https://arxiv.org/abs/2412.02798).

Original Paper Details

Grayscale to Hyperspectral at Any Resolution Using a Phase-Only Lens

arXiv:2412.02798

Dean Hazineh, Federico Capasso, Todd Zickler
