GenPhys: From Physical Processes to Generative Models

Authors

Ziming Liu, Di Luo, Yilun Xu, Tommi Jaakkola, Max Tegmark

Abstract

Since diffusion models (DM) and the more recent Poisson flow generative models (PFGM) are inspired by physical processes, it is reasonable to ask: Can physical processes offer additional new generative models? We show that the answer is yes. We introduce a general family, Generative Models from Physical Processes (GenPhys), where we translate partial differential equations (PDEs) describing physical processes to generative models. We show that generative models can be constructed from s-generative PDEs (s for smooth). GenPhys subsume the two existing generative models (DM and PFGM) and even give rise to new families of generative models, e.g., "Yukawa Generative Models" inspired from weak interactions. On the other hand, some physical processes by default do not belong to the GenPhys family, e.g., the wave equation and the Schrödinger equation, but could be made into the GenPhys family with some modifications. Our goal with GenPhys is to explore and expand the design space of generative models.

Concepts

The Big Picture

Imagine you’re a physicist with a catalog of well-known equations: the heat equation describing how warmth spreads through metal, the Poisson equation governing electric fields, the Schrödinger equation at the heart of quantum mechanics. Hidden inside many of these, sometimes only after modification, is a blueprint for a machine that can generate photorealistic images, synthesize music, or simulate molecular structures. That’s the idea behind GenPhys.

AI researchers have borrowed from physics for years, often by accident. Diffusion models, the engine behind Stable Diffusion and DALL-E, were inspired by nonequilibrium thermodynamics: picture ink dissolving in water. Poisson Flow Generative Models drew from electrostatics, imagining data points as charged particles drifting through a field. Both work extremely well, but they represent just two points in a mostly unexplored space.

A team from MIT (Ziming Liu, Di Luo, Yilun Xu, Tommi Jaakkola, and Max Tegmark) asked a simple question: if two physical equations gave us two great generative models, what happens when you work through the entire zoo of physics? Their answer is GenPhys, a framework that translates partial differential equations (PDEs) directly into generative models.

Key Insight: Any physical process described by a PDE that can be read as a flow of probability density and that smooths out information over time can, in principle, be converted into a generative model, opening up new AI architectures inspired by heat, gravity, weak interactions, and more.

How It Works

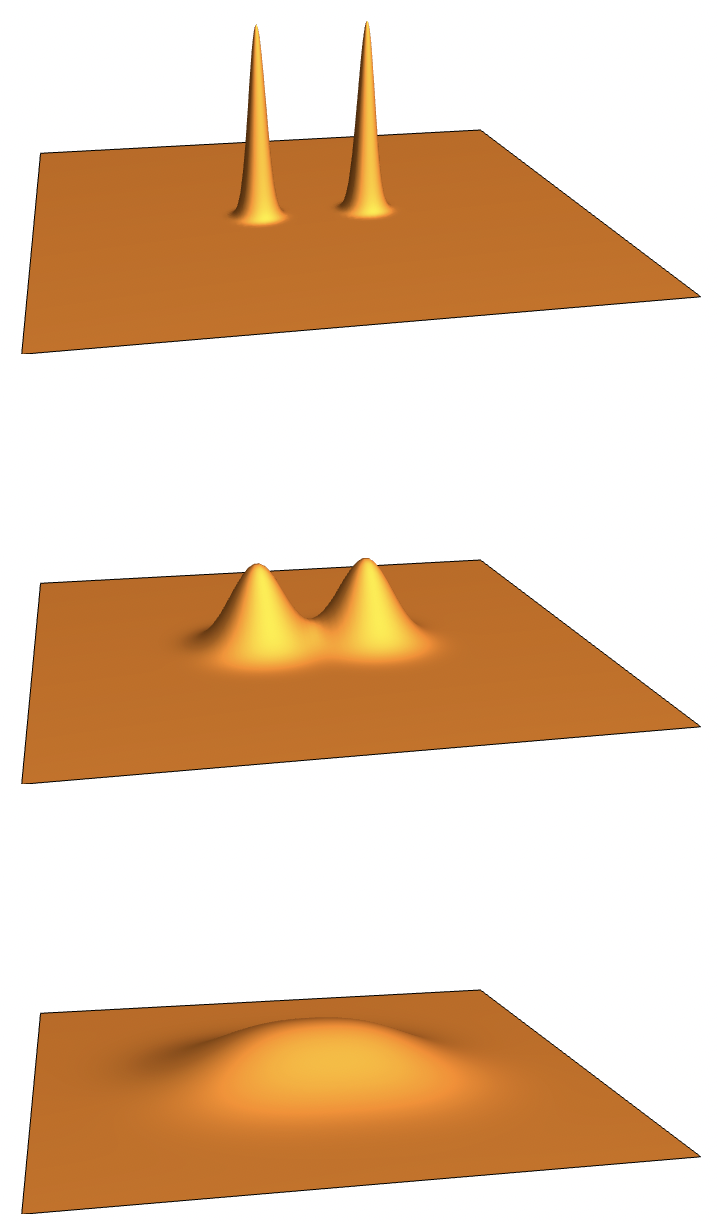

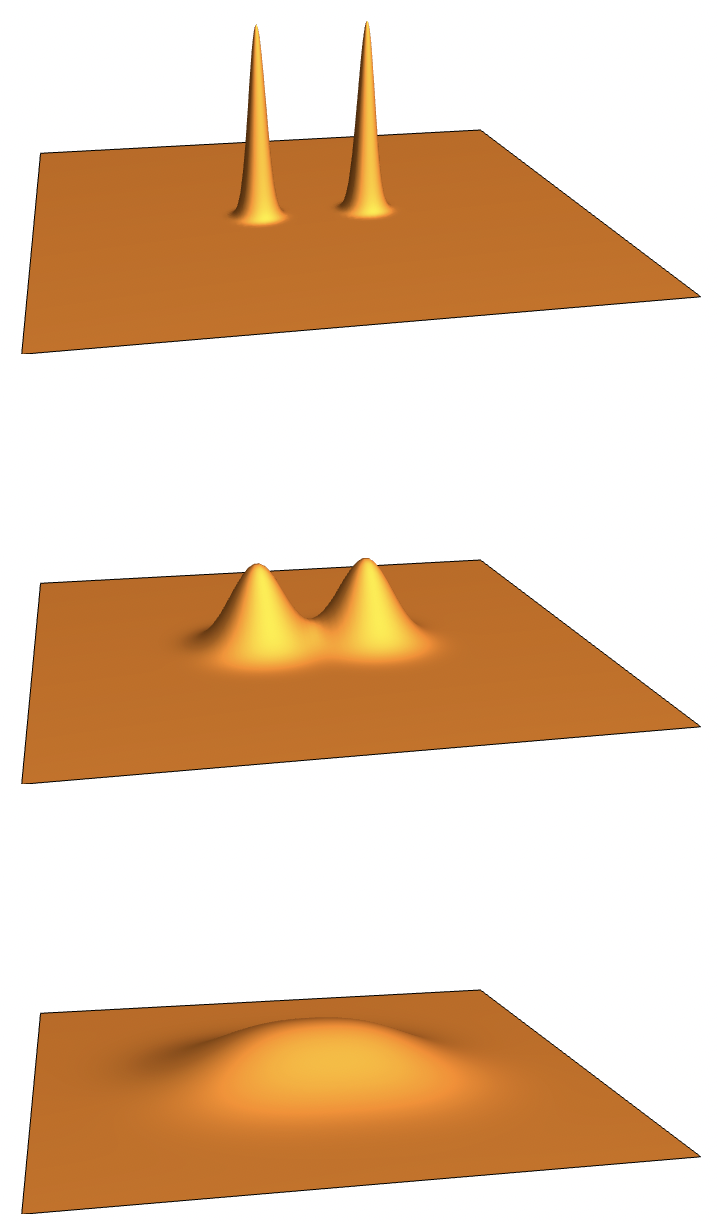

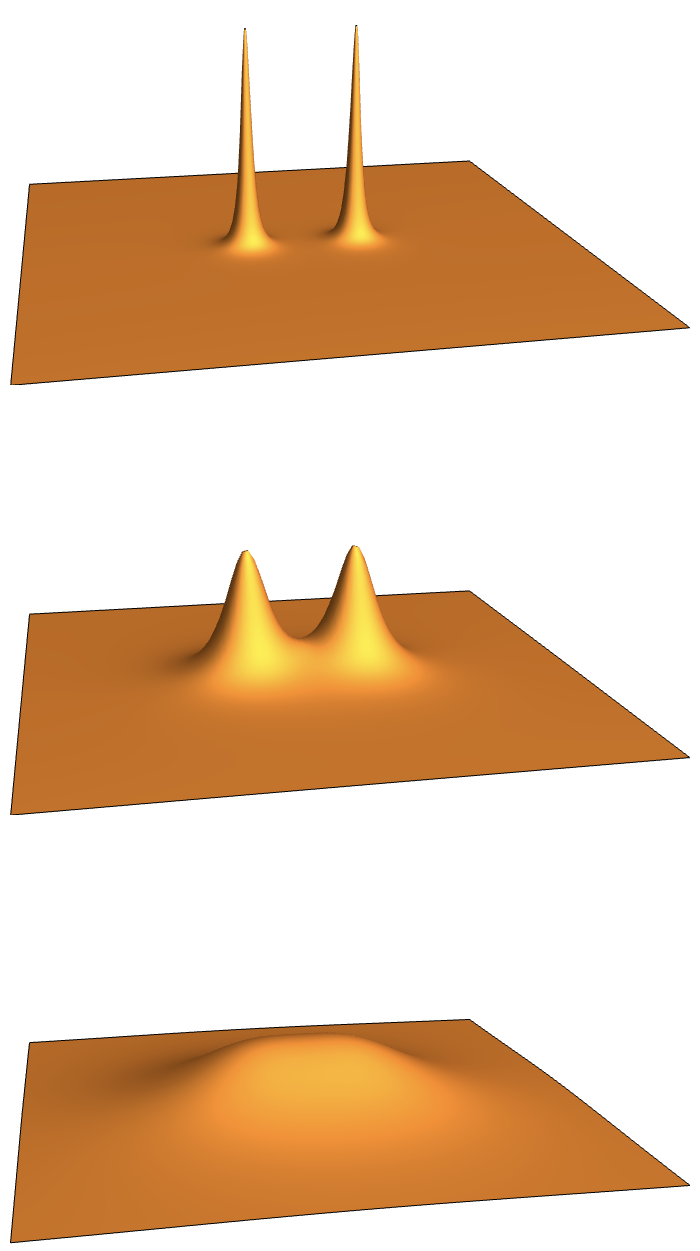

GenPhys rests on a straightforward observation: a generative model is a smoothing physical process run in reverse. A physical process takes a complicated state and gradually simplifies it. Heat diffuses until temperature is uniform. Charges redistribute until fields are smooth. A generative model runs this backward: start from simple noise, reverse the process, arrive at complex, structured data.

To formalize this, the team defines s-generative PDEs (the “s” is for smooth). A PDE qualifies if it satisfies two conditions:

- Condition C1: The PDE can be rewritten as a density flow, that is, a continuity equation in which a probability density is carried along by a velocity field (possibly with a source term). Think of following a crowd rather than a single person.

- Condition C2: Solutions become smoother over time. Rapid variations die out quickly while slow, gradual patterns persist.
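Condition C1 can be made concrete with a worked equation. The notation below ($p$ for the density, $v$ for the velocity field, $R$ for the source term) is chosen here for illustration, and the diffusion example assumes a unit diffusion constant.

```latex
% Generic density-flow (continuity) form, with an optional source term R:
\frac{\partial p(x,t)}{\partial t} + \nabla \cdot \big[ p(x,t)\, v(x,t) \big] - R(x,t) = 0

% Example: the diffusion equation \partial_t p = \nabla^2 p fits this form with
v(x,t) = -\nabla \log p(x,t), \qquad R(x,t) = 0,
% because \nabla \cdot \big[ p \,(-\nabla \log p) \big] = -\nabla^2 p .
```

Read this way, the velocity field tells each member of the "crowd" where to move next, which is the object a generative model later has to learn.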

The second condition is the decisive one. It’s what makes the end state simple: if physics washes out fine detail, the late-time state barely remembers the data it started from, so generation can begin from a simple prior and run the process backward, from smoothness toward structure. You can test Condition C2 rigorously using dispersion relations, a standard tool that relates a fluctuation’s spatial frequency to how fast it oscillates or decays in time. If fine-grained variations die out exponentially fast while broad, slow variations survive longer, the PDE earns its s-generative badge.
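As a worked example of the dispersion-relation test, consider the diffusion equation with unit diffusion constant. The plane-wave sign convention below is one common choice and is an assumption of this sketch, not necessarily the paper's notation.

```latex
% Trial solution: a single Fourier mode with wavevector k and frequency \omega
\phi(x,t) = e^{\,i (k \cdot x - \omega t)}
\quad\Longrightarrow\quad
|\phi(x,t)| = e^{\,\mathrm{Im}\,\omega(k)\, t}

% Substituting into \partial_t \phi = \nabla^2 \phi:
-i\,\omega = -|k|^2
\quad\Longrightarrow\quad
\omega(k) = -i\,|k|^2

% Hence each mode decays as
|\phi(x,t)| = e^{-|k|^2 t}
```

The mode with $|k| = 0$ does not decay at all, while modes with larger $|k|$ (finer spatial detail) decay faster. That is the smoothing behavior Condition C2 asks for.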

The team then marches through classical physics. The diffusion equation? S-generative. That’s the heat equation, exactly what diffusion models already exploit. The Poisson equation from electrostatics? Also s-generative. That’s PFGM.

The Yukawa equation, describing a screened potential from the physics of weak interactions, qualifies too. It gives rise to a new family of models the authors call Yukawa Generative Models.

The wave equation and the Schrödinger equation fail the test. A wave doesn’t smooth; it propagates. A quantum wavefunction oscillates indefinitely. Neither is s-generative in its default form. But with modifications, such as adding dissipation to the wave equation or altering the Schrödinger equation, even these can be brought into the GenPhys family.
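The survey above can be mimicked numerically. The sketch below is a simplified illustration, not the paper's procedure. It assumes a mode behaves as exp(i(kx − ωt)), so its amplitude scales as exp(Im ω · t). It also assumes unit-coefficient forms of each equation, with the Poisson and Yukawa equations written in an augmented space whose extra coordinate plays the role of time. The screening mass `M`, the `smooths` helper, and the test "Im ω(k) < Im ω(0) for every k > 0" are choices made here as a stand-in for Condition C2.

```python
import numpy as np

M = 1.0  # hypothetical screening mass for the Yukawa case

# Dispersion relations omega(k) on the decaying branch, under the assumed
# unit-coefficient forms noted in each comment.
DISPERSION = {
    "diffusion":   lambda k: -1j * k**2,                # phi_t = phi_xx
    "poisson":     lambda k: -1j * np.abs(k),           # phi_tt + phi_xx = 0
    "yukawa":      lambda k: -1j * np.sqrt(k**2 + M**2),  # phi_tt + phi_xx - M^2 phi = 0
    "wave":        lambda k: np.abs(k) + 0j,            # phi_tt = phi_xx
    "schrodinger": lambda k: k**2 + 0j,                 # i phi_t = -phi_xx
}

def smooths(omega, k_max=10.0, n=200):
    """Return True if every sampled mode with k > 0 decays faster than k = 0."""
    k = np.linspace(0.0, k_max, n)
    rate = np.imag(omega(k))  # amplitude of mode k scales as exp(rate * t)
    return bool(np.all(rate[1:] < rate[0] - 1e-12))

verdicts = {name: smooths(omega) for name, omega in DISPERSION.items()}
for name, ok in verdicts.items():
    print(f"{name:12s} smoothing: {ok}")
```

Under these assumptions the check agrees with the classification in the text: diffusion, Poisson, and Yukawa pass, while the wave and Schrödinger equations have purely real frequencies and therefore never damp any mode.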

Building a GenPhys model involves four steps:

- Rewrite the PDE in continuity equation form to extract a velocity field and a source term.

- Solve the PDE with the data distribution as an initial condition, using a Green’s function to get an analytic form. (A Green’s function expresses the solution as the accumulated response to many tiny, point-like nudges.)

- Train a neural network to approximate the velocity field.

- Generate samples by drawing from the simple prior and integrating the learned dynamics backward in time.
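The four steps can be sketched end to end for the simplest case, one-dimensional diffusion with unit diffusion constant. This is a toy illustration under stated assumptions, not the authors' implementation. The continuity form uses v = −∂ log p/∂x, and the Green's function is a Gaussian of variance 2t. Step 3 is replaced by the closed-form velocity field (no neural network is trained), because a two-point dataset makes it exactly computable. The names `velocity` and `generate`, the dataset, and all numerical settings are hypothetical.

```python
import numpy as np

def velocity(x, t, data):
    """Closed-form v(x, t) = -d/dx log p(x, t) for 1D diffusion.

    Steps 1-2: p(x, t) is the average of Gaussians N(x; x_i, 2t), one per
    data point x_i (the Green's function of p_t = p_xx).
    Step 3 stand-in: a neural network would normally approximate this.
    """
    diff = x[:, None] - data[None, :]            # shape (n_samples, n_data)
    logits = -diff**2 / (4.0 * t)                # log of each Gaussian kernel, up to a constant
    logits -= logits.max(axis=1, keepdims=True)  # stabilise the softmax
    w = np.exp(logits)
    w /= w.sum(axis=1, keepdims=True)            # responsibility of each data point
    return (w * diff).sum(axis=1) / (2.0 * t)

def generate(data, n_samples=2000, T=50.0, t_min=1e-3, n_steps=500, seed=0):
    """Step 4: draw from a simple prior and integrate dx/dt = v backward in time."""
    rng = np.random.default_rng(seed)
    # At large T the density is approximately N(0, 2T), used here as the prior.
    x = rng.normal(0.0, np.sqrt(2.0 * T), size=n_samples)
    times = np.geomspace(T, t_min, n_steps + 1)  # log-spaced, since v ~ 1/t near t = 0
    for t_now, t_next in zip(times[:-1], times[1:]):
        x = x + (t_next - t_now) * velocity(x, t_now, data)  # Euler step with dt < 0
    return x

data = np.array([-2.0, 2.0])   # toy dataset: two points
samples = generate(data)
print("fraction near +2:", np.mean(samples > 0))
```

With this toy dataset the generated samples should cluster tightly around −2 and +2, roughly half at each. In a realistic setting the data are high-dimensional, the sum over data points is estimated from minibatches, and a trained network takes the place of `velocity`.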

Why It Matters

For AI practitioners, GenPhys is a recipe book. Instead of waiting for the next lucky analogy, you can check systematically: is this PDE s-generative? If so, there’s a generative model waiting to be built. The Yukawa model is a proof of concept, a new architecture derived from physics rather than intuition or trial and error.

The work also pins down an analogy physicists have long found appealing. Our universe started from a nearly uniform state after the Big Bang, something close to Gaussian noise, and physical laws evolved that into the complexity we observe today. The math that lets diffusion equations generate images is closely related to the math governing entropy increase. GenPhys makes this connection formal.

The authors describe what they call bidirectional inspiration. Physics has given us new AI models, and the AI perspective on PDEs might suggest new ways to think about physical processes. Future work could test whether quantum or relativistic PDEs, with the right modifications, yield generative models with unusual properties.

Bottom Line: GenPhys provides a systematic recipe for converting smoothing physical equations that can be written as density flows into generative AI models, unifying diffusion models and PFGM under one roof and delivering new architectures like Yukawa Generative Models straight from the physics of weak interactions.

IAIFI Research Highlights

GenPhys builds a formal mathematical bridge between the PDEs of classical and quantum physics and the design of generative AI models, showing that the two fields share deep structural connections.

The framework expands the generative model design space from two known instances (diffusion and Poisson flow) to a potentially large family, providing a systematic method for constructing new architectures from physical principles.

By casting the Yukawa screened potential, a staple of weak interaction theory, as a generative model, the work uncovers computational structure hidden in the mathematics of fundamental interactions.

Future directions include exploring relativistic and quantum PDEs with modifications that restore s-generativity, and testing whether Yukawa and other new models offer practical performance advantages. The paper is available at [arXiv:2304.02637](https://arxiv.org/abs/2304.02637).

Original Paper Details

GenPhys: From Physical Processes to Generative Models

arXiv:2304.02637

Ziming Liu, Di Luo, Yilun Xu, Tommi Jaakkola, Max Tegmark
