Equivariant Symmetry Breaking Sets

Authors

YuQing Xie, Tess Smidt

Abstract

Equivariant neural networks (ENNs) have been shown to be extremely effective in applications involving underlying symmetries. By construction ENNs cannot produce lower symmetry outputs given a higher symmetry input. However, symmetry breaking occurs in many physical systems and we may obtain a less symmetric stable state from an initial highly symmetric one. Hence, it is imperative that we understand how to systematically break symmetry in ENNs. In this work, we propose a novel symmetry breaking framework that is fully equivariant and is the first which fully addresses spontaneous symmetry breaking. We emphasize that our approach is general and applicable to equivariance under any group. To achieve this, we introduce the idea of symmetry breaking sets (SBS). Rather than redesign existing networks, we design sets of symmetry breaking objects which we feed into our network based on the symmetry of our inputs and outputs. We show there is a natural way to define equivariance on these sets, which gives an additional constraint. Minimizing the size of these sets equates to data efficiency. We prove that minimizing these sets translates to a well studied group theory problem, and tabulate solutions to this problem for the point groups. Finally, we provide some examples of symmetry breaking to demonstrate how our approach works in practice. The code for these examples is available at https://github.com/atomicarchitects/equivariant-SBS.

Concepts

The Big Picture

Imagine a perfectly symmetric snowflake melting and refreezing into a lopsided crystal. The laws governing water molecules are completely symmetric; they don’t prefer any direction. Yet nature routinely produces structures that break that symmetry. This puzzle creates a real problem for some of the most powerful AI tools scientists use today.

A class of neural networks called equivariant neural networks (ENNs) has become a staple in computational physics and chemistry. They’re built with a clever guarantee: rotate your molecule, and the network’s predictions rotate with it. Feed in a crystal structure, and the output respects the same spatial symmetries. This property, called equivariance, means the network needs far less training data because it never has to rediscover the same physics twice from different orientations.

The catch? That guarantee becomes a straitjacket. By construction, an ENN cannot predict an output with lower symmetry than its input. You can’t get a lopsided crystal from a perfectly symmetric starting configuration, not because the physics forbids it, but because the math of the network does.
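To make the straitjacket concrete, here is a minimal sketch in plain Python. The function `equivariant_vector` is a hypothetical toy stand-in for an ENN, not anything from the paper: it maps a 2D point cloud to a vector and commutes with rotations. Fed a square, it can only return the zero vector, because that is the only vector a 90-degree rotation leaves unchanged.

```python
import math

def rotate(p, theta):
    """Rotate a 2D point counterclockwise by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return (c * p[0] - s * p[1], s * p[0] + c * p[1])

def equivariant_vector(points):
    """Toy rotation-equivariant map (illustrative only): point cloud -> 2D vector.

    Each point is weighted by a rotation-invariant scalar (its squared norm),
    so rotating every input point rotates the output by the same angle.
    """
    vx = sum((x * x + y * y) * x for x, y in points)
    vy = sum((x * x + y * y) * y for x, y in points)
    return (vx, vy)

# A square is unchanged (as a set) by 90-degree rotations.
square = [(1.0, 0.0), (0.0, 1.0), (-1.0, 0.0), (0.0, -1.0)]
# Stretching one vertex removes that rotational symmetry.
lopsided = [(2.0, 0.0), (0.0, 1.0), (-1.0, 0.0), (0.0, -1.0)]

out_square = equivariant_vector(square)      # forced to be the zero vector
out_lopsided = equivariant_vector(lopsided)  # free to point somewhere

# Equivariance check: rotating the input rotates the output.
theta = 0.7
lhs = equivariant_vector([rotate(p, theta) for p in lopsided])
rhs = rotate(out_lopsided, theta)
equivariant_ok = math.isclose(lhs[0], rhs[0]) and math.isclose(lhs[1], rhs[1])
```

No choice of weights inside such a function would help: any symmetry of the input is inherited by the output.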

MIT researchers YuQing Xie and Tess Smidt tackle this head-on. Their paper in Transactions on Machine Learning Research introduces symmetry breaking sets (SBS): a fully equivariant framework for teaching neural networks to break symmetry without sacrificing the properties that make ENNs work.

Key Insight: You don’t need to redesign your neural network to handle symmetry breaking. Instead, design a carefully chosen set of “symmetry breaking objects” as additional inputs. Group theory tells you exactly how to pick them.

How It Works

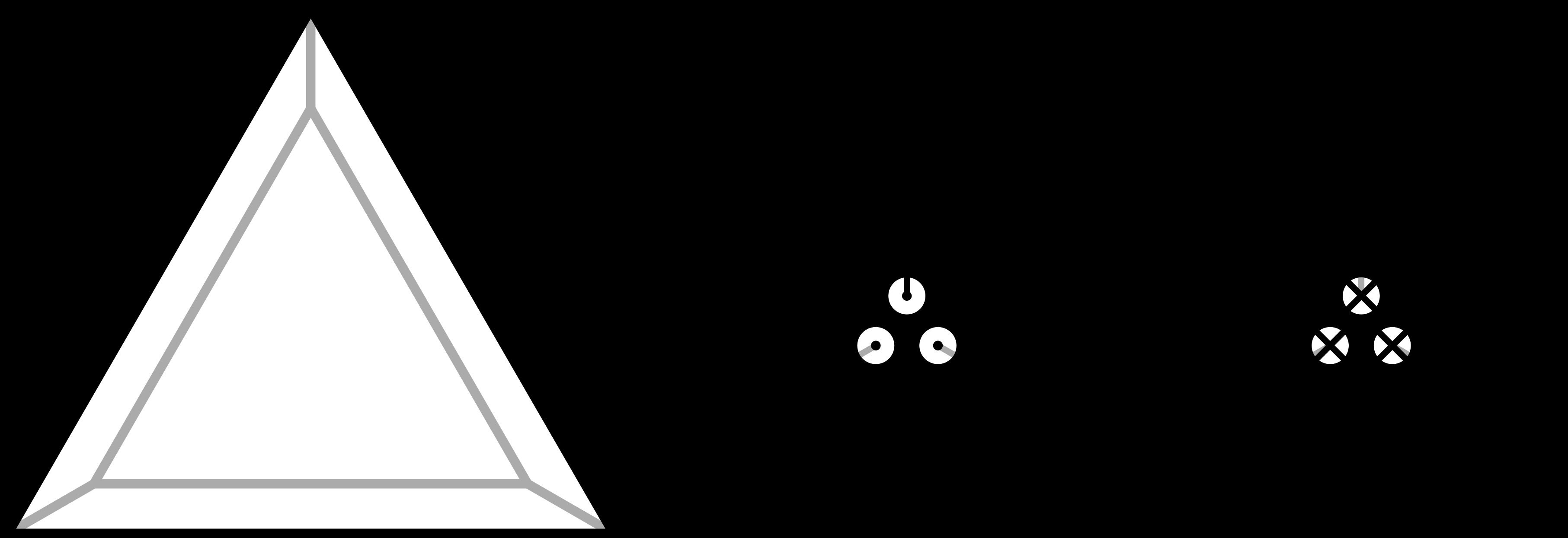

Xie and Smidt distinguish two flavors of symmetry breaking. Explicit symmetry breaking happens when the underlying laws themselves are asymmetric. Spontaneous symmetry breaking is subtler: the laws are perfectly symmetric, but the system settles into one of several equally valid low-symmetry states.

Think of a ball balanced atop a Mexican-hat-shaped hill. The hill is perfectly symmetric around the peak, but the ball must fall somewhere, rolling into one of infinitely many equivalent positions around the rim. Earlier approaches couldn’t handle this regime.
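The ball-on-a-hill picture can be sketched numerically. The example below assumes a specific rotation-symmetric potential, V(x, y) = (x² + y² − 1)², chosen purely for illustration; the step size and iteration count are likewise arbitrary. A ball started exactly on the peak never moves, while a tiny nudge in any direction sends it to a different point on the rim.

```python
import math

def grad(x, y):
    """Gradient of the rotation-symmetric potential V = (x^2 + y^2 - 1)^2."""
    k = 4.0 * (x * x + y * y - 1.0)
    return k * x, k * y

def descend(x, y, step=0.05, iters=2000):
    """Plain gradient descent from the starting point (x, y)."""
    for _ in range(iters):
        gx, gy = grad(x, y)
        x, y = x - step * gx, y - step * gy
    return x, y

# Perfectly symmetric start: the gradient vanishes, so the ball stays put.
peak = descend(0.0, 0.0)

# Tiny nudges in three directions end at three different minima on the rim.
angles = (0.0, math.pi / 2, math.pi)
nudged = [descend(1e-3 * math.cos(a), 1e-3 * math.sin(a)) for a in angles]
radii = [math.hypot(x, y) for x, y in nudged]  # each is very close to 1
```

Every endpoint is an equally valid minimum, and rotating one of them gives another. That is the situation a symmetry-respecting model has to represent.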

Rather than tinkering with the internals of an existing equivariant network, Xie and Smidt propose feeding the network additional “symmetry breaking objects” alongside the original input. These objects carry just enough asymmetry to nudge the network toward one valid low-symmetry output. The trick is designing them so the whole package (original data plus symmetry breaker) still transforms correctly when the system is rotated, reflected, or reoriented.
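A toy version of this idea, under stated assumptions: the group is C4 (quarter turns of the plane), the input is a square, and the desired output is a single vertex. The names `model` and `sbs` are hypothetical, and `model` is a hand-written stand-in for a network, not the authors' architecture.

```python
def rot90(p, k=1):
    """Rotate a 2D integer point by k quarter turns (an element of C4)."""
    x, y = p
    for _ in range(k % 4):
        x, y = -y, x
    return (x, y)

def model(points, breaker):
    """Toy map that is equivariant in (points, breaker) jointly.

    It returns the input point best aligned with the symmetry-breaking vector.
    """
    return max(points, key=lambda p: p[0] * breaker[0] + p[1] * breaker[1])

square = [(1, 0), (0, 1), (-1, 0), (0, -1)]

# A symmetry breaking set: one orbit of C4, so rotating the set maps it to itself.
sbs = [rot90((1, 0), k) for k in range(4)]

# Each breaker selects one low-symmetry output; together they cover all four.
outputs = [model(square, b) for b in sbs]

# Joint equivariance: rotating the input and the breaker rotates the output.
jointly_equivariant = all(
    model([rot90(p) for p in square], rot90(b)) == rot90(model(square, b))
    for b in sbs
)
```

The model itself never breaks symmetry. Each individual breaker does, and the set of breakers as a whole remains symmetric.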

Here’s what “correctly” means in practice:

- The set must be closed under the normalizer. When you rotate or reorient the input, the symmetry-breaking objects must rearrange themselves in a matching way. The normalizer of the input's symmetry group S consists of every transformation g that maps S onto itself under conjugation (gSg⁻¹ = S): all the ways you can reorient the data without changing which symmetries it has.

- Minimizing set size maximizes data efficiency. A smaller SBS means fewer symmetry-breaking copies of your data to process. The authors prove that finding the minimum-size equivariant SBS is mathematically equivalent to finding complements of subgroups. A complement of a subgroup H in a group G is a second subgroup K that shares only the identity with H and satisfies HK = G, so every element of G is a product of one element from each in exactly one way.

- A wrinkle: sometimes it’s more efficient to break more symmetry than strictly necessary. Breaking all symmetry of the input can yield a smaller, cleaner SBS than carefully preserving some symmetries.
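The two group-theory ingredients named above, the normalizer and subgroup complements, can be computed by brute force for small groups. The sketch below is an illustration only and is not taken from the authors' code. It assumes the symmetry group of a square (D4, written as permutations of the four vertices) and an input whose symmetry group S is generated by a single diagonal reflection. All helper names are hypothetical.

```python
from itertools import combinations

def compose(p, q):
    """Permutation product: apply q first, then p."""
    return tuple(p[q[i]] for i in range(len(q)))

def inverse(p):
    inv = [0] * len(p)
    for i, j in enumerate(p):
        inv[j] = i
    return tuple(inv)

def generate(generators):
    """Close a list of permutations under composition to get a finite group."""
    identity = tuple(range(len(generators[0])))
    group, frontier = {identity}, [identity]
    while frontier:
        g = frontier.pop()
        for h in generators:
            gh = compose(g, h)
            if gh not in group:
                group.add(gh)
                frontier.append(gh)
    return group

def normalizer(G, S):
    """All g in G with g S g^-1 = S."""
    return {g for g in G
            if {compose(compose(g, s), inverse(g)) for s in S} == S}

def is_subgroup(K):
    """A finite set containing the identity is a subgroup if it is closed."""
    return all(compose(a, b) in K for a in K for b in K)

def complements(N, S):
    """Subgroups K of N with S ∩ K = {identity} and S K = N."""
    identity = tuple(range(len(next(iter(N)))))
    others = sorted(N - {identity})
    size = len(N) // len(S)
    found = []
    for rest in combinations(others, size - 1):
        K = set(rest) | {identity}
        if (is_subgroup(K) and K & S == {identity}
                and {compose(s, k) for s in S for k in K} == N):
            found.append(K)
    return found

r = (1, 2, 3, 0)   # quarter turn: vertex i -> i + 1 (mod 4)
m = (0, 3, 2, 1)   # reflection through the diagonal joining vertices 0 and 2
G = generate([r, m])   # D4, order 8
S = generate([m])      # symmetry of the input, order 2
N = normalizer(G, S)   # order 4
Ks = complements(N, S) # two complements, each of order 2
```

Exhaustive search like this only scales to small finite groups, which is one reason a precomputed table for the point groups is useful.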

The researchers tabulate concrete solutions for all point groups, the symmetry groups describing molecules and crystals. This is the kind of reference table computational physicists will actually use: pick your input symmetry, pick your desired output symmetry, look up the table, and you have your SBS recipe.

The demonstrations cover crystal distortions from high- to low-symmetry phases, ground states that break Hamiltonian symmetry, and fluid dynamics simulations where symmetric flows spontaneously develop asymmetric Kármán vortex streets. In each case, a standard equivariant network augmented with an SBS correctly produces the full set of valid lower-symmetry outputs.

Why It Matters

Spontaneous symmetry breaking is not an edge case. The Higgs mechanism, which gives fundamental particles their mass, is spontaneous symmetry breaking. Phase transitions in materials, from ferromagnetism to superconductivity, involve it. So does the formation of large-scale structure in the early universe.

The inability to handle this kind of physics has been a real obstacle for machine learning in science. The SBS framework removes that obstacle, and it’s plug-and-play: researchers don’t need to discard existing equivariant models or retrain from scratch. They augment their inputs using the tabulated SBS designs. The code is open-source.

Because adoption requires little extra effort, the framework is a natural fit for molecular dynamics, crystal structure prediction, quantum chemistry, and PDE solvers (software for simulating fluid flow, heat transfer, and other physical processes). Anywhere equivariant networks are already deployed and symmetry breaking shows up, an SBS can be added to the inputs.

Bottom Line: Xie and Smidt have solved the spontaneous symmetry breaking problem for equivariant neural networks, not by breaking the networks, but by giving them the right extra information. The result is a mathematically rigorous, practically usable framework built on classical group theory.

IAIFI Research Highlights

This work turns a classical mathematical concept (subgroup complements) into a concrete engineering recipe for AI systems that model physical symmetry breaking. Abstract group theory becomes a practical tool for machine learning in physics.

The symmetry breaking set framework is the first fully equivariant solution to spontaneous symmetry breaking in neural networks. It lets ENNs model physical phenomena that were previously out of reach while preserving their mathematical guarantees.

Spontaneous symmetry breaking is the mechanism behind the Higgs field, phase transitions, and crystal distortions. Giving AI models the ability to handle it correctly widens the use of machine learning in quantum chemistry, condensed matter physics, and particle physics.

Natural extensions include continuous groups and infinite-dimensional symmetries; the full tabulation of point group solutions is available in the paper (*Transactions on Machine Learning Research*, October 2024; [arXiv:2402.02681](https://arxiv.org/abs/2402.02681); code at github.com/atomicarchitects/equivariant-SBS).

Original Paper Details

Equivariant Symmetry Breaking Sets

2402.02681

YuQing Xie, Tess Smidt
