Enhancing Events in Neutrino Telescopes through Deep Learning-Driven Super-Resolution

Authors

Felix J. Yu, Nicholas Kamp, Carlos A. Argüelles

Abstract

Recent discoveries by neutrino telescopes, such as the IceCube Neutrino Observatory, relied extensively on machine learning (ML) tools to infer physical quantities from the raw photon hits detected. Neutrino telescope reconstruction algorithms are limited by the sparse sampling of photons by the optical modules due to the relatively large spacing ($10$–$100\,{\rm m}$) between them. In this letter, we propose a novel technique that learns photon transport through the detector medium through the use of deep learning-driven super-resolution of data events. These “improved” events can then be reconstructed using traditional or ML techniques, resulting in improved resolution. Our strategy arranges additional “virtual” optical modules within an existing detector geometry and trains a convolutional neural network to predict the hits on these virtual optical modules. We show that this technique improves the angular reconstruction of muons in a generic ice-based neutrino telescope. Our results readily extend to water-based neutrino telescopes and other event morphologies.

The Big Picture

Imagine trying to reconstruct a symphony from a handful of microphones scattered across a concert hall, each one a hundred meters from the next. You’d catch snippets: a violin here, a cello there. The full picture would be hopelessly incomplete. Now imagine an AI that could listen to those sparse snippets and fill in what the missing microphones would have heard. That’s what a team of Harvard physicists has proposed for neutrino telescopes such as the IceCube Neutrino Observatory, buried under the South Pole.

IceCube detects neutrinos, subatomic particles that stream through the universe nearly unimpeded, by watching for faint flashes of light when a neutrino occasionally slams into an ice molecule. The problem is that IceCube’s 5,160 light sensors are strung through a cubic kilometer of ice with gaps of up to 125 meters between them. Most of the light from any given neutrino interaction passes through those gaps unseen.

This sparse sampling limits how precisely scientists can reconstruct the direction and energy of incoming neutrinos, which in turn limits their ability to trace neutrinos back to cosmic sources. Felix Yu, Nicholas Kamp, and Carlos Argüelles at Harvard’s IAIFI have proposed a fix borrowed from image processing: deep learning super-resolution, the same class of technique that sharpens blurry photos by predicting missing pixels, adapted here for neutrino telescopes.

Key Insight: By training a neural network to predict what “virtual” optical modules would have detected, the team shows it’s possible to sharpen the resolution of neutrino telescopes after the fact, without building a single new sensor.

How It Works

Instead of adding real hardware, the team adds imaginary hardware. They define virtual optical modules (virtual OMs): sensors that don’t physically exist but whose readings the network learns to predict from the sensors that do.

Here’s the pipeline, step by step:

-

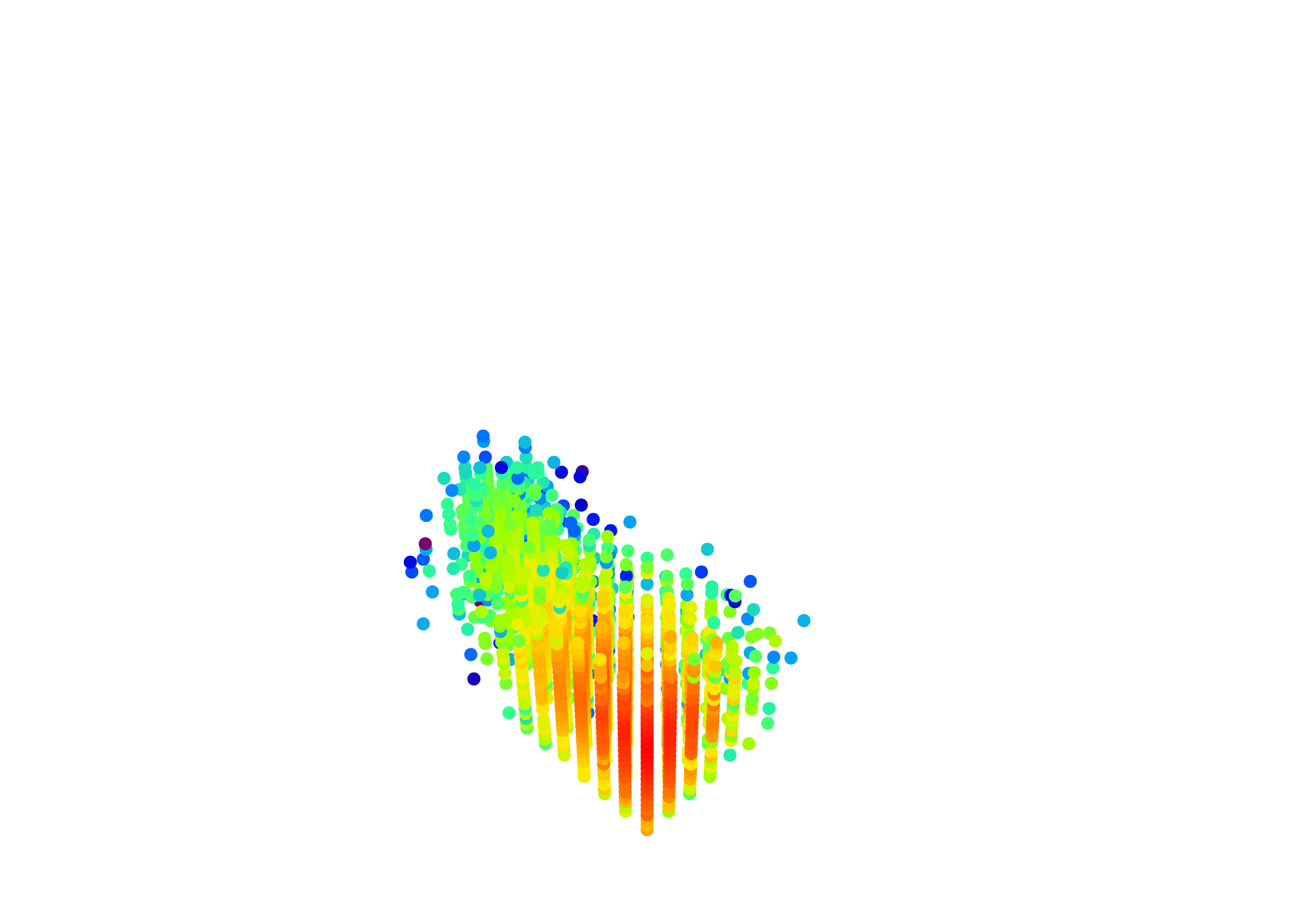

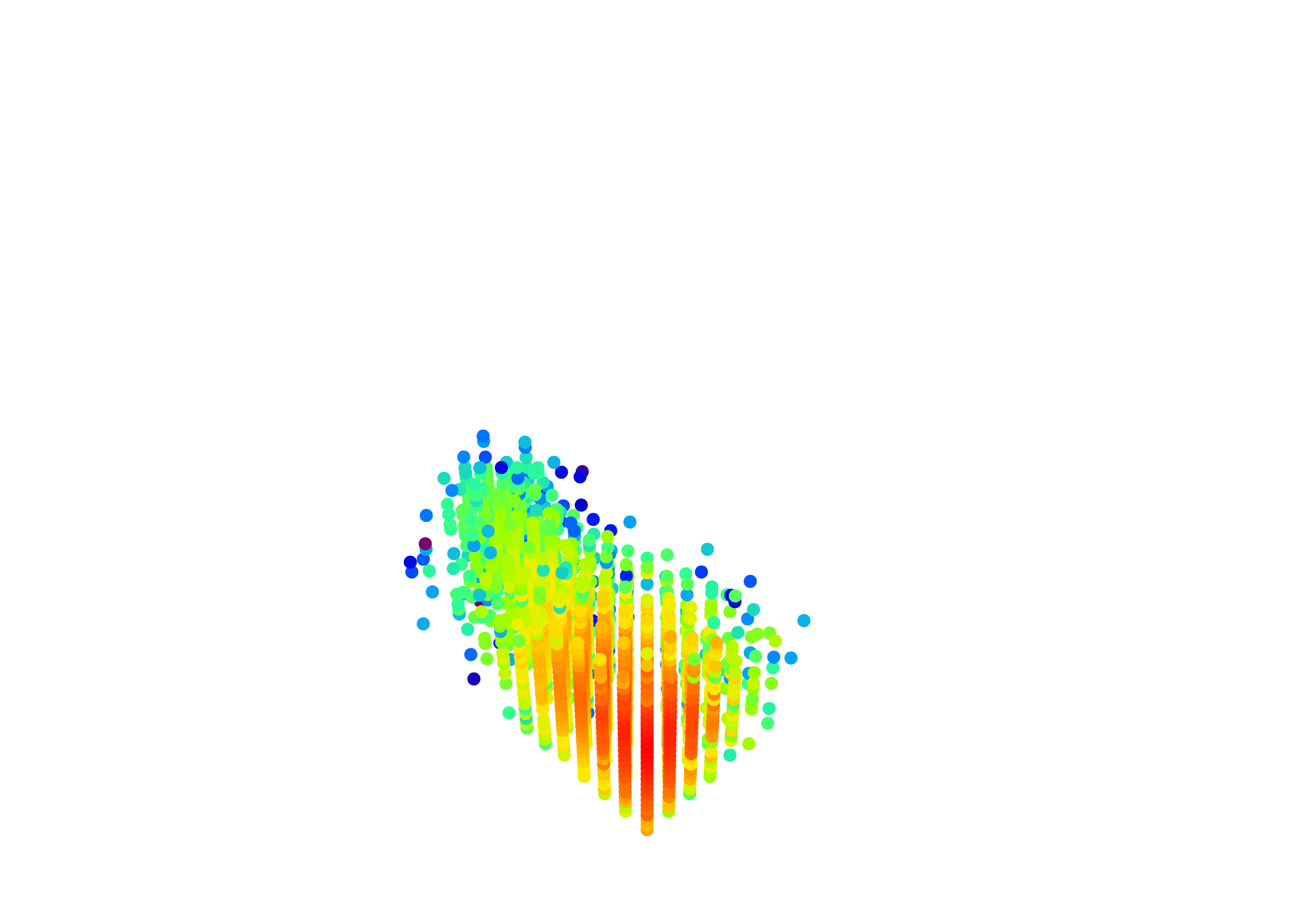

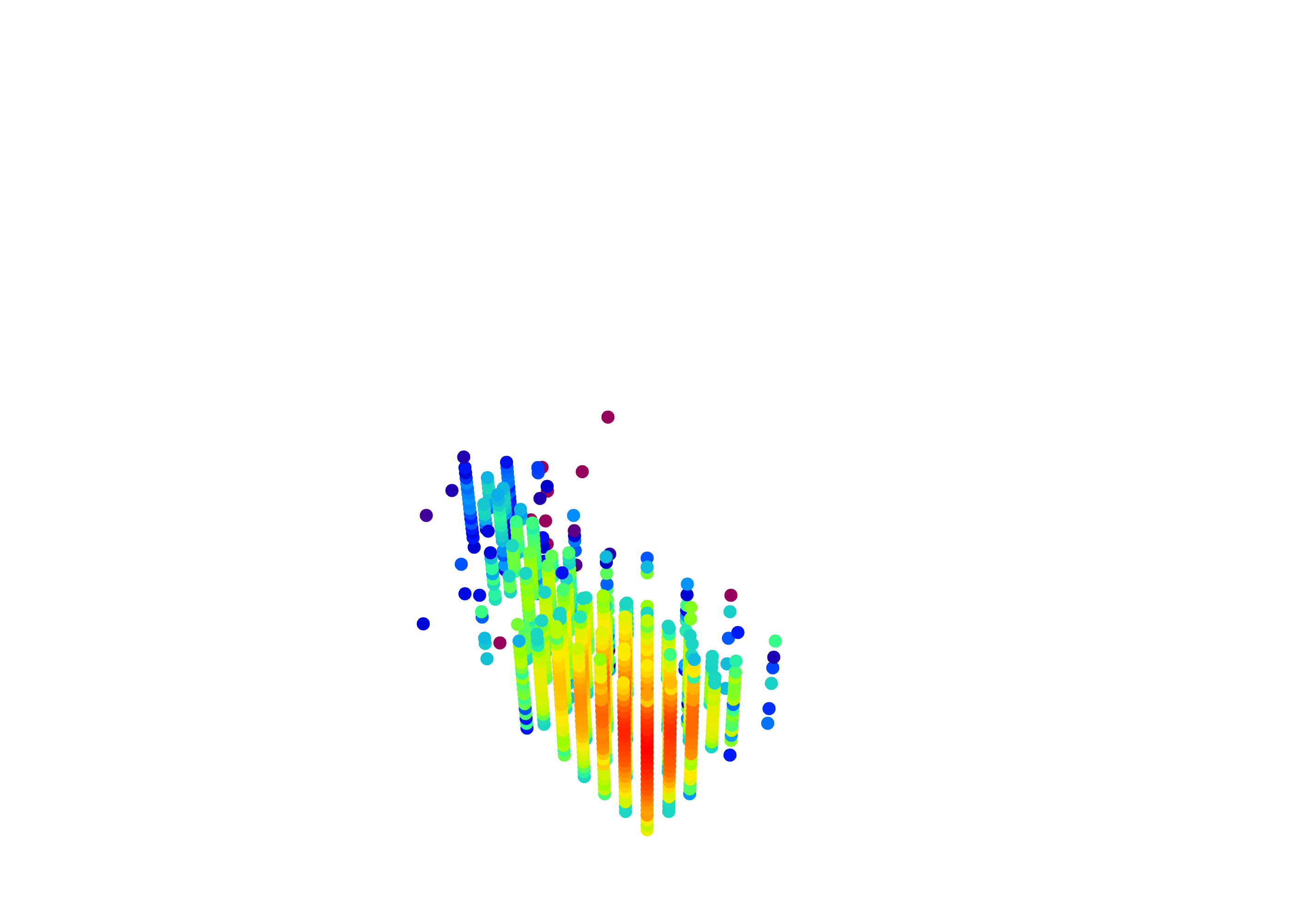

Train on dense simulations. Using

Prometheus, an open-source neutrino telescope simulator, the team simulates a detector with a dense 15×15 grid of strings: 13,705 optical modules total, spaced 60 meters apart horizontally and 15 meters vertically. -

Mask half the strings. During training, every other string is hidden, leaving an 8×8 grid with 120-meter gaps. The network sees the sparse (masked) event and must predict what the hidden strings would have recorded.

-

Encode the timing information. Raw photon hit data is complex. Each sensor records a time series of photon arrivals that varies wildly in length and sparsity. The team first trains a variational autoencoder (VAE) to squeeze each sensor’s time series into a 64-number fingerprint called a latent vector. Preserving timing matters because it encodes where the neutrino came from.

-

Run the super-resolution network. A UNet++, a convolutional architecture originally built for medical image segmentation, takes the 2D grid of latent vectors (arranged by string position) and predicts the latent vectors for the virtual strings. In doing so, it implicitly learns the spatial pattern of photon propagation through the ice.

-

Decode and reconstruct. The predicted latent vectors are decoded back into time series, and the now-complete, super-resolved event feeds into standard reconstruction algorithms.
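The masking step can be sketched in a few lines of plain Python. This is only an illustration of the geometry described above, not the authors' code: the 15×15 grid and 60-meter spacing come from the text, while the function names and the rule "keep a string when both grid indices are even" are assumptions chosen because they reproduce the stated 8×8 grid with 120-meter gaps.

```python
# Illustrative sketch only; names and the even-index rule are assumptions.
GRID_SIDE = 15     # strings per side in the dense simulated detector (from the text)
SPACING_M = 60.0   # horizontal spacing between neighbouring strings (from the text)

def string_positions(side=GRID_SIDE, spacing=SPACING_M):
    """Return {(row, col): (x_m, y_m)} for a square grid of strings."""
    return {(r, c): (c * spacing, r * spacing)
            for r in range(side) for c in range(side)}

def is_kept(row, col):
    """Assumed rule: a string stays 'real' when both grid indices are even."""
    return row % 2 == 0 and col % 2 == 0

def split_strings(positions):
    """Split the dense grid into kept (network input) and masked (target) strings."""
    kept = {k: v for k, v in positions.items() if is_kept(*k)}
    masked = {k: v for k, v in positions.items() if not is_kept(*k)}
    return kept, masked

dense = string_positions()
kept, masked = split_strings(dense)

# Spacing between neighbouring kept strings along x.
xs = sorted({x for x, _ in kept.values()})
kept_spacing = xs[1] - xs[0]

print(len(dense), len(kept), len(masked), kept_spacing)
# prints: 225 64 161 120.0
```

The 64 kept strings play the role of the real detector, and the 161 masked strings are the "virtual" ones whose readings the network is trained to predict.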

The network operates in latent space rather than on raw sensor data. Instead of predicting nanosecond-by-nanosecond photon counts across 5,000 bins per sensor, the UNet++ works with 64-dimensional summaries. Training used roughly 500,000 simulated muon track events (a muon is a heavier, unstable cousin of the electron), with 75,000 held aside for testing.
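To get a feel for how much the latent representation shrinks the prediction target, here is a small worked calculation. It uses only the two numbers quoted above (5,000 time bins and 64 latent dimensions per sensor); the constant and function names are illustrative.

```python
BINS_PER_SENSOR = 5000  # time bins per sensor, as quoted in the text
LATENT_DIM = 64         # length of each latent vector, as quoted in the text

def compression_ratio(bins=BINS_PER_SENSOR, latent=LATENT_DIM):
    """How many raw time bins each latent number stands in for."""
    return bins / latent

ratio = compression_ratio()
print(f"{ratio:.1f}x fewer numbers to predict per sensor")
# prints: 78.1x fewer numbers to predict per sensor
```

Under these figures, the super-resolution network predicts roughly 78 times fewer values per sensor than it would if it worked on the binned time series directly.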

Sensors far from the neutrino interaction record very few photons, which makes their timing distributions hard for the VAE to capture faithfully. But for reconstruction, what matters most is the first hit time and the peak position. The network captures both reliably.
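The two quantities named above are easy to state concretely. The sketch below is a minimal illustration, assuming a sensor's record is simply a list of photon arrival times in nanoseconds and that "peak position" means the start of the most populated time bin; the function name, the 1 ns default bin width, and the sample hit times are all hypothetical.

```python
from collections import Counter

def first_hit_and_peak(hit_times_ns, bin_width_ns=1.0):
    """Return (first hit time, start time of the most populated bin).

    Returns (None, None) for a sensor that recorded no photons.
    """
    if not hit_times_ns:
        return None, None
    first_hit = min(hit_times_ns)
    # Histogram the arrival times into fixed-width bins.
    counts = Counter(int(t // bin_width_ns) for t in hit_times_ns)
    # Pick the fullest bin; ties go to the earliest bin for determinism.
    peak_bin = min(counts, key=lambda b: (-counts[b], b))
    return first_hit, peak_bin * bin_width_ns

# Made-up arrival times for one sensor, in nanoseconds.
hits = [103.2, 104.7, 104.1, 104.9, 110.3, 131.0]
first, peak = first_hit_and_peak(hits)
print(first, peak)
# prints: 103.2 104.0
```

Comparing these two numbers between a true and a predicted time series is one simple way to check whether a prediction preserves the timing features that matter for reconstruction, even when the full distribution is noisy.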

Why It Matters

Sharper angular resolution on neutrino events has direct consequences for neutrino astronomy. The difference between pointing at a specific galaxy (like IceCube’s detection of NGC 1068) and pointing at a vague patch of sky comes down to how well you know a neutrino’s direction. Better resolution means doing science at lower energies, with fainter sources, and with greater statistical confidence.
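How well a direction is known is commonly quantified as the opening angle between the true and reconstructed directions. The text does not say which metric the authors report, so the following is a generic sketch of that common measure; the function names and the example zenith and azimuth values are illustrative.

```python
import math

def unit_vector(zenith_deg, azimuth_deg):
    """Convert zenith/azimuth angles in degrees to a 3D unit vector."""
    z, a = math.radians(zenith_deg), math.radians(azimuth_deg)
    return (math.sin(z) * math.cos(a), math.sin(z) * math.sin(a), math.cos(z))

def opening_angle_deg(true_dir, reco_dir):
    """Angle in degrees between two unit vectors."""
    dot = sum(t * r for t, r in zip(true_dir, reco_dir))
    dot = max(-1.0, min(1.0, dot))  # guard against rounding just outside [-1, 1]
    return math.degrees(math.acos(dot))

true_dir = unit_vector(60.0, 30.0)
reco_dir = unit_vector(61.0, 30.0)  # reconstruction off by one degree in zenith
err = opening_angle_deg(true_dir, reco_dir)
print(round(err, 6))
# prints: 1.0
```

A resolution figure for a whole sample is then typically a summary statistic, such as the median, of this angle over many events.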

The technique is not specific to IceCube. The authors expect it to extend readily to water-based detectors like KM3NeT, Baikal-GVD, and the planned TRIDENT observatory. They also expect it to apply to other event types: not just the elongated “track” events from muons, but the compact “cascade” events from electron and tau neutrinos.

Next-generation telescopes like IceCube-Gen2 will instrument ten times the volume of current detectors, but module spacing will increase because the instrumented volume grows faster than sensor counts. Super-resolution offers a way to partially compensate, squeezing better physics out of sparser hardware. Machine learning has mostly been used to analyze detector data after collection. Here, it’s augmenting the instrument itself.

Bottom Line: By teaching a neural network to predict the readings of sensors that don’t exist, this IAIFI team has shown a new strategy for neutrino telescope reconstruction, one that could sharpen the sight of both existing and future detectors without a single additional piece of hardware.

IAIFI Research Highlights

This work takes deep learning super-resolution from computer vision and drops it into particle astrophysics. Image-processing architectures like UNet++ turn out to learn the physics of photon transport through kilometers of polar ice.

The paper introduces a two-stage encoding pipeline (VAE for temporal compression, UNet++ for spatial super-resolution) tailored to the sparse, variable-length time series data common in high-energy physics detectors.

Improved angular reconstruction of neutrino events will sharpen IceCube's and future telescopes' ability to pinpoint astrophysical neutrino sources, strengthening multi-messenger astronomy and the search for extreme cosmic accelerators.

The authors plan to extend this technique to realistic IceCube geometries, cascade events, and water-based detectors. Code is publicly available and the work appears on arXiv ([arXiv:2408.08474](https://arxiv.org/abs/2408.08474)).

Original Paper Details

Enhancing Events in Neutrino Telescopes through Deep Learning-Driven Super-Resolution

[arXiv:2408.08474](https://arxiv.org/abs/2408.08474)

Felix J. Yu, Nicholas Kamp, Carlos A. Argüelles
