DribbleBot: Dynamic Legged Manipulation in the Wild

Authors

Yandong Ji, Gabriel B. Margolis, Pulkit Agrawal

Abstract

DribbleBot (Dexterous Ball Manipulation with a Legged Robot) is a legged robotic system that can dribble a soccer ball under the same real-world conditions as humans (i.e., in-the-wild). We adopt the paradigm of training policies in simulation using reinforcement learning and transferring them into the real world. We overcome critical challenges of accounting for variable ball motion dynamics on different terrains and perceiving the ball using body-mounted cameras under the constraints of onboard computing. Our results provide evidence that current quadruped platforms are well-suited for studying dynamic whole-body control problems involving simultaneous locomotion and manipulation directly from sensory observations.

The Big Picture

Picture a golden retriever chasing a ball across a muddy field, instinctively adjusting its stride as paws sink into wet earth, eyes locked on that bouncing target. Now imagine programming a machine to do the same, not on a polished gym floor with overhead cameras and a supercomputer in the corner, but outside on sand, snow, and gravel, using only the sensors it carries on its back.

That is the challenge facing legged robots that must move and manipulate objects at the same time in uncontrolled environments. Meeting it is the difference between a robot arm bolted to a factory floor and a machine that can do useful work in the messy world we live in.

Past attempts at robotic soccer relied on indoor arenas, external motion-capture cameras, or robots that simply shoved a ball with their body. Real dribbling, which means kicking while running, balancing, and tracking a moving target all at once, remained unsolved.

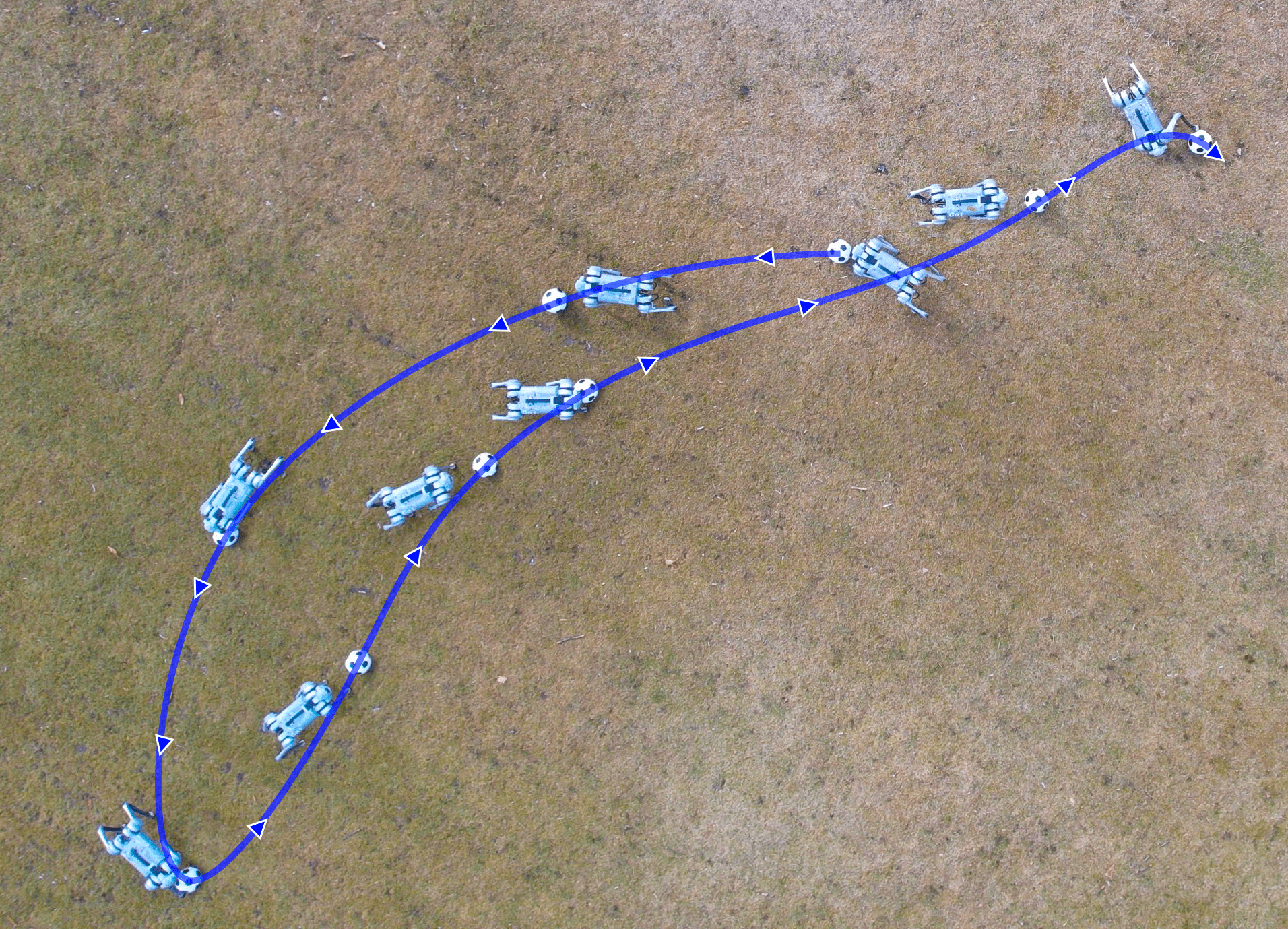

Researchers at MIT’s Improbable AI Lab built DribbleBot, a quadruped robot that dribbles a soccer ball across sand, gravel, mud, and snow using only onboard cameras and computing. No external infrastructure required.

By training entirely in simulation and transferring the learned behavior to reality, DribbleBot shows that off-the-shelf robot hardware is already capable of dynamic whole-body coordination tasks that were previously out of reach.

How It Works

The robot is a Unitree Go1, a small quadruped standing just 40 cm tall (roughly the height of a beagle). It carries two wide-angle fisheye cameras, each with a 210-degree field of view, and two onboard NVIDIA Jetson Xavier NX computers. Its playing partner is a size-3 soccer ball.

The core strategy is sim-to-real transfer: train the control policy inside a physics simulator, then deploy it on real hardware. The robot can practice millions of trials virtually rather than wearing out or breaking its own body on real ones. The team used NVIDIA’s Isaac Gym simulator running on a single RTX 3090 GPU.

Simulation alone isn’t enough, though. Three specific engineering problems had to be solved:

-

Variable ball dynamics. A soccer ball rolling across pavement behaves nothing like one rolling through mud. The researchers added a custom ball drag model to the simulator, a mathematical description of how surface friction slows the ball. They randomized those friction parameters so the policy learned to handle a wide range of real-world conditions.

-

Onboard visual perception. Conventional robot cameras have narrow fields of view, which fails when the ball is directly under the robot’s feet. The team switched to fisheye cameras and trained a separate ball detection module using a finetuned YOLOv7 model, accelerated with TensorRT to run at 30 Hz on the Jetson hardware. The dribbling policy never sees raw images; it only receives the estimated ball position. This keeps the policy lightweight and sidesteps the much harder problem of visual sim-to-real transfer.

-

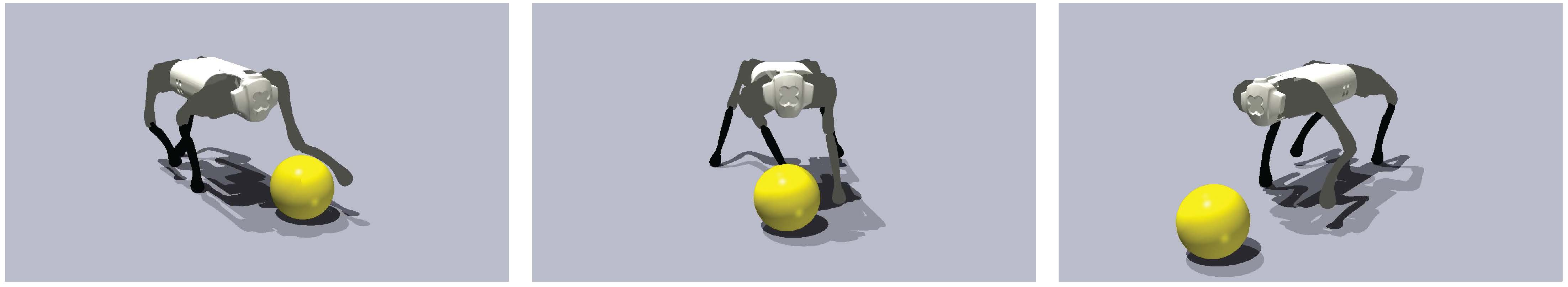

Fall recovery. Rough terrain will occasionally trip the robot. A dedicated recovery policy, trained under harsh falling conditions, lets it stand back up on its own and resume dribbling.
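To make the perception step more concrete, here is a minimal sketch of one way a detected ball pixel could be turned into a position estimate the policy can consume. It assumes an equidistant fisheye projection (pixel radius = f·θ), a camera whose optical axis points straight down, and a ball center resting on the plane z = ball radius. The function name, the calibration values, and the 0.095 m default radius (roughly a size-3 ball) are illustrative assumptions, not the authors' calibration or pipeline.

```python
import math
from typing import Optional, Tuple


def fisheye_pixel_to_ground(
    u: float,
    v: float,
    cx: float,
    cy: float,
    f: float,
    cam_height: float,
    ball_radius: float = 0.095,
) -> Optional[Tuple[float, float]]:
    """Back-project a detected ball center to a planar offset from the camera.

    Hypothetical helper. Assumptions (illustrative, not the paper's setup):
      * equidistant fisheye model: pixel radius r = f * theta
      * optical axis points straight down at the ground
      * the ball center lies on the plane z = ball_radius
    Returns (x, y) in meters along the image axes, or None if the pixel ray
    never reaches the ball-center plane.
    """
    du, dv = u - cx, v - cy
    r = math.hypot(du, dv)
    theta = r / f                       # angle between the ray and the optical axis
    if theta >= math.pi / 2:            # ray points at or above the horizon
        return None
    depth = cam_height - ball_radius    # vertical distance down to the ball-center plane
    if depth <= 0:
        return None
    radial = depth * math.tan(theta)    # horizontal distance from the point below the camera
    if r == 0.0:
        return (0.0, 0.0)
    return (radial * du / r, radial * dv / r)
```

A 210-degree lens keeps rays up to 105 degrees off-axis on the sensor, which is why the ball stays visible near the feet; in this simplified model only rays under 90 degrees can intersect the ground plane, so the rest return None.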

The dribbling policy takes proprioceptive sensor data (joint angles, joint velocities, and foot contact forces) plus the estimated ball position and a commanded ball velocity. It outputs target positions for all 12 motors at 50 Hz.
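The sketch below shows how such an observation vector might be assembled, including how a 30 Hz ball estimate can feed a 50 Hz policy by holding the latest value between detections. The class name, the field order, and the choice of one contact scalar per foot are hypothetical; the paper's exact layout may differ.

```python
from dataclasses import dataclass, field
from typing import List, Sequence

NUM_JOINTS = 12  # the Go1 has 12 actuated joints
NUM_FEET = 4


@dataclass
class ObservationBuilder:
    """Hypothetical assembler for the dribbling policy's flat input vector."""

    # Most recent ball estimate (x, y, z in meters, robot frame); starts at the origin.
    last_ball_pos: List[float] = field(default_factory=lambda: [0.0, 0.0, 0.0])

    def update_ball(self, ball_pos: Sequence[float]) -> None:
        # Called whenever the ~30 Hz detector publishes a new estimate.
        if len(ball_pos) != 3:
            raise ValueError("ball_pos must have 3 components")
        self.last_ball_pos = list(ball_pos)

    def build(
        self,
        joint_pos: Sequence[float],
        joint_vel: Sequence[float],
        foot_contacts: Sequence[float],
        ball_cmd_vel: Sequence[float],
    ) -> List[float]:
        # Called every 20 ms (50 Hz). Between detector updates the last ball
        # estimate is reused unchanged (a zero-order hold).
        if len(joint_pos) != NUM_JOINTS or len(joint_vel) != NUM_JOINTS:
            raise ValueError("expected 12 joint positions and 12 joint velocities")
        if len(foot_contacts) != NUM_FEET or len(ball_cmd_vel) != 2:
            raise ValueError("expected 4 foot-contact values and a 2D velocity command")
        return [*joint_pos, *joint_vel, *foot_contacts, *self.last_ball_pos, *ball_cmd_vel]
```

With these assumed sizes the vector has 33 entries (12 + 12 + 4 + 3 + 2), and the policy would map it to 12 joint position targets.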

Training used Proximal Policy Optimization (PPO), a standard reinforcement learning algorithm. The environment randomized the robot’s starting orientation, leg positions, ball location (anywhere within 2 meters of the robot), and target velocity. This forced the policy to learn omnidirectional dribbling rather than a single narrow maneuver.
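A minimal sketch of the per-episode randomization described above follows. Only the 2-meter ball radius comes from the text; the yaw-only orientation, the ±0.1 rad joint noise, and the 1.5 m/s maximum commanded speed are illustrative assumptions, and the names are hypothetical.

```python
import math
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class EpisodeInit:
    yaw: float                    # robot heading (rad)
    joint_offsets: List[float]    # perturbation around a nominal standing pose (rad)
    ball_xy: Tuple[float, float]  # ball position relative to the robot (m)
    cmd_vel: Tuple[float, float]  # commanded ball velocity (m/s)


def sample_episode(
    rng: random.Random,
    ball_radius_max: float = 2.0,  # from the text: ball within 2 m
    joint_noise: float = 0.1,      # assumed
    max_cmd_speed: float = 1.5,    # assumed
) -> EpisodeInit:
    yaw = rng.uniform(-math.pi, math.pi)
    joint_offsets = [rng.uniform(-joint_noise, joint_noise) for _ in range(12)]
    # sqrt keeps samples uniform over the disk's area instead of bunched at the center
    r = ball_radius_max * math.sqrt(rng.random())
    phi = rng.uniform(-math.pi, math.pi)
    ball_xy = (r * math.cos(phi), r * math.sin(phi))
    # Any direction can be commanded, so the policy must dribble omnidirectionally
    speed = rng.uniform(0.0, max_cmd_speed)
    heading = rng.uniform(-math.pi, math.pi)
    cmd_vel = (speed * math.cos(heading), speed * math.sin(heading))
    return EpisodeInit(yaw, joint_offsets, ball_xy, cmd_vel)
```

Passing an explicit `random.Random` keeps episodes reproducible per seed; a GPU simulator would typically draw these values in batched tensors instead, but the distributions are the same idea.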

Why It Matters

DribbleBot matters well beyond the novelty of a robot playing soccer. It’s a proof of concept for a broad class of problems: any scenario where a robot must move through the real world while simultaneously manipulating an object. Think carrying packages over uneven ground, shepherding objects in search-and-rescue, or operating anywhere fixed camera infrastructure doesn’t exist.

The team achieved all of this on consumer-grade hardware. The Go1 costs far less than research-grade platforms, and the Jetson NX units are commodity compute, not server racks. The bottleneck in this kind of robot control is increasingly algorithmic rather than hardware.

The modular design matters here too. Splitting perception, dribbling control, and fall recovery into distinct trained components gives you a workable architecture for harder tasks. Future work could extend this to humanoid platforms, non-spherical objects, or multi-robot coordination where the stakes are higher than a soccer match.
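One way the separate components could be composed is a small supervisor that decides which policy runs each control step. The sketch below switches on body tilt, computed from the gravity vector expressed in the body frame, and uses two thresholds (hysteresis) so the mode does not flicker near the boundary. The class, the 60-degree and 30-degree thresholds, and tilt as the switching signal are all assumptions for illustration, not the paper's mechanism.

```python
import math
from enum import Enum
from typing import Sequence


class Mode(Enum):
    DRIBBLE = "dribble"
    RECOVER = "recover"


class Supervisor:
    """Hypothetical switch between a dribbling policy and a recovery policy."""

    def __init__(self, fall_deg: float = 60.0, upright_deg: float = 30.0):
        self.fall = math.radians(fall_deg)        # tilt that triggers recovery
        self.upright = math.radians(upright_deg)  # tilt that hands control back
        self.mode = Mode.DRIBBLE

    @staticmethod
    def tilt(gravity_body: Sequence[float]) -> float:
        # Angle between the body z-axis and world "up". The gravity direction in
        # the body frame is (0, 0, -1) when the robot stands level.
        gx, gy, gz = gravity_body
        norm = math.sqrt(gx * gx + gy * gy + gz * gz)
        return math.acos(max(-1.0, min(1.0, -gz / norm)))

    def step(self, gravity_body: Sequence[float]) -> Mode:
        angle = self.tilt(gravity_body)
        if self.mode is Mode.DRIBBLE and angle > self.fall:
            self.mode = Mode.RECOVER
        elif self.mode is Mode.RECOVER and angle < self.upright:
            self.mode = Mode.DRIBBLE
        return self.mode
```

Because each policy only sees its own inputs, either one could be retrained or replaced without touching the other, which is the practical benefit of the modular split.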

Sim-to-real reinforcement learning, combined with careful engineering of terrain dynamics and onboard perception, makes dynamic whole-body manipulation possible in the wild using hardware and compute that already exist today.

IAIFI Research Highlights

This work sits at the intersection of robotics, computer vision, and physics-based simulation. The combination of reinforcement learning with custom terrain dynamics modeling solves a real-world control problem that no single discipline could handle alone.

DribbleBot advances sim-to-real transfer by separating perception from control and randomizing physical parameters during training. The result is a policy that runs on hardware with tight computational constraints.

The custom ball drag model captures the physics of rolling contact across different surface types. Accurate physical modeling in simulation turns out to be a key factor in whether a learned policy actually works in the real world.
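The sketch below illustrates the general idea of a randomized ball drag model. The specific form is an assumption: a constant rolling-resistance deceleration plus a velocity-proportional damping term, with per-episode coefficients drawn from made-up ranges. It is not the drag model published with DribbleBot, but it shows how randomizing two coefficients yields very different rolling behavior from the same kick.

```python
import math
import random
from typing import Tuple

G = 9.81  # gravitational acceleration, m/s^2


def drag_accel(vx: float, vy: float, rolling_coeff: float,
               viscous_coeff: float) -> Tuple[float, float]:
    """Deceleration of a rolling ball (illustrative model, not the paper's).

    rolling_coeff: dimensionless rolling-resistance coefficient (times G)
    viscous_coeff: velocity-proportional damping, in 1/s
    """
    speed = math.hypot(vx, vy)
    if speed == 0.0:
        return (0.0, 0.0)
    ux, uy = vx / speed, vy / speed
    a = rolling_coeff * G + viscous_coeff * speed  # magnitude, opposing motion
    return (-a * ux, -a * uy)


def roll_distance(v0: float, rolling_coeff: float, viscous_coeff: float,
                  dt: float = 0.005, v_stop: float = 1e-3) -> float:
    """Distance a ball launched at speed v0 travels before (nearly) stopping."""
    v, x = v0, 0.0
    while v > v_stop:  # threshold avoids an endless loop when rolling_coeff == 0
        ax, _ = drag_accel(v, 0.0, rolling_coeff, viscous_coeff)
        v = max(0.0, v + ax * dt)  # clamp so drag never reverses the ball
        x += v * dt                # semi-implicit Euler: use the updated speed
    return x


def sample_surface(rng: random.Random) -> Tuple[float, float]:
    # Hypothetical ranges meant to span smooth pavement through soft terrain
    return rng.uniform(0.01, 0.2), rng.uniform(0.0, 1.5)
```

With damping switched off, the distance should approach the closed-form v0² / (2 · rolling_coeff · G), which is a quick sanity check on the integrator.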

Future directions include extending whole-body dribbling to bipedal platforms and tackling multi-object manipulation. The paper is available as [arXiv:2304.01159](https://arxiv.org/abs/2304.01159) from MIT's Improbable AI Lab.

Original Paper Details

DribbleBot: Dynamic Legged Manipulation in the Wild

arXiv:2304.01159

Yandong Ji, Gabriel B. Margolis, Pulkit Agrawal