Discovering Symmetry Breaking in Physical Systems with Relaxed Group Convolution

Authors

Rui Wang, Elyssa Hofgard, Han Gao, Robin Walters, Tess E. Smidt

Abstract

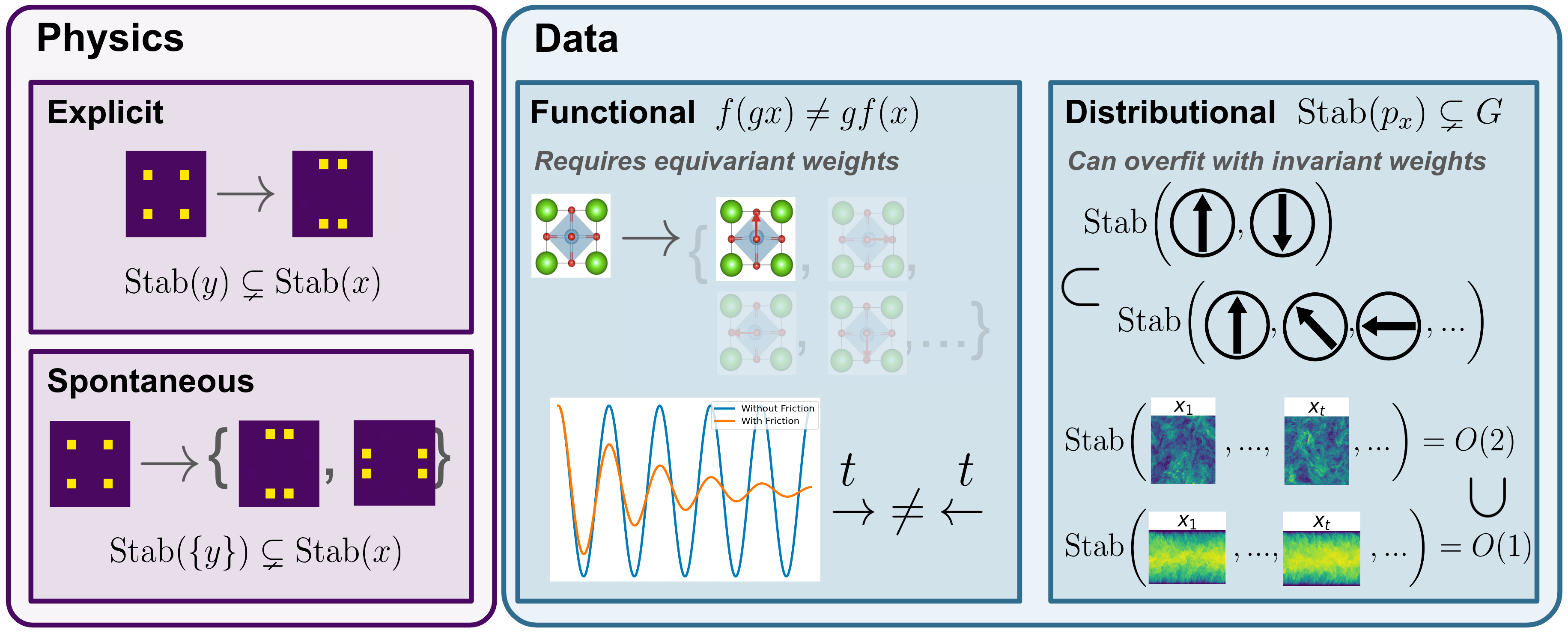

Modeling symmetry breaking is essential for understanding the fundamental changes in the behaviors and properties of physical systems, from microscopic particle interactions to macroscopic phenomena like fluid dynamics and cosmic structures. Thus, identifying sources of asymmetry is an important tool for understanding physical systems. In this paper, we focus on learning asymmetries of data using relaxed group convolutions. We provide both theoretical and empirical evidence that this flexible convolution technique allows the model to maintain the highest level of equivariance that is consistent with data and discover the subtle symmetry-breaking factors in various physical systems. We employ various relaxed group convolution architectures to uncover various symmetry-breaking factors that are interpretable and physically meaningful in different physical systems, including the phase transition of crystal structure, the isotropy and homogeneity breaking in turbulent flow, and the time-reversal symmetry breaking in pendulum systems.

The Big Picture

Imagine you’re trying to teach a machine to recognize snowflakes. You build in a rule: snowflakes have six-sided symmetry, so rotate one by 60 degrees and it looks identical. The model learns beautifully. Then you encounter a real snowflake that’s slightly imperfect, bent by a gust of wind, or half-melted. Your perfectly symmetric model has no idea what to do. It was built to see symmetry, and it cannot see the break.

This is not just a snowflake problem. Symmetry and its violation sit at the heart of nearly every interesting phenomenon in physics.

When water freezes into ice, the random molecular dance of the liquid snaps into a rigid, ordered crystal grid, losing its ability to look the same from every angle. A turbulent flow develops pockets of directional preference. A swinging pendulum, pushed by friction or an asymmetric kick, stops looking the same whether you play it forward or backward in time. The breaking of symmetry is where the real physics lives.

A team from MIT, Harvard, and Northeastern has built a neural network framework that doesn’t just tolerate symmetry breaking. It finds where symmetry fails, figures out what kind of failure it is, and puts a number on it.

Key Insight: By replacing rigid, symmetric convolution weights with flexible “relaxed” weights that can learn how much symmetry to enforce, the model discovers and quantifies the specific ways a physical system violates its expected symmetries.

How It Works

Standard equivariant neural networks (models whose outputs transform predictably when you rotate or reflect the input) are workhorses of physics-informed machine learning. Baking symmetry into the architecture gives the model free generalization: rotate the input, and the output rotates in lockstep, no extra training required. But this rigidity becomes a liability when the physical system itself breaks that symmetry.

The fix is relaxed group convolution. In a standard equivariant convolution, every rotated copy of a filter shares the same weights, regardless of orientation. In a relaxed convolution, each rotated copy gets its own trainable weight, so the network can treat orientations differently when the data demands it.
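In symbols, one way to write the contrast is the sketch below, which follows the relaxed group convolution formulation as it is commonly stated in the literature. The notation is introduced here for illustration and does not appear in this summary: $f$ is a feature map on the group $G$, $\psi$ and $\psi_l$ are filters, $L$ is the number of filters in the bank, and $w_l(h)$ are the relaxed weights.

$$
(f \star_G \psi)(g) = \sum_{h \in G} f(h)\, \psi(g^{-1}h)
$$

$$
(f \,\tilde{\star}_G\, \psi)(g) = \sum_{h \in G} \sum_{l=1}^{L} w_l(h)\, f(h)\, \psi_l(g^{-1}h)
$$

When every $w_l(h)$ is independent of $h$, the second expression collapses to a standard group convolution with the single filter $\sum_l w_l \psi_l$.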

These relaxed weights are what make the approach work. After training, you can read them like a thermometer. If the weights for all rotations converge to the same value, the data is consistent with full rotational symmetry. If they diverge, the pattern of divergence tells you which symmetry broke and by how much.
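As a rough illustration of reading the weights, the sketch below takes one relaxed weight per rotation of a four-fold cyclic group and reports how far the weights spread and which rotations still leave the pattern unchanged. The helper names `spread` and `preserved_shifts` and all numeric values are hypothetical, chosen only for illustration; they are not results or code from the paper.

```python
def spread(weights):
    """Largest deviation of any weight from the mean; 0 means fully uniform."""
    mean = sum(weights) / len(weights)
    return max(abs(w - mean) for w in weights)


def preserved_shifts(weights, tol=1e-6):
    """Return the cyclic shifts s that leave the weight pattern unchanged.

    weights[h] is the (hypothetical) relaxed weight attached to the h-th
    rotation of a cyclic group with n = len(weights) elements.
    """
    n = len(weights)
    return [
        s for s in range(n)
        if all(abs(weights[(h + s) % n] - weights[h]) < tol for h in range(n))
    ]


# Illustrative values only, for rotations by 0, 90, 180 and 270 degrees.
symmetric = [0.8, 0.8, 0.8, 0.8]
broken = [1.0, 0.6, 1.0, 0.6]

print(preserved_shifts(symmetric), spread(symmetric))
print(preserved_shifts(broken), spread(broken))
```

In this toy setting the uniform pattern is preserved by all four shifts, while the broken pattern is preserved only by shifts 0 and 2, that is, rotations by 0 and 180 degrees: the four-fold symmetry has dropped to a two-fold one.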

The authors prove a formal guarantee: a relaxed group convolution model will learn the highest level of equivariance consistent with the data. Not more, not less. When the data is fully symmetric, the relaxed weights converge to uniform values and the model behaves like a standard equivariant network. When the data breaks symmetry in a specific direction, the weights encode that exact directional preference.
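The uniform-weight case can be checked numerically on a toy example. The sketch below assumes the cyclic group of four rotations, signals defined on the group itself, and made-up filter and weight values. The function names are hypothetical, and this is not the authors' implementation or a demonstration of their theorem, only a check that uniform relaxed weights give an equivariant layer while non-uniform ones do not.

```python
def relaxed_group_conv(f, filters, weights):
    """Relaxed convolution on the cyclic group Z_n.

    out[g] = sum over h and l of f[h] * weights[l][h] * filters[l][(h - g) % n]
    """
    n = len(f)
    return [
        sum(
            f[h] * weights[l][h] * filters[l][(h - g) % n]
            for h in range(n)
            for l in range(len(filters))
        )
        for g in range(n)
    ]


def shift(f, s):
    """Act on a signal by the group element s: (s . f)(g) = f(g - s)."""
    n = len(f)
    return [f[(g - s) % n] for g in range(n)]


def equivariance_error(f, filters, weights, s):
    """Max difference between 'shift then convolve' and 'convolve then shift'."""
    a = relaxed_group_conv(shift(f, s), filters, weights)
    b = shift(relaxed_group_conv(f, filters, weights), s)
    return max(abs(x - y) for x, y in zip(a, b))


# Made-up signal and filter bank on Z_4.
f = [1.0, 2.0, 0.5, -1.0]
filters = [[1.0, 0.0, -1.0, 0.0], [0.5, 0.5, 0.0, 0.0]]

uniform = [[0.7] * 4, [0.3] * 4]                  # same weight for every h
relaxed = [[0.7, 0.9, 0.7, 0.5], [0.3] * 4]       # weights vary with h

err_uniform = equivariance_error(f, filters, uniform, s=1)
err_relaxed = equivariance_error(f, filters, relaxed, s=1)
print(err_uniform, err_relaxed)
```

With uniform weights the two orders of operation agree up to floating-point rounding. With the varying weights they differ noticeably, which is the sense in which the layer has given up equivariance under that shift.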

The team tested the framework across three physical systems:

- Crystal phase transitions: As a crystal undergoes a structural phase change, its lattice symmetry drops from a higher-symmetry group to a lower one. The relaxed weights tracked this transition, recovering Landau order parameters (the classic physics tool for measuring how “broken” a symmetry is as a system crosses a phase boundary).

- Turbulent channel flow and isotropic flow: Channel flow has walls that break both isotropy (directional uniformity) and homogeneity (positional uniformity), while isotropic flow develops subtle directional biases in finite simulation boxes. The relaxed weights identified both effects independently, even pinpointing where in a turbulent channel the symmetry broke most strongly.

- Pendulum with time-reversal breaking: A conservative pendulum looks identical played forward and backward. Add friction, and it doesn’t. The model detected this time-reversal asymmetry through its relaxed weight pattern, and the magnitude of the learned asymmetry tracked the physical damping coefficient.
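The time-reversal statement in the pendulum item can be checked directly on the equations of motion, independent of any neural network. The sketch below assumes a simple pendulum with a linear friction term, integrates it with a fourth-order Runge-Kutta step, then flips the velocity and integrates for the same duration again. All parameter values and function names are assumptions made for illustration, not the paper's setup.

```python
import math


def rk4_step(theta, omega, dt, damping, g_over_l=9.81):
    """One RK4 step of theta'' = -(g/l) sin(theta) - damping * theta'."""
    def deriv(th, om):
        return om, -g_over_l * math.sin(th) - damping * om

    k1 = deriv(theta, omega)
    k2 = deriv(theta + 0.5 * dt * k1[0], omega + 0.5 * dt * k1[1])
    k3 = deriv(theta + 0.5 * dt * k2[0], omega + 0.5 * dt * k2[1])
    k4 = deriv(theta + dt * k3[0], omega + dt * k3[1])
    theta += dt / 6.0 * (k1[0] + 2 * k2[0] + 2 * k3[0] + k4[0])
    omega += dt / 6.0 * (k1[1] + 2 * k2[1] + 2 * k3[1] + k4[1])
    return theta, omega


def simulate(theta, omega, damping, steps=2000, dt=0.001):
    """Integrate forward in time and return the final (theta, omega)."""
    for _ in range(steps):
        theta, omega = rk4_step(theta, omega, dt, damping)
    return theta, omega


def time_reversal_error(theta0, omega0, damping):
    """Run forward, flip the velocity, run forward again.

    For a time-reversal-symmetric system the result is (theta0, -omega0),
    so the returned distance is close to zero.
    """
    theta, omega = simulate(theta0, omega0, damping)
    theta_b, omega_b = simulate(theta, -omega, damping)
    return math.hypot(theta_b - theta0, omega_b + omega0)


err_conservative = time_reversal_error(1.0, 0.0, damping=0.0)
err_damped = time_reversal_error(1.0, 0.0, damping=0.5)
print(err_conservative, err_damped)
```

Without friction the pendulum retraces its path and the error stays at the level of integration error. With friction the energy lost on the way out is not recovered on the way back, so the trajectory does not return to its starting state.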

On fluid super-resolution tasks, relaxed convolution models outperformed both fully symmetric and fully unconstrained baselines while producing more interpretable results.

Why It Matters

Physics has long used symmetry as a guiding principle precisely because broken symmetry signals something important. The discovery of parity violation, the realization that the weak nuclear force does not treat left-handed and right-handed particles equally, was one of the most shocking results of 20th-century physics. Spontaneous symmetry breaking underlies the Higgs mechanism, superconductivity, and the formation of all ordered matter.

Equivariant machine learning has mostly worked by assuming symmetry and exploiting it. This paper opens the complementary approach: assume symmetry as a prior, then let data reveal where and how it fails.

Almost every physical simulation, experimental dataset, or observational catalog has symmetry that is expected but imperfect, which makes each one a candidate for this kind of analysis. Cosmological data might reveal subtle violations of statistical isotropy. The same approach could flag hidden asymmetric forces in molecular dynamics simulations, or catch unexpected symmetry-breaking patterns in lattice QCD at finite spacing.

The relaxed weights double as a scientific instrument, returning physically interpretable measurements instead of black-box predictions.

Bottom Line: Relaxed group convolutions let neural networks learn symmetry from data, detecting where it breaks and by how much across systems from crystal lattices to turbulent flows. The result: models that are both more accurate and more scientifically informative than fully symmetric or unconstrained alternatives.

IAIFI Research Highlights

This work connects representation theory, physics symmetry principles, and deep learning architecture design, using group theory to build neural networks that double as scientific instruments for detecting physically meaningful symmetry violations.

Relaxed group convolution provides a principled way to learn the right inductive bias from data, with theoretical guarantees, outperforming both over-constrained symmetric models and unconstrained models on fluid super-resolution benchmarks.

The framework rediscovers known symmetry-breaking effects (phase transition order parameters, turbulent anisotropy, time-reversal violation from damping) in a purely data-driven way, supporting its use as a general-purpose tool for probing symmetry in physical systems.

Future directions include applying relaxed convolutions to high-energy physics datasets and cosmological observations where even small symmetry violations have major theoretical implications; the work appeared at ICML 2024 ([arXiv:2310.02299](https://arxiv.org/abs/2310.02299)).

Original Paper Details

Discovering Symmetry Breaking in Physical Systems with Relaxed Group Convolution

arXiv:2310.02299

Rui Wang, Elyssa Hofgard, Han Gao, Robin Walters, Tess E. Smidt
