Discovering Sparse Interpretable Dynamics from Partial Observations

Authors

Peter Y. Lu, Joan Ariño, Marin Soljačić

Abstract

Identifying the governing equations of a nonlinear dynamical system is key to both understanding the physical features of the system and constructing an accurate model of the dynamics that generalizes well beyond the available data. We propose a machine learning framework for discovering these governing equations using only partial observations, combining an encoder for state reconstruction with a sparse symbolic model. Our tests show that this method can successfully reconstruct the full system state and identify the underlying dynamics for a variety of ODE and PDE systems.

The Big Picture

Imagine trying to understand the rules of chess by watching only half the board. Some pieces move in plain sight, but others are hidden behind a curtain. Could you reconstruct where the hidden pieces are and figure out the rules of the game at the same time? That’s the challenge facing scientists who study turbulent fluids, laser pulses in fiber-optic cables, and other complex physical systems where complete measurements are either impossible or far too expensive.

Partial-observation problems show up everywhere. A weather station measures temperature and pressure but not every air molecule’s velocity. A telescope records the intensity of light but not its phase (where the wave is in its oscillation cycle), and the phase carries information that brightness alone cannot reveal.

Without the full picture, AI models struggle to learn the true underlying rules. And without those rules, predictions fall apart the moment conditions change.

A team of MIT physicists has built a machine learning framework that does both at once: it reconstructs hidden parts of a system’s state and discovers the symbolic governing equations, all from incomplete observations.

Key Insight: By training a neural network encoder and a sparse symbolic model jointly, this method recovers both the hidden variables and the governing equations, in symbolic form, from partial measurements alone.

How It Works

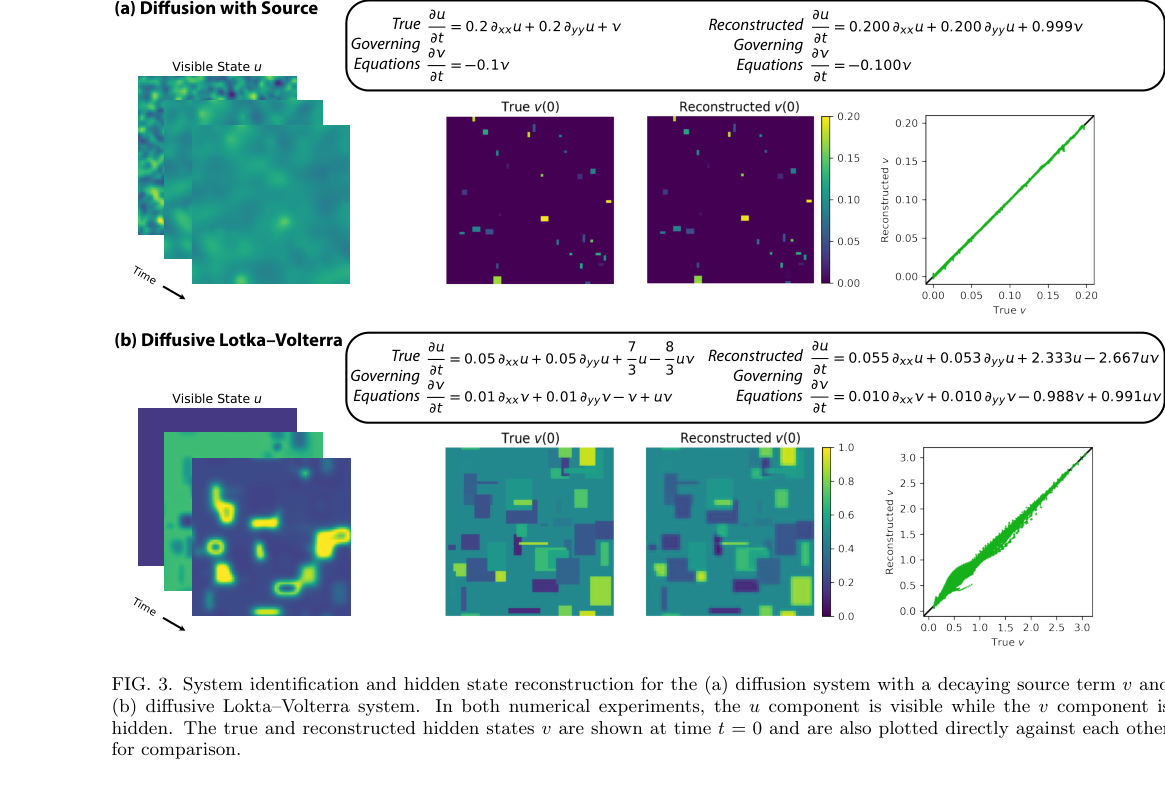

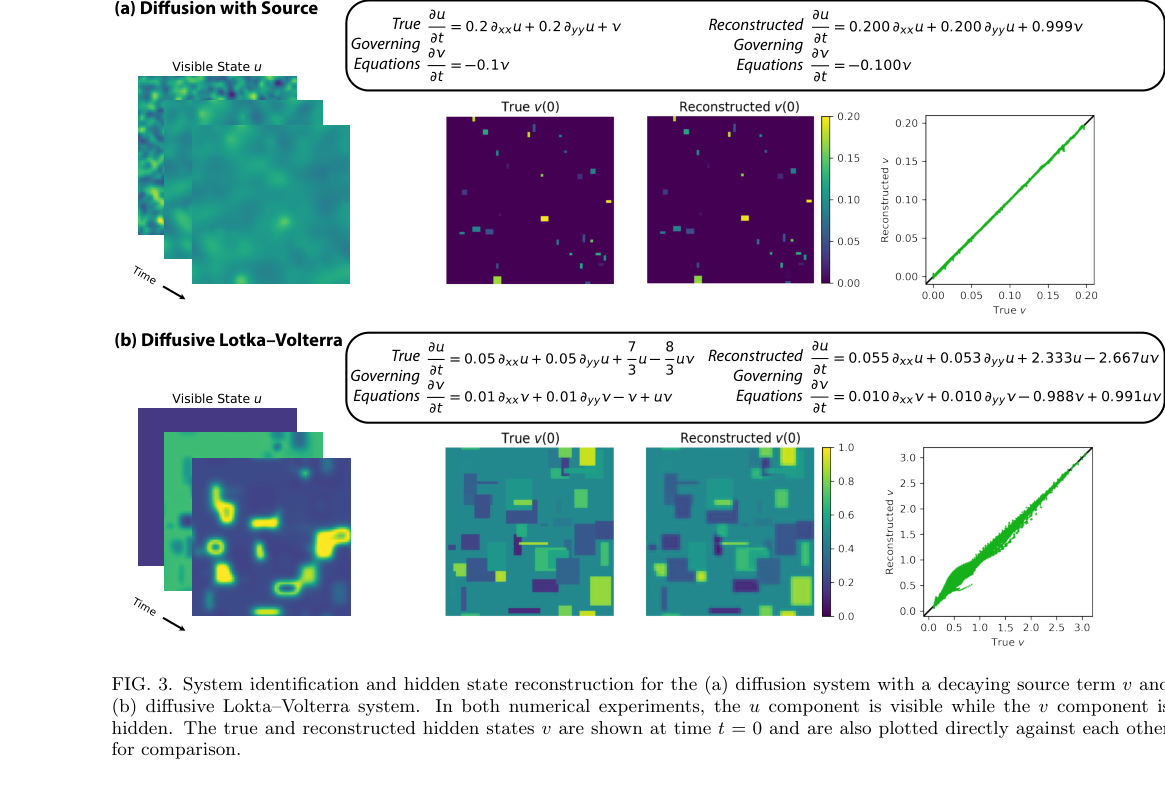

The architecture has two parts. The first is an encoder: a neural network that takes a sequence of visible measurements and infers the values of the unobserved variables. The second is a sparse symbolic model that represents the governing equations as a weighted sum of candidate mathematical terms, such as polynomials, spatial derivatives, trigonometric functions, or whatever else is physically plausible.
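The following minimal Python sketch makes the second component concrete. It shows a candidate library and a sparse weighted-sum model for a two-variable state (x, v). The library entries, the dictionary layout, and the helper names `symbolic_rhs` and `to_equations` are hypothetical illustrations, not the paper's implementation. The coefficients are set by hand rather than learned.

```python
import math

# Hypothetical candidate library for a two-variable state (x, v). In practice the
# library is chosen per system (for PDEs it would include spatial derivatives).
LIBRARY = {
    "1": lambda x, v: 1.0,
    "x": lambda x, v: x,
    "v": lambda x, v: v,
    "x^2": lambda x, v: x * x,
    "x*v": lambda x, v: x * v,
    "v^2": lambda x, v: v * v,
    "sin(x)": lambda x, v: math.sin(x),
}


def symbolic_rhs(coeffs, x, v):
    """Evaluate each state derivative as a weighted sum of library terms.

    coeffs maps a state variable to {term name: coefficient}; terms left out
    have coefficient zero, which is what makes the model sparse.
    """
    return {
        var: sum(c * LIBRARY[term](x, v) for term, c in terms.items())
        for var, terms in coeffs.items()
    }


def to_equations(coeffs):
    """Render the nonzero terms as human-readable equations."""
    lines = []
    for var, terms in coeffs.items():
        rhs = " + ".join(f"({c:g})*{term}" for term, c in terms.items() if c != 0.0)
        lines.append(f"d{var}/dt = {rhs or '0'}")
    return lines


# Hand-chosen example (not learned): a damped pendulum,
# dx/dt = v, dv/dt = -sin(x) - 0.1 v.
pendulum = {"x": {"v": 1.0}, "v": {"sin(x)": -1.0, "v": -0.1}}
for line in to_equations(pendulum):
    print(line)
print(symbolic_rhs(pendulum, 0.5, 0.0))
```

Only the nonzero coefficients are stored. The printed equations therefore contain exactly the terms that survive, which is the kind of readable output the sparse model is meant to produce.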

How do you train these two pieces together when you can only check predictions against partial observations? The trick is higher-order time derivatives. Not just how fast something changes, but how fast that rate of change is itself changing. Think position, velocity, acceleration. Here’s the training loop:

- The encoder produces a reconstructed full state: the visible variables plus estimates of the hidden ones.

- The symbolic model generates predicted time derivatives of that state.

- Automatic differentiation applies the chain rule through the symbolic model exactly, turning these first-order predictions into higher-order time derivatives of the visible variables.

- Those predicted derivatives are compared against finite-difference estimates from measurements at nearby moments in time.

- The whole system trains end-to-end to minimize the mismatch.
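The loop above can be sketched for a toy case: a harmonic oscillator where only x(t) is observed and the velocity v is hidden. This is a minimal illustration under stated assumptions. The "encoder" is a hypothetical finite-difference stand-in for the trained neural network, and the library is just {x, v}. The chain rule is also written out by hand, whereas the real framework would use automatic differentiation and would train the encoder and the coefficients together by gradient descent.

```python
import math

# Toy assumption: only x(t) is observed; the velocity v is hidden.
# Ground truth used to generate data: dx/dt = v, dv/dt = -x, so x(t) = cos(t).
H = 0.01
X_OBS = [math.cos(i * H) for i in range(200)]


def encoder(x_window, h=H):
    """Hypothetical stand-in for the neural encoder: estimate the hidden v
    from a 3-sample window of observations (a trained network in the real method)."""
    return (x_window[2] - x_window[0]) / (2 * h)


def model(c, x, v):
    """Sparse symbolic model with library {x, v}; returns (f_x, f_v) = (dx/dt, dv/dt)."""
    c_xx, c_xv, c_vx, c_vv = c
    return c_xx * x + c_xv * v, c_vx * x + c_vv * v


def predicted_derivatives(c, x, v):
    """First and second time derivatives of the observed x implied by the model.

    Chain rule: d2x/dt2 = (df_x/dx) * f_x + (df_x/dv) * f_v, where for this
    linear library df_x/dx = c_xx and df_x/dv = c_xv (autodiff would do this step).
    """
    f_x, f_v = model(c, x, v)
    c_xx, c_xv, _, _ = c
    return f_x, c_xx * f_x + c_xv * f_v


def derivative_matching_loss(c):
    """Mean squared mismatch between predicted and finite-difference derivatives of x."""
    total, count = 0.0, 0
    for i in range(1, len(X_OBS) - 1):
        window = X_OBS[i - 1 : i + 2]
        v_hat = encoder(window)  # reconstructed hidden state
        d1_pred, d2_pred = predicted_derivatives(c, window[1], v_hat)
        d1_fd = (window[2] - window[0]) / (2 * H)
        d2_fd = (window[2] - 2 * window[1] + window[0]) / H**2
        total += (d1_pred - d1_fd) ** 2 + (d2_pred - d2_fd) ** 2
        count += 1
    return total / count


# Coefficients ordered (c_xx, c_xv, c_vx, c_vv); the true law is (0, 1, -1, 0).
print(derivative_matching_loss((0.0, 1.0, -1.0, 0.0)))  # near zero
print(derivative_matching_loss((0.0, 1.0, -2.0, 0.0)))  # clearly larger
```

This stand-in encoder is itself a finite difference, so the first-derivative term is matched automatically for the correct coefficients. With a learned encoder, that term is what ties the reconstructed hidden state to the data. Wrong coefficients show up in the second-derivative term.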

This sidesteps a chicken-and-egg problem. You don’t need to know the hidden states to train the symbolic model, and you don’t need the governing equations to train the encoder. The training signal flows entirely through observable quantities: time derivatives of what you can measure.

The sparsity piece matters too. Real physical laws are parsimonious: Newton’s second law, F = ma, relates three quantities, not three hundred. The framework pushes the symbolic model’s coefficients toward zero unless they earn their keep, favoring compact, interpretable equations. What comes out isn’t a black-box prediction. It’s an actual equation you can write on a whiteboard and reason about.
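One standard way to push coefficients toward zero is an L1 penalty. A simple solver for it is proximal gradient descent (ISTA), whose soft-thresholding step sets small coefficients to exactly zero. The sketch below applies ISTA to a fixed library with known derivatives. It illustrates the sparsity mechanism only and is not claimed to be the paper's exact regularization or training schedule. The toy data (true law dx/dt = -2x), the library {1, x, x², x³}, and the values of `lam`, `step`, and `iterations` are assumptions chosen for the example.

```python
def soft_threshold(value, threshold):
    """Proximal operator of threshold * |value|: shrink toward zero, clip small values to 0."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def ista_sparse_fit(library, target, lam=0.01, step=0.5, iterations=3000):
    """Minimize (1/2n)||Theta c - y||^2 + lam * ||c||_1 by proximal gradient descent.

    `step` is assumed small enough for convergence on this data
    (below 1 / largest eigenvalue of Theta^T Theta / n).
    """
    n, k = len(target), len(library[0])
    coeffs = [0.0] * k
    for _ in range(iterations):
        # Residual of the current model, Theta c - y, at every sample.
        residual = [
            sum(row[j] * coeffs[j] for j in range(k)) - y
            for row, y in zip(library, target)
        ]
        # Gradient of the least-squares term with respect to each coefficient.
        grad = [sum(library[i][j] * residual[i] for i in range(n)) / n for j in range(k)]
        # Gradient step followed by the L1 proximal (soft-threshold) step.
        coeffs = [soft_threshold(c - step * g, step * lam) for c, g in zip(coeffs, grad)]
    return coeffs


# Toy data (assumed): the true law is dx/dt = -2x, sampled on a symmetric grid.
xs = [-1.0 + 2.0 * i / 40 for i in range(41)]
dxdt = [-2.0 * x for x in xs]
term_names = ["1", "x", "x^2", "x^3"]
theta = [[1.0, x, x**2, x**3] for x in xs]

coeffs = ista_sparse_fit(theta, dxdt)
for name, c in zip(term_names, coeffs):
    print(f"{name:>4}: {c:+.4f}")
```

On this data the fit keeps only the x term, with a coefficient slightly smaller in magnitude than 2. The L1 penalty shrinks the surviving coefficients as well as removing the rest, which is why sparse-regression pipelines often refit the selected terms without the penalty.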

The team tested the method on several systems of increasing difficulty. For ordinary differential equations, they recovered the dynamics of coupled nonlinear oscillators with hidden coordinates. For partial differential equations, they went after the nonlinear Schrödinger equation, a workhorse of fiber optics and quantum mechanics. There, the method reconstructed the phase of the field from intensity-only measurements (the classic phase retrieval problem) while simultaneously discovering the governing equation. In each case, the discovered symbolic dynamics matched the true equations.

Why It Matters

Fitting interpretable equations to data today typically requires either complete measurements or strong prior assumptions about how the system works. This framework relaxes both requirements, which could make equation discovery possible in settings where complete instrumentation is physically out of reach: deep ocean currents, biological neural circuits, plasma in fusion reactors.

There’s a practical AI angle here too. Many data-driven dynamics models are black boxes that work well within training conditions but break the moment something shifts. A symbolic equation captures the structure of the physics, not just its surface statistics. A model that discovers ∂ψ/∂t = i(∂²ψ/∂x² + |ψ|²ψ) doesn’t just interpolate; it encodes a law that generalizes across initial conditions, boundary conditions, and system sizes. That’s what symbolic methods buy you over purely neural approaches.
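The nonlinear Schrödinger equation in the form quoted here, ∂ψ/∂t = i(∂²ψ/∂x² + |ψ|²ψ), shows why phase retrieval is needed. Substituting ψ(x, t) = √2 sech(x) e^{it} into this equation shows it is an exact soliton solution for this normalization. Other common normalizations, such as a factor of ½ on ∂²ψ/∂x², change the constants. The Python sketch below checks the solution numerically with finite differences. It then shows that the intensity |ψ|² stays fixed while the phase rotates. The specific solution, grid, and step size `h` are illustrative assumptions, not data from the paper.

```python
import cmath
import math


def psi(x, t):
    """Assumed closed-form bright soliton for dpsi/dt = i (psi_xx + |psi|^2 psi)."""
    return math.sqrt(2.0) / math.cosh(x) * cmath.exp(1j * t)


def nls_residual(x, t, h=1e-3):
    """Finite-difference residual of psi_t - i (psi_xx + |psi|^2 psi) at one point."""
    psi_t = (psi(x, t + h) - psi(x, t - h)) / (2 * h)
    psi_xx = (psi(x + h, t) - 2 * psi(x, t) + psi(x - h, t)) / h**2
    p = psi(x, t)
    return psi_t - 1j * (psi_xx + abs(p) ** 2 * p)


# Residual on a small grid of points, x in [-3, 3] and t in [0, 2].
points = [(i / 4, j / 4) for i in range(-12, 13) for j in range(9)]
max_residual = max(abs(nls_residual(x, t)) for x, t in points)
print(f"max finite-difference residual: {max_residual:.1e}")

# Intensity-only data: |psi|^2 is the same at t = 0 and t = 2 ...
print(abs(psi(0.5, 0.0)) ** 2, abs(psi(0.5, 2.0)) ** 2)
# ... even though the phase has advanced by 2 radians.
print(cmath.phase(psi(0.5, 0.0)), cmath.phase(psi(0.5, 2.0)))
```

For this stationary soliton, the phase evolution leaves no trace in the intensity at all. For general solutions, the phase does influence how the intensity evolves, and that coupling is the signal an encoder can exploit to reconstruct it.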

The method has real limitations. It assumes you know the number of hidden state dimensions up front, and scaling to high-dimensional PDEs with many hidden fields hasn’t been demonstrated yet. It also depends on a good candidate library of mathematical terms; if the true dynamics involve functions not in your library, you won’t find them. Using physical knowledge to guide the choice of library is one natural way forward.

Bottom Line: Machine learning can reconstruct hidden system states and discover sparse governing equations from partial observations, pushing physics-informed symbolic discovery into a much wider range of real problems.

IAIFI Research Highlights

This work combines machine learning with classical system identification. A neural encoder solves a physics-motivated inverse problem, while the sparse symbolic layer produces equations with genuine physical meaning.

The joint encoder-symbolic architecture, trained end-to-end through higher-order derivative matching, gives researchers a new way to learn interpretable dynamics when only partial measurements are available.

By reconstructing phase and discovering the governing equation for the nonlinear Schrödinger equation from intensity-only data, the method addresses a long-standing measurement problem in quantum optics, fiber communications, and wave physics.

Future work may extend the approach to higher-dimensional PDE systems and relax the assumption that the number of hidden variables is known; see [arXiv:2107.10879](https://arxiv.org/abs/2107.10879) for the full paper from MIT's IAIFI-affiliated researchers.