DexHub and DART: Towards Internet Scale Robot Data Collection

Authors

Younghyo Park, Jagdeep Singh Bhatia, Lars Ankile, Pulkit Agrawal

Abstract

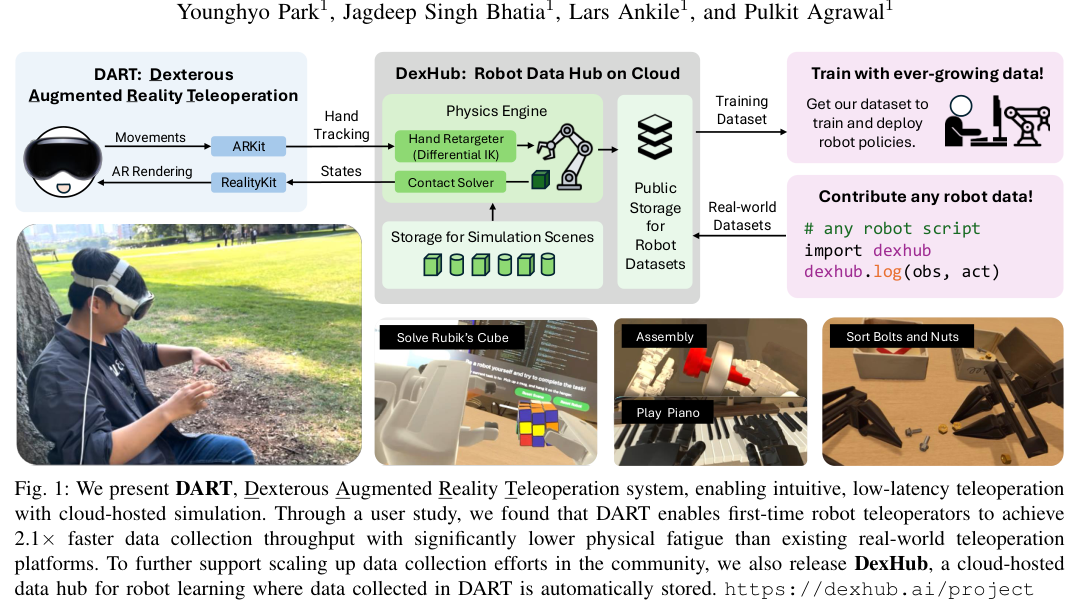

The quest to build a generalist robotic system is impeded by the scarcity of diverse and high-quality data. While real-world data collection efforts exist, requirements for robot hardware, physical environment setups, and frequent resets significantly impede the scalability needed for modern learning frameworks. We introduce DART, a teleoperation platform designed for crowdsourcing that reimagines robotic data collection by leveraging cloud-based simulation and augmented reality (AR) to address many limitations of prior data collection efforts. Our user studies highlight that DART enables higher data collection throughput and lower physical fatigue compared to real-world teleoperation. We also demonstrate that policies trained using DART-collected datasets successfully transfer to reality and are robust to unseen visual disturbances. All data collected through DART is automatically stored in our cloud-hosted database, DexHub, which will be made publicly available upon curation, paving the path for DexHub to become an ever-growing data hub for robot learning. Videos are available at: https://dexhub.ai/project

Concepts

The Big Picture

Right now, training a robot is like teaching someone to cook by locking them in a single kitchen with one set of dishes, then making them reset every pot and pan between attempts. Scale that to thousands of learners, each in their own cramped space, and the exhaustion adds up fast.

Building robots that handle real-world unpredictability requires vast training data. Image recognition and language models only became truly capable once researchers pooled enormous shared datasets: millions of labeled photos, billions of web pages. Robot learning needs the same scale.

But robot data doesn’t grow on the web. Someone has to physically move a robot arm, guide it through a task, then reset every object by hand. Hundreds of times, for a single skill. Hardware costs price out most contributors, and scaling up task complexity only makes it worse.

Researchers at MIT’s Improbable AI Lab have a different approach. Their system, DART (Dexterous Augmented Reality Teleoperation), lets anyone demonstrate robot skills through an augmented reality interface connected to a cloud simulation. No robot hardware, no physical resets, no lab access.

Key Insight: By moving robot teleoperation into AR-connected cloud simulation, DART separates data collection from physical infrastructure. Crowdsourced robot learning datasets could then grow the way language and image data grow online.

How It Works

DART rethinks the data collection pipeline. Instead of physically controlling a robot arm, an operator uses a smartphone or AR headset to view and manipulate a high-fidelity simulated environment. The cloud handles all physics and rendering, while the AR overlay gives operators a detailed, occlusion-minimized view (objects don’t block each other from the camera) that often beats what a physical camera setup provides.

This eliminates three bottlenecks in traditional teleoperation:

- Environment setup: No physical lab or robot required. The scene exists in simulation and configures on demand.

- Limited observability: AR rendering gives operators a rich, adjustable view. No camera occlusions, no blurring from video compression.

- Resetting: Hit a button. The environment resets instantly, with randomized object configurations for built-in diversity.
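The one-button reset with randomized object configurations can be sketched in a few lines. This is a minimal illustration, not DART's implementation: the `ObjectPose` type, the `reset_scene` function, the table bounds, and the minimum spacing are all hypothetical, and a real simulator would check collisions using object geometry rather than a fixed gap.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class ObjectPose:
    """Planar pose of one object on the table (hypothetical schema)."""
    name: str
    x: float    # metres, table frame
    y: float    # metres, table frame
    yaw: float  # radians


def reset_scene(object_names, rng, x_range=(-0.3, 0.3), y_range=(-0.2, 0.2),
                min_gap=0.08, max_tries=1000):
    """Sample a fresh layout by rejection sampling.

    Each object gets a uniform random position and yaw; a candidate
    position is rejected if it lies closer than `min_gap` to any object
    already placed. All ranges are assumed values for illustration.
    """
    poses = []
    for name in object_names:
        for _ in range(max_tries):
            x = rng.uniform(*x_range)
            y = rng.uniform(*y_range)
            if all(math.hypot(x - p.x, y - p.y) >= min_gap for p in poses):
                yaw = rng.uniform(-math.pi, math.pi)
                poses.append(ObjectPose(name, x, y, yaw))
                break
        else:
            raise RuntimeError(f"could not place {name!r} without overlap")
    return poses


if __name__ == "__main__":
    rng = random.Random(0)  # seeded so a layout can be reproduced
    for pose in reset_scene(["mug", "plate", "spoon"], rng):
        print(pose)
```

Passing an explicit `random.Random` instance keeps each reset reproducible from its seed, which is useful if a demonstration ever needs to be replayed from the same starting layout.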

The interface is deliberately game-like. If teleoperation feels like playing a video game rather than operating lab equipment, the pool of potential data contributors gets a lot bigger.

All demonstrations flow into DexHub, a cloud-hosted database that the authors plan to make publicly available once the data is curated. It is designed to grow continuously, more a living repository than a static dataset release.

Why It Matters

In the authors' user study, DART achieves 2.1× higher data collection throughput than real-world teleoperation, with significantly lower physical and cognitive fatigue. Operators no longer have to constantly switch between controlling the robot and resetting objects, a mental tax that compounds over long sessions.
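To see what a 2.1× throughput gain means per demonstration, the arithmetic can be worked out directly. The sketch below assumes throughput is measured as demonstrations per unit time, so the average time per demonstration is its reciprocal; the function name is illustrative.

```python
def relative_time_per_demo(throughput_ratio: float) -> float:
    """Average time per demonstration relative to the baseline.

    Assumes throughput = demonstrations per unit time, so time per
    demonstration scales as 1 / throughput.
    """
    if throughput_ratio <= 0:
        raise ValueError("throughput ratio must be positive")
    return 1.0 / throughput_ratio


ratio = 2.1  # DART vs. real-world teleoperation, from the user study
rel = relative_time_per_demo(ratio)
print(f"time per demo vs. baseline: {rel:.3f}")  # 0.476
print(f"time saved per demo: {(1 - rel) * 100:.1f}%")  # 52.4%
```

Under that assumption, each demonstration takes a little under half as long as it would with real-world teleoperation.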

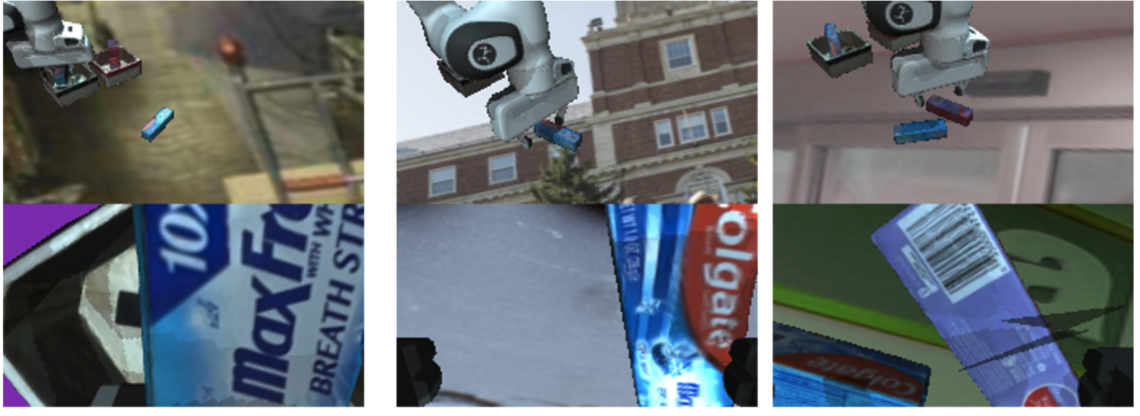

Simulation also opens up training strategies that are impossible with real-world data. Domain randomization (varying lighting, textures, object positions, and physics parameters) produces learned policies that hold up better to visual disturbances than those trained on real demonstrations.
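Domain randomization amounts to drawing a new set of scene parameters for every rendered episode. The sketch below illustrates the idea only: the `SceneParams` fields, the texture names, and every numeric range are assumptions chosen for the example, not values from the paper.

```python
import random
from dataclasses import dataclass

# Hypothetical texture pool; a real pipeline would load image assets.
TEXTURES = ["wood", "marble", "checker", "plain_grey"]


@dataclass(frozen=True)
class SceneParams:
    """One randomized draw of visual and physical scene parameters."""
    light_intensity: float  # relative to nominal lighting
    table_texture: str
    object_dx: float        # metres, offset from nominal position
    object_dy: float        # metres, offset from nominal position
    friction: float         # sliding friction coefficient


def sample_scene_params(rng: random.Random) -> SceneParams:
    """Draw each parameter independently from an assumed uniform range."""
    return SceneParams(
        light_intensity=rng.uniform(0.5, 1.5),
        table_texture=rng.choice(TEXTURES),
        object_dx=rng.uniform(-0.05, 0.05),
        object_dy=rng.uniform(-0.05, 0.05),
        friction=rng.uniform(0.6, 1.2),
    )


if __name__ == "__main__":
    rng = random.Random(42)
    # One draw per training episode: the policy never sees the same
    # lighting, texture, layout, and friction combination twice.
    for episode in range(3):
        print(episode, sample_scene_params(rng))
```

Because the policy trains across many such draws, it cannot rely on any single lighting condition or texture, which is the intuition behind the robustness gains described above.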

In transfer experiments, DART-trained policies moved successfully to physical robots and outperformed their real-world-trained counterparts on robustness to unseen visual conditions. In other words, data collected without any physical robot produced policies that were more robust to visual changes than those trained on data collected with one.

The largest robot datasets today (on the order of 60,000 to 110,000 task recordings from major lab efforts) represent enormous labor investments that still fall short of what modern learning frameworks need. If DART and DexHub scale as intended, the bottleneck shifts from logistics to curation.

Open questions remain. How well does simulation fidelity hold up for increasingly complex manipulation tasks? How much does the sim-to-real gap grow when tasks demand finer contact dynamics, like threading a needle versus stacking dishes? How will DexHub maintain data quality at internet scale, where contributor skill varies enormously? These are real challenges, but the right ones to have.

Bottom Line: DART roughly doubles data collection throughput (2.1× in the user study) while reducing the physical burden of robot teleoperation, and the policies it trains are more robust to unseen visual disturbances than those trained on real-world data. If DexHub scales, it could be to robot learning what ImageNet was to computer vision.

IAIFI Research Highlights

The work connects robotics, computer graphics, and machine learning, using AR-based simulation as a physics-faithful proxy for real-world robot interaction and opening the door to reinforcement learning approaches that static real-world datasets cannot support.

Crowdsourced simulation data, combined with domain randomization, can produce policies that outperform those trained on real-world demonstrations in robustness to visual distribution shift. This challenges the assumption that physical data is always preferable.

By training policies in simulation that transfer robustly to physical systems, the work probes how learned representations can generalize across the boundary between virtual and physical dynamics.

The team plans to make DexHub publicly available once the data is curated; the project and associated data are described at dexhub.ai, with the full paper at [arXiv:2411.02214](https://arxiv.org/abs/2411.02214).

Original Paper Details

DexHub and DART: Towards Internet Scale Robot Data Collection

arXiv:2411.02214

Younghyo Park, Jagdeep Singh Bhatia, Lars Ankile, Pulkit Agrawal
