Data Compression and Inference in Cosmology with Self-Supervised Machine Learning

Authors

Aizhan Akhmetzhanova, Siddharth Mishra-Sharma, Cora Dvorkin

Abstract

The influx of massive amounts of data from current and upcoming cosmological surveys necessitates compression schemes that can efficiently summarize the data with minimal loss of information. We introduce a method that leverages the paradigm of self-supervised machine learning in a novel manner to construct representative summaries of massive datasets using simulation-based augmentations. Deploying the method on hydrodynamical cosmological simulations, we show that it can deliver highly informative summaries, which can be used for a variety of downstream tasks, including precise and accurate parameter inference. We demonstrate how this paradigm can be used to construct summary representations that are insensitive to prescribed systematic effects, such as the influence of baryonic physics. Our results indicate that self-supervised machine learning techniques offer a promising new approach for compression of cosmological data as well as its analysis.

Concepts

The Big Picture

Imagine trying to study the entire ocean by collecting water samples, except instead of a few jars, you have a firehose pumping terabytes of data every night. That’s the situation cosmologists face with the next generation of sky surveys. Instruments like the Vera C. Rubin Observatory and the Dark Energy Spectroscopic Instrument (DESI) will map hundreds of millions of galaxies, generating datasets so vast that traditional compression methods buckle.

The problem isn’t just volume. It’s about what you keep when you compress. Discard the wrong information and your measurement of dark energy or the neutrino mass goes blurry. Keep too much and your analysis pipeline grinds to a halt.

Cosmologists have long relied on hand-crafted “summary statistics” like power spectra, which capture how matter clumps together at different cosmic scales. These are only as good as the human intuition that designed them, and the power spectrum in particular misses information carried by the non-Gaussian features of the evolved matter distribution.

Aizhan Akhmetzhanova, Siddharth Mishra-Sharma, and Cora Dvorkin at Harvard and MIT found that self-supervised machine learning can automatically discover compact, information-dense summaries that hold up against messy astrophysical contamination.

By treating different simulations of the same universe as “views” of the same underlying reality, the team trained a neural network to compress cosmological maps into tight summary representations, without using explicit parameter labels as training targets. The resulting summaries rival or beat traditional hand-crafted statistics.

How It Works

The approach builds on self-supervised learning. Rather than being fed explicit answers, the model finds structure in unlabeled data on its own. Think of it like learning to recognize faces not from a labeled photo album, but by noticing that two photos taken seconds apart in different lighting should look similar. Same person, after all.

In cosmology, the equivalent of “two photos of the same person” is two simulations that share the same cosmological parameters (like the matter density Ω_m or the amplitude of fluctuations σ_8) but differ in their baryonic physics: the messy details of how gas, stars, and black holes behave inside galaxies. Those differences are like lighting changes, real but irrelevant to the cosmology you’re trying to measure.

The algorithm they use is VICReg (Variance-Invariance-Covariance Regularization), which trains a neural network encoder to satisfy three objectives at once:

- Invariance: Maps with the same cosmological parameters should produce similar summary vectors, regardless of baryonic details

- Variance: The summaries should spread out and use all available dimensions, avoiding collapsed, uninformative representations

- Covariance: Different dimensions of the summary should carry independent information, not redundant copies
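The three objectives above can be combined into a single loss. The sketch below is a minimal NumPy version, assuming the term definitions and default weights (25, 25, 1) of the original VICReg formulation. The function and argument names are illustrative, and the weights are not necessarily the ones the authors used. Here `z_a` and `z_b` hold the summaries of the two views of each positive pair, one row per pair.

```python
import numpy as np

def variance_term(z, gamma=1.0, eps=1e-4):
    """Hinge penalty that activates when a summary dimension's std falls below gamma."""
    std = np.sqrt(z.var(axis=0, ddof=1) + eps)
    return np.mean(np.maximum(0.0, gamma - std))

def covariance_term(z):
    """Sum of squared off-diagonal covariances, scaled by the summary dimension."""
    n, d = z.shape
    centered = z - z.mean(axis=0)
    cov = centered.T @ centered / (n - 1)
    off_diagonal = cov - np.diag(np.diag(cov))
    return np.sum(off_diagonal ** 2) / d

def vicreg_loss(z_a, z_b, inv_weight=25.0, var_weight=25.0, cov_weight=1.0):
    """Weighted sum of the invariance, variance, and covariance terms (weights are assumptions)."""
    # Invariance: paired summaries should coincide.
    invariance = np.mean(np.sum((z_a - z_b) ** 2, axis=1))
    # Variance and covariance are applied to each branch separately.
    variance = variance_term(z_a) + variance_term(z_b)
    covariance = covariance_term(z_a) + covariance_term(z_b)
    return inv_weight * invariance + var_weight * variance + cov_weight * covariance

rng = np.random.default_rng(0)
spread = rng.normal(size=(512, 4))   # well-spread, roughly decorrelated summaries
collapsed = np.zeros((512, 4))       # every input mapped to the same vector
print("spread   :", vicreg_loss(spread, spread))
print("collapsed:", vicreg_loss(collapsed, collapsed))
```

A collapsed encoder satisfies invariance trivially, which is why the variance term is needed: in this sketch the collapsed summaries receive a much larger loss than the well-spread ones.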

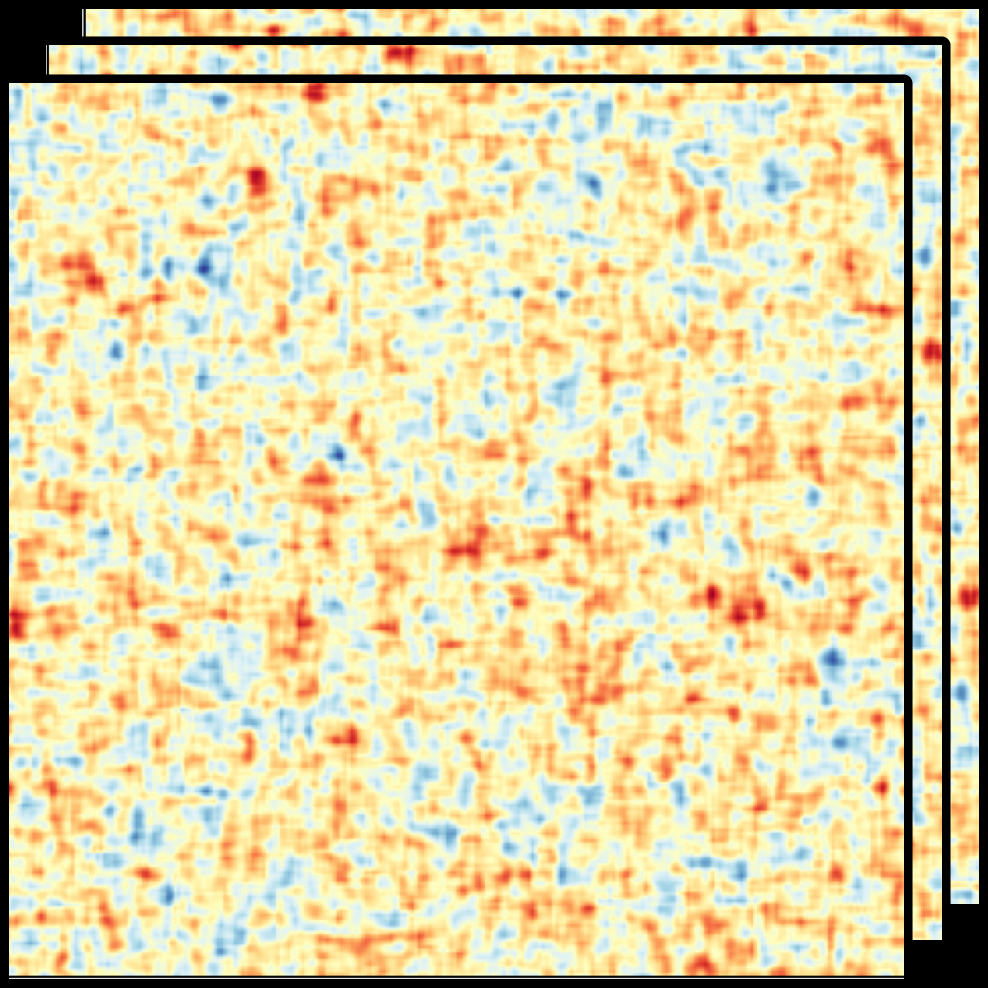

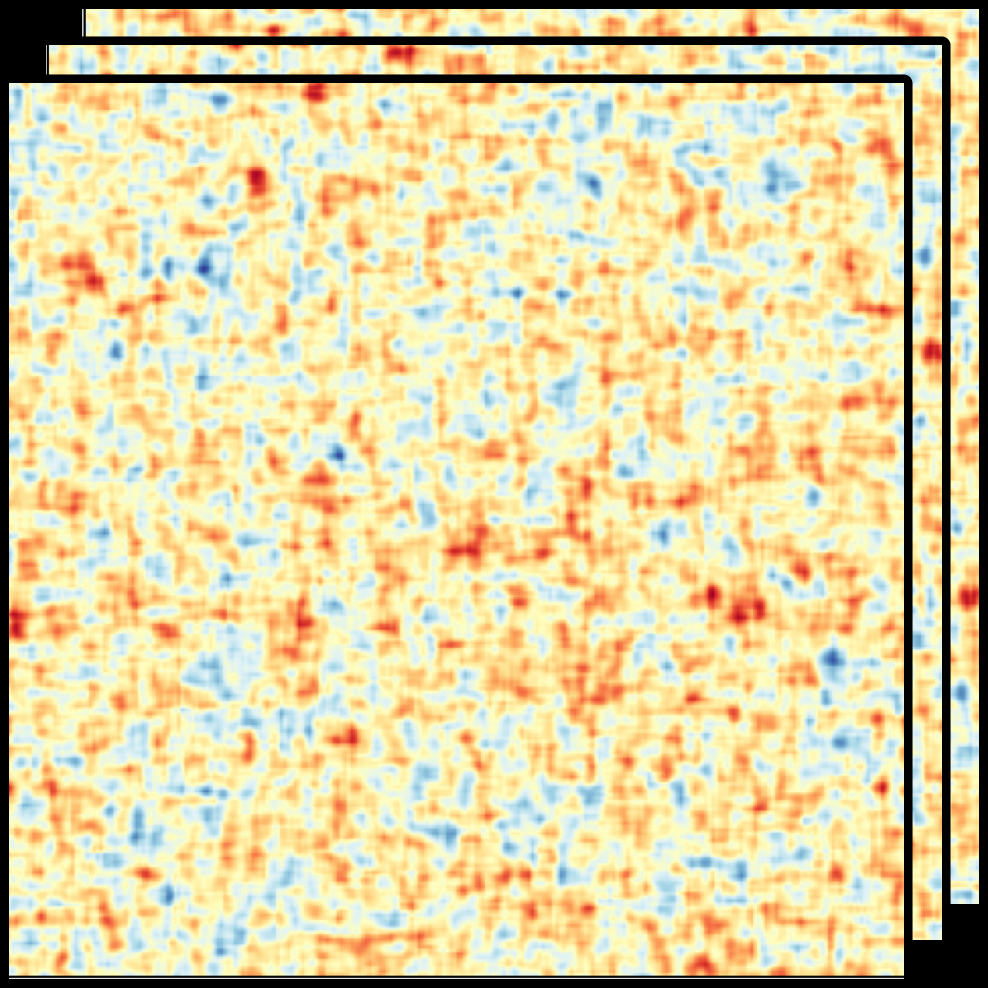

Training data comes from the CAMELS cosmological simulation suite, a library of thousands of simulated universe snapshots spanning a wide range of cosmological and astrophysical parameters. Each simulation produces a matter density map: a 2D or 3D snapshot of how mass is distributed across a patch of cosmic volume. Pairs of simulations with matched cosmological parameters but different astrophysical ones become positive training pairs, the cosmological version of “same face, different lighting.”
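The pairing step can be sketched in a few lines of standard-library Python. This is a hypothetical illustration, not the authors' data loader: the catalog layout, the keys `map_id`, `cosmo`, and `astro`, and the function `make_positive_pairs` are all assumptions made for the example.

```python
import random
from collections import defaultdict

def make_positive_pairs(catalog, seed=0):
    """Draw one positive pair per cosmology from a list of map records.

    Each record is a dict with hypothetical keys:
      'map_id' - identifier of the density map
      'cosmo'  - tuple of cosmological parameters, e.g. (Omega_m, sigma_8)
      'astro'  - tuple of astrophysical (baryonic) parameters
    """
    rng = random.Random(seed)
    by_cosmology = defaultdict(list)
    for record in catalog:
        by_cosmology[record["cosmo"]].append(record)

    pairs = []
    for group in by_cosmology.values():
        if len(group) < 2:
            continue  # a lone map has no partner to pair with
        first, second = rng.sample(group, 2)
        pairs.append((first["map_id"], second["map_id"]))
    return pairs

# Made-up catalog entries, for illustration only.
catalog = [
    {"map_id": "sim0_a", "cosmo": (0.30, 0.80), "astro": (1.0, 1.0)},
    {"map_id": "sim0_b", "cosmo": (0.30, 0.80), "astro": (2.0, 0.5)},
    {"map_id": "sim1_a", "cosmo": (0.25, 0.90), "astro": (1.0, 1.0)},
    {"map_id": "sim1_b", "cosmo": (0.25, 0.90), "astro": (0.5, 2.0)},
    {"map_id": "sim2_a", "cosmo": (0.35, 0.70), "astro": (1.0, 1.0)},
]
pairs = make_positive_pairs(catalog)
print(pairs)
```

Grouping by the cosmology tuple is what encodes the physics: anything that differs within a group (here the `astro` values) is treated as nuisance variation the encoder should learn to ignore.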

From each map, the encoder produces a low-dimensional vector (a handful of numbers) that feeds into a simulation-based inference (SBI) pipeline, which estimates the posterior distribution of the parameters without an explicit likelihood function. The self-supervised summaries match or exceed the performance of a fully supervised neural network trained with explicit parameter labels, and they clearly outperform traditional power spectrum statistics.
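To make the idea of likelihood-free inference on compressed summaries concrete, here is a toy sketch using rejection sampling. Rejection sampling is a swapped-in technique chosen only because it fits in a few lines; SBI pipelines in practice commonly use neural density estimators instead. The simulator, the one-number "encoder", the prior range, and the tolerance are all assumptions made for the example.

```python
import numpy as np

def simulate(theta, rng, n_pix=256):
    """Toy simulator (assumption): a noisy 1D 'map' whose mean is theta."""
    return theta + rng.normal(size=n_pix)

def summarize(x):
    """Toy stand-in for the trained encoder: compress a map to a single number."""
    return np.array([x.mean()])

def rejection_abc(observed_summary, n_sims=20_000, tol=0.02, seed=0):
    """Keep prior draws whose simulated summary lands within tol of the observed one."""
    rng = np.random.default_rng(seed)
    thetas = rng.uniform(0.1, 0.5, size=n_sims)  # flat prior over an arbitrary toy range
    summaries = np.array([summarize(simulate(t, rng)) for t in thetas])
    distances = np.linalg.norm(summaries - observed_summary, axis=1)
    return thetas[distances < tol]

# "Observe" one map generated at theta = 0.3, then infer theta from its summary alone.
rng = np.random.default_rng(1)
x_obs = simulate(0.3, rng)
posterior = rejection_abc(summarize(x_obs))
print(f"{posterior.size} accepted, mean={posterior.mean():.3f}, std={posterior.std():.3f}")
```

The accepted draws approximate the posterior over `theta`. No likelihood is ever evaluated: the only requirements are the ability to simulate and a summary compact enough that simulated and observed summaries can be compared.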

The real test is systematics. By designing augmentations around baryonic variations, the team trained a version of the model that is nearly blind to astrophysical contamination.

When the supernova and AGN feedback parameters were deliberately varied, the baryonic-invariant summaries barely flinched and still recovered the cosmological parameters accurately. (AGN, or active galactic nuclei, are the bright cores of galaxies powered by accreting supermassive black holes.) The model learned to see the skeleton of the universe through all the astrophysics layered on top.

Why It Matters

Simulation-based inference is one of the leading frameworks for next-generation cosmological analysis because it sidesteps the need to write down an explicit likelihood function, a task that becomes essentially impossible when data is complex and non-Gaussian. But SBI has a well-known weakness: the curse of dimensionality. The more numbers you feed it, the more simulations you need. Good compression isn’t just convenient. It’s necessary for the whole program to work.
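A toy calculation shows how quickly the simulation budget is eaten up as the summary grows. The sketch below assumes summaries drawn from a standard normal distribution and a fixed matching tolerance; both are arbitrary choices for illustration and say nothing about any specific SBI method. It measures what fraction of random simulations land close to a target summary as the dimension increases.

```python
import numpy as np

def acceptance_fraction(dim, n_sims=100_000, tol=0.5, seed=0):
    """Fraction of random dim-dimensional summaries within tol of a target at the origin."""
    rng = np.random.default_rng(seed)
    summaries = rng.normal(size=(n_sims, dim))
    return float(np.mean(np.linalg.norm(summaries, axis=1) < tol))

for dim in (1, 2, 5, 10):
    print(dim, acceptance_fraction(dim))
```

The fraction of useful simulations falls steeply with dimension, so keeping the same number of matches requires correspondingly more simulations. A compressor that reduces a map to a handful of numbers keeps that cost manageable.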

Self-supervised methods offer compression that scales with data complexity and can, in principle, be applied to diverse data types, from density maps to weak lensing shear fields to 21-cm maps. The simulation-based augmentation is what makes it tick: the compression automatically absorbs whatever systematics are included in the simulation library, with no new labels or manual feature engineering needed. As the simulations improve, the method improves with them.

Open questions remain. The current demonstrations use 2D projected maps; extending to full 3D cosmic volumes would increase both the potential information gain and the computational challenge. The method also inherits whatever limitations exist in the underlying simulations. If the simulations miss important physics, the learned summaries may too. Real validation will require testing on actual observational data.

For the next generation of cosmological surveys, self-supervised compression that is both informative and resilient to astrophysical contamination could make the difference between drowning in data and extracting real science from it.

IAIFI Research Highlights

Self-supervised representation learning matured in computer vision. Here it becomes a physics-informed compression tool for cosmology, directly connecting modern AI techniques to questions about the large-scale structure of the universe.

The simulation-based augmentation strategy provides a recipe for self-supervised learning in scientific domains. Instead of the usual image transforms (crops, color jitter), physically motivated model variations define what counts as a meaningful "view" of the data.

Precise, systematics-resistant inference of cosmological parameters from massive survey datasets tightens constraints on dark matter, dark energy, and neutrino mass. Upcoming experiments like Euclid and LSST stand to benefit directly.

Future work targets full 3D density fields, real observational data, and integration with sequential SBI pipelines; the paper is available at [arXiv:2308.09751](https://arxiv.org/abs/2308.09751).

Original Paper Details

Data Compression and Inference in Cosmology with Self-Supervised Machine Learning

2308.09751

Aizhan Akhmetzhanova, Siddharth Mishra-Sharma, Cora Dvorkin
