Classical Shadows for Quantum Process Tomography on Near-term Quantum Computers

Authors

Ryan Levy, Di Luo, Bryan K. Clark

Abstract

Quantum process tomography is a powerful tool for understanding quantum channels and characterizing properties of quantum devices. Inspired by recent advances using classical shadows in quantum state tomography [H.-Y. Huang, R. Kueng, and J. Preskill, Nat. Phys. 16, 1050 (2020).], we have developed ShadowQPT, a classical shadow method for quantum process tomography. We introduce two related formulations with and without ancilla qubits. ShadowQPT stochastically reconstructs the Choi matrix of the device allowing for an a posteriori classical evaluation of the device on arbitrary inputs with respect to arbitrary outputs. Using shadows we then show how to compute overlaps, generate all $k$-weight reduced processes, and perform reconstruction via Hamiltonian learning. These latter two tasks are efficient for large systems as the number of quantum measurements needed scales only logarithmically with the number of qubits. A number of additional approximations and improvements are developed including the use of a pair-factorized Clifford shadow and a series of post-processing techniques which significantly enhance the accuracy for recovering the quantum channel. We have implemented ShadowQPT using both Pauli and Clifford measurements on the IonQ trapped ion quantum computer for quantum processes up to $n=4$ qubits and achieved good performance.

Concepts

The Big Picture

Imagine trying to understand a mysterious machine by feeding it inputs and observing outputs. Simple enough, until the machine obeys quantum mechanics.

In the quantum world, measuring disturbs what you’re measuring. That’s not a flaw in your instruments; it’s a fundamental rule of physics. As a result, the number of measurements needed to fully characterize a quantum device grows exponentially with its number of quantum bits. Fully mapping a 100-qubit quantum device with traditional methods would require more experiments than there are atoms in the observable universe.

This is the problem at the heart of quantum process tomography (QPT): characterizing what a quantum device does, not just for one specific input, but for any possible input. QPT is essential for building trustworthy quantum computers, but its costs have historically been staggering.

Ryan Levy, Di Luo, and Bryan K. Clark have developed ShadowQPT, a new approach that slashes these costs using a technique called classical shadows.

ShadowQPT extends the classical shadows technique from quantum states to quantum processes. For a range of practical tasks, such as extracting every $k$-qubit reduced process or learning a Hamiltonian, the number of measurements grows only logarithmically with the number of qubits rather than exponentially.

How It Works

Classical shadows, introduced by Huang, Kueng, and Preskill in 2020, work like a statistical compression scheme. Instead of measuring every property of a quantum state directly, you apply random quantum operations, measure in a fixed basis, and store compact “shadow” records. Later, you estimate many different properties from those records without returning to the quantum device. It’s like taking quick snapshots from random angles and using geometry to reconstruct a 3D object.
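To make the "measure randomly, invert later" idea concrete, here is a minimal single-qubit sketch of a random-Pauli classical shadow for a quantum state. It is an illustration in NumPy, not code from the paper: the function names (`snapshot`, `shadow_estimate`) and the simulated measurement are assumptions made for this example. The inversion formula `3 U†|b><b|U - I` is the standard single-qubit result from Huang, Kueng, and Preskill.

```python
import numpy as np

rng = np.random.default_rng(0)

I2 = np.eye(2, dtype=complex)
H = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)
S_DAG = np.array([[1, 0], [0, -1j]], dtype=complex)
# Rotations that map the X, Y, Z eigenbases onto the computational basis.
BASIS_ROTATIONS = [H, H @ S_DAG, I2]


def snapshot(rho):
    """Simulate one random-Pauli measurement of rho and invert it."""
    U = BASIS_ROTATIONS[rng.integers(3)]
    probs = np.clip(np.real(np.diag(U @ rho @ U.conj().T)), 0, None)
    b = rng.choice(2, p=probs / probs.sum())
    ket = U.conj().T[:, b]                      # U^dagger |b>
    # Inverse of the single-qubit random-Pauli measurement channel.
    return 3 * np.outer(ket, ket.conj()) - I2


def shadow_estimate(rho, n_shots):
    """Average many snapshots; the mean converges to rho."""
    return sum(snapshot(rho) for _ in range(n_shots)) / n_shots


# Hypothetical usage: estimate the |+><+| state from 5000 simulated shots.
rho_plus = np.full((2, 2), 0.5, dtype=complex)
estimate = shadow_estimate(rho_plus, 5000)
```

Each individual snapshot is not a valid density matrix (it has a negative eigenvalue); only the average over many snapshots approaches the true state.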

ShadowQPT extends this logic from quantum states to quantum processes. The bridge is the Choi matrix, a mathematical object that encodes an entire quantum channel as a single quantum state in a doubled space. Apply shadow tomography to that doubled-up state, and you recover everything about the underlying process.
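The sketch below shows, under one common convention, how a Choi matrix is built from a channel's Kraus operators and how it can then be used classically to apply the channel or compute an overlap. The convention (unnormalized, input factor first), the helper names, and the amplitude-damping example are assumptions chosen for illustration; the paper may use a different normalization or ordering.

```python
import numpy as np


def choi_matrix(kraus_ops):
    """Unnormalized Choi matrix  sum_ij |i><j| (x) E(|i><j|)  (square Kraus operators)."""
    d = kraus_ops[0].shape[1]
    choi = np.zeros((d * d, d * d), dtype=complex)
    for i in range(d):
        for j in range(d):
            e_ij = np.zeros((d, d), dtype=complex)
            e_ij[i, j] = 1.0
            out = sum(K @ e_ij @ K.conj().T for K in kraus_ops)
            choi += np.kron(e_ij, out)
    return choi


def apply_via_choi(choi, rho):
    """Evaluate E(rho) = Tr_in[(rho^T (x) I) choi] without touching the device."""
    d = rho.shape[0]
    m = (np.kron(rho.T, np.eye(d)) @ choi).reshape(d, d, d, d)
    return np.einsum("iaib->ab", m)             # partial trace over the input factor


def overlap(choi, rho, sigma):
    """Tr[sigma E(rho)] written as a single trace against the Choi matrix."""
    return np.trace(np.kron(rho.T, sigma) @ choi)


# Hypothetical example: amplitude damping with decay probability gamma = 0.3.
gamma = 0.3
kraus = [
    np.array([[1, 0], [0, np.sqrt(1 - gamma)]], dtype=complex),
    np.array([[0, np.sqrt(gamma)], [0, 0]], dtype=complex),
]
choi = choi_matrix(kraus)
excited = np.array([[0, 0], [0, 1]], dtype=complex)
ground = np.array([[1, 0], [0, 0]], dtype=complex)
decayed = apply_via_choi(choi, excited)         # approximately diag(0.3, 0.7)
```

Once the Choi matrix (or an estimate of it) is stored, any input state and any output observable can be evaluated after the fact, which is what the a posteriori evaluation in the abstract refers to.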

The team develops two formulations: one using ancilla qubits (extra quantum bits entangled with the input) and one that applies random operations on both input and output sides without ancillas.

The ancilla-free procedure runs in five steps:

- Prepare random input states by applying random quantum operations to a fixed reference state.

- Run the quantum process on those inputs.

- Apply random measurement operations and record outcomes in the computational basis.

- Store classical shadow records, compact descriptions of each measurement outcome.

- Post-process classically to estimate properties of the Choi matrix.
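The five steps above can be sketched as a small single-qubit simulation. This is a toy illustration under stated assumptions, not the authors' implementation: it uses random Pauli eigenstates as inputs, random Pauli bases for the output measurement, and the single-qubit inversion `3P - I` on each side, with a transpose on the input side so that the average converges to the normalized Choi matrix (input factor first). The names `shadow_qpt`, `channel`, and the helpers are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)

I2 = np.eye(2, dtype=complex)
H = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)
S_DAG = np.array([[1, 0], [0, -1j]], dtype=complex)
ROTATIONS = [H, H @ S_DAG, I2]                  # X, Y, Z measurement bases


def random_pauli_eigenstate():
    """Step 1: a uniformly random eigenstate of X, Y, or Z."""
    U = ROTATIONS[rng.integers(3)]
    return U.conj().T[:, rng.integers(2)]


def measure_random_pauli(rho):
    """Step 3: simulate a measurement in a random Pauli basis; return the outcome ket."""
    U = ROTATIONS[rng.integers(3)]
    probs = np.clip(np.real(np.diag(U @ rho @ U.conj().T)), 0, None)
    b = rng.choice(2, p=probs / probs.sum())
    return U.conj().T[:, b]


def inverted(ket):
    """Single-qubit inverse of the random-Pauli measurement channel."""
    return 3 * np.outer(ket, ket.conj()) - I2


def shadow_qpt(channel, n_shots):
    """Estimate the normalized Choi matrix of a single-qubit `channel` (rho -> rho')."""
    total = np.zeros((4, 4), dtype=complex)
    for _ in range(n_shots):
        psi_in = random_pauli_eigenstate()                    # step 1
        rho_out = channel(np.outer(psi_in, psi_in.conj()))    # step 2
        psi_out = measure_random_pauli(rho_out)               # step 3
        # Steps 4-5: the pair (psi_in, psi_out) is the stored record;
        # post-processing turns it into one snapshot of the Choi matrix.
        total += np.kron(inverted(psi_in).T, inverted(psi_out))
    return total / n_shots


# Hypothetical usage: a noiseless Hadamard gate as the unknown process.
def hadamard_channel(rho):
    return H @ rho @ H.conj().T

choi_estimate = shadow_qpt(hadamard_channel, 2000)
```

With few shots the estimate is noisy and generally not positive semidefinite, which is why the post-processing described later in the article matters.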

The real win for hardware comes from the pair-factorized Clifford shadow. Global random Clifford gates are mathematically convenient but expensive to implement across all qubits at once. Instead, the team applies random two-qubit Clifford gates to neighboring pairs. This is far more tractable on real hardware while preserving most of the statistical benefits.

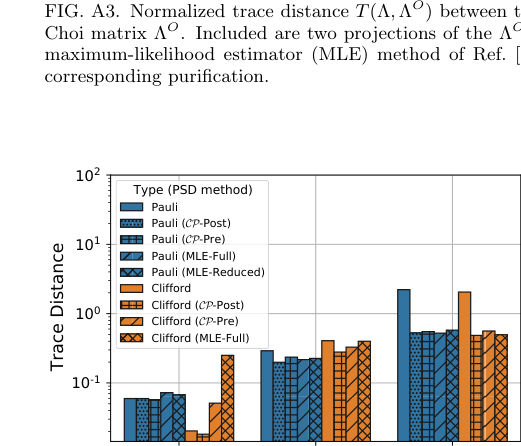

Raw shadow reconstructions can be unphysical: the estimated Choi matrix may have negative eigenvalues, which would correspond to negative probabilities, or other artifacts. The team addresses this by projecting the reconstructed Choi matrix back into the space of valid physical channels and applying a purification step. On noisy hardware, these corrections matter.
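As a rough illustration of what such post-processing can look like, the sketch below projects a Hermitian, trace-one matrix onto the nearest positive semidefinite matrix with unit trace using the eigenvalue-truncation scheme of Smolin, Gambetta, and Smith, and then "purifies" by keeping only the dominant eigenvector. Both choices are assumptions made for this example: the article does not spell out the authors' exact projection, this version does not enforce trace preservation of the channel, and keeping one eigenvector is only sensible when the target process is expected to be close to unitary.

```python
import numpy as np


def project_to_density_matrix(m):
    """Nearest (Frobenius norm) PSD, trace-one matrix to a Hermitian input."""
    m = (m + m.conj().T) / 2                    # enforce Hermiticity
    m = m / np.trace(m).real                    # enforce unit trace
    vals, vecs = np.linalg.eigh(m)              # ascending eigenvalues
    vals, vecs = vals[::-1], vecs[:, ::-1]      # sort descending
    new_vals = np.zeros(len(vals))
    acc = 0.0
    keep = len(vals)
    # Drop the most negative eigenvalues and spread their weight over the rest.
    while keep > 0 and vals[keep - 1] + acc / keep < 0:
        acc += vals[keep - 1]
        keep -= 1
    new_vals[:keep] = vals[:keep] + acc / keep
    return (vecs * new_vals) @ vecs.conj().T


def purify(rho):
    """Keep only the dominant eigenvector (rank-one approximation)."""
    vals, vecs = np.linalg.eigh(rho)
    v = vecs[:, -1]
    return np.outer(v, v.conj())


# Hypothetical noisy estimate with one negative eigenvalue.
noisy = np.diag([0.8, 0.5, -0.3]).astype(complex)
physical = project_to_density_matrix(noisy)     # eigenvalues 0.65, 0.35, 0
pure = purify(physical)
```

The projection leaves the eigenvectors untouched and only reshuffles eigenvalues, so it is cheap for the small Choi matrices relevant to few-qubit experiments.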

The efficiency gains are substantial. For tasks like extracting the behavior of every k-qubit subsystem or learning a Hamiltonian, the number of measurements scales only logarithmically with qubit count, with a prefactor set by k and the target accuracy. For a 100-qubit system, that’s the difference between a factor of ln 100 ≈ 4.6 and an exponential factor such as 2¹⁰⁰ ≈ 10³⁰.
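A few lines of arithmetic show where the logarithm comes from. In classical-shadow bounds the number of shots grows with the logarithm of the number of quantities being estimated at once; for all k-qubit reduced processes on n qubits that number is the binomial coefficient C(n, k), whose logarithm is at most k ln n. The sketch below compares this with the 16^n entries of a full n-qubit Choi matrix. It only illustrates the scaling and omits the prefactors in k and accuracy; the function name is hypothetical.

```python
import math


def scaling_summary(n, k):
    """Return (number of k-qubit subsets, its natural log, entries in the full Choi matrix)."""
    subsets = math.comb(n, k)
    return subsets, math.log(subsets), 16 ** n


for n in (4, 10, 100):
    subsets, log_subsets, full_entries = scaling_summary(n, k=2)
    print(f"n={n:3d}  subsets={subsets:5d}  ln(subsets)={log_subsets:5.2f}  "
          f"full Choi entries={float(full_entries):.1e}")
```

Going from 4 to 100 qubits multiplies the logarithmic factor by less than five, while the size of the full Choi matrix grows by more than a hundred orders of magnitude.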

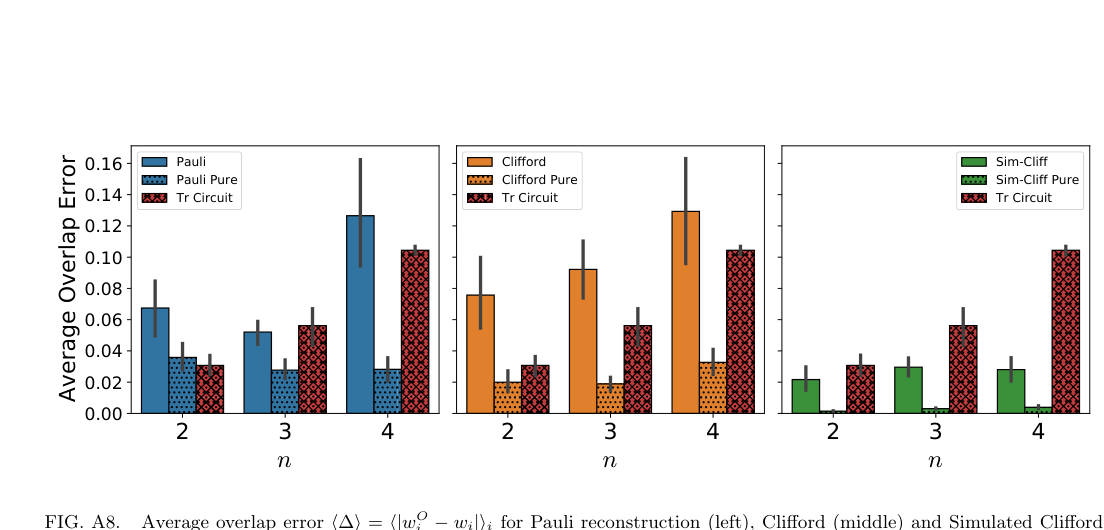

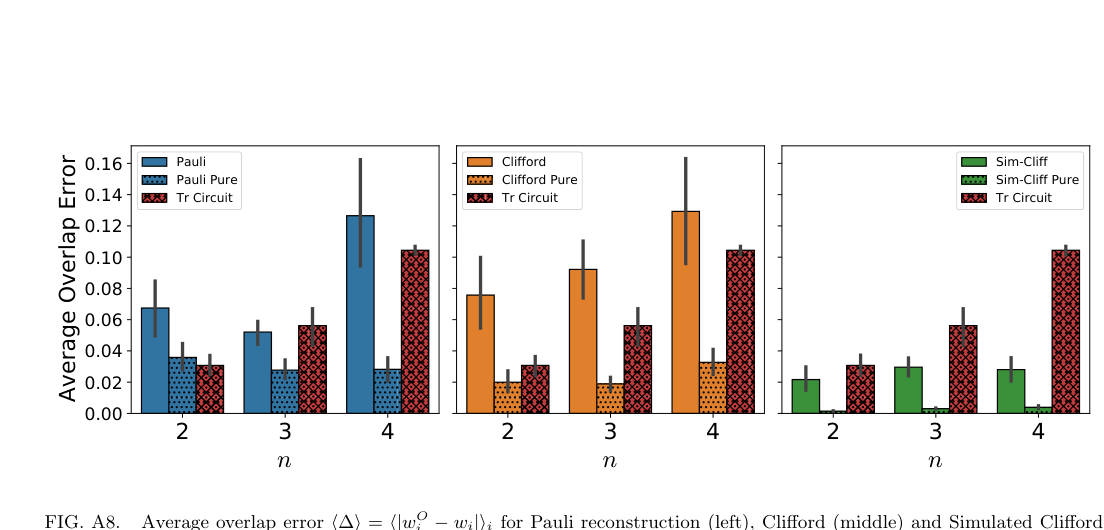

The team validated ShadowQPT on IonQ’s trapped-ion quantum computer, characterizing processes on up to 4 qubits using both Pauli and Clifford measurements. They tested both unitary processes (clean, reversible quantum gates) and non-unitary channels involving noise.

On real hardware, ShadowQPT reconstructions showed good agreement with direct measurements. Clifford shadows outperformed Pauli measurements for full process reconstruction, as theory predicts. The physical projection and purification steps gave a measurable accuracy boost.

The team also demonstrated Hamiltonian learning: reconstructing the physical equations governing a quantum system directly from ShadowQPT data. They recovered parameters of a 1D Ising-type model, with measurement requirements scaling logarithmically in system size.

Why It Matters

Quantum computing is in an era where devices are just large enough to do interesting things but too noisy to run ideal algorithms. Every quantum algorithm on real hardware runs through a noisy quantum channel, not a perfect unitary gate. ShadowQPT gives experimentalists a scalable tool to characterize that channel, to understand not just whether a device is broken, but how it’s broken.

Hamiltonian learning opens a second use case: using quantum computers to study physics itself. If you can efficiently extract the effective Hamiltonian governing a quantum system’s dynamics from process tomography data, near-term hardware becomes a probe of materials, molecules, and field theories. Logarithmic scaling keeps this feasible as systems grow.

Classical shadow methods came out of quantum information theory, but they hold up in the noisier, messier world of NISQ-era (Noisy Intermediate-Scale Quantum) experimentation. ShadowQPT makes that case with real data.

ShadowQPT applies classical shadows to quantum process tomography, delivers exponential measurement savings for tasks of practical interest, and has been validated on real trapped-ion hardware. It brings us closer to being able to characterize and trust near-term quantum devices at scale.

IAIFI Research Highlights

This work brings together quantum information theory, quantum physics, and statistical methods into a practical tool for quantum device characterization, tying theoretical guarantees to real experimental results.

ShadowQPT's logarithmic measurement scaling reflects a broader principle from learning theory: statistical compression techniques can drastically reduce the data needed to learn complex quantum systems.

By enabling efficient Hamiltonian learning from quantum process data, ShadowQPT gives quantum computers a path to reconstructing the physical laws governing quantum many-body systems, directly supporting IAIFI's mission of using AI to probe fundamental physics.

Future work will extend ShadowQPT to larger qubit counts, more complex noise models, and continuous-variable systems; the full paper is available at [arXiv:2110.02965](https://arxiv.org/abs/2110.02965).

Original Paper Details

Classical Shadows for Quantum Process Tomography on Near-term Quantum Computers

2110.02965

Ryan Levy, Di Luo, Bryan K. Clark
