Challenges for Unsupervised Anomaly Detection in Particle Physics

Authors

Katherine Fraser, Samuel Homiller, Rashmish K. Mishra, Bryan Ostdiek, Matthew D. Schwartz

Abstract

Anomaly detection relies on designing a score to determine whether a particular event is uncharacteristic of a given background distribution. One way to define a score is to use autoencoders, which rely on the ability to reconstruct certain types of data (background) but not others (signals). In this paper, we study some challenges associated with variational autoencoders, such as the dependence on hyperparameters and the metric used, in the context of anomalous signal (top and $W$) jets in a QCD background. We find that the hyperparameter choices strongly affect the network performance and that the optimal parameters for one signal are non-optimal for another. In exploring the networks, we uncover a connection between the latent space of a variational autoencoder trained using mean-squared-error and the optimal transport distances within the dataset. We then show that optimal transport distances to representative events in the background dataset can be used directly for anomaly detection, with performance comparable to the autoencoders. Whether using autoencoders or optimal transport distances for anomaly detection, we find that the choices that best represent the background are not necessarily best for signal identification. These challenges with unsupervised anomaly detection bolster the case for additional exploration of semi-supervised or alternative approaches.

Concepts

The Big Picture

Imagine you’re a security guard at the world’s busiest airport, tasked with spotting suspicious behavior. The catch: nobody’s told you what suspicious looks like. All you can do is study the millions of ordinary travelers passing through each day, build up an intuition for “normal,” and hope that anything truly unusual stands out on its own.

That’s the challenge physicists face at the Large Hadron Collider (LHC). Particle collisions produce an overwhelming flood of routine subatomic interactions, the ordinary physics we already understand. The hope is that exotic new physics will announce itself by looking different.

Despite years of searching, the LHC has turned up no clear evidence of new particles beyond the Standard Model (physicists’ current best theory of all known matter and forces). One reason may be that searches have been too narrowly tailored to specific hypothetical signals. A more flexible strategy is unsupervised anomaly detection: train an algorithm only on “boring” background events, then flag anything that looks unusual.

No assumptions about what new physics looks like are needed. But a study from Harvard physicists at IAIFI shows this approach faces deeper challenges than previously appreciated. Katherine Fraser, Samuel Homiller, Rashmish Mishra, Bryan Ostdiek, and Matthew Schwartz systematically probed why a class of neural networks called variational autoencoders struggles with particle physics anomaly detection, and uncovered a geometric insight that points toward better methods.

Key Insight: The choices that best compress and represent background events are not necessarily the choices that best expose signal events as anomalies. This tension sits at the heart of unsupervised anomaly detection.

How It Works

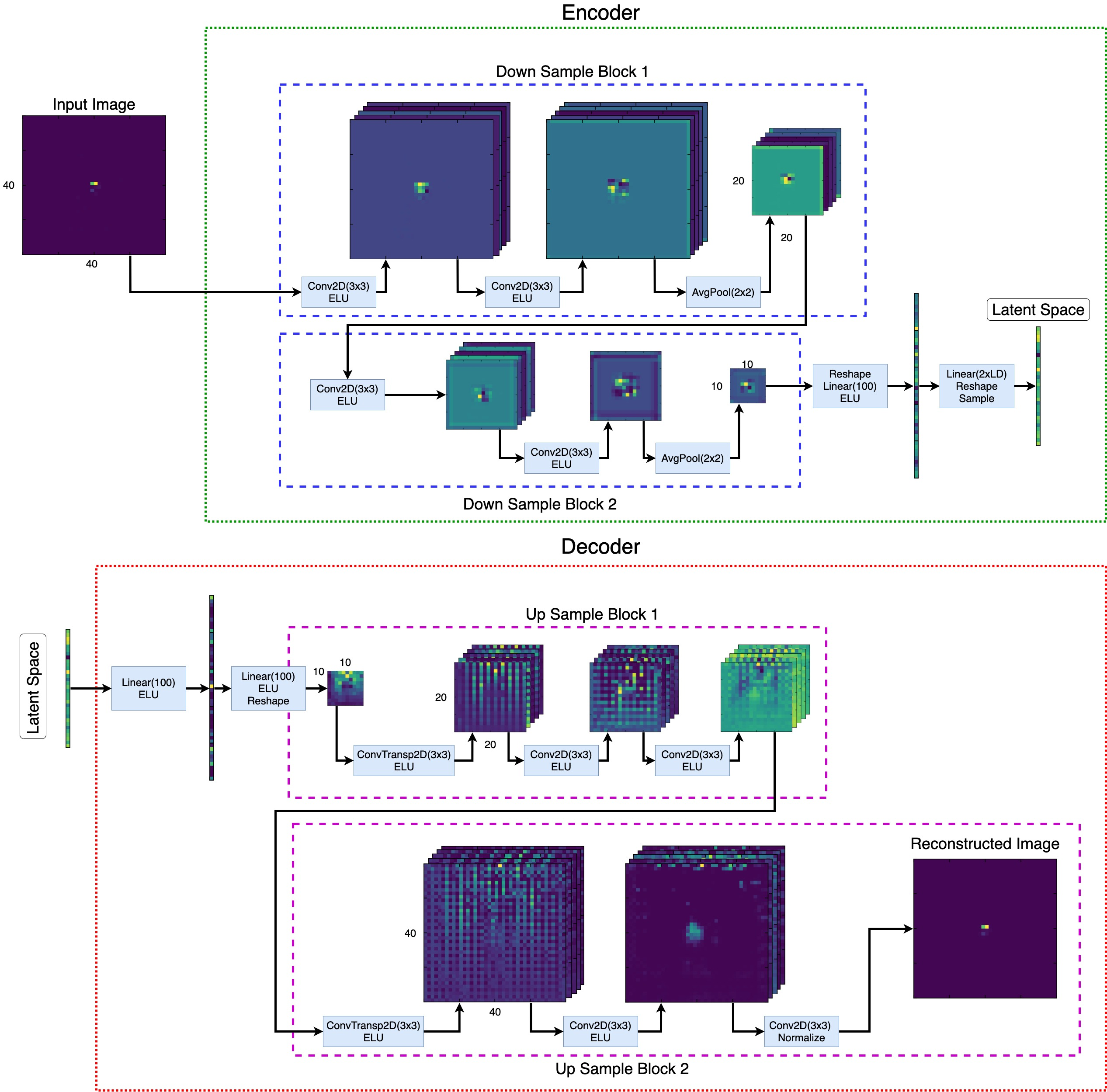

The workhorse of unsupervised anomaly detection in particle physics is the variational autoencoder (VAE), a neural network that learns to compress data into a compact representation and then reconstruct it. Think of it like a lossy photo compressor: squeeze an image down, then expand it back. If the original was typical, the reconstruction will be faithful. If it was unusual, details get lost.

Train a VAE on background-only data and it learns the structure of normal events. When a signal event shows up, the VAE can’t reconstruct it well. The gap between the original and the network’s best attempt, called the reconstruction error, works as an anomaly score.
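The scoring step can be sketched in a few lines. This is a toy illustration, not the paper's network: the `reconstruct` function is a hypothetical stand-in for a trained autoencoder (it simply returns the average background image, the most extreme form of compression), the five-pixel "images" are invented, and mean-squared error is assumed as the reconstruction metric.

```python
def mean_image(images):
    """Pixel-wise average of a list of equally sized, flattened images."""
    n = len(images)
    return [sum(pixels) / n for pixels in zip(*images)]


def mse(a, b):
    """Mean-squared error between two flattened images."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


# Invented toy "jet images": energy fractions in five pixels, one central prong.
background = [
    [0.0, 0.1, 0.8, 0.1, 0.0],
    [0.0, 0.2, 0.7, 0.1, 0.0],
    [0.0, 0.1, 0.7, 0.2, 0.0],
]

# Hypothetical stand-in for a trained autoencoder: it ignores the details of
# its input and always returns the average background image.
template = mean_image(background)


def reconstruct(image):
    return template


def anomaly_score(image):
    """Reconstruction error: large when the model cannot reproduce the input."""
    return mse(image, reconstruct(image))


one_prong = [0.0, 0.1, 0.75, 0.15, 0.0]   # background-like
two_prong = [0.45, 0.05, 0.0, 0.05, 0.45]  # signal-like

print("one-prong score:", anomaly_score(one_prong))
print("two-prong score:", anomaly_score(two_prong))
```

The two-pronged event is reconstructed poorly and therefore receives the larger score. A real VAE replaces `reconstruct` with an encoder and decoder trained on background jets only.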

The team tested this on jets, the sprays of particles produced when quarks and gluons scatter at high energy. Their background consisted of ordinary QCD jets, the routine particle showers that dominate most LHC collisions. Their signals were top quark jets and W boson jets, both with distinctive multi-pronged substructure compared to the smooth QCD baseline. Jets were represented as images: two-dimensional maps of energy deposits in the detector.

Performance depends heavily on hyperparameters, tuning knobs set before training. These include the size of the latent space (the compressed internal representation, analogous to the small file in the photo compressor analogy), the relative weight of the reconstruction loss versus the KL divergence (a penalty that keeps the compressed representation organized), and the distance metric used to measure reconstruction error. Systematic variation of these choices revealed:
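To make the role of the KL weight concrete, one common way to write a VAE training loss is shown below. The symbol $\beta$ and the $(1-\beta)$ versus $\beta$ parametrization are assumptions made here for illustration; this summary does not spell out the exact form used in the paper.

$$
\mathcal{L} = (1-\beta)\,\mathcal{L}_{\text{reco}}(x, \hat{x}) + \beta\, D_{\mathrm{KL}}\big(q(z \mid x)\,\|\,\mathcal{N}(0, I)\big)
$$

For an encoder that outputs a Gaussian with means $\mu_i$ and variances $\sigma_i^2$ in a $d$-dimensional latent space, the KL term has the standard closed form

$$
D_{\mathrm{KL}} = \frac{1}{2}\sum_{i=1}^{d}\left(\mu_i^2 + \sigma_i^2 - \log\sigma_i^2 - 1\right).
$$

In this parametrization, $\beta \to 0$ approaches a plain autoencoder that only minimizes reconstruction error, while larger $\beta$ pushes the latent distribution toward the unit Gaussian at the cost of reconstruction quality.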

- Optimal settings for detecting top jets were often non-optimal for W jets

- Small changes in KL weight dramatically shifted performance, sometimes reversing which signal the network could best identify

- No single configuration worked best for all signals simultaneously
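Comparing configurations requires a performance measure for each signal. A common choice, assumed here rather than taken from this summary, is the area under the ROC curve (AUC): the probability that a randomly chosen signal event receives a higher anomaly score than a randomly chosen background event. The scores below are invented toy numbers, not results from the paper.

```python
def roc_auc(background_scores, signal_scores):
    """AUC as the fraction of (signal, background) pairs ranked correctly.

    Ties count as half a correct pair. This is a direct O(N*M) pair count,
    fine for a sketch but slow for large samples.
    """
    wins = 0.0
    for s in signal_scores:
        for b in background_scores:
            if s > b:
                wins += 1.0
            elif s == b:
                wins += 0.5
    return wins / (len(signal_scores) * len(background_scores))


# Invented anomaly scores from one hypothetical network configuration.
background_scores = [0.1, 0.2, 0.3, 0.4]
top_scores = [0.5, 0.6, 0.35]
w_scores = [0.15, 0.25, 0.45]

auc_top = roc_auc(background_scores, top_scores)
auc_w = roc_auc(background_scores, w_scores)
print("AUC (top vs QCD):", auc_top)
print("AUC (W vs QCD):  ", auc_w)
```

Repeating this for every hyperparameter setting and every signal gives a table of AUC values, which is where a signal-dependent optimum would show up.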

For a tool that’s supposed to be model-agnostic, this is a problem. If tuning for one signal hurts sensitivity to another, the whole point is undermined.

While probing the VAE’s internals, the researchers noticed something unexpected: distances between events in the latent space were strongly correlated with Wasserstein distances (also called optimal transport distances) between the original jet images. Wasserstein distance has a straightforward physical interpretation. It measures how much “work” it takes to rearrange the energy deposits in one jet image to match another, treating energy as dirt that needs to be shoveled from one pile to another.
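The "shoveling dirt" picture is easiest to see in one dimension, where the Wasserstein-1 distance between two histograms on the same evenly spaced bins reduces to a running sum. The sketch below is a simplified illustration: jet images are two-dimensional, where the calculation requires a general optimal transport solver, and both histograms are normalized to unit total energy here as an assumption.

```python
def wasserstein_1d(p, q, bin_width=1.0):
    """Wasserstein-1 distance between two 1D histograms on identical bins.

    Each histogram is normalized to unit total. Walking left to right,
    `carried` is the net energy that still has to be moved across the
    current bin edge; the work is that amount times the distance moved.
    """
    if len(p) != len(q):
        raise ValueError("histograms must have the same number of bins")
    total_p, total_q = sum(p), sum(q)
    if total_p <= 0 or total_q <= 0:
        raise ValueError("histograms must have positive total energy")
    work = 0.0
    carried = 0.0
    for a, b in zip(p, q):
        carried += a / total_p - b / total_q
        work += abs(carried) * bin_width
    return work


# Moving all the energy two bins to the right costs 1 unit * 2 bins of work.
print(wasserstein_1d([1, 0, 0], [0, 0, 1]))
# Moving it one bin costs half as much.
print(wasserstein_1d([1, 0, 0], [0, 1, 0]))
```

Unlike a pixel-by-pixel comparison, this distance grows with how far energy has to travel, so two jets with slightly shifted deposits remain close to each other.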

This connection hinted that the VAE was learning a compressed version of optimal transport geometry, which raised an obvious question: why use an autoencoder at all? The team developed an event-to-ensemble distance approach. Select a set of representative background events, then score each new event by its optimal transport distance to those representatives. No neural network training required. Just a physically interpretable measure of how far an event sits from typical background.
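A minimal sketch of event-to-ensemble scoring follows, under several assumptions: events are one-dimensional toy histograms rather than jet images, the representative events are hand-picked rather than selected by any procedure from the paper, and the per-event distances are combined by taking the minimum (the function name `ensemble_score` and the `reduce` parameter are hypothetical).

```python
def wasserstein_1d(p, q, bin_width=1.0):
    """Wasserstein-1 distance between two 1D histograms, each normalized to 1."""
    total_p, total_q = sum(p), sum(q)
    work = 0.0
    carried = 0.0  # net energy still to be moved past the current bin edge
    for a, b in zip(p, q):
        carried += a / total_p - b / total_q
        work += abs(carried) * bin_width
    return work


def ensemble_score(event, representatives, reduce=min):
    """Anomaly score: distance from an event to a set of reference events.

    `reduce` chooses how to combine the per-reference distances; `min`
    (distance to the nearest representative) is an assumption of this sketch.
    """
    return reduce(wasserstein_1d(event, ref) for ref in representatives)


# Hand-picked toy background representatives: a single central prong.
representatives = [
    [0, 1, 8, 1, 0],
    [0, 2, 7, 1, 0],
    [1, 1, 6, 2, 0],
]

one_prong = [0, 1, 7, 2, 0]  # background-like
two_prong = [5, 0, 0, 0, 5]  # signal-like

print("one-prong score:", ensemble_score(one_prong, representatives))
print("two-prong score:", ensemble_score(two_prong, representatives))
```

No training is involved: the only choices are which representatives to use and how to combine the distances, and those choices play the role that hyperparameters play for the VAE.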

The results were comparable in performance to the VAEs, and they made the same fundamental tension visible: the set of representative events that best describes the background is not necessarily the set that best separates signal from background.

This lines up with a deep statistical principle. The best density estimator concentrates resources on high-probability regions, the bulk of the background. Anomaly detection needs sensitivity at the edges, where rare signal events hide. These two goals pull in opposite directions.

Why It Matters

The autoencoder paradigm implicitly assumes that learning to represent background well will naturally make signal events stand out. This paper shows that assumption is fragile: the optimization target (reconstruction fidelity on background) isn’t aligned with the actual goal of discovering new signals.

The optimal transport connection offers a computationally lighter and more interpretable alternative to VAEs. You can understand exactly why a particular event gets flagged, in terms of jet geometry. But it runs into the same wall. Both approaches are caught in the same tension between description and discrimination.

The most actionable conclusion is the case for semi-supervised approaches, methods that incorporate even weak or indirect information about what signals might look like. Hybrid strategies that pair the broad sensitivity of unsupervised methods with even minimal signal priors are likely the most productive direction for new physics searches at the LHC.

Bottom Line: Variational autoencoders for particle physics anomaly detection are strongly sensitive to hyperparameter choices, and the settings that work best for one signal are non-optimal for another. The optimal transport geometry they implicitly learn can be used directly, but neither approach studied here escapes the tension between modeling background and detecting signal. These results strengthen the case for exploring semi-supervised and alternative approaches.

IAIFI Research Highlights

This work uncovers a concrete link between VAE latent spaces and optimal transport geometry, connecting machine learning methodology with physically motivated jet metrics.

The study shows that VAE latent geometry under MSE training tracks Wasserstein (optimal transport) distances between jets, and that direct optimal transport scoring performs comparably to the autoencoders, providing a more interpretable, training-free anomaly detection baseline.

By systematically mapping the failure modes of unsupervised anomaly detection for QCD jets, this work clarifies the theoretical basis for model-agnostic new physics searches at the LHC and motivates more targeted semi-supervised strategies.

Future work should explore semi-supervised and hybrid methods that better align training objectives with signal discovery goals; the full analysis is available at [arXiv:2110.06948](https://arxiv.org/abs/2110.06948).

Original Paper Details

Challenges for Unsupervised Anomaly Detection in Particle Physics

arXiv:2110.06948

Katherine Fraser, Samuel Homiller, Rashmish K. Mishra, Bryan Ostdiek, Matthew D. Schwartz
