Applications of Lipschitz neural networks to the Run 3 LHCb trigger system

Authors

Blaise Delaney, Nicole Schulte, Gregory Ciezarek, Niklas Nolte, Mike Williams, Johannes Albrecht

Abstract

The operating conditions defining the current data taking campaign at the Large Hadron Collider, known as Run 3, present unparalleled challenges for the real-time data acquisition workflow of the LHCb experiment at CERN. To address the anticipated surge in luminosity and consequent event rate, the LHCb experiment is transitioning to a fully software-based trigger system. This evolution necessitated innovations in hardware configurations, software paradigms, and algorithmic design. A significant advancement is the integration of monotonic Lipschitz neural networks into the LHCb trigger system. These deep learning models offer certified robustness against detector instabilities, and the ability to encode domain-specific inductive biases. Such properties are crucial for the inclusive heavy-flavour triggers and, most notably, for the topological triggers designed to inclusively select $b$-hadron candidates by exploiting the unique kinematic and decay topologies of beauty decays. This paper describes the recent progress in integrating Lipschitz neural networks into the topological triggers, highlighting the resulting enhanced sensitivity to highly displaced multi-body candidates produced within the LHCb acceptance.

Concepts

The Big Picture

Imagine trying to drink from a fire hose pumping 4 terabytes of data every single second. That is what physicists at CERN’s LHCb experiment face during Run 3. Protons smash together 30 million times per second inside the Large Hadron Collider, and each collision produces a torrent of particles that must be instantly evaluated, filtered, and either saved or discarded forever. Once an event is thrown away, it is gone.

The LHCb detector is built to study b-hadrons (heavy, unstable particles containing a bottom, or beauty, quark) and to hunt for rare processes in their decays. These particles live for only about 1.5 picoseconds before decaying into lighter particles. Differences between how b-hadrons and their antiparticles decay may help explain why the universe contains matter at all, and deviations from predicted decay rates could point to entirely new physics beyond current theories.

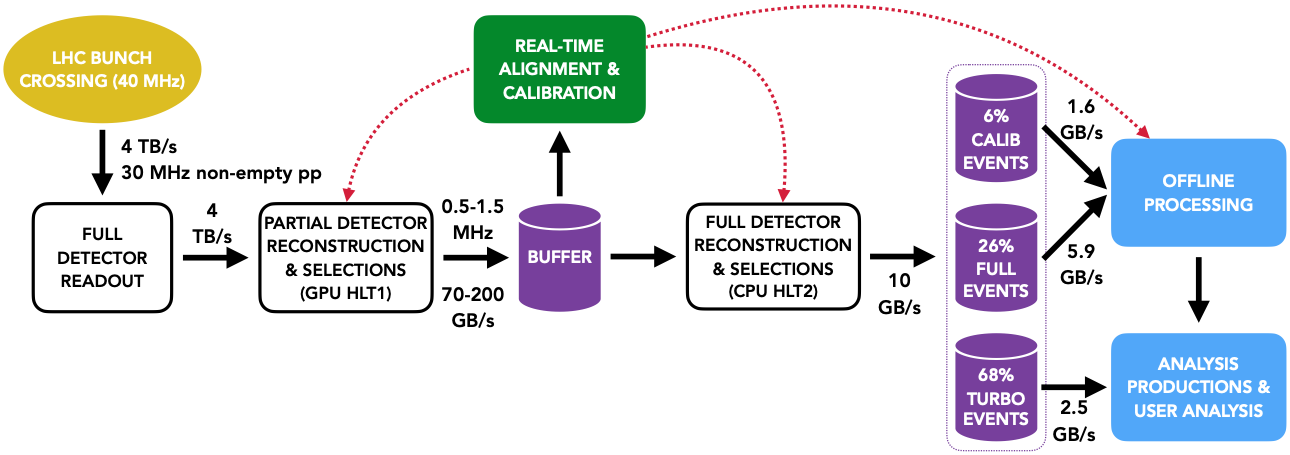

Finding them requires a trigger system: an automated decision engine that keeps pace with the collision rate while slashing the 4 TB/s flood down to about 10 GB/s, a reduction of roughly 400 times.

A team from MIT, CERN, TU Dortmund, and Meta AI has now embedded a class of constrained neural networks directly into LHCb’s trigger system. The result: mathematical guarantees of stability and improved sensitivity to rare b-hadron decay signatures.

Key Insight: By constraining neural networks to be both Lipschitz-bounded and monotonic, the LHCb team built classifiers whose response to detector noise is provably bounded. They can make irreversible decisions inside a trigger that processes 30 million collisions per second without falling apart when instruments hiccup.

How It Works

The LHCb trigger operates in two stages. HLT1 (High Level Trigger 1) runs on GPUs, performing a rapid partial reconstruction of each event from charged-track information alone and cutting the data volume by a factor of 20. What survives flows into a buffer for real-time alignment and calibration before reaching HLT2, a CPU-based system that applies offline-quality reconstruction for more refined decisions.
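The rate figures quoted so far fit together as a simple budget. The sketch below is a back-of-envelope calculation, not an official LHCb bandwidth model: it uses only the round numbers from the text (about 4 TB/s in, a factor of 20 from HLT1, about 10 GB/s out), assumes decimal units (1 TB = 1000 GB), and ignores any change in the size of each saved event between stages.

```python
# Back-of-envelope data-rate budget for the LHCb Run 3 trigger chain.
# Inputs are the round figures quoted in the text; treat them as approximate.
DETECTOR_RATE_GBPS = 4000.0   # ~4 TB/s read out from the detector (1 TB = 1000 GB)
HLT1_REDUCTION = 20.0         # HLT1 cuts the data volume by ~20x
OUTPUT_RATE_GBPS = 10.0       # ~10 GB/s written out after HLT2


def trigger_budget(detector_rate, hlt1_reduction, output_rate):
    """Return (rate after HLT1, overall reduction, reduction left for HLT2)."""
    after_hlt1 = detector_rate / hlt1_reduction
    overall = detector_rate / output_rate
    hlt2_reduction = after_hlt1 / output_rate
    return after_hlt1, overall, hlt2_reduction


after_hlt1, overall, hlt2 = trigger_budget(
    DETECTOR_RATE_GBPS, HLT1_REDUCTION, OUTPUT_RATE_GBPS
)
print(f"into buffer and HLT2: {after_hlt1:.0f} GB/s")
print(f"overall reduction:    {overall:.0f}x")
print(f"left for HLT2:        {hlt2:.0f}x")
```

Under these assumptions HLT1 passes about 200 GB/s to the buffer, and HLT2 must supply the remaining factor of about 20 out of an overall reduction of roughly 400.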

The topological triggers sit inside HLT2. Rather than reconstructing one specific decay, they select candidates by the geometry, or topology, of the decay. Their target is the hallmark of b-hadron decays: a displaced secondary vertex, a point in space millimeters to centimeters away from the primary proton-proton collision vertex. Because b-hadrons are produced with large momenta and travel at nearly the speed of light, time dilation stretches their picosecond lifetimes into measurable flight distances, leaving a gap between the collision point and where their decay products originate. The topological triggers reconstruct these secondary vertices from pairs (two-body) or triplets (three-body) of final-state tracks, covering a broad range of b-hadron decays.
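The size of that gap follows from special relativity: the mean distance travelled in the lab frame is $L = \beta\gamma c\tau$, with $\beta\gamma = p/m$. A minimal sketch for a $B^0$ meson, assuming approximate PDG values for its mass and lifetime and a handful of illustrative momenta chosen here (they are not taken from the article):

```python
# Approximate PDG values for the B0 meson (assumed inputs for illustration).
B0_MASS_GEV = 5.2797   # rest mass in GeV/c^2
B0_CTAU_MM = 0.4554    # proper decay length c*tau in mm (tau ~ 1.52 ps)


def mean_flight_distance_mm(momentum_gev, mass_gev=B0_MASS_GEV, ctau_mm=B0_CTAU_MM):
    """Mean lab-frame flight distance L = beta*gamma * c*tau, with beta*gamma = p/m."""
    beta_gamma = momentum_gev / mass_gev
    return beta_gamma * ctau_mm


# Illustrative momenta in GeV/c (hypothetical, spanning a plausible range).
for p in (20.0, 50.0, 100.0, 200.0):
    print(f"p = {p:5.0f} GeV -> mean flight distance ~ {mean_flight_distance_mm(p):5.1f} mm")
```

Momenta of 20 to 200 GeV give mean flight distances from about 1.7 mm to about 1.7 cm, matching the millimeter-to-centimeter range quoted above. Individual decay distances are exponentially distributed around this mean, so some b-hadrons decay much closer to the collision point.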

The real advance is the neural network architecture powering these triggers. Standard deep learning classifiers can behave unpredictably when inputs shift slightly, say because a detector module is running warm or a calibration drifts. In a system where every discarded event is a permanent loss, that unpredictability is unacceptable.

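Enter Lipschitz neural networks. These impose a strict mathematical cap on how much the network’s output can swing in response to a change in its inputs: for any two inputs $x$ and $y$, the scores satisfy $|f(x) - f(y)| \le \lambda \lVert x - y \rVert$, where the Lipschitz constant $\lambda$ is fixed by the architecture. Think of it as a sensitivity limit baked into the model: no matter what the detector throws at the network, the classifier score cannot move by more than $\lambda$ times the size of the input change. In practice: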

- A small perturbation in input features from detector instabilities can only produce a proportionally small change in the classifier score

- The bound is certified: not just empirically observed, but proven by construction through architectural constraints on weight matrices

- Systematic uncertainties for downstream physics analyses become far easier to evaluate, because the largest possible score shift for a given input shift is known in advance
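One simple way to obtain a certified bound of this kind is to limit the norm of every weight matrix, use activations that are themselves 1-Lipschitz, and scale the output by $\lambda$. The NumPy sketch below is an illustrative construction, not the LHCb implementation: it assumes a bound with respect to the L1 norm of the inputs, uses ReLU activations, and uses random weights in place of trained ones. The layer sizes, the drift size, and $\lambda = 2$ are hypothetical choices.

```python
import numpy as np

rng = np.random.default_rng(0)


def max_abs_entry(w):
    """Operator norm from L1 (inputs) to L-infinity (outputs): the largest |w_ij|."""
    return np.max(np.abs(w))


def max_row_sum(w):
    """Operator norm from L-infinity to L-infinity: the largest row sum of |w_ij|."""
    return np.max(np.sum(np.abs(w), axis=1))


def constrain(w, norm):
    """Rescale w only when its norm exceeds 1, making the layer at most 1-Lipschitz."""
    return w / max(1.0, norm(w))


class LipschitzMLP:
    """lam * MLP(x) with |f(x) - f(y)| <= lam * ||x - y||_1 by construction."""

    def __init__(self, n_in, n_hidden, lam):
        self.lam = lam
        # Random weights stand in for trained ones; the bound holds either way.
        self.w1 = constrain(rng.normal(size=(n_hidden, n_in)), max_abs_entry)
        self.b1 = rng.normal(size=n_hidden)
        self.w2 = constrain(rng.normal(size=(n_hidden, n_hidden)), max_row_sum)
        self.b2 = rng.normal(size=n_hidden)
        self.w3 = constrain(rng.normal(size=(1, n_hidden)), max_row_sum)

    def __call__(self, x):
        # x: (batch, n_in). ReLU is 1-Lipschitz elementwise, so it never amplifies.
        h = np.maximum(0.0, x @ self.w1.T + self.b1)
        h = np.maximum(0.0, h @ self.w2.T + self.b2)
        return self.lam * (h @ self.w3.T)[:, 0]


model = LipschitzMLP(n_in=4, n_hidden=32, lam=2.0)
x = rng.normal(size=(10_000, 4))
delta = 0.05 * rng.normal(size=x.shape)  # simulated small input drift
shift = np.abs(model(x + delta) - model(x))
bound = model.lam * np.sum(np.abs(delta), axis=1)
print("largest shift / certified bound:", np.max(shift / bound))
```

Because each layer is at most 1-Lipschitz between the chosen norms, the bound holds for any weights that satisfy the constraints, trained or not; the random check only confirms it on samples. Published Lipschitz architectures often replace ReLU with GroupSort activations to recover expressive power lost to the constraints.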

The team also enforces monotonicity. Certain physical quantities should, by straightforward physics reasoning, never push a classifier away from accepting an event: a longer decay distance or higher transverse momentum should never make a candidate look less like a real b-hadron. Monotonicity encodes that domain knowledge directly into the model. With all other inputs held fixed, increasing such a feature can never lower the classifier score.
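Monotonicity can be layered on top of a Lipschitz network. If $g$ satisfies $|g(x) - g(y)| \le \lambda \lVert x - y \rVert_1$, no partial derivative of $g$ can exceed $\lambda$ in magnitude, so $f(x) = g(x) + \lambda \sum_{i \in S} x_i$ is non-decreasing in every feature $i$ of the monotone set $S$. This residual construction appears in the monotonic Lipschitz network literature (for example, work by Kitouni, Nolte, and Williams). The NumPy sketch below assumes, rather than confirms, that the deployed triggers resemble it; its one-layer $g$, random weights, and choice of monotone features are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
LAM = 1.0  # Lipschitz constant lambda (hypothetical)
N_IN, N_HIDDEN = 4, 64
# Hypothetical choice: features 0 and 1 (e.g. displacement, pT) are monotone.
MONOTONE = np.array([1.0, 1.0, 0.0, 0.0])

# Lipschitz part g with |g(x) - g(y)| <= LAM * ||x - y||_1 (random, untrained weights).
w1 = rng.normal(size=(N_HIDDEN, N_IN))
w1 /= max(1.0, np.max(np.abs(w1)))   # L1 -> L-inf operator norm <= 1
b1 = rng.normal(size=N_HIDDEN)
w2 = rng.normal(size=N_HIDDEN)
w2 /= max(1.0, np.sum(np.abs(w2)))   # L-inf -> scalar operator norm <= 1


def g(x):
    # tanh is 1-Lipschitz, so the whole map stays LAM-Lipschitz in the L1 norm.
    return LAM * (np.tanh(x @ w1.T + b1) @ w2)


def f(x):
    # g can fall by at most LAM per unit increase of any x_i; the residual
    # LAM * x_i cancels that, so f never decreases along monotone features.
    return g(x) + LAM * (x @ MONOTONE)


def min_change(feature, step=0.1, n=2000):
    """Smallest score change over random points when one feature is increased."""
    x = rng.normal(size=(n, N_IN))
    bump = np.zeros(N_IN)
    bump[feature] = step
    return np.min(f(x + bump) - f(x))


for i in range(N_IN):
    print(f"feature {i}: smallest change when increased = {min_change(i):+.4f}")
```

The cost is that $f$ as a whole is only guaranteed to be $2\lambda$-Lipschitz. Passing $f$ through a sigmoid to obtain a probability-like score keeps both properties, since the sigmoid is increasing and has slope at most 1/4.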

This matters especially for BSM (Beyond the Standard Model) searches. Exotic particles absent from training data may still carry the right kinematic fingerprint to pass the trigger. The monotonicity constraint forces the network to respond to the physics of the decay, not to whether it recognizes the parent particle. For example, a long-lived particle that travels farther than any b-hadron in the training sample cannot be penalized for its unusually large displacement.

The two-body and three-body topological triggers each use a curated set of input features, such as vertex displacement significance, track quality, transverse momentum, and vertex fit quality. Monotonicity constraints are applied only to the features where physics reasoning dictates a direction. The networks are compact enough to run within HLT2’s real-time compute budget, no small feat given the data volume flowing in from HLT1.
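Each trigger therefore needs a record of which inputs carry which constraint. The sketch below shows one hypothetical way to express that as a feature specification and turn it into the sign vector used by a residual monotone term, with a sign of $-1$ handling features that should never raise the score. The feature names, and the directions assigned to track quality and vertex fit quality, are assumptions for illustration and are not the LHCb configuration.

```python
import numpy as np

# Hypothetical two-body feature specification (names and directions assumed).
# +1: score must not decrease as the feature grows
# -1: score must not increase as the feature grows
#  0: unconstrained
TWO_BODY_FEATURES = {
    "vertex_displacement_significance": +1,
    "min_track_pt": +1,
    "vertex_fit_chi2": -1,  # assumed: a worse vertex fit should never help
    "track_quality": 0,
}


def monotone_signs(spec):
    """Sign vector in feature order (Python dicts preserve insertion order)."""
    return np.array([float(direction) for direction in spec.values()])


def monotone_term(x, signs, lam):
    """Residual lam * sum_i s_i * x_i, to be added to a lam-Lipschitz (L1) network."""
    return lam * (x @ signs)


signs = monotone_signs(TWO_BODY_FEATURES)
print(dict(zip(TWO_BODY_FEATURES, signs.tolist())))
x = np.array([[3.0, 2.0, 1.5, 0.9]])  # one made-up candidate
print(monotone_term(x, signs, lam=0.5))
```

Feature order must match the column order of the input array; keeping the specification in one ordered structure avoids silently attaching a constraint to the wrong column.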

Why It Matters

The real payoff here is reliability. The topological triggers must stay dependable over months and years of continuous operation, across shifting detector conditions, without human intervention. Lipschitz monotonic networks provide a formal contract: the score change caused by a sensor drifting out of calibration is bounded in advance, so a small drift cannot suddenly make the classifier start rejecting good events.

That kind of guarantee has been nearly impossible to achieve with the boosted decision trees these networks replace, or with standard unconstrained deep learning.

Any experiment that must make real-time, irreversible event selections under imperfect detector stability could adopt the same approach. Particle physics triggers run around the clock at the boundary of known physics, and this work shows that neural networks with formal behavioral guarantees can meet those demands.

For BSM searches, the monotonicity constraint offers a real advantage: the trigger becomes more sensitive, not less, to the unexpected.

Bottom Line: Lipschitz monotonic neural networks are now making irreversible decisions in the trigger of CERN’s LHCb experiment, which processes 30 million collisions per second, with certified stability against detector noise and enhanced reach for undiscovered particles. This is one of the first deployments of formally guaranteed neural networks in a production particle physics trigger.

IAIFI Research Highlights

This work puts certified deep learning theory into an operational particle physics trigger, showing that formal AI guarantees and experimental physics requirements can be designed together and deployed in production.

Deploying Lipschitz-bounded monotonic neural networks in a real-time, mission-critical system shows that formally constrained ML models can meet stringent performance and compute requirements, making a concrete case for mathematical guarantees in high-stakes AI applications.

By replacing legacy decision-tree triggers with provably robust neural networks, LHCb gains improved sensitivity to displaced multi-body b-hadron decays and to potential BSM states absent from training samples, expanding the physics reach of Run 3.

Future work will extend these architectures to additional inclusive triggers and higher-multiplicity decay topologies as LHCb accumulates its Run 3 dataset; full technical details are available at [arXiv:2312.14265](https://arxiv.org/abs/2312.14265).