A reconfigurable neural network ASIC for detector front-end data compression at the HL-LHC

Authors

Giuseppe Di Guglielmo, Farah Fahim, Christian Herwig, Manuel Blanco Valentin, Javier Duarte, Cristian Gingu, Philip Harris, James Hirschauer, Martin Kwok, Vladimir Loncar, Yingyi Luo, Llovizna Miranda, Jennifer Ngadiuba, Daniel Noonan, Seda Ogrenci-Memik, Maurizio Pierini, Sioni Summers, Nhan Tran

Abstract

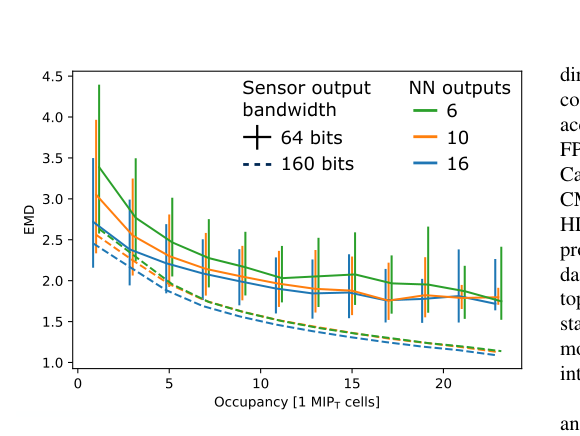

Despite advances in the programmable logic capabilities of modern trigger systems, a significant bottleneck remains in the amount of data to be transported from the detector to off-detector logic where trigger decisions are made. We demonstrate that a neural network autoencoder model can be implemented in a radiation tolerant ASIC to perform lossy data compression alleviating the data transmission problem while preserving critical information of the detector energy profile. For our application, we consider the high-granularity calorimeter from the CMS experiment at the CERN Large Hadron Collider. The advantage of the machine learning approach is in the flexibility and configurability of the algorithm. By changing the neural network weights, a unique data compression algorithm can be deployed for each sensor in different detector regions, and changing detector or collider conditions. To meet area, performance, and power constraints, we perform a quantization-aware training to create an optimized neural network hardware implementation. The design is achieved through the use of high-level synthesis tools and the hls4ml framework, and was processed through synthesis and physical layout flows based on a LP CMOS 65 nm technology node. The flow anticipates 200 Mrad of ionizing radiation to select gates, and reports a total area of 3.6 mm^2 and consumes 95 mW of power. The simulated energy consumption per inference is 2.4 nJ. This is the first radiation tolerant on-detector ASIC implementation of a neural network that has been designed for particle physics applications.

Concepts

The Big Picture

Imagine a camera with six million pixels firing 40 million times per second. Every second, it generates far more raw data than can be shipped off the detector. That’s the situation facing the CMS experiment at CERN’s Large Hadron Collider, where a new energy-measuring detector called the High-Granularity Calorimeter (HGCAL) is being built for an upgrade that will push the LHC to its highest-ever proton collision rates. The physics is within reach, but the data problem threatens to choke it off before a single interesting event gets recorded.

For decades, physicists handled this with simple filters at the detector edge: discarding readings below a minimum signal threshold, dropping quiet channels, keeping only the loudest hits. These brute-force approaches discard data by fixed rules, with no regard for its physics value. What if you could put a small neural network directly on the detector chip, one that compresses data while preserving the physics, and can survive years of punishing radiation? A team from Fermilab, MIT, CERN, and other institutions has shown how.

The result is the first radiation-tolerant, on-detector application-specific integrated circuit (ASIC) implementing a neural network designed for particle physics. At 3.6 mm², smaller than a fingernail, and drawing 95 mW, it is designed to sit at the front end of one of the world’s most complex scientific instruments.

Key Insight: By embedding a reconfigurable neural network autoencoder directly into detector hardware, the team achieves physics-preserving data compression at the source, relieving the data transmission bottleneck where it starts.

How It Works

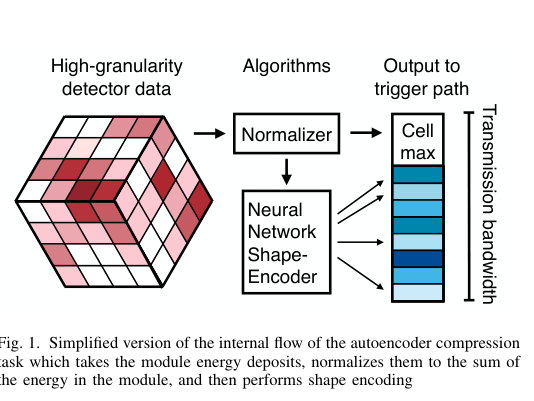

The core algorithm is an autoencoder, a neural network that learns to squeeze data through a narrow bottleneck and reconstruct it on the other side. Think of it like describing a painting over the phone: you can’t transmit every brushstroke, so you learn to capture the essence. Here, the autoencoder encodes the HGCAL’s energy deposits into a compact representation for transmission off-detector, where downstream systems decode it or analyze the compressed form directly.
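
The encode-then-decode idea can be sketched in a few lines of Python. This is a toy linear stand-in, not the network from the paper: the sizes `N_INPUTS` and `N_LATENT` are arbitrary assumptions, and the "weights" are a random orthonormal basis rather than trained parameters.

```python
import numpy as np

# Hypothetical sizes chosen for illustration only; not taken from the paper.
N_INPUTS = 48   # energy readings entering the encoder
N_LATENT = 16   # values actually transmitted off-detector

rng = np.random.default_rng(seed=0)

# Stand-in for trained weights: an orthonormal basis of a 16-dimensional subspace.
basis, _ = np.linalg.qr(rng.normal(size=(N_INPUTS, N_LATENT)))

def encode(x):
    """On-detector half: project the readings onto the narrow bottleneck."""
    return x @ basis

def decode(z):
    """Off-detector half: expand the compact code back to full size."""
    return z @ basis.T

readings = rng.random(N_INPUTS)
code = encode(readings)
reconstruction = decode(code)

# Lossy: the reconstruction keeps only what fits through the bottleneck.
relative_error = np.linalg.norm(readings - reconstruction) / np.linalg.norm(readings)
print(code.shape, reconstruction.shape, round(float(relative_error), 3))
```

A trained autoencoder replaces the random basis with learned, nonlinear layers, so that the information lost at the bottleneck is the part that matters least for the physics.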

The real trick isn’t just compression. The neural network architecture is fixed in silicon, but the weights (the learned parameters that define what the network actually does) are stored in programmable memory. Swap the weights, and you swap the compression algorithm.

Different regions of the HGCAL use different sensor shapes and see different particle cascade patterns, so each chip can be individually tuned after manufacturing. Beam conditions change? Update the weights. Detector aging shifts the calibration? Update the weights. No new chip required.
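
The split between fixed architecture and swappable weights can be illustrated with a small Python sketch. The class `FixedEncoder`, its method names, the layer size, and both weight sets are hypothetical and chosen only to make the behavior easy to follow; they are not the chip's actual interface.

```python
import numpy as np

class FixedEncoder:
    """Toy model of fixed hardware: the layer shape cannot change, the weights can."""

    def __init__(self, n_inputs, n_outputs):
        self.shape = (n_inputs, n_outputs)    # analogous to logic frozen in silicon
        self.weights = np.zeros(self.shape)   # analogous to programmable memory

    def load_weights(self, weights):
        weights = np.asarray(weights, dtype=float)
        if weights.shape != self.shape:
            raise ValueError(f"expected shape {self.shape}, got {weights.shape}")
        self.weights = weights

    def encode(self, x):
        # One dense layer followed by a ReLU activation.
        return np.maximum(x @ self.weights, 0.0)

chip = FixedEncoder(n_inputs=8, n_outputs=3)
x = np.arange(8.0)   # made-up input readings 0, 1, ..., 7

# Hypothetical weight set A: every output is the average of all inputs.
chip.load_weights(np.ones((8, 3)) / 8)
code_a = chip.encode(x)

# Hypothetical weight set B: the outputs copy the first three inputs.
chip.load_weights(np.eye(8)[:, :3])
code_b = chip.encode(x)

print(code_a, code_b)
```

The same object, with the same layer shape, produces two different compressed outputs for the same input. Only the contents of the weight memory changed.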

Fitting a neural network onto a chip this small and power-constrained took aggressive optimization:

- Quantization-aware training: Instead of standard 32-bit floating point, the network was trained assuming low-precision fixed-point arithmetic from the start, shrinking the silicon footprint while limiting the loss in accuracy.

- High-level synthesis (HLS): The team used the hls4ml framework to translate the trained neural network into code for high-level synthesis tools, which in turn generate the hardware description. This automates design work that would otherwise have to be done by hand.

- Radiation-aware gate selection: The design targets a low-power 65 nm CMOS process. Gates were selected in the synthesis and layout flow to tolerate 200 Mrad (200 million rad) of ionizing radiation, the dose anticipated for the detector electronics at the HL-LHC.
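
To make the first item above concrete, here is a minimal Python sketch of the rounding step at the core of quantization-aware training: values are snapped onto a fixed-point grid during the forward pass, so the network learns weights that still work after rounding. The function name `fake_quantize` and the 6-bit setting are assumptions for illustration, not the precision used in the paper, and the training loop itself is omitted.

```python
import numpy as np

def fake_quantize(x, total_bits=6, integer_bits=1):
    """Round x onto a signed fixed-point grid and clip it to the grid's range.

    total_bits and integer_bits (sign bit included) are hypothetical
    settings for illustration, not the precision used in the paper.
    """
    step = 2.0 ** -(total_bits - integer_bits)   # spacing between levels
    lo = -(2.0 ** (integer_bits - 1))            # most negative level
    hi = 2.0 ** (integer_bits - 1) - step        # most positive level
    return np.clip(np.round(np.asarray(x) / step) * step, lo, hi)

weights = np.array([0.3, -0.71, 2.0, -3.0])
quantized = fake_quantize(weights)
print(quantized)   # [ 0.3125  -0.71875  0.96875 -1.     ]
```

With 6 bits the grid spacing is 1/32, so 0.3 moves to 0.3125, and values outside the representable range saturate at its edges. Fewer bits mean smaller multipliers and less memory on the chip, at the cost of coarser rounding.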

The resulting design occupies 3.6 mm² of silicon, draws 95 mW of power, and consumes a simulated 2.4 nanojoules per inference. One complete compression of an HGCAL trigger data packet uses roughly the same energy as lifting a coarse grain of sand, about a quarter of a milligram, by a millimeter. The chip has to do this 40 million times per second.
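
These figures can be cross-checked with a few lines of arithmetic. The sketch below assumes one inference per 25 ns collision interval and that essentially all of the power goes into inference; both are simplifications made here, not statements from the paper.

```python
ENERGY_PER_INFERENCE_J = 2.4e-9   # 2.4 nJ, from the text
INFERENCE_RATE_HZ = 40e6          # 40 million inferences per second, from the text
QUOTED_POWER_W = 95e-3            # 95 mW, from the text

# Power implied by the energy per inference at the full inference rate.
implied_power_w = ENERGY_PER_INFERENCE_J * INFERENCE_RATE_HZ

# Mass that the same energy lifts by 1 mm against gravity (E = m * g * h).
G = 9.81               # m/s^2
LIFT_HEIGHT_M = 1e-3   # 1 mm
equivalent_mass_kg = ENERGY_PER_INFERENCE_J / (G * LIFT_HEIGHT_M)

print(f"implied power: {implied_power_w * 1e3:.0f} mW")                      # 96 mW
print(f"mass lifted 1 mm by 2.4 nJ: {equivalent_mass_kg * 1e6:.2f} mg")      # 0.24 mg
```

The implied 96 mW agrees with the quoted 95 mW to within rounding, so the energy and power figures are mutually consistent under these assumptions.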

Why It Matters

The HL-LHC will collide protons at higher rates than any previous machine, and the HGCAL’s fine-grained imaging will be critical for picking rare collision events out of the flood. That’s how you get precision measurements of the Higgs boson and other key physics targets. But none of it works if the data can’t leave the detector. On-detector compression isn’t a convenience; it’s what makes the physics program viable.

On the AI side, this work shows that machine learning tools have matured enough to go from trained model to radiation-hardened silicon. The hls4ml workflow and quantization-aware training used here lay out a reusable path for deploying neural networks at the extreme edge, in any environment where power, size, and radiation tolerance are hard constraints.

The reconfigurability angle is worth watching too. A single chip design, mass-produced once, can serve dozens of different detector regions simply by loading different weights. That kind of flexibility from fixed hardware has applications well beyond particle physics.

Bottom Line: This team designed the first radiation-tolerant on-detector neural network ASIC for particle physics, showing that machine learning can be engineered to operate in the harsh environment of a detector front end.

IAIFI Research Highlights

This work connects modern deep learning (autoencoder architectures, quantization-aware training) with the hardware constraints of experimental particle physics, producing a chip that satisfies both physics performance requirements and the extreme engineering limits of detector front-end electronics.

The paper lays out an end-to-end automated path from trained neural network to radiation-hardened ASIC hardware via hls4ml, giving other teams a reusable workflow for deploying ML models in power- and area-constrained environments.

The reconfigurable on-detector neural network enables the CMS HGCAL to compress its 40 MHz trigger data stream while preserving the calorimetric energy profiles needed for real-time event selection at the HL-LHC, without overwhelming off-detector bandwidth.

Future directions include deploying this design in actual CMS hardware and extending the approach to other detector subsystems; the full paper is available at [arXiv:2105.01683](https://arxiv.org/abs/2105.01683).

Original Paper Details

A reconfigurable neural network ASIC for detector front-end data compression at the HL-LHC

arXiv:2105.01683

Giuseppe Di Guglielmo, Farah Fahim, Christian Herwig, Manuel Blanco Valentin, Javier Duarte, Cristian Gingu, Philip Harris, James Hirschauer, Martin Kwok, Vladimir Loncar, Yingyi Luo, Llovizna Miranda, Jennifer Ngadiuba, Daniel Noonan, Seda Ogrenci-Memik, Maurizio Pierini, Sioni Summers, Nhan Tran
