A Cosmic-Scale Benchmark for Symmetry-Preserving Data Processing

Authors

Julia Balla, Siddharth Mishra-Sharma, Carolina Cuesta-Lazaro, Tommi Jaakkola, Tess Smidt

Abstract

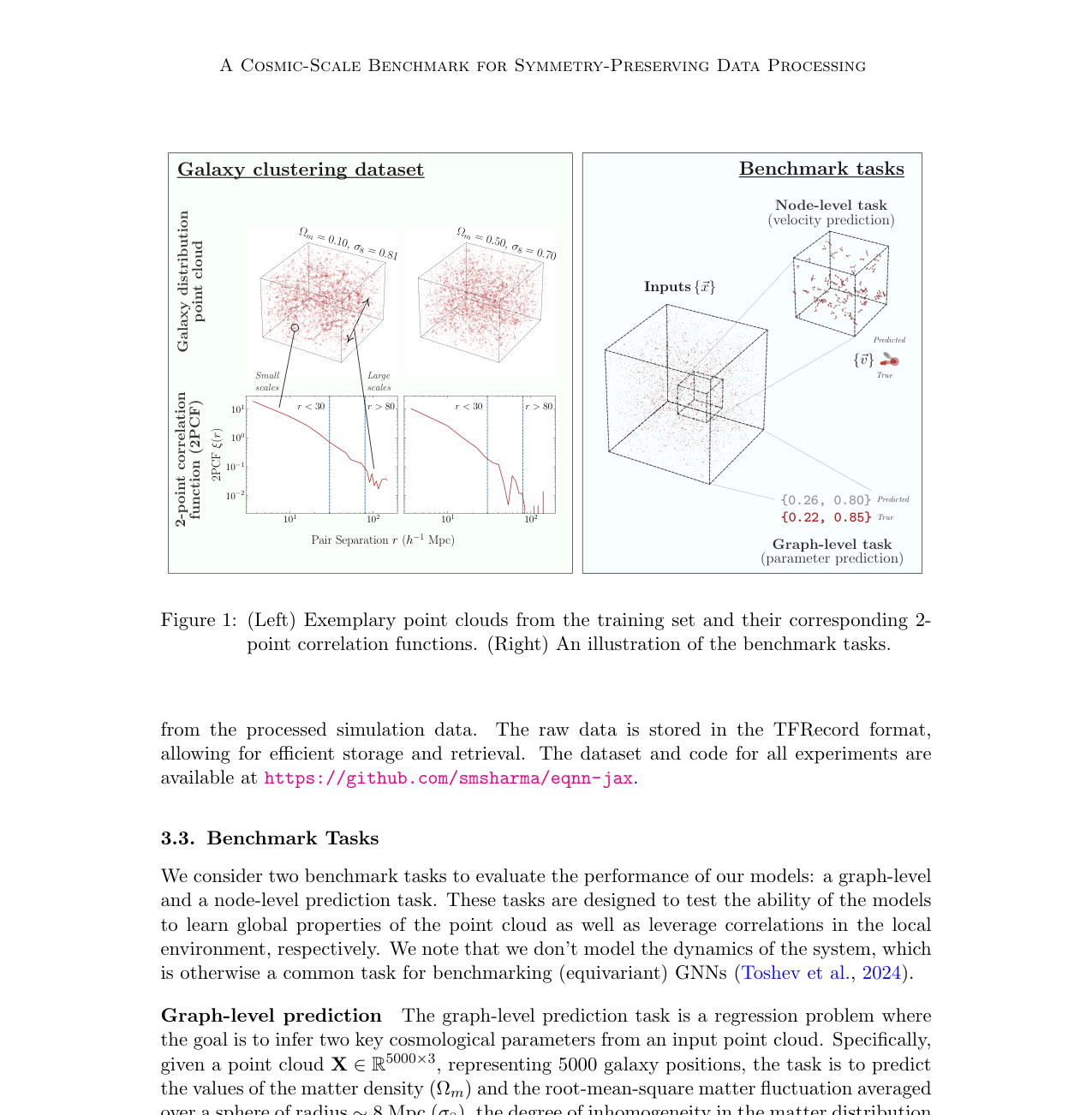

Efficiently processing structured point cloud data while preserving multiscale information is a key challenge across domains, from graphics to atomistic modeling. Using a curated dataset of simulated galaxy positions and properties, represented as point clouds, we benchmark the ability of graph neural networks to simultaneously capture local clustering environments and long-range correlations. Given the homogeneous and isotropic nature of the Universe, the data exhibits a high degree of symmetry. We therefore focus on evaluating the performance of Euclidean symmetry-preserving ($E(3)$-equivariant) graph neural networks, showing that they can outperform non-equivariant counterparts and domain-specific information extraction techniques in downstream performance as well as simulation efficiency. However, we find that current architectures fail to capture information from long-range correlations as effectively as domain-specific baselines, motivating future work on architectures better suited for extracting long-range information.

Concepts

The Big Picture

Imagine trying to understand a city’s traffic patterns by looking only at individual intersections. You’d miss the highway rush hours and citywide commuting flows that shape everything. Scale that problem to the entire observable Universe, where galaxies scatter across hundreds of millions of light-years, and you’ve got the challenge cosmologists face daily.

Mapping those galaxies is one of astronomy’s most powerful tools. The way galaxies cluster into filaments (thread-like chains), walls (vast flat sheets), and voids (enormous empty regions) encodes information about dark matter, dark energy, and the expansion history of the cosmos. Next-generation surveys like the Dark Energy Spectroscopic Instrument (DESI) will deliver petabytes of galaxy position data: enormous three-dimensional maps, called point clouds, containing millions of galaxy positions. Getting the most physics out of those datasets requires algorithms that can simultaneously read galaxies’ tight local neighborhoods and their continent-spanning correlations.

A team of MIT and IAIFI researchers tested whether symmetry-aware graph neural networks (GNNs), which treat objects as nodes in a web of connections, are up to that challenge. These networks are powerful, but they have a blind spot for the very long-range structure that makes cosmological data so rich.

Key Insight: Equivariant graph neural networks that respect the Universe’s symmetries outperform standard neural networks on galaxy clustering tasks, but they still struggle to capture large-scale correlations as well as classical physics-based methods.

How It Works

On large scales, the Universe looks statistically the same everywhere and in every direction, a property physicists call homogeneity and isotropy. The statistics of the galaxy distribution shouldn’t change if you rotate, translate, or reflect your coordinate system. Any algorithm that bakes in this symmetry should therefore be more accurate and more data-efficient than one that has to learn it from scratch.

The mathematical version of that constraint is E(3)-equivariance: the network’s outputs transform in lockstep with any rotation, reflection, or translation applied to the input. E(3) refers to all the ways you can move or mirror an object in three-dimensional space without distorting it. Rotate your galaxy catalog 90 degrees, and any vector outputs rotate with it, while scalar outputs, such as cosmological parameters, stay exactly the same. No capacity is wasted memorizing redundant orientations.
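
To make the definition concrete, a map f acting on point coordinates is E(3)-equivariant when f(Qx + t) = Q f(x) + t for every orthogonal matrix Q (a rotation, a reflection, or both) and every translation vector t. The Python sketch below checks that identity numerically. The layer is a hypothetical toy in the style of EGNN-type coordinate updates, not one of the architectures benchmarked in the paper, and its fixed exponential weight stands in for a learned network. A deliberately non-equivariant layer is included as a contrast.

```python
import math
import random


def add(a, b):
    return tuple(x + y for x, y in zip(a, b))


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def scale(a, s):
    return tuple(s * x for x in a)


def matvec(m, v):
    return tuple(sum(m[i][k] * v[k] for k in range(3)) for i in range(3))


def matmul(p, q):
    return [[sum(p[i][k] * q[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def equivariant_update(points):
    """Hypothetical toy layer: move each point along its difference vectors,
    weighted by a function of the E(3)-invariant squared distance."""
    n = len(points)
    out = []
    for i, xi in enumerate(points):
        shift = (0.0, 0.0, 0.0)
        for j, xj in enumerate(points):
            if i != j:
                d = sub(xi, xj)
                weight = math.exp(-sum(c * c for c in d))  # stand-in for a learned MLP
                shift = add(shift, scale(d, weight))
        out.append(add(xi, scale(shift, 1.0 / (n - 1))))
    return out


def naive_update(points):
    """Non-equivariant contrast: shift every point along the fixed x-axis."""
    return [add(p, (p[0], 0.0, 0.0)) for p in points]


def orthogonal_matrix(a, b):
    """Rotate about z by angle a, then about x by angle b, then reflect z -> -z."""
    rz = [[math.cos(a), -math.sin(a), 0.0], [math.sin(a), math.cos(a), 0.0], [0.0, 0.0, 1.0]]
    rx = [[1.0, 0.0, 0.0], [0.0, math.cos(b), -math.sin(b)], [0.0, math.sin(b), math.cos(b)]]
    flip = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, -1.0]]
    return matmul(flip, matmul(rx, rz))


def equivariance_error(layer, points, q, t):
    """Largest coordinate-wise gap between layer(Qx + t) and Q layer(x) + t."""
    lhs = layer([add(matvec(q, p), t) for p in points])
    rhs = [add(matvec(q, p), t) for p in layer(points)]
    return max(abs(u - v) for a, b in zip(lhs, rhs) for u, v in zip(a, b))


rng = random.Random(0)
points = [tuple(rng.uniform(-1, 1) for _ in range(3)) for _ in range(8)]
q = orthogonal_matrix(0.7, 2.1)
t = (0.3, -1.2, 2.0)
eq_err = equivariance_error(equivariant_update, points, q, t)
naive_err = equivariance_error(naive_update, points, q, t)
print(f"equivariant layer error: {eq_err:.1e}")
print(f"naive layer error:       {naive_err:.1e}")
```

The equivariant layer’s error sits at floating-point round-off, because it only uses difference vectors (which rotate with Q and ignore t) and distances (which don’t change at all). The naive layer is tied to a fixed axis, so it breaks the identity.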

The benchmark dataset comes from the Quijote suite, a collection of N-body simulations that follow millions of dark matter particles as they evolve under gravity over cosmic time. From these simulations, the team curated point clouds of roughly 10,000 galaxy positions each, significantly larger than the point clouds of O(100–1,000) points used in prior GNN cosmology studies. Each galaxy carries its 3D position plus physical properties such as velocity.
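
A GNN needs an explicit graph over each point cloud. The paper’s exact graph construction isn’t described in this summary, so the Python sketch below assumes a common choice: a radius graph that links galaxies closer than a cutoff. Because N-body simulations use periodic boundary conditions, separations are measured with the minimum-image convention. The box size, radius, and function name are illustrative assumptions.

```python
import itertools


def periodic_radius_graph(points, box_size, radius):
    """Undirected edges (i, j), i < j, between points closer than `radius`.

    Separations use the minimum-image convention: each coordinate difference
    is wrapped into [-box_size/2, box_size/2], so a galaxy near one face of
    the periodic box can link to a galaxy near the opposite face.
    Brute force, O(N^2): a real pipeline over ~10,000 points would use a
    cell list or k-d tree instead.
    """
    r2 = radius * radius
    edges = []
    for (i, a), (j, b) in itertools.combinations(enumerate(points), 2):
        d2 = 0.0
        for u, v in zip(a, b):
            d = u - v
            d -= box_size * round(d / box_size)  # minimum-image wrap
            d2 += d * d
        if d2 < r2:
            edges.append((i, j))
    return edges


# Hypothetical box of side 1000 (arbitrary units) holding three galaxies.
box = 1000.0
pts = [(1.0, 500.0, 500.0), (999.0, 500.0, 500.0), (500.0, 500.0, 500.0)]
print(periodic_radius_graph(pts, box, radius=10.0))  # [(0, 1)]
```

Galaxies 0 and 1 sit 998 units apart in raw coordinates but only 2 units apart across the periodic boundary, so they are linked. A graph built from plain Euclidean distances would miss that edge.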

The downstream task is cosmological parameter inference: given a snapshot of galaxy positions, can the network recover the underlying physical parameters that generated that Universe, such as the matter density or the amplitude of primordial fluctuations? The team implemented and compared several architectures using their new `eqnn-jax` library:

- Non-equivariant GNNs: Standard message-passing networks (which propagate summaries between neighboring nodes) with no built-in symmetry

- E(3)-equivariant GNNs: Networks whose operations transform correctly under rotations, reflections, and translations, including SEGNN and related architectures

- Domain-specific baselines: Classical statistics like the power spectrum (the strength of galaxy clustering as a function of spatial scale) and the two-point correlation function (the excess probability, relative to a random distribution, of finding two galaxies at a given separation), refined by physicists over decades
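
The two-point correlation function can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper’s baseline pipeline. It uses the simple "natural" estimator ξ(r) = DD(r)/RR(r) − 1 in a periodic cube, with the random-pair count RR computed analytically rather than from a random catalog (production analyses typically use estimators such as Landy-Szalay and optimized pair-counting codes). The toy catalogs are synthetic. The power spectrum is the Fourier transform of ξ(r), so it carries the same two-point information organized by wavenumber instead of separation.

```python
import itertools
import math
import random


def min_image_distance(a, b, box_size):
    """Separation of two points in a periodic cube (minimum-image convention)."""
    total = 0.0
    for u, v in zip(a, b):
        d = u - v
        d -= box_size * round(d / box_size)
        total += d * d
    return math.sqrt(total)


def xi_natural(points, box_size, bin_edges):
    """Natural estimator xi(r) = DD(r) / RR(r) - 1 for a periodic box.

    DD counts unique data pairs per separation bin. RR is analytic: for N
    uniformly random points, the expected number of unique pairs in the shell
    [r0, r1) is N(N-1)/2 * shell_volume / box_volume (valid for r1 < box_size/2).
    """
    n_bins = len(bin_edges) - 1
    dd = [0] * n_bins
    for a, b in itertools.combinations(points, 2):
        r = min_image_distance(a, b, box_size)
        for k in range(n_bins):
            if bin_edges[k] <= r < bin_edges[k + 1]:
                dd[k] += 1
                break
    n_pairs = len(points) * (len(points) - 1) / 2
    xi = []
    for k in range(n_bins):
        shell = 4.0 / 3.0 * math.pi * (bin_edges[k + 1] ** 3 - bin_edges[k] ** 3)
        rr = n_pairs * shell / box_size ** 3
        xi.append(dd[k] / rr - 1.0)
    return xi


rng = random.Random(42)
box, edges = 100.0, [0.0, 5.0, 10.0, 20.0, 40.0]

# Unclustered toy catalog: 400 uniform random points.
uniform = [tuple(rng.uniform(0, box) for _ in range(3)) for _ in range(400)]

# Clustered toy catalog: 20 clumps of 20 points with a Gaussian spread of 1.5 units.
centers = [tuple(rng.uniform(0, box) for _ in range(3)) for _ in range(20)]
clustered = [
    tuple((c + rng.gauss(0, 1.5)) % box for c in center)
    for center in centers
    for _ in range(20)
]

xi_uniform = xi_natural(uniform, box, edges)
xi_clustered = xi_natural(clustered, box, edges)
print("uniform:  ", [round(x, 2) for x in xi_uniform])
print("clustered:", [round(x, 2) for x in xi_clustered])
```

The uniform catalog’s ξ scatters around zero, most tightly in the larger bins where pair counts are high. The clustered catalog shows a large positive ξ at small separations, the signature of galaxies sitting in tight groups.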

Why It Matters

The results tell a clear story with an important asterisk. Equivariant networks beat their non-equivariant counterparts, and do so more efficiently, requiring fewer training simulations to reach the same performance. That efficiency is a big deal in cosmology, where a single N-body simulation can eat thousands of CPU-hours. A symmetry-aware network that needs far fewer training examples for the same accuracy isn’t just convenient; it can make the difference between a method that’s practical and one that isn’t.

But domain-specific baselines, particularly those that explicitly compute long-range statistics like the power spectrum, still hold an edge on correlations that stretch across the full simulation volume. Modern GNNs, even equivariant ones, aggregate information through local message-passing steps. Each layer propagates information one hop further through the galaxy graph, so very distant galaxies can’t effectively “talk” to each other without stacking many expensive layers. Cosmological data, shaped by physics that operated across the entire observable Universe in its first moments, demands a global awareness that current architectures can’t quite deliver.
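
A toy Python illustration of this bottleneck follows. It assumes a radius graph and a hypothetical straight chain of galaxies rather than the paper’s data or graphs. The set of nodes that can influence a given node after k message-passing layers is exactly its k-hop neighborhood, which a depth-limited breadth-first search recovers.

```python
from collections import deque


def radius_graph(points, radius):
    """Adjacency lists of a (non-periodic) radius graph, built by brute force."""
    adj = {i: [] for i in range(len(points))}
    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            d2 = sum((u - v) ** 2 for u, v in zip(points[i], points[j]))
            if d2 < radius ** 2:
                adj[i].append(j)
                adj[j].append(i)
    return adj


def receptive_field(adj, source, n_layers):
    """Nodes whose information can reach `source` within n_layers message-passing steps.

    One layer passes messages along one edge, so this is the set of nodes at
    most n_layers hops away, found by a depth-limited breadth-first search.
    """
    seen = {source}
    queue = deque([(source, 0)])
    while queue:
        node, depth = queue.popleft()
        if depth == n_layers:
            continue
        for nbr in adj[node]:
            if nbr not in seen:
                seen.add(nbr)
                queue.append((nbr, depth + 1))
    return seen


# Hypothetical toy "filament": 100 galaxies spaced 1 unit apart along a line.
chain = [(float(x), 0.0, 0.0) for x in range(100)]
adj = radius_graph(chain, radius=1.5)
for layers in (1, 4, 16):
    reach = max(receptive_field(adj, 0, layers))
    print(f"{layers:2d} layers: galaxy 0 hears from galaxies up to {reach} units away")
```

Reach grows only linearly with depth: 1, 4, and 16 units here. Because every edge in a radius graph is shorter than the cutoff r, k layers can carry information at most k·r away, so linking galaxies separated by a large fraction of the simulation box would take a depth on the order of that separation divided by r.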

That gap is also the paper’s most actionable finding: it points clearly to what to build next. Architectures with global attention mechanisms, hierarchical pooling, or physics-inspired positional encodings could push performance well past current limits. Similar multiscale problems show up in climate modeling, molecular dynamics, and particle physics, so progress here would reach well beyond galaxy surveys.

Bottom Line: Symmetry-preserving graph neural networks outperform standard networks in both accuracy and data efficiency on cosmic large-scale structure, but closing the remaining gap with classical long-range statistics will require new architectures built to see across cosmic distances.

IAIFI Research Highlights

This work connects geometric deep learning with observational cosmology, using the Universe's fundamental symmetries as a design principle for neural networks while pushing forward our ability to extract physics from next-generation galaxy surveys.

The paper introduces a large-scale, physically structured point cloud benchmark and the `eqnn-jax` library, giving the machine learning community a rigorous testbed for equivariant architectures on O(10⁴)-point datasets, well beyond typical graph learning benchmarks.

E(3)-equivariant networks match or exceed classical cosmological statistics on simulation efficiency, opening the door to ML-driven analysis of petabyte-scale galaxy surveys like DESI.

Future work on long-range information extraction through transformers, hierarchical GNNs, or physics-inspired global statistics could close the remaining performance gap. Full paper: [arXiv:2410.20516](https://arxiv.org/abs/2410.20516)